Relaxed Operational Semantics of Concurrent Programming Languages

We propose a novel operational framework to formally describe the semantics of concurrent programs running within the context of a relaxed memory model. Our framework features a “temporary store” where the memory operations issued by the threads are recorded, in program order. A memory model then specifies the conditions under which a pending operation from this sequence is allowed to be globally performed, possibly out of order. The memory model also involves a “write grain,” accounting for architectures where a thread may read a write that is not yet globally visible. Our formal model is supported by a software simulator, allowing us to run litmus tests in our semantics.

💡 Research Summary

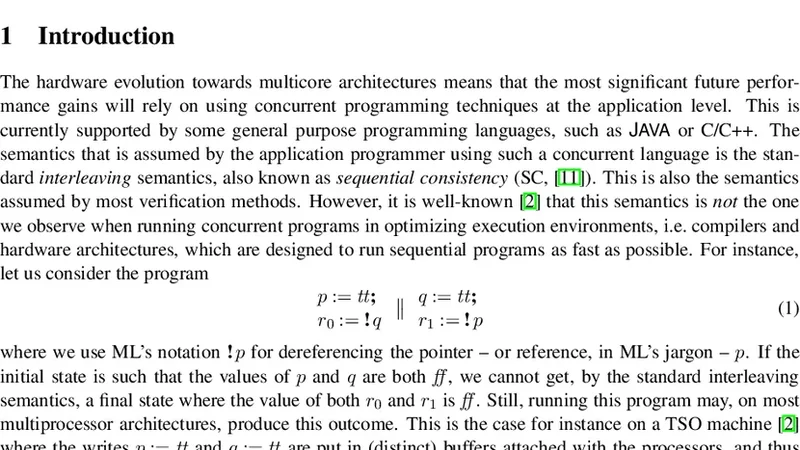

The paper introduces an operational framework for describing the semantics of concurrent programs under relaxed memory models. Instead of relying on abstract relations such as happens‑before or memory order, the authors model the concrete execution steps of a program by recording every memory operation (loads, stores, fences, etc.) issued by the threads in a “temporary store,” in program order. This temporary store acts as a buffer that mirrors the way modern weak‑memory hardware postpones, reorders, or locally buffers operations before they become globally visible.

A memory model in this framework is defined by two complementary components. First, a set of “possibility conditions” specifies when a pending operation in the temporary store may be globally performed (committed), possibly out of order. These conditions capture data‑dependency, address‑dependency, and synchronization constraints, ensuring that operations that must appear ordered cannot be arbitrarily reordered. Second, the authors introduce the notion of a “write grain,” which formalizes the ability of a thread to read a write that is not yet globally visible. This captures behavior found on architectures such as ARM and POWER, where a write can be read by some threads (for instance, the thread that issued it) before it has propagated to all of them.
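To make the two components concrete, here is a minimal Python sketch of our own, not the paper's definitions. It assumes a hypothetical encoding of pending operations (`Op`, with kinds `"W"`, `"R"` and `"F"` for fences) kept in a single temporary store. The predicate `may_perform` is an illustrative possibility condition: an operation may overtake earlier pending operations of its own thread unless a fence or a same‑address access is in the way. `read_value` is a simplified stand‑in for the write grain that only covers a thread reading its own pending write.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Op:
    thread: int
    kind: str                   # "W" write, "R" read, "F" fence (hypothetical encoding)
    addr: Optional[str] = None  # None for fences
    value: int = 0              # value written (writes only)


def may_perform(pending: List[Op], i: int) -> bool:
    """Hypothetical possibility condition for a weak model."""
    op = pending[i]
    for earlier in pending[:i]:
        if earlier.thread != op.thread:
            continue  # other threads' pending operations never block this one
        if earlier.kind == "F" or op.kind == "F":
            return False  # a fence orders everything of its thread around it
        if earlier.addr == op.addr and not (earlier.kind == "W" and op.kind == "R"):
            return False  # same-address accesses stay in program order...
    return True  # ...except a read that can be served by an earlier pending write


def read_value(pending: List[Op], i: int, memory: Dict[str, int]) -> int:
    """Simplified write grain: a read may take its value from its own thread's
    latest pending write to the same address before that write is performed."""
    op = pending[i]
    for earlier in reversed(pending[:i]):
        if earlier.thread == op.thread and earlier.kind == "W" and earlier.addr == op.addr:
            return earlier.value
    return memory.get(op.addr, 0)


pending = [Op(0, "W", "x", 1), Op(0, "R", "y"), Op(0, "F"), Op(0, "R", "x")]
print(may_perform(pending, 1))           # True: the read of y overtakes the write to x
print(may_perform(pending, 3))           # False: blocked by the fence
print(read_value(pending, 3, {"x": 0}))  # 1: forwarded from the pending write
```

Real models would add dependency tracking and richer fence kinds; the point here is only that the memory model is an ordinary predicate over the pending sequence.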

The operational semantics proceeds in three phases for each operation: issue (the operation is placed in the temporary store), wait (the operation remains pending until the possibility conditions allow it to be performed), and commit (the operation is globally performed, updating the global memory state). The transition rules are defined formally and update both the global state and the temporary store. Because the model enumerates every permitted reordering as a concrete sequence of transitions, it shows step by step how a subtle outcome arises, whereas axiomatic specifications characterize such outcomes only indirectly, through constraints on relations between events.
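The issue/commit cycle can be sketched as two pure functions over a state made of global memory, the temporary store, and thread registers. This is a minimal sketch under our own assumptions: the `State` layout, the `Op` encoding and the register names are hypothetical, and `commit` deliberately checks no possibility condition, since choosing which index may be committed is the memory model's job. The “wait” phase is implicit: an issued operation simply stays in `store` until it is committed.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class Op:
    thread: int
    kind: str        # "W" (write) or "R" (read)
    addr: str
    value: int = 0   # value to write (writes only)
    reg: str = ""    # destination register (reads only)


@dataclass(frozen=True)
class State:
    memory: Tuple[Tuple[str, int], ...] = ()  # global memory as sorted (addr, value) pairs
    store: Tuple[Op, ...] = ()                # temporary store: issued, not yet performed
    regs: Tuple[Tuple[str, int], ...] = ()    # register file as sorted (reg, value) pairs


def issue(s: State, op: Op) -> State:
    # Issue: append the operation to the temporary store, where it waits.
    return replace(s, store=s.store + (op,))


def commit(s: State, i: int) -> State:
    # Commit: remove the i-th pending operation and perform it globally.
    op = s.store[i]
    rest = s.store[:i] + s.store[i + 1:]
    mem = dict(s.memory)
    if op.kind == "W":
        mem[op.addr] = op.value
        return replace(s, memory=tuple(sorted(mem.items())), store=rest)
    regs = dict(s.regs)
    regs[op.reg] = mem.get(op.addr, 0)  # reads take their value from global memory
    return replace(s, store=rest, regs=tuple(sorted(regs.items())))


s = State()
s = issue(s, Op(0, "W", "x", 1))         # issue: x := 1
s = issue(s, Op(0, "R", "y", reg="r0"))  # issue: r0 := y
s = commit(s, 1)                         # the read is performed first, out of order
s = commit(s, 0)                         # then the write reaches global memory
print(s.regs, s.memory, s.store)         # (('r0', 0),) (('x', 1),) ()
```

Keeping the state immutable makes it easy to branch on every enabled commit when exploring executions.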

To validate the theory, the authors built a software simulator that implements the framework. Users supply litmus tests written in C, assembly, or a simple domain‑specific language. The simulator constructs the temporary stores, explores all admissible transition sequences according to the chosen memory model, and reports every reachable final memory configuration together with the concrete sequence of commits that produced it. The output can be inspected textually or visualized as a state‑transition graph, making it straightforward to verify whether a given model permits or forbids a particular outcome.
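A toy version of this exhaustive exploration can be written as a depth‑first search over the orders in which pending operations may be performed. This sketch is ours, not the authors' simulator: it assumes all operations are issued up front (so the temporary store starts out holding each thread's program in order), uses two illustrative conditions (`sc` and a TSO‑like `tso_like`), and omits the write grain. It is run on the classic store‑buffering (SB) litmus test and reports each outcome as sorted register/value pairs.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Sequence, Set, Tuple


@dataclass(frozen=True)
class Op:
    thread: int
    kind: str        # "W" (write) or "R" (read)
    addr: str
    value: int = 0   # value written (writes only)
    reg: str = ""    # destination register (reads only)


Condition = Callable[[Sequence[Op], int], bool]
Outcome = Tuple[Tuple[str, int], ...]


def sc(pending: Sequence[Op], i: int) -> bool:
    # Only the oldest pending operation of a thread may be performed.
    return all(e.thread != pending[i].thread for e in pending[:i])


def tso_like(pending: Sequence[Op], i: int) -> bool:
    # Additionally, a read may overtake its thread's earlier writes to other addresses.
    op = pending[i]
    earlier = [e for e in pending[:i] if e.thread == op.thread]
    return not earlier or (
        op.kind == "R" and all(e.kind == "W" and e.addr != op.addr for e in earlier)
    )


def explore(program: Sequence[Op], may_perform: Condition) -> Set[Outcome]:
    """Enumerate every admissible commit order and collect final register values."""
    outcomes: Set[Outcome] = set()

    def step(pending: Tuple[Op, ...], memory: Dict[str, int], regs: Dict[str, int]) -> None:
        if not pending:
            outcomes.add(tuple(sorted(regs.items())))
            return
        for i, op in enumerate(pending):
            if not may_perform(pending, i):
                continue
            rest = pending[:i] + pending[i + 1:]
            if op.kind == "W":
                step(rest, {**memory, op.addr: op.value}, regs)
            else:
                step(rest, memory, {**regs, op.reg: memory.get(op.addr, 0)})

    step(tuple(program), {}, {})
    return outcomes


# Store buffering: each thread writes one variable, then reads the other.
SB = [Op(0, "W", "x", 1), Op(0, "R", "y", reg="r0"),
      Op(1, "W", "y", 1), Op(1, "R", "x", reg="r1")]

both_zero = (("r0", 0), ("r1", 0))
print(both_zero in explore(SB, sc))        # False
print(both_zero in explore(SB, tso_like))  # True
```

Under `sc` the search finds three outcomes; `tso_like` additionally reaches `r0 == r1 == 0`, because each read may be performed while its thread's write is still pending in the temporary store.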

The paper also compares the proposed semantics with existing models such as C11, the Java Memory Model, ARMv8, and POWER. The comparison shows that the operational approach can express the same guarantees while providing finer granularity: the write grain, for instance, turns a read of a not‑yet‑globally‑visible write (such as intra‑thread store forwarding) into an explicit step of the semantics instead of leaving it implicit in ordering constraints. Moreover, extending an existing memory model or designing a new one reduces to adjusting the possibility‑condition predicates and the write‑grain parameters, which makes the framework highly modular.
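The modularity argument can be illustrated by generating possibility conditions from a single parameter. In this hypothetical sketch, a model is just the set of (earlier, later) access kinds that a thread may reorder when the two accesses target different addresses. The names `SC`, `TSO` and `PSO` are loose approximations of the familiar models, and there is no write grain: a read never overtakes a pending write to its own address.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Sequence, Tuple


@dataclass(frozen=True)
class Op:
    thread: int
    kind: str   # "R" or "W"
    addr: str


Condition = Callable[[Sequence[Op], int], bool]


def make_model(relaxed: FrozenSet[Tuple[str, str]]) -> Condition:
    """Build a possibility condition from the (earlier, later) kind pairs that a
    thread may reorder when the two accesses target different addresses."""
    def may_perform(pending: Sequence[Op], i: int) -> bool:
        op = pending[i]
        return all(
            e.thread != op.thread or (e.addr != op.addr and (e.kind, op.kind) in relaxed)
            for e in pending[:i]
        )
    return may_perform


SC = make_model(frozenset())                              # no reordering at all
TSO = make_model(frozenset({("W", "R")}))                 # reads may overtake writes
PSO = make_model(frozenset({("W", "R"), ("W", "W")}))     # ...and writes may overtake writes

pending = [Op(0, "W", "x"), Op(0, "W", "y"), Op(0, "R", "z")]
print(SC(pending, 2), TSO(pending, 2))    # False True
print(TSO(pending, 1), PSO(pending, 1))   # False True
```

Adding a model here amounts to one more line; a realistic model would also need dependency and fence rules, which this sketch leaves out.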

In summary, the authors present a novel “temporary store + possibility condition + write grain” operational semantics that bridges the gap between abstract memory‑model specifications and concrete hardware behavior. By offering a runnable simulator, the work supplies both a research tool for formal verification of weak‑memory programs and a practical platform for architects to prototype and test new consistency policies. The approach promises to improve our understanding of relaxed memory behaviors and to facilitate more reliable concurrent software development on modern multicore systems.
