Document Clustering Evaluation: Divergence from a Random Baseline

Divergence from a random baseline is a technique for the evaluation of document clustering. It ensures that cluster quality measures reward only clusterings that perform useful work, which prevents ineffective clusterings that provide no useful result from receiving high scores. The concepts of work and ineffective clusterings are defined and analysed using intrinsic and extrinsic approaches to the evaluation of document cluster quality. This includes the classical clusters-to-categories approach and a novel approach that uses ad hoc information retrieval. The divergence from a random baseline approach is able to differentiate ineffective clusterings encountered in the INEX XML Mining track. It also appears to perform a normalisation similar to the Normalised Mutual Information (NMI) measure, but it can be applied to any measure of cluster quality. When it is applied to the intrinsic measure of distortion as measured by RMSE, subtraction from a random baseline provides a clear optimum that is not apparent otherwise. Although the approach can be applied to any clustering evaluation, this paper describes its use in the context of document clustering evaluation.

💡 Research Summary

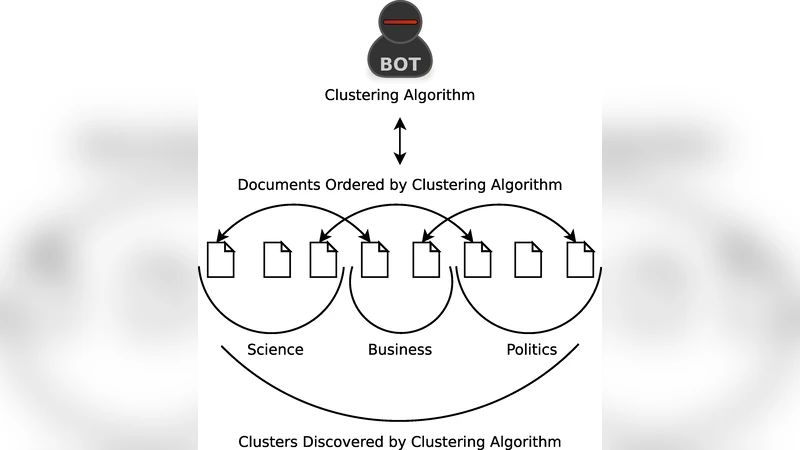

The paper introduces a novel evaluation technique for document clustering called “Divergence from a Random Baseline” (DRB). The core idea is to generate a completely random clustering that matches the target clustering in the number of clusters and the size distribution of each cluster, then subtract the quality score of this random baseline from the quality score of the actual clustering. This difference directly measures how much information the real clustering retains beyond what would be expected by chance, thereby preventing ineffective or trivial clusterings from receiving artificially high scores.
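The subtraction described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the toy `pair_precision` measure, and the choice to average the baseline over several shuffles (rather than use a single random clustering) are all assumptions made for the example. Shuffling the cluster labels across documents is one simple way to keep the number of clusters and their sizes fixed while randomising membership.

```python
import random
from itertools import combinations
from typing import Callable, Hashable, List, Sequence


def random_baseline(labels: Sequence[Hashable], rng: random.Random) -> List[Hashable]:
    """Shuffle labels across documents: cluster count and sizes stay fixed."""
    shuffled = list(labels)
    rng.shuffle(shuffled)
    return shuffled


def divergence(labels: Sequence[Hashable],
               quality: Callable[[Sequence[Hashable]], float],
               trials: int = 20,
               seed: int = 0) -> float:
    """Quality of the real clustering minus the mean quality of random baselines.

    Averaging over `trials` shuffles is an assumption made here to reduce noise.
    """
    rng = random.Random(seed)
    baseline = sum(quality(random_baseline(labels, rng)) for _ in range(trials)) / trials
    return quality(labels) - baseline


def pair_precision(labels, categories):
    """Toy quality measure (hypothetical): share of same-cluster pairs sharing a category."""
    pairs = [(i, j) for i, j in combinations(range(len(labels)), 2)
             if labels[i] == labels[j]]
    if not pairs:
        return 0.0
    return sum(categories[i] == categories[j] for i, j in pairs) / len(pairs)


# Eight documents in two categories, clustered well and clustered badly.
categories = ["x"] * 4 + ["y"] * 4
good = [0, 0, 0, 0, 1, 1, 1, 1]
poor = [0, 1, 0, 1, 0, 1, 0, 1]


def score(labels):
    return pair_precision(labels, categories)


print("good:", divergence(good, score))
print("poor:", divergence(poor, score))
```

Under this sign convention a positive value means the clustering scores better than chance for the chosen measure, and a value at or below zero means it does no better than a random assignment with the same cluster sizes.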

The authors apply DRB to both intrinsic and extrinsic evaluation methods. For intrinsic evaluation they use distortion measured by Root Mean Square Error (RMSE). Raw RMSE decreases monotonically as the number of clusters grows, which makes it difficult to identify an optimal cluster count. Taking the difference between the RMSE of the clustering and the RMSE of a random baseline reveals a clear optimum, providing an objective criterion for selecting the number of clusters.
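For the intrinsic case, a small sketch may help make the RMSE comparison concrete. It assumes documents are represented as dense vectors (plain tuples here) and that distortion is the root mean square Euclidean distance to the cluster centroid; the function names and the sign convention (baseline minus actual, so that larger is better) are assumptions for illustration. Reversing the subtraction would turn the optimum into a minimum.

```python
import math
import random
from collections import defaultdict


def rmse_distortion(points, labels):
    """Root mean square Euclidean distance from each point to its cluster centroid."""
    members = defaultdict(list)
    for point, label in zip(points, labels):
        members[label].append(point)
    squared_error = 0.0
    for cluster_points in members.values():
        dims = len(cluster_points[0])
        centroid = [sum(p[d] for p in cluster_points) / len(cluster_points)
                    for d in range(dims)]
        squared_error += sum(math.dist(p, centroid) ** 2 for p in cluster_points)
    return math.sqrt(squared_error / len(points))


def rmse_divergence(points, labels, trials=20, seed=0):
    """Mean RMSE of shuffled-label baselines minus RMSE of the real clustering.

    Assumed convention: lower RMSE is better, so a larger result is better.
    """
    rng = random.Random(seed)
    baseline = 0.0
    for _ in range(trials):
        shuffled = list(labels)
        rng.shuffle(shuffled)  # same cluster sizes, random membership
        baseline += rmse_distortion(points, shuffled)
    return baseline / trials - rmse_distortion(points, labels)


# Two well-separated groups of three points each.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
labels = [0, 0, 0, 1, 1, 1]
print("RMSE:", rmse_distortion(points, labels))
print("divergence:", rmse_divergence(points, labels))
```

One way to use this, under the same assumptions, is to compute `rmse_divergence` for each candidate number of clusters and keep the count at which it peaks, since the raw RMSE alone keeps falling as clusters are added.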

For extrinsic evaluation two approaches are examined. The first follows the classic “clusters‑to‑categories” paradigm, comparing clusters to pre‑defined topic categories using metrics such as Purity, Entropy, and Normalised Mutual Information (NMI). The second adopts an ad‑hoc information‑retrieval perspective: each cluster is treated as a result set for a set of queries, and retrieval effectiveness (e.g., MAP) is measured. In both cases, DRB reveals whether a clustering genuinely scores better than chance or merely reflects random structure.
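The clusters-to-categories case can be illustrated with Purity, which is easy to inflate: placing every document in its own cluster yields a perfect score while grouping nothing. The sketch below is an assumption-laden toy, not the paper's code. The function names, the shuffled-label baseline, and the averaging over several trials are all chosen for the example.

```python
import random
from collections import Counter, defaultdict


def purity(labels, categories):
    """Fraction of documents that belong to the majority category of their cluster."""
    by_cluster = defaultdict(Counter)
    for label, category in zip(labels, categories):
        by_cluster[label][category] += 1
    return sum(max(counts.values()) for counts in by_cluster.values()) / len(labels)


def purity_divergence(labels, categories, trials=20, seed=0):
    """Purity of the clustering minus mean purity of shuffled-label baselines."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        shuffled = list(labels)
        rng.shuffle(shuffled)  # keeps cluster sizes, randomises membership
        total += purity(shuffled, categories)
    return purity(labels, categories) - total / trials


# Twelve documents in two categories.
categories = ["x"] * 6 + ["y"] * 6
coarse = [0] * 6 + [1] * 6        # two clusters that match the categories
singletons = list(range(12))      # one document per cluster: an ineffective clustering

for name, labels in [("coarse", coarse), ("singletons", singletons)]:
    print(name, purity(labels, categories), purity_divergence(labels, categories))
```

Both clusterings have a raw Purity of 1.0, but the singleton clustering's random baseline is also 1.0, so its divergence is zero, while the two-cluster solution keeps a positive divergence.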

Experiments are conducted on the INEX 2009 XML Mining track dataset, which contains roughly 12,000 XML documents organised into ten thematic categories. Multiple clustering algorithms (K‑means, hierarchical agglomerative, spectral clustering, etc.) are run with cluster counts ranging from 5 to 200. Results show that without DRB many standard metrics inflate scores as cluster count increases, masking the presence of ineffective clusterings. After applying DRB, scores are normalised similarly to NMI, but the method works with any metric, including RMSE, which now exhibits a distinct optimum. In the ad‑hoc retrieval experiments, only clusterings with positive DRB values improve query performance; those with negative values actually degrade it.

The study demonstrates that DRB effectively eliminates the “random‑cluster bias” inherent in many evaluation measures, offering a universal normalisation technique that does not rely on external labels. It can be applied to any clustering domain, including those lacking ground‑truth categories, making it especially valuable for unsupervised learning scenarios. The authors discuss limitations such as the need for accurate replication of size distributions in the random baseline for highly imbalanced data, and propose future work extending DRB to image clustering, social‑network community detection, and multi‑objective optimisation frameworks.

In conclusion, Divergence from a Random Baseline provides a robust, label‑independent way to assess whether a clustering delivers genuine informational gain over chance. It clarifies optimal cluster numbers, aligns intrinsic and extrinsic evaluations, and can be seamlessly integrated into existing evaluation pipelines, thereby enhancing the reliability of clustering research across a wide range of applications.