Multivariate information measures: an experimentalist's perspective

Information theory is widely accepted as a powerful tool for analyzing complex systems, and it has been applied in many disciplines. Recently, some central components of information theory, the multivariate information measures, have found expanded use in the study of several phenomena. These information measures differ in subtle yet significant ways. Here, we review the information theory behind each measure and examine the differences between the measures by applying them to several simple model systems. In addition to these systems, we illustrate the usefulness of the information measures by analyzing neural spiking data from a dissociated culture through early stages of its development. We hope that this work will aid other researchers as they seek the best multivariate information measure for their specific research goals and system. Finally, we have made software available online that allows the user to calculate all of the information measures discussed within this paper.

💡 Research Summary

This paper provides a comprehensive review and practical comparison of multivariate information measures from the perspective of an experimentalist. The authors begin by reminding the reader that information theory is a model‑independent framework that relies solely on probability distributions, making it applicable across a wide range of scientific disciplines. While the univariate entropy and bivariate mutual information are well‑understood, the landscape of measures that quantify interactions among three or more variables is fragmented: different research groups have introduced a variety of quantities under different names and notations, often leading to confusion about what each measure actually quantifies.

The core of the manuscript systematically introduces the most commonly used multivariate information measures, explains their mathematical definitions, and discusses their conceptual interpretations, especially with respect to “synergy” (information that is only available when variables are considered together) and “redundancy” (information that is shared across variables). The measures covered include:

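- Interaction Information (II) and Co‑information – extensions of mutual information to three or more variables. Interaction information is positive for synergy and negative for redundancy; co‑information differs from it only by a factor of (−1)^N, where N is the number of variables, so for an odd number of variables (including the common three‑variable case) the two have opposite signs, and the convention in use must be checked before a value is interpreted.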

- Total Correlation (TC) – also known as multi‑information, defined as the Kullback‑Leibler divergence between the joint distribution and the product of the marginals; it captures the overall dependence among a set of variables.

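- Dual Total Correlation (DTC) – a complement to TC, defined as the joint entropy minus the sum of each variable's entropy conditioned on all the other variables; it therefore counts the part of the joint entropy that is not exclusive to any single variable. It is sometimes called excess entropy or binding information.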

- ΔI – introduced by Nirenberg and Latham to quantify the cost of ignoring correlations in neural coding; it is the Kullback‑Leibler divergence, averaged over predictor states, between the true conditional distribution of the target given the predictors and a model that assumes the predictors are conditionally independent given the target.

- Redundancy‑Synergy Index (RSI) – the mutual information that the full set of predictors carries about the target minus the sum of the mutual informations carried by each predictor individually; positive values indicate synergy, negative values indicate redundancy.

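- Varadan’s Synergy (VS) – the mutual information that the full set of predictors carries about the target minus the largest summed mutual information obtainable by splitting the predictors into disjoint groups (the maximum over partitions of the predictor set); with two predictors the only such split is into the individual predictors, so VS reduces to interaction information.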

- Partial Information Decomposition (PID) – a framework that decomposes the information that a set of predictors provides about a target into unique, shared (redundant), and synergistic components.
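The entropy-based definitions in the list above can be made concrete with a short sketch. This Python is illustrative only and is not the authors' MATLAB toolbox: it assumes the joint distribution is already known and stored as a dictionary mapping outcome tuples to probabilities, and every function name is hypothetical. It follows the sign conventions above (positive II and RSI mean synergy); VS is omitted because, for two predictors, it coincides with II.

```python
from collections import defaultdict
from math import log2


def marginal(pmf, idx):
    """Marginal distribution over the variable positions listed in idx."""
    out = defaultdict(float)
    for outcome, p in pmf.items():
        out[tuple(outcome[i] for i in idx)] += p
    return dict(out)


def entropy(pmf, idx):
    """Shannon entropy, in bits, of the variables at positions idx."""
    return -sum(p * log2(p) for p in marginal(pmf, idx).values() if p > 0)


def mutual_info(pmf, a, b):
    """I(A;B) = H(A) + H(B) - H(A,B) for disjoint index tuples a and b."""
    return entropy(pmf, a) + entropy(pmf, b) - entropy(pmf, a + b)


def total_correlation(pmf, idx):
    """TC = sum_i H(X_i) - H(X_1, ..., X_n)."""
    return sum(entropy(pmf, (i,)) for i in idx) - entropy(pmf, idx)


def dual_total_correlation(pmf, idx):
    """DTC = H(X_1, ..., X_n) - sum_i H(X_i | all other variables)."""
    joint = entropy(pmf, idx)
    # H(X_i | rest) = H(all) - H(rest): the entropy exclusive to variable i.
    exclusive = sum(joint - entropy(pmf, tuple(j for j in idx if j != i)) for i in idx)
    return joint - exclusive


def interaction_information(pmf, s1, s2, x):
    """II = I({S1,S2};X) - I(S1;X) - I(S2;X); > 0 synergy, < 0 redundancy."""
    return (mutual_info(pmf, (s1, s2), (x,))
            - mutual_info(pmf, (s1,), (x,))
            - mutual_info(pmf, (s2,), (x,)))


def redundancy_synergy_index(pmf, preds, x):
    """RSI = I({S};X) - sum_i I(S_i;X) over the predictor positions preds."""
    preds = tuple(preds)
    whole = mutual_info(pmf, preds, (x,))
    return whole - sum(mutual_info(pmf, (i,), (x,)) for i in preds)


def delta_i(pmf, preds, x):
    """Delta I = sum_s p(s) KL(p(x|s) || p_ind(x|s)),
    with p_ind(x|s) proportional to p(x) * prod_i p(s_i|x)."""
    preds = tuple(preds)
    p_x = {k[0]: v for k, v in marginal(pmf, (x,)).items() if v > 0}
    p_s = marginal(pmf, preds)
    p_sx = marginal(pmf, preds + (x,))
    p_six = [marginal(pmf, (i, x)) for i in preds]  # p(s_i, x) for each predictor
    total = 0.0
    for s, ps in p_s.items():
        if ps <= 0:
            continue
        # Unnormalized conditional-independence model: p(x) * prod_i p(s_i | x).
        q = {}
        for xv, px in p_x.items():
            q[xv] = px
            for k, joint in enumerate(p_six):
                q[xv] *= joint.get((s[k], xv), 0.0) / px
        z = sum(q.values())
        for xv in p_x:
            p_true = p_sx.get(s + (xv,), 0.0) / ps
            if p_true > 0:  # q[xv] > 0 whenever p(s, x) > 0
                total += ps * p_true * log2(p_true * z / q[xv])
    return total


# Hypothetical example: XOR gate with independent, uniformly random inputs.
xor = {(s1, s2, s1 ^ s2): 0.25 for s1 in (0, 1) for s2 in (0, 1)}
print(total_correlation(xor, (0, 1, 2)))       # 1.0
print(dual_total_correlation(xor, (0, 1, 2)))  # 2.0
print(interaction_information(xor, 0, 1, 2))   # 1.0
print(delta_i(xor, (0, 1), 2))                 # 1.0
```

For comparison, a three-way copy of one fair bit gives TC = 2 bits, DTC = 1 bit, II = RSI = −1 bit and ΔI = 0 under this sketch, while XOR gives TC = 1 bit and DTC = 2 bits: TC weights redundant structure more heavily and DTC weights synergistic structure more heavily.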

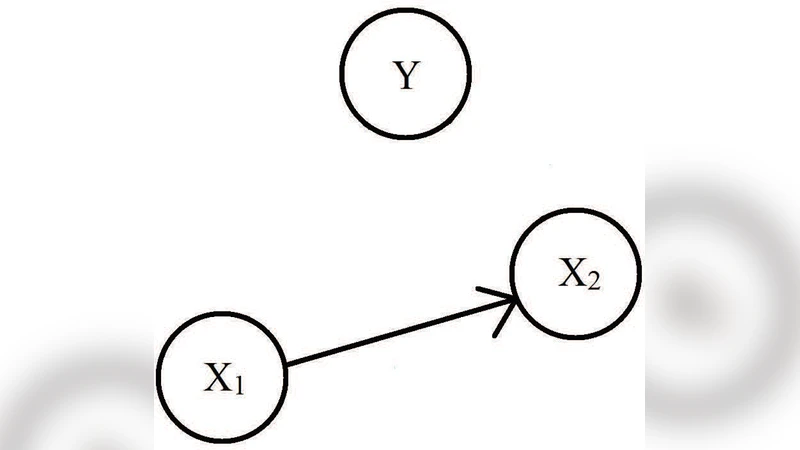

After laying out the theoretical background, the authors apply each measure to four synthetic model systems: a purely synergistic XOR gate, an AND gate (whose output carries both redundant and synergistic information about its inputs), a system with mixed interactions, and continuous multivariate Gaussian variables. These examples reveal how different measures respond to the same underlying statistical structure. For instance, II and co‑information can disagree in sign depending on the parity of the number of variables; TC aggregates all pairwise and higher‑order dependencies into a single number and so cannot distinguish among them; and DTC counts only the portion of the joint entropy that is shared by two or more variables. ΔI is zero when the predictors are conditionally independent given the target, while RSI and VS, which compare the whole predictor set against different groupings of its members (the individual predictors for RSI, the best partition for VS), can yield contradictory assessments of synergy versus redundancy for the same system.
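The contrast between the XOR and AND gates can be reproduced with a small, self-contained sketch. It is an illustration under stated assumptions, not the paper's code: the two inputs are independent fair bits, the joint distribution is a Python dictionary, and PID uses the Williams-Beer I_min redundancy measure, which is one of several proposed redundancy measures (the summary does not say which one the authors use). All function names are hypothetical.

```python
from collections import defaultdict
from math import log2


def marginal(pmf, idx):
    """Marginal distribution over the variable positions listed in idx."""
    out = defaultdict(float)
    for outcome, p in pmf.items():
        out[tuple(outcome[i] for i in idx)] += p
    return dict(out)


def mi(pmf, a, b):
    """I(A;B) in bits for disjoint index tuples a and b."""
    def h(idx):
        return -sum(p * log2(p) for p in marginal(pmf, idx).values() if p > 0)
    return h(a) + h(b) - h(a + b)


def interaction_information(pmf, s1, s2, x):
    """II = I({S1,S2};X) - I(S1;X) - I(S2;X); > 0 synergy, < 0 redundancy."""
    return mi(pmf, (s1, s2), (x,)) - mi(pmf, (s1,), (x,)) - mi(pmf, (s2,), (x,))


def specific_info(pmf, x_val, s, x):
    """Specific information I(X = x_val; S) = sum_s p(s|x) log2(p(x|s) / p(x))."""
    p_x = marginal(pmf, (x,))[(x_val,)]
    p_s = marginal(pmf, s)
    p_sx = marginal(pmf, s + (x,))
    total = 0.0
    for s_val, ps in p_s.items():
        joint = p_sx.get(s_val + (x_val,), 0.0)
        if joint > 0:
            total += (joint / p_x) * log2((joint / ps) / p_x)
    return total


def pid_two_predictors(pmf, s1, s2, x):
    """Two-predictor PID using the I_min redundancy measure of Williams and Beer."""
    red = sum(px * min(specific_info(pmf, xv, (s1,), x), specific_info(pmf, xv, (s2,), x))
              for (xv,), px in marginal(pmf, (x,)).items() if px > 0)
    u1 = mi(pmf, (s1,), (x,)) - red
    u2 = mi(pmf, (s2,), (x,)) - red
    syn = mi(pmf, (s1, s2), (x,)) - u1 - u2 - red
    return {"redundancy": red, "unique_1": u1, "unique_2": u2, "synergy": syn}


# Hypothetical gates: two independent fair input bits (positions 0, 1), output at position 2.
gates = {
    "XOR": {(a, b, a ^ b): 0.25 for a in (0, 1) for b in (0, 1)},
    "AND": {(a, b, a & b): 0.25 for a in (0, 1) for b in (0, 1)},
}
for name, pmf in gates.items():
    parts = pid_two_predictors(pmf, 0, 1, 2)
    print(name, "II =", round(interaction_information(pmf, 0, 1, 2), 3),
          {k: round(v, 3) for k, v in parts.items()})
```

Under these assumptions XOR yields II = 1 bit, all of which PID assigns to synergy, whereas AND yields II ≈ 0.19 bits that PID splits into roughly 0.31 bits of redundancy and 0.5 bits of synergy. A single signed number can therefore hide a mixture of both kinds of interaction.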

The most compelling part of the study is the analysis of real experimental data: multi‑electrode recordings from a dissociated neuronal culture during early development. Spike trains are binarized in 10 ms windows, yielding binary variables for ~50 neurons. The authors compute all the multivariate measures across successive developmental days. They find that TC and DTC increase markedly as the network matures, reflecting a rise in global synchrony. In contrast, RSI and VS highlight specific small subnetworks that exhibit positive synergy, suggesting the emergence of functional clusters. ΔI remains low in early stages but grows later, indicating that ignoring pairwise correlations becomes increasingly costly for decoding stimulus‑related information. These complementary patterns demonstrate that no single measure captures all aspects of network dynamics; instead, a suite of measures provides a richer, multidimensional picture.
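The binarization step described above can be sketched as follows. This is a hypothetical illustration, not the authors' pipeline: it assumes spike times arrive as (neuron index, time in milliseconds) pairs and marks a bin as 1 if the neuron fired at least once in it. Times are kept in milliseconds so that bin edges such as 30 ms divide exactly by a 10 ms bin width. The binary patterns then give a plug-in estimate of the joint distribution of any chosen group of neurons, which is the input the measures above require.

```python
from collections import Counter
from math import ceil


def binarize_spikes(spike_times_ms, n_neurons, duration_ms, bin_ms=10.0):
    """Return a raster (one row per neuron) of 0/1 values per time bin.

    spike_times_ms: iterable of (neuron_index, spike_time_in_ms) pairs.
    A bin is 1 if the neuron fired at least once in it; extra spikes are clipped.
    """
    n_bins = ceil(duration_ms / bin_ms)
    raster = [[0] * n_bins for _ in range(n_neurons)]
    for neuron, t in spike_times_ms:
        b = int(t // bin_ms)
        if 0 <= b < n_bins:  # ignore spikes outside the recording window
            raster[neuron][b] = 1
    return raster


def pattern_pmf(raster, neurons):
    """Plug-in estimate of the joint distribution of the chosen neurons' binary states."""
    patterns = list(zip(*(raster[n] for n in neurons)))
    counts = Counter(patterns)
    total = len(patterns)
    return {pattern: c / total for pattern, c in counts.items()}


# Hypothetical toy recording: 3 neurons, 40 ms, 10 ms bins.
spikes = [(0, 3.2), (1, 4.0), (0, 15.1), (2, 27.9)]
raster = binarize_spikes(spikes, n_neurons=3, duration_ms=40.0)
print(raster)                       # [[1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 1, 0]]
print(pattern_pmf(raster, (0, 1)))  # {(1, 1): 0.25, (1, 0): 0.25, (0, 0): 0.5}
```

With roughly 50 neurons the number of possible joint patterns grows as 2^50, so in practice such estimates are usually formed for small groups of neurons rather than the whole culture at once.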

To facilitate adoption, the authors release a MATLAB toolbox that implements all the discussed measures with a unified interface. The toolbox handles probability estimation (including bias correction), computes each metric, and provides basic visualization tools. By making the code publicly available, the authors lower the barrier for experimentalists in neuroscience, genetics, physics, and other fields to apply sophisticated multivariate information analyses to their own data.
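Plug-in (frequency-count) entropy estimates are biased downward for finite samples, which is why bias correction matters. The summary does not say which correction the toolbox applies, so the sketch below uses the Miller-Madow correction purely as a familiar example: it adds (m − 1)/(2N) nats, i.e. (m − 1)/(2N ln 2) bits, where m is the number of observed states and N the number of samples. Function names are hypothetical.

```python
from collections import Counter
from math import log, log2


def plugin_entropy(samples):
    """Maximum-likelihood (plug-in) entropy, in bits, of a list of hashable samples."""
    counts = Counter(samples)
    n = len(samples)
    return -sum((c / n) * log2(c / n) for c in counts.values())


def miller_madow_entropy(samples):
    """Plug-in entropy plus the Miller-Madow correction (m - 1) / (2 N ln 2) bits."""
    n = len(samples)
    m = len(set(samples))  # number of states observed at least once
    return plugin_entropy(samples) + (m - 1) / (2 * n * log(2))


def miller_madow_mi(a, b):
    """Mutual information I(A;B) assembled from Miller-Madow-corrected entropies."""
    joint = list(zip(a, b))
    return miller_madow_entropy(a) + miller_madow_entropy(b) - miller_madow_entropy(joint)


# Hypothetical four-sample example.
a = [0, 0, 1, 1]
b = [0, 1, 0, 1]
print(plugin_entropy(a))      # 1.0
print(miller_madow_mi(a, b))  # negative, about -0.18 bits
```

Because each entropy term is corrected separately, a corrected mutual information can come out slightly negative for small samples, as it does in this example.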

In conclusion, the paper argues that the choice of multivariate information measure should be guided by the specific scientific question. If the goal is a quick assessment of overall dependence, TC or DTC are computationally efficient and informative. When the research focus is on disentangling synergistic versus redundant contributions, PID offers the most detailed decomposition, albeit at higher computational cost. The authors’ systematic comparison, together with the open‑source software, equips researchers with both the conceptual clarity and practical tools needed to select and apply the most appropriate multivariate information measure for their particular system and hypothesis.
