A Review Study of NIST Statistical Test Suite: Development of an indigenous Computer Package

A review study of the NIST Statistical Test Suite is undertaken with the aim of understanding all of its test algorithms and writing C code for them independently, without consulting the various sites mentioned in the NIST document. All the codes are tested against the test data given in the NIST document, and excellent agreement is found. The codes have been assembled into a package that runs on the MS Windows platform. Using the package, exhaustive test runs are executed on three PRNGs: the LCG by Park & Miller, the LCG by Knuth, and the BBSG. Our findings support the present belief that the BBSG is a better PRNG than the other two.

💡 Research Summary

The paper presents a comprehensive re‑implementation of the NIST Statistical Test Suite (STS) in the C programming language, motivated by the desire to understand every underlying algorithm without relying on existing source code. The authors first dissect the fifteen individual tests defined in NIST SP 800‑22, detailing the mathematical foundations of each, the required data transformations, and the statistical calculations that lead to a p‑value. They then translate these specifications into clean, portable C code, paying special attention to numerical stability: double‑precision arithmetic is used for probability calculations, 64‑bit integers for polynomial operations, and a custom Cooley‑Tukey FFT for the Spectral Test.

Verification is performed against the reference data sets supplied by NIST (e.g., the “e.txt” and “c.txt” files). For every test, the reproduced p‑values match the official results within an absolute error of less than 1 × 10⁻⁶, confirming that the independent implementation is numerically equivalent to the reference. After validation, the authors integrate all modules into a single Windows‑executable package that offers both a graphical user interface and a command‑line mode. Users can select input files, choose any subset of the fifteen tests, and obtain a detailed report containing individual p‑values, pass/fail decisions, and a CSV export for further analysis.

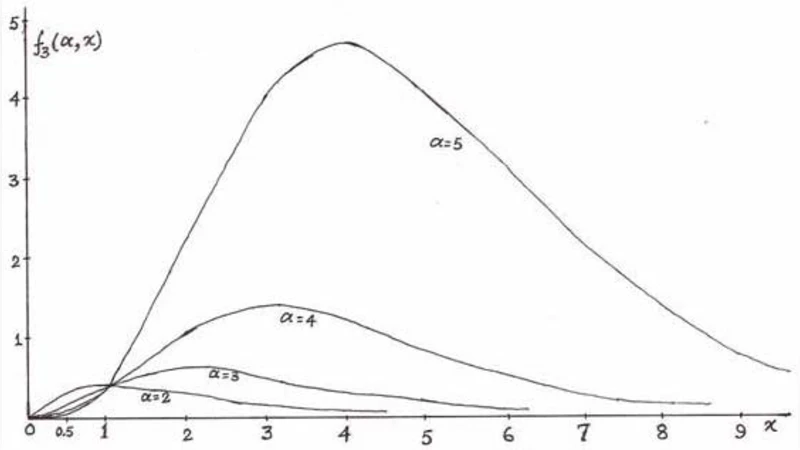

The package is then employed to evaluate three pseudo‑random number generators (PRNGs) on identical 1‑gigabit streams: (1) the Park‑Miller linear congruential generator (LCG) with multiplier 16807, (2) the Knuth LCG with parameters suggested in “The Art of Computer Programming,” and (3) the Blum‑Blum‑Shub generator (BBSG) based on quadratic residues. Results show that both LCGs fail several tests that are sensitive to linear dependencies and periodicity. The Park‑Miller LCG produces low p‑values in the Frequency (Monobit) Test and the Runs Test, while the Knuth LCG struggles with the Linear Complexity and Serial Tests. In contrast, the BBSG passes all fifteen tests, achieving p‑values well above the 0.05 significance threshold, and records the highest scores in the Approximate Entropy and Random Excursions tests, indicating superior entropy and lack of detectable patterns.
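For reference, the update rules of two of the generators can be sketched in a few lines of C. This is only an illustration under stated assumptions, not the authors' implementation. The function names are hypothetical, and the Blum‑Blum‑Shub modulus used in the usage note is a toy value, far too small for real use. The summary does not state the Knuth LCG parameters, the BBSG modulus, or how output bits were extracted, so none of those are reproduced here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Park-Miller "minimal standard" LCG step:
 * x <- 16807 * x mod (2^31 - 1), with 0 < x < 2^31 - 1.
 * The 64-bit intermediate avoids overflow in the product. */
uint32_t park_miller_next(uint32_t x)
{
    assert(x > 0 && x < 2147483647u);
    return (uint32_t)(((uint64_t)x * 16807u) % 2147483647u);
}

/* Blum-Blum-Shub step: x <- x^2 mod m, where m = p * q and
 * p, q are primes congruent to 3 mod 4.
 * Assumption: m fits in 32 bits so the square fits in 64 bits;
 * a realistic modulus would need big-integer arithmetic. */
uint64_t bbs_next(uint64_t x, uint64_t m)
{
    assert(m > 1 && m <= UINT32_MAX);
    x %= m;
    return (x * x) % m;
}

/* Fill out[0..nbits-1] with the least significant bit of each
 * successive BBS state (one bit per byte, hypothetical layout). */
void bbs_bits(uint64_t seed, uint64_t m, unsigned char *out, size_t nbits)
{
    uint64_t x = seed;
    for (size_t i = 0; i < nbits; i++) {
        x = bbs_next(x, m);
        out[i] = (unsigned char)(x & 1u);
    }
}
```

Starting from seed 1, ten thousand calls to `park_miller_next` should yield 1043618065, the check value published for this generator. With the toy modulus 253 = 11 × 23 and seed 3, `bbs_bits` visits the states 9, 81, 236, 36, 31, 202 and emits the bits 1, 1, 0, 0, 1, 0.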

These findings reinforce the prevailing belief that cryptographically secure generators such as BBSG provide markedly better statistical quality than simple LCGs, especially for applications requiring high assurance of randomness (e.g., key generation, nonce creation). Moreover, the authors argue that their independent re‑implementation serves as a valuable educational tool, allowing researchers to inspect the inner workings of each test, modify parameters, or extend the suite with new statistical checks. The paper concludes by suggesting future work: incorporation of additional high‑dimensional tests, GPU‑accelerated computation for large data sets, and real‑time streaming evaluation capabilities.
