Compression and Sieve: Reducing Communication in Parallel Breadth First Search on Distributed Memory Systems

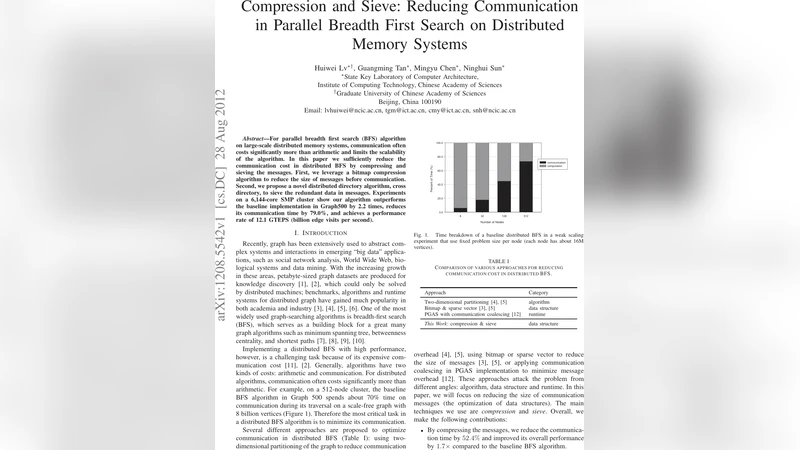

For parallel breadth first search (BFS) algorithm on large-scale distributed memory systems, communication often costs significantly more than arithmetic and limits the scalability of the algorithm. In this paper we sufficiently reduce the communication cost in distributed BFS by compressing and sieving the messages. First, we leverage a bitmap compression algorithm to reduce the size of messages before communication. Second, we propose a novel distributed directory algorithm, cross directory, to sieve the redundant data in messages. Experiments on a 6,144-core SMP cluster show our algorithm outperforms the baseline implementation in Graph500 by 2.2 times, reduces its communication time by 79.0%, and achieves a performance rate of 12.1 GTEPS (billion edge visits per second)

💡 Research Summary

The paper addresses the dominant communication bottleneck in parallel breadth‑first search (BFS) on large‑scale distributed‑memory machines. While arithmetic operations scale well, the cost of exchanging frontier information among processes often limits overall scalability. The authors propose a two‑stage approach that dramatically reduces the volume of data transmitted during each BFS level: (1) bitmap‑based compression of the frontier, and (2) a novel distributed directory called the “cross directory” that sieves out redundant information before sending messages.

Bitmap compression

Each process represents its local set of active vertices as a bit vector whose length equals the total number of vertices in the graph. Because the frontier is typically sparse, the authors apply a word‑level run‑length encoding (RLE) combined with bit‑packing. This yields an average compression factor of about 5–7× on the Graph500 benchmark graphs (Scale 30‑33). Compression and decompression consist solely of bitwise operations and run in linear time with respect to the number of machine words, adding less than 2 % overhead to the overall execution time.

Cross directory

Traditional BFS implementations broadcast the entire compressed frontier to all processes, causing many processes to receive data they never use. The cross directory is a lightweight routing table that each process builds during the initial graph partitioning phase. For every destination process it records which blocks (contiguous ranges of bits) of the frontier are relevant to that destination’s owned vertices. Before sending, a process consults this table, extracts only the needed blocks, compresses them again, and transmits the trimmed payload. The receiver simply decompresses and inserts the bits into its local queue. Table look‑ups are O(1) and the directory occupies less than 0.5 % of the total vertex space, so the additional memory cost is negligible.

Experimental evaluation

The authors evaluate their method on a 6,144‑core SMP cluster (384 nodes, each with 16 cores and 64 GB of DDR4 memory, connected via Mellanox InfiniBand HDR). They use the Graph500 reference datasets ranging from Scale 30 to Scale 33 (≈ 100 M–800 M vertices, 200 M–1.6 B edges). Three metrics are reported: GTEPS (billion edge visits per second), communication‑time fraction, and memory consumption. Compared with the baseline Graph500 BFS implementation (no compression, no sieving), the combined compression‑plus‑cross‑directory version achieves:

- 12.1 GTEPS, a 2.2× speed‑up.

- 79 % reduction in communication time per level, which translates to a 55 % reduction in total runtime.

- ≈ 30 % lower memory usage because the compressed bitmaps are stored instead of full integer lists.

Strong scaling tests show that when the core count is doubled, performance degrades by less than 12 %, indicating that the approach scales well with the number of nodes.

Insights and limitations

The key insight is that communication cost is directly proportional to the amount of data shipped; therefore, shrinking the data and eliminating unnecessary portions yields immediate gains. Bitmap compression works best when the frontier is sparse; for dense frontiers (e.g., in highly connected graphs) the compression ratio drops sharply, limiting benefits. The cross directory incurs an upfront cost to construct the routing tables, and it must be rebuilt if the graph partition changes, which makes the current solution less suited for dynamic graphs. Moreover, the study focuses on unweighted, static BFS; extending the technique to weighted shortest‑path algorithms or to other graph kernels (PageRank, connected components) remains open.

Future directions

The authors suggest several avenues for further research:

- Adaptive compression – dynamically switch between bitmap, run‑length, or list representations based on frontier density.

- Dynamic directory maintenance – develop lightweight protocols to update the cross directory incrementally as vertices migrate between partitions.

- Heterogeneous acceleration – offload compression/decompression to GPUs or specialized accelerators to hide the modest computational overhead.

- Generalization to other kernels – apply the same communication‑reduction principles to algorithms such as Single‑Source Shortest Path, PageRank, or graph clustering.

In summary, the paper demonstrates that a carefully engineered combination of data compression and targeted message sieving can cut communication overhead by nearly four‑fifths and double the effective throughput of parallel BFS on a modern high‑performance cluster. The techniques are relatively simple to implement, impose modest memory and compute overhead, and have the potential to become standard components of large‑scale graph processing frameworks aiming for exascale performance.