Unsupervised Discovery of Mid-Level Discriminative Patches

The goal of this paper is to discover a set of discriminative patches which can serve as a fully unsupervised mid-level visual representation. The desired patches need to satisfy two requirements: 1) to be representative, they need to occur frequently enough in the visual world; 2) to be discriminative, they need to be different enough from the rest of the visual world. The patches could correspond to parts, objects, “visual phrases”, etc. but are not restricted to be any one of them. We pose this as an unsupervised discriminative clustering problem on a huge dataset of image patches. We use an iterative procedure which alternates between clustering and training discriminative classifiers, while applying careful cross-validation at each step to prevent overfitting. The paper experimentally demonstrates the effectiveness of discriminative patches as an unsupervised mid-level visual representation, suggesting that it could be used in place of visual words for many tasks. Furthermore, discriminative patches can also be used in a supervised regime, such as scene classification, where they demonstrate state-of-the-art performance on the MIT Indoor-67 dataset.

💡 Research Summary

The paper tackles the long‑standing problem of obtaining a mid‑level visual representation without any supervision. Traditional unsupervised pipelines such as bag‑of‑visual‑words (BoW) focus on “representativeness” – i.e., clustering frequently occurring local descriptors – but they ignore how well a cluster separates itself from the rest of the visual world. The authors argue that a useful mid‑level element must satisfy two complementary criteria: (1) it should appear often enough in natural images (representative) and (2) it should be sufficiently distinct from other visual patterns (discriminative). To meet both goals, they formulate an unsupervised discriminative clustering problem and propose an iterative algorithm that alternates between clustering and training discriminative classifiers, while rigorously applying cross‑validation at each step to avoid over‑fitting.

Data preparation and initial clustering

A large collection of images is sampled, and a very large number of patches are extracted from it at random; the abstract speaks only of a “huge dataset of image patches”, so a patch size such as 64×64 pixels should be read as illustrative. An initial k‑means clustering partitions the patches into K groups, with K typically large. At this stage the clusters are noisy: each one merely aggregates patches that look roughly alike, with no guarantee that it is either frequent enough or well separated from the other clusters.
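As a rough illustration of this initialization stage, the sketch below samples random patches from a toy image and groups them with a plain Lloyd's k‑means. It is a minimal standard-library example, not the authors' code: the helper names (`extract_patches`, `kmeans`), the raw-pixel features, and the toy sizes are all assumptions chosen for readability.

```python
import random

def extract_patches(image, size, n, rng):
    """Sample n random size x size patches from a 2-D list image, flattened to vectors."""
    h, w = len(image), len(image[0])
    patches = []
    for _ in range(n):
        top = rng.randrange(h - size + 1)
        left = rng.randrange(w - size + 1)
        patches.append([image[top + i][left + j]
                        for i in range(size) for j in range(size)])
    return patches

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, iters, rng):
    """Plain Lloyd's algorithm: assign to nearest centroid, then recompute means."""
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c]))
                  for p in points]
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties out
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids

# Toy data (assumed): one 8x8 "image" of random intensities.
rng = random.Random(0)
image = [[rng.random() for _ in range(8)] for _ in range(8)]
patches = extract_patches(image, size=4, n=20, rng=rng)
labels, centroids = kmeans(patches, k=3, iters=5, rng=rng)
```

In a realistic setting the patches would be described by a local descriptor rather than raw pixels, and K would be far larger; the structure of the step stays the same.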

Discriminative clustering loop

For each cluster, a linear SVM (or a similar linear discriminant) is trained: the patches belonging to the cluster are treated as positive examples, while all other patches constitute the negative set. The classifier learns a hyperplane that separates the cluster from the rest of the data with maximum margin, thereby turning a purely appearance‑based cluster into a discriminative “visual concept”. Crucially, the authors split the data into a training split and a validation split. After training, each classifier is evaluated on the validation split, which it has not seen; clusters that do not reach a predefined validation accuracy are either discarded or re‑initialized. This cross‑validation step is the key safeguard against the trivial solution in which a classifier simply memorizes its training patches.
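The train-then-validate idea for a single cluster can be sketched as follows. This is a hedged, standard-library illustration: the SVM is trained by simple subgradient descent on the regularized hinge loss, and the function names, learning rate, regularization strength, toy 2-D data, and the 0.9 accuracy threshold are all assumptions rather than values from the paper.

```python
import random

def train_linear_svm(X, y, epochs=50, lr=0.1, reg=0.01, seed=0):
    """Subgradient descent on the L2-regularized hinge loss; labels y are -1 or +1."""
    rng = random.Random(seed)
    w, b = [0.0] * len(X[0]), 0.0
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            margin = y[i] * (sum(wj * xj for wj, xj in zip(w, X[i])) + b)
            w = [wj * (1 - lr * reg) for wj in w]      # shrinkage from the regularizer
            if margin < 1:                             # hinge loss is active
                w = [wj + lr * y[i] * xj for wj, xj in zip(w, X[i])]
                b += lr * y[i]
    return w, b

def score(w, b, x):
    """Signed distance-like response of the linear classifier."""
    return sum(wj * xj for wj, xj in zip(w, x)) + b

def validation_accuracy(w, b, X, y):
    """Fraction of held-out examples whose predicted sign matches the label."""
    correct = sum(1 for x, t in zip(X, y) if (score(w, b, x) > 0) == (t > 0))
    return correct / len(X)

# Toy data (assumed): cluster members near (2, 2), all other patches near (-2, -2).
rng = random.Random(1)
def blob(cx, cy, n):
    return [[cx + rng.uniform(-0.5, 0.5), cy + rng.uniform(-0.5, 0.5)]
            for _ in range(n)]

pos, neg = blob(2.0, 2.0, 20), blob(-2.0, -2.0, 20)
X_train, y_train = pos[:10] + neg[:10], [1] * 10 + [-1] * 10
X_val, y_val = pos[10:] + neg[10:], [1] * 10 + [-1] * 10

w, b = train_linear_svm(X_train, y_train)
acc = validation_accuracy(w, b, X_val, y_val)
keep_cluster = acc >= 0.9   # hypothetical threshold; failing clusters would be dropped
```

Because the accuracy is measured only on patches the classifier never trained on, a classifier that has merely memorized its positives would score poorly here and its cluster would be discarded.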

The algorithm then proceeds as follows:

- Train discriminative classifiers on the current clusters.

- Score all patches with every classifier, obtaining a response matrix.

- Re‑assign each patch to the classifier that gives it the highest confidence (provided the confidence exceeds a threshold).

- Update cluster centroids (or recompute the set of positive examples) based on the new assignments.

- Validate each updated cluster; prune or merge clusters that fail the validation test.

- Iterate until convergence, i.e., until cluster memberships stop changing appreciably from one round to the next.

Through this loop, noisy patches are gradually filtered out, clusters become more compact, and the two desired properties – frequency and distinctiveness – are simultaneously enforced.
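The steps listed above can be put together in a compact sketch. To keep it short, a difference-of-means linear discriminant stands in for the linear SVM, and validation is done by training on one split and re-forming clusters from detections on the other, then swapping the two. The names (`train_detector`, `refine`), the threshold, the minimum cluster size, and the toy data are assumptions made for illustration only.

```python
def mean(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def train_detector(pos, neg):
    """Stand-in for a linear SVM: difference-of-means linear discriminant."""
    mp, mn = mean(pos), mean(neg)
    w = [a - c for a, c in zip(mp, mn)]
    mid = [(a + c) / 2 for a, c in zip(mp, mn)]
    return w, -sum(wi * mi for wi, mi in zip(w, mid))

def score(det, x):
    w, b = det
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def refine(split_a, split_b, clusters, rounds=4, threshold=0.0, min_members=2):
    """clusters holds lists of indices into the current training split."""
    train, held_out = split_a, split_b
    for _ in range(rounds):
        detectors = []
        for members in clusters:
            inside = set(members)
            pos = [train[i] for i in members]
            neg = [p for i, p in enumerate(train) if i not in inside]
            if pos and neg:
                detectors.append(train_detector(pos, neg))
        # Response matrix: one row per held-out patch, one column per detector.
        responses = [[score(d, x) for d in detectors] for x in held_out]
        new_clusters = [[] for _ in detectors]
        for i, row in enumerate(responses):
            if row:
                best = max(range(len(row)), key=row.__getitem__)
                if row[best] > threshold:          # confident enough to reassign
                    new_clusters[best].append(i)
        # Prune clusters that attract too few held-out patches.
        clusters = [c for c in new_clusters if len(c) >= min_members]
        train, held_out = held_out, train          # swap roles of the two splits
    return clusters

# Toy data (assumed): two clean groups per split plus one mixed initial cluster.
split_a = [[0, 0], [0.2, 0.1], [0.1, 0.3], [5, 5], [5.2, 5.1], [4.9, 5.3]]
split_b = [[0.1, 0.1], [0.3, 0.2], [0, 0.2], [5.1, 5], [5, 5.2], [4.8, 5.1]]
initial = [[0, 1, 2], [3, 4, 5], [0, 3]]
final = refine(split_a, split_b, initial)
```

In this toy run the mixed cluster `[0, 3]` never wins any held-out patch and is pruned after the first round, while the two coherent clusters survive every swap.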

Experimental validation

The authors evaluate the discovered discriminative patches in three contexts:

- Unsupervised image retrieval / classification – Using the patches as a codebook in a BoW‑style histogram yields higher mean average precision than a standard SIFT‑k‑means codebook of comparable size.

- Supervised scene classification (MIT Indoor‑67) – The patches are used as fixed visual words, and a linear SVM is trained on scene labels over the resulting features. The abstract reports state‑of‑the‑art performance on this dataset at the time of publication.

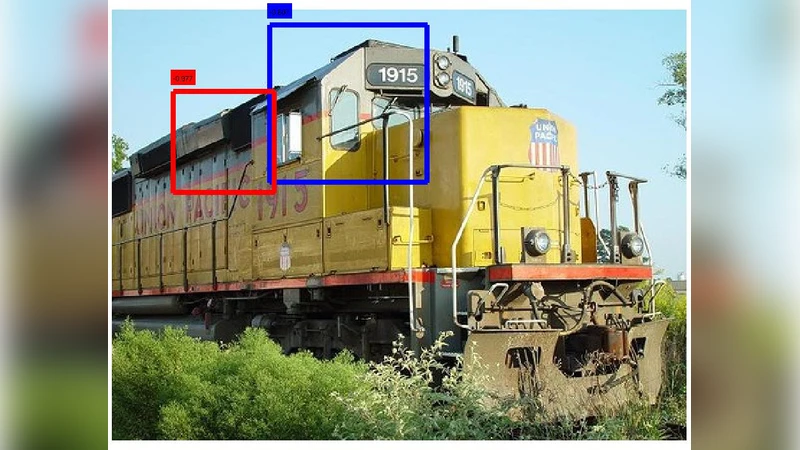

- Qualitative inspection – Visualizations show that some clusters correspond to clean object parts (e.g., car wheels, human faces), while others capture “visual phrases” such as a laptop on a desk or a street lamp with a sign. This demonstrates that the method is not limited to a single semantic granularity.
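The codebook-style use mentioned in the first item above can be illustrated with a small encoding routine: each patch of an image votes for the detector that responds most strongly to it, and the votes form a normalized histogram. This is a sketch under assumptions; the function name `encode_image`, the representation of a detector as a `(weights, bias)` pair, and the zero threshold are hypothetical choices, not details taken from the paper.

```python
def encode_image(patches, detectors, threshold=0.0):
    """Histogram of winning detectors over an image's patches, L1-normalized."""
    hist = [0.0] * len(detectors)
    for x in patches:
        scores = [sum(wi * xi for wi, xi in zip(w, x)) + b for w, b in detectors]
        best = max(range(len(scores)), key=scores.__getitem__)
        if scores[best] > threshold:   # patches no detector fires on are ignored
            hist[best] += 1.0
    total = sum(hist)
    return [h / total for h in hist] if total else hist

# Toy detectors (assumed): one fires along the first axis, one along the second.
detectors = [([1.0, 0.0], 0.0), ([0.0, 1.0], 0.0)]
patches = [[2, 0], [3, 1], [0, 4], [-1, -1]]
features = encode_image(patches, detectors)
```

The resulting vector has one entry per discovered patch type, so it can be fed to any standard classifier or compared across images for retrieval.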

Analysis of strengths and limitations

Strengths – The method elegantly combines clustering (to capture frequency) with discriminative learning (to enforce separability). The cross‑validation step is simple yet powerful, preventing the classic pitfall of over‑fitting in unsupervised settings. The resulting patches are compact, interpretable, and transferable to downstream supervised tasks.

Limitations – The approach requires handling millions of patches, which can be computationally demanding; the authors rely on efficient sampling and parallel SVM training. The choice of K (the number of clusters) still needs manual tuning; while cross‑validation mitigates bad choices, an automatic model‑selection scheme would be desirable. Finally, the current pipeline produces a single layer of mid‑level concepts; extending it to a hierarchy (e.g., stacking discriminative patches to form higher‑level compositions) is an open research direction.

Conclusion

The paper presents a practical, fully unsupervised pipeline for discovering mid‑level discriminative patches that satisfy both representativeness and discriminativeness. By iteratively clustering and training linear classifiers with rigorous validation, the method yields a compact set of visual concepts that outperform traditional visual words in both unsupervised and supervised benchmarks. The work bridges the gap between low‑level local descriptors and high‑level semantic categories, offering a valuable building block for future research in unsupervised representation learning, transfer learning, and vision systems operating with limited annotation.