High-dimensional structure estimation in Ising models: Local separation criterion

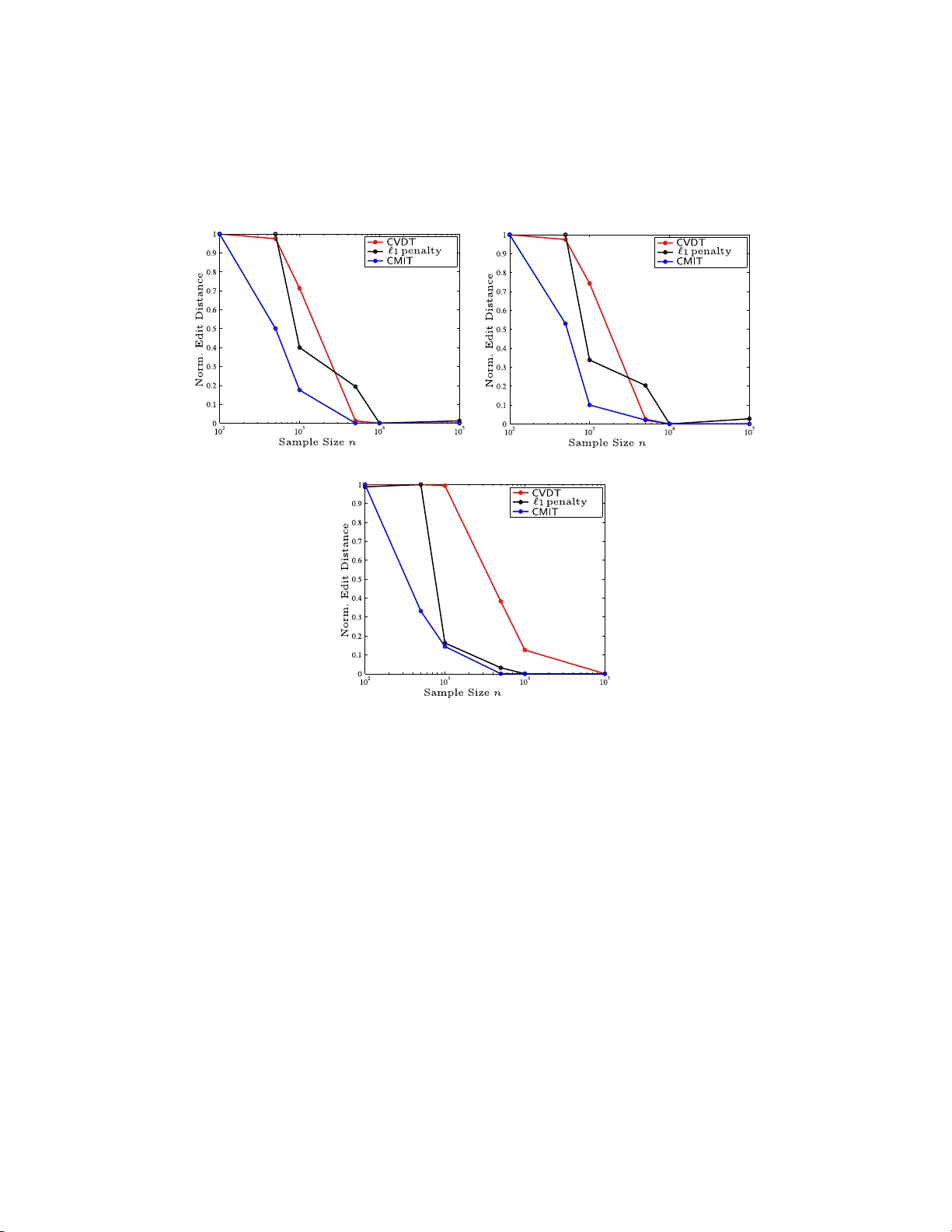

We consider the problem of high-dimensional Ising (graphical) model selection. We propose a simple algorithm for structure estimation based on the thresholding of the empirical conditional variation distances. We introduce a novel criterion for tract…
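The thresholding idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation. It assumes samples take values in {-1, +1}. It also assumes the distance for a pair (i, j) is the absolute difference between the empirical probabilities of X_i = +1 given X_j = +1 and given X_j = -1, minimised over small conditioning sets and their configurations. The names `cond_prob_plus`, `estimate_edges`, `max_sep_size`, and `threshold` are hypothetical and do not come from the source.

```python
from itertools import combinations, product


def cond_prob_plus(samples, i, cond):
    """Empirical P(X_i = +1 | X_k = v for all (k, v) in cond).

    Returns None when the conditioning event never occurs in the samples.
    """
    matching = [x for x in samples if all(x[k] == v for k, v in cond.items())]
    if not matching:
        return None
    return sum(1 for x in matching if x[i] == 1) / len(matching)


def estimate_edges(samples, max_sep_size, threshold):
    """Declare (i, j) an edge when the minimum empirical conditional
    variation distance over conditioning sets of size <= max_sep_size
    exceeds the threshold (both parameters are illustrative assumptions)."""
    p = len(samples[0])
    edges = set()
    for i, j in combinations(range(p), 2):
        others = [k for k in range(p) if k not in (i, j)]
        min_dist = None
        for size in range(max_sep_size + 1):
            for sep in combinations(others, size):
                for config in product((-1, 1), repeat=size):
                    cond = dict(zip(sep, config))
                    p_plus = cond_prob_plus(samples, i, {**cond, j: 1})
                    p_minus = cond_prob_plus(samples, i, {**cond, j: -1})
                    if p_plus is None or p_minus is None:
                        continue  # configuration not observed; skip it
                    dist = abs(p_plus - p_minus)
                    if min_dist is None or dist < min_dist:
                        min_dist = dist
        if min_dist is not None and min_dist > threshold:
            edges.add((i, j))
    return edges
```

The search is exhaustive over conditioning sets, so its cost grows quickly with `max_sep_size`; the sketch is meant for small examples only. On samples drawn from a three-node chain, a pair of non-neighbours has a small distance once the middle node is allowed in the conditioning set, so it falls below the threshold while the true edges stay above it.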

Authors: Animashree Anandkumar, Vincent Y. F. Tan