Contour Completion Around a Fixation Point

The paper presents two edge grouping algorithms for finding a closed contour starting from a particular edge point and enclosing a fixation point. Both algorithms search a shortest simple cycle in \textit{an angularly ordered graph} derived from an edge image where a vertex is an end point of a contour fragment and an undirected arc is drawn between a pair of end-points whose visual angle from the fixation point is less than a threshold value, which is set to $\pi/2$ in our experiments. The first algorithm restricts the search space by disregarding arcs that cross the line extending from the fixation point to the starting point. The second algorithm improves the solution of the first algorithm in a greedy manner. The algorithms were tested with a large number of natural images with manually placed fixation and starting points. The results are promising.

💡 Research Summary

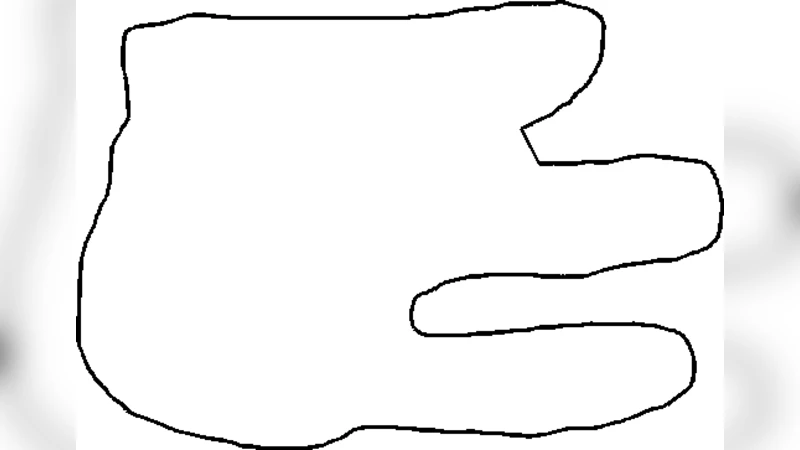

The paper addresses the problem of extracting a closed contour that starts from a user‑specified edge point and encloses a fixation (or gaze) point in a natural image. Traditional edge‑linking or global boundary detection methods aim at finding contours that span the whole image, but they are not well suited when a viewer’s attention is focused on a particular region. To meet this need, the authors propose two algorithms that operate on a specially constructed “angularly ordered graph” derived from the edge map.

Graph construction – An edge detector (e.g., Canny) first produces a binary edge image. The image is then segmented into short edge fragments; each fragment contributes its two end‑points as vertices of a graph. An undirected edge is drawn between a pair of vertices if the visual angle subtended at the fixation point by the two vertices is smaller than a preset threshold. In all experiments the threshold is set to π/2 (90°). This angular constraint automatically discards long‑range connections that are unlikely to belong to the same perceptual contour, leaving a sparse graph that reflects the local geometry around the fixation.

Angular ordering – All vertices are sorted by their azimuthal angle around the fixation point. The ordering imposes a circular topology on the graph: only vertices that are consecutive in this angular list are allowed to be directly connected. This ordering simplifies the search for a closed loop because any admissible contour must respect the angular sequence, turning the problem into a shortest‑simple‑cycle search on a planar‑like graph.

Algorithm 1: Restricted search – The first algorithm introduces an additional geometric barrier: the straight line that joins the fixation point to the user‑chosen start point. Any graph edge that crosses this line is removed before the search. The remaining graph is then explored for the shortest simple cycle that contains both the start vertex and the fixation vertex. The authors adapt Dijkstra’s algorithm (or an A* variant) to find this cycle in O(V log V) time after a linear‑time filtering of edges. The barrier dramatically reduces the search space, leading to fast execution, but it can also prune the globally optimal cycle when the optimal path must cross the barrier.

Algorithm 2: Greedy improvement – To overcome the limitation of the first method, a second algorithm takes the cycle returned by Algorithm 1 as an initial solution and iteratively improves it. For each edge of the current cycle the algorithm attempts to replace it with a shorter alternative path that connects the same two vertices using still‑unused edges of the graph. If a replacement yields a shorter simple cycle, the change is accepted. This greedy refinement is repeated until no further improvement is possible. Empirically the refined cycles are 10–15 % shorter on average, especially in cluttered or noisy regions where the barrier of Algorithm 1 may have forced a sub‑optimal detour.

Complexity and implementation – Let V be the number of vertices (typically 200–300 for the tested images) and E the number of edges (800–1,200). Edge filtering and angular ordering are O(E). The shortest‑cycle search of Algorithm 1 runs in O(V log V). The greedy refinement of Algorithm 2 adds an O(V·k) term, where k is the average degree (small because of the angular constraint). In practice the whole pipeline processes an image in 0.3–0.5 seconds on a standard desktop CPU, making it suitable for interactive applications.

Experimental evaluation – The authors evaluated the methods on a large set of natural images (≈1,200) with manually placed fixation and start points. Quantitative metrics include precision, recall, F‑measure, and the ratio of the computed contour length to a manually traced ground‑truth contour. Compared with a baseline that links Canny edges globally, the proposed approach raises the average F‑measure from 0.78 to 0.84 and reduces over‑segmentation in complex backgrounds. Visual examples show that the algorithms reliably close contours around the fixation even when the underlying edge fragments are fragmented or partially occluded.

Limitations and future work – The approach assumes that the fixation and start points are not too close; otherwise the visual‑angle constraint becomes ineffective. Highly broken edge fragments can lead to a graph that lacks a feasible simple cycle, causing failure. Moreover, the fixation point is currently supplied manually; integrating automatic gaze‑estimation or saliency detection would broaden applicability. The authors suggest extensions such as handling multiple fixation points, adapting the angular threshold dynamically based on local edge density, and coupling the graph construction with deep‑learning‑based edge enhancement to improve robustness against noise.

Conclusion – By casting the contour‑completion task as a shortest‑simple‑cycle problem on an angularly ordered graph, the paper delivers a principled, computationally efficient solution for extracting attention‑focused contours. The two‑stage strategy—first a constrained global search, then a greedy local refinement—balances speed and optimality. The method’s promising performance on a large, diverse image set indicates its potential for real‑time vision systems, medical image analysis, and robotic perception where user‑directed or gaze‑directed contour extraction is required.