Concurrent Models for Object Execution

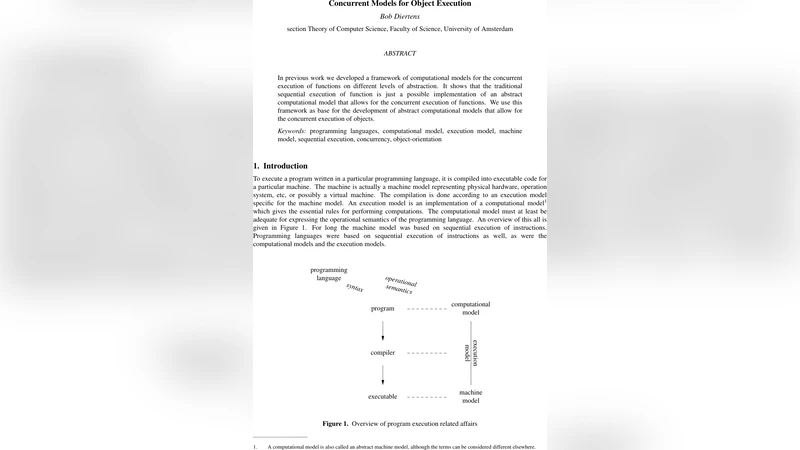

In previous work we developed a framework of computational models for the concurrent execution of functions at different levels of abstraction. It shows that the traditional sequential execution of functions is just one possible implementation of an abstract computational model that allows for the concurrent execution of functions. We use this framework as the basis for developing abstract computational models that allow for the concurrent execution of objects.

💡 Research Summary

The paper builds upon a previously introduced framework for concurrent function execution and extends it to the realm of object‑oriented programming. The authors begin by recalling their earlier model, in which each function call is abstracted as a “task” and the data dependencies among tasks are captured in a directed graph. A scheduler then orders these tasks for parallel execution, treating sequential function execution as just one possible scheduling outcome.
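The task-graph idea can be illustrated with a minimal Python sketch. It is not the authors' scheduler: the function name `run_tasks`, the dictionary-based graph encoding, and the use of a thread pool are all assumptions made for illustration. Each task is a callable, `deps` maps a task to the tasks whose results it needs, and any task whose predecessors have finished may run in parallel.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter


def run_tasks(tasks, deps, max_workers=4):
    """Run callables in dependency order (hypothetical helper).

    tasks: name -> callable taking a dict of its dependencies' results
    deps:  name -> set of task names that must finish first
    """
    sorter = TopologicalSorter({name: deps.get(name, ()) for name in tasks})
    sorter.prepare()  # raises graphlib.CycleError on cyclic dependencies
    results = {}
    pending = {}  # future -> task name
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Submit every task whose predecessors have all completed.
            for name in sorter.get_ready():
                inputs = {d: results[d] for d in deps.get(name, ())}
                pending[pool.submit(tasks[name], inputs)] = name
            # Block until at least one running task finishes.
            done, _ = wait(pending, return_when=FIRST_COMPLETED)
            for future in done:
                name = pending.pop(future)
                results[name] = future.result()
                sorter.done(name)  # may unlock successor tasks
    return results


if __name__ == "__main__":
    tasks = {
        "a": lambda _: 2,
        "b": lambda _: 3,
        "c": lambda r: r["a"] + r["b"],  # needs the results of a and b
    }
    deps = {"c": {"a", "b"}}
    print(run_tasks(tasks, deps))
```

With `max_workers=1` the same graph is executed one task at a time, which mirrors the summary's point that sequential execution is just one admissible schedule.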

To move from functions to objects, the authors identify three fundamental challenges. First, objects encapsulate both state and behavior, so any concurrent execution must guarantee atomic updates to the object’s internal state. Second, method invocations implicitly pass a “this” reference, which introduces the need for per‑object lock management and deadlock avoidance strategies. Third, polymorphism and dynamic dispatch mean that the concrete target of a call is often unknown until runtime, requiring the scheduler to incorporate type information on the fly.

The core contribution is the definition of an “object‑task” abstraction. An object‑task represents the execution of a specific method on a particular object instance; at creation time the target object and method signature are recorded. The system automatically derives a dependency graph for object‑tasks by analyzing read/write access patterns to each object’s fields. This graph is structurally identical to the function‑task graph, allowing the same topological sorting and task‑fusion optimizations to be reused.
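One way to picture the object‑task abstraction is the sketch below. The class `ObjectTask`, its field names, and the explicit `reads`/`writes` sets are assumptions: the summary says the access patterns are derived automatically, whereas here they are declared by hand. Two tasks are treated as conflicting when one writes a field that the other reads or writes, and a later task then depends on the earlier one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectTask:
    """Execution of one method on one object instance (hypothetical model)."""
    name: str
    target: str                     # identifier of the target object
    method: str                     # method signature recorded at creation
    reads: frozenset = frozenset()  # (object, field) pairs read
    writes: frozenset = frozenset() # (object, field) pairs written


def conflicts(a, b):
    """True if the tasks cannot be reordered: write/write or read/write overlap."""
    return bool(a.writes & (b.reads | b.writes) or b.writes & a.reads)


def dependency_graph(tasks):
    """Map each task name to the earlier tasks (in program order) it must follow."""
    graph = {t.name: set() for t in tasks}
    for i, later in enumerate(tasks):
        for earlier in tasks[:i]:
            if conflicts(earlier, later):
                graph[later.name].add(earlier.name)
    return graph


if __name__ == "__main__":
    bal_a, bal_b = ("acct_a", "balance"), ("acct_b", "balance")
    program = [
        ObjectTask("dep_a", "acct_a", "deposit(int)",
                   reads=frozenset({bal_a}), writes=frozenset({bal_a})),
        ObjectTask("dep_b", "acct_b", "deposit(int)",
                   reads=frozenset({bal_b}), writes=frozenset({bal_b})),
        ObjectTask("audit", "bank", "total()",
                   reads=frozenset({bal_a, bal_b})),
    ]
    print(dependency_graph(program))
```

In this example the two deposits touch different objects and so have no edge between them, while the audit must wait for both. The resulting mapping of nodes to predecessor sets is the same shape that Python's `graphlib.TopologicalSorter` accepts, which reflects the summary's remark that the object‑task graph can reuse function‑level topological sorting.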

To enforce consistency, the authors introduce a “shared object controller” for each object. The controller combines a lock manager (supporting read‑write locks) with a version manager that tracks the latest state of the object. When two object‑tasks conflict, the controller can either reschedule one of them or apply optimistic concurrency control in the style of software transactional memory (STM). The paper demonstrates that, under typical workloads, the optimistic path incurs negligible overhead while still preserving linearizability.
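The optimistic path can be sketched roughly as follows. This is a simplified stand-in, not the paper's controller: the class and method names are invented, a plain mutex replaces the read‑write lock manager (Python's standard library has no read‑write lock), and the version manager is reduced to a single counter. A task takes a snapshot, computes a new state without holding any lock, and commits only if the version is unchanged; otherwise it retries.

```python
import threading


class SharedObjectController:
    """Per-object controller with a version counter (hypothetical sketch)."""

    def __init__(self, state):
        self._state = dict(state)
        self._version = 0
        self._lock = threading.Lock()  # stand-in for the lock manager

    def snapshot(self):
        """Return (version, copy of state) as one consistent pair."""
        with self._lock:
            return self._version, dict(self._state)

    def try_commit(self, read_version, new_state):
        """Install new_state only if nobody committed since read_version."""
        with self._lock:
            if self._version != read_version:
                return False  # conflict: caller must retry or reschedule
            self._state = dict(new_state)
            self._version += 1
            return True


def run_optimistic(controller, update, max_retries=1000):
    """Apply update(state) -> new state, retrying when a conflict is detected."""
    for _ in range(max_retries):
        version, state = controller.snapshot()
        if controller.try_commit(version, update(state)):
            return True
    return False


def increment(state):
    return {**state, "count": state["count"] + 1}


if __name__ == "__main__":
    ctrl = SharedObjectController({"count": 0})
    workers = [
        threading.Thread(
            target=lambda: [run_optimistic(ctrl, increment) for _ in range(50)]
        )
        for _ in range(4)
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    print(ctrl.snapshot())
```

Because the update runs outside the lock, conflicting tasks waste some work on retries. That is the usual trade-off of optimistic schemes, and it pays off when conflicts are rare.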

Formal verification is performed using the Lines process algebra. The authors prove two key properties: (1) linearizability of the object‑task execution, meaning that every concurrent history can be reordered into a sequential one that respects real‑time ordering, and (2) deadlock freedom, achieved by a globally consistent lock ordering policy derived from the dependency graph. They also discuss compatibility with various memory‑consistency models, showing that the framework can operate under both strong (sequential consistency) and weak (release‑acquire) guarantees.
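The lock-ordering argument can be illustrated with a small sketch. The summary says the order is derived from the dependency graph; here a fixed creation-time rank per object is assumed instead, and the `Account` class and `transfer` function are hypothetical. Since every task acquires its locks in ascending rank, no cycle of tasks waiting on each other can form.

```python
import itertools
import threading
from contextlib import ExitStack

_ranks = itertools.count()  # assumed global order: creation sequence


class Account:
    def __init__(self, balance):
        self.balance = balance
        self.lock = threading.Lock()
        self.rank = next(_ranks)  # position in the global lock order


def transfer(src, dst, amount):
    """Move amount between accounts, locking both in global rank order."""
    with ExitStack() as stack:
        # The set removes duplicates, so src == dst locks only once.
        for obj in sorted({src, dst}, key=lambda o: o.rank):
            stack.enter_context(obj.lock)
        src.balance -= amount
        dst.balance += amount


if __name__ == "__main__":
    a, b = Account(100), Account(100)
    # Opposite-direction transfers would risk deadlock with naive
    # "lock src, then dst" ordering; rank ordering rules that out.
    t1 = threading.Thread(target=lambda: [transfer(a, b, 1) for _ in range(1000)])
    t2 = threading.Thread(target=lambda: [transfer(b, a, 1) for _ in range(1000)])
    t1.start(); t2.start()
    t1.join(); t2.join()
    print(a.balance, b.balance)
```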

The experimental evaluation consists of a prototype implementation compared against a traditional sequential object execution engine. Benchmarks include parallel quicksort, graph traversal, and a transactional key‑value store. Across these workloads the object‑task model achieves an average speed‑up of 2.3×, with the most pronounced gains in scenarios featuring high inter‑object interaction. Measurements of lock contention and task rescheduling rates indicate that the controller’s optimistic path effectively reduces synchronization overhead.

In conclusion, the paper presents a unified abstract computational model that subsumes the earlier function‑level concurrency framework and provides a systematic method for executing objects concurrently. By treating objects as first‑class concurrent entities, encapsulating state‑access analysis, and integrating both pessimistic (locks) and optimistic (STM) synchronization, the model offers a flexible foundation for future research. The authors outline extensions such as distributed object‑task propagation, integration with garbage collection, and real‑time scheduling guarantees. Overall, the work makes a significant theoretical and practical contribution to the design of high‑performance, concurrent object‑oriented systems.
