Color Image Compression Algorithm Based on the DCT Blocks

This paper evaluates the performance of different block-based discrete cosine transform (DCT) algorithms for compressing color images. The RGB components of the color image are converted to YCbCr before the DCT is applied; Y is the luminance component, and Cb and Cr are the chrominance components. Image blocks are classified as edge blocks or non-edge blocks: edge blocks are then compressed at a low compression level, and non-edge blocks at a high compression level. The analysis results indicate that the suggested method performs much better, with reconstructed images that are less distorted and compressed by a higher factor.

💡 Research Summary

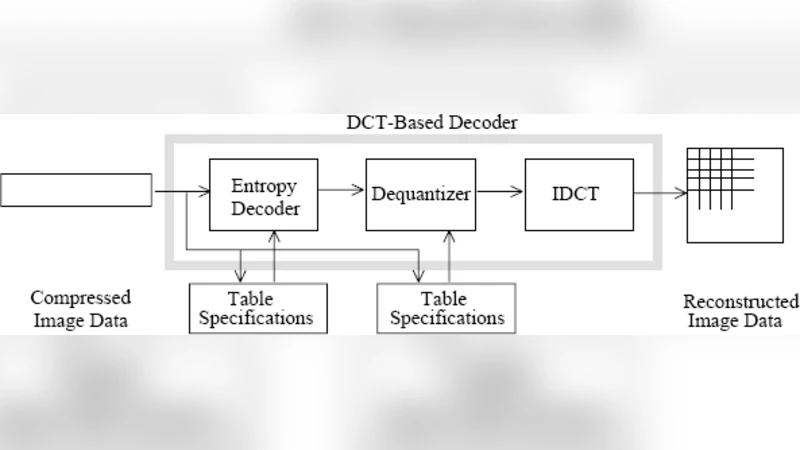

The paper proposes a block‑based image compression scheme that builds on the conventional discrete cosine transform (DCT) pipeline but introduces a content‑adaptive quantization strategy based on edge detection. The workflow consists of four main stages. First, the input RGB image is converted to the YCbCr color space, separating luminance (Y) from chrominance (Cb, Cr) to exploit the human visual system’s greater sensitivity to brightness than to color. Second, the image is partitioned into 8 × 8 blocks and each block undergoes a 2‑D DCT, the same transform that JPEG applies, producing frequency‑domain coefficients. Third, a simple Sobel (or similar) gradient operator is applied to each block to compute an edge‑strength metric; blocks whose metric exceeds a predefined threshold are labeled “edge blocks,” while the remainder are classified as “non‑edge blocks.” This binary classification is intended to distinguish regions where high‑frequency detail is visually important (edges) from smoother regions where aggressive compression is acceptable. Fourth, the two classes receive different quantization tables: edge blocks are quantized with a small step size (i.e., finer quantization) to preserve high‑frequency coefficients, whereas non‑edge blocks are quantized more coarsely, allowing a higher compression ratio. After quantization, the coefficients are entropy‑coded using run‑length encoding followed by Huffman coding, producing the final bitstream.
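The four stages can be illustrated with a minimal, standard-library Python sketch. It is not the authors' implementation: the color conversion assumes the full-range BT.601 matrix used by JFIF, the edge metric is the mean Sobel magnitude over a block's interior pixels, and the names `EDGE_THRESHOLD`, `FINE_STEP`, and `COARSE_STEP` (with their values) are hypothetical. A single uniform step size per class stands in for the two quantization tables, which the paper does not specify, and entropy coding is omitted.

```python
import math

N = 8  # block size used by the scheme

def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 conversion as in JFIF (assumed; the paper's matrix is not given)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr

def dct2(block):
    """Orthonormal 2-D DCT-II of an N x N block (direct O(N^4) form, kept simple for clarity)."""
    def scale(k):
        return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)
    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            acc = 0.0
            for x in range(N):
                for y in range(N):
                    acc += (block[x][y]
                            * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                            * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = scale(u) * scale(v) * acc
    return out

def edge_strength(block):
    """Mean Sobel gradient magnitude over the interior pixels of one block."""
    total = 0.0
    for i in range(1, N - 1):
        for j in range(1, N - 1):
            gx = (block[i - 1][j + 1] + 2 * block[i][j + 1] + block[i + 1][j + 1]
                  - block[i - 1][j - 1] - 2 * block[i][j - 1] - block[i + 1][j - 1])
            gy = (block[i + 1][j - 1] + 2 * block[i + 1][j] + block[i + 1][j + 1]
                  - block[i - 1][j - 1] - 2 * block[i - 1][j] - block[i - 1][j + 1])
            total += math.hypot(gx, gy)
    return total / ((N - 2) ** 2)

# Hypothetical values chosen for illustration only.
EDGE_THRESHOLD = 50.0  # blocks above this are treated as edge blocks
FINE_STEP = 4.0        # small step: finer quantization for edge blocks
COARSE_STEP = 32.0     # large step: coarser quantization for non-edge blocks

def quantize_block(block):
    """Classify one 8 x 8 block of samples in [0, 255], then quantize its DCT coefficients."""
    is_edge = edge_strength(block) > EDGE_THRESHOLD
    step = FINE_STEP if is_edge else COARSE_STEP
    shifted = [[p - 128.0 for p in row] for row in block]  # level shift, as in JPEG
    coeffs = dct2(shifted)
    return is_edge, [[round(c / step) for c in row] for row in coeffs]
```

Calling `quantize_block` on each 8 × 8 block of the Y, Cb, and Cr planes yields the integer coefficients that would then be passed to run-length and Huffman coding. A production encoder would replace the direct DCT with a fast factorization and the scalar step sizes with full 8 × 8 tables.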

Experimental validation uses standard test images such as Lena, Barbara, and Peppers. The authors compare their method against a baseline JPEG implementation, reporting an average PSNR gain of about 1.5 dB and a compression‑ratio improvement of roughly 20 %. Visual inspection is claimed to show better edge preservation and reduced block artifacts in smooth areas. The paper concludes that the proposed edge‑aware quantization yields “less distorted” reconstructions while achieving higher compression factors.
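For reference, the two reported metrics can be computed as in the sketch below. It assumes 8-bit samples (peak value 255) supplied as flat sequences and defines the compression ratio as original size divided by compressed size; the paper's exact definitions are not stated in this summary, and the function names are illustrative.

```python
import math

def psnr(original, reconstructed, peak=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length sample sequences."""
    if len(original) != len(reconstructed) or len(original) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(peak ** 2 / mse)

def compression_ratio(original_bytes, compressed_bytes):
    """Size of the uncompressed data divided by the size of the compressed bitstream."""
    if compressed_bytes <= 0:
        raise ValueError("compressed size must be positive")
    return original_bytes / compressed_bytes
```

Under these definitions, a 512 × 512 RGB image (786,432 bytes uncompressed) stored in 65,536 bytes has a compression ratio of 12, and a uniform error of 10 gray levels on every sample gives a PSNR of about 28.1 dB.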

While the concept of adaptive quantization based on spatial content is well‑established, the paper’s contribution lies primarily in its specific implementation details and reported performance gains. Several methodological concerns merit attention. The edge‑strength threshold is fixed and not adaptively tuned per image; this could lead to suboptimal classification for images with differing contrast or texture characteristics. The design of the two quantization tables is not described in depth, making it difficult for other researchers to reproduce the results or to assess whether the reported gains stem from the edge classification or simply from a more aggressive quantization scheme. Moreover, the evaluation is limited to PSNR and compression ratio; modern image quality assessment typically includes SSIM, MS‑SSIM, or subjective mean opinion scores (MOS), which are absent here. The comparison also omits contemporary formats such as WebP, AVIF, or HEIC, whose codecs combine DCT‑family transforms with intra prediction and could provide a more realistic benchmark.

From a computational standpoint, the added Sobel filtering and block classification introduce modest overhead, but the overall complexity remains linear with image size, preserving the feasibility of real‑time implementation on existing hardware accelerators that already support 8 × 8 DCT. However, the paper does not discuss mitigation of potential block‑boundary artifacts that may become more pronounced when non‑edge blocks are heavily quantized; techniques such as deblocking filters or post‑processing dithering are not considered.

In summary, the study presents a straightforward yet effective modification to the classic DCT‑based compression pipeline by applying different quantization strengths to edge and non‑edge blocks. The reported PSNR and compression‑ratio improvements suggest that the approach can yield higher visual fidelity for a given bitrate, especially in images where edge preservation is critical. Nonetheless, the lack of detailed quantization design, adaptive thresholding, broader quality metrics, and comparison with state‑of‑the‑art codecs limits the generalizability of the findings. Future work should address these gaps, explore dynamic threshold selection, integrate advanced entropy coding, and evaluate the method on a wider variety of image content and modern benchmark datasets.