Low-Rank Matrix Approximation with Weights or Missing Data is NP-hard

Weighted low-rank approximation (WLRA), a dimensionality reduction technique for data analysis, has been successfully used in several applications, such as in collaborative filtering to design recommender systems or in computer vision to recover structure from motion. In this paper, we study the computational complexity of WLRA and prove that it is NP-hard to find an approximate solution, even when a rank-one approximation is sought. Our proofs are based on a reduction from the maximum-edge biclique problem, and apply to strictly positive weights as well as binary weights (the latter corresponding to low-rank matrix approximation with missing data).
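As a minimal sketch of the problem described above, WLRA is commonly written as minimizing the weighted squared error sum_ij W_ij (M_ij - (U V^T)_ij)^2 over factors U and V of the chosen rank. That formulation and the names `wlra_objective` and `rank_one_svd` are assumptions made for illustration and are not taken from the paper. The snippet only evaluates the objective and computes the unweighted rank-one baseline by truncated SVD; it does not attempt to solve the weighted problem, which the paper proves NP-hard.

```python
import numpy as np


def wlra_objective(M, W, U, V):
    """Weighted squared error sum_ij W_ij * (M_ij - (U V^T)_ij)^2.

    M, W: (m, n) arrays of data and nonnegative weights (hypothetical names).
    U: (m, r) and V: (n, r) factor matrices.
    A binary W (0 = missing, 1 = observed) gives the missing-data case.
    """
    residual = M - U @ V.T
    return float(np.sum(W * residual**2))


def rank_one_svd(M):
    """Best rank-one approximation for the unweighted case (all weights equal).

    Returns column vectors u (m, 1) and v (n, 1) with u @ v.T the
    rank-one truncated SVD of M.
    """
    U, s, Vt = np.linalg.svd(M, full_matrices=False)
    u = U[:, :1] * s[0]   # leading left singular vector, scaled by sigma_1
    v = Vt[:1, :].T       # leading right singular vector as a column
    return u, v
```

With binary weights, entries where `W` is zero contribute nothing to the objective, so they can hold arbitrary values without changing the error of a given factorization.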

💡 Research Summary

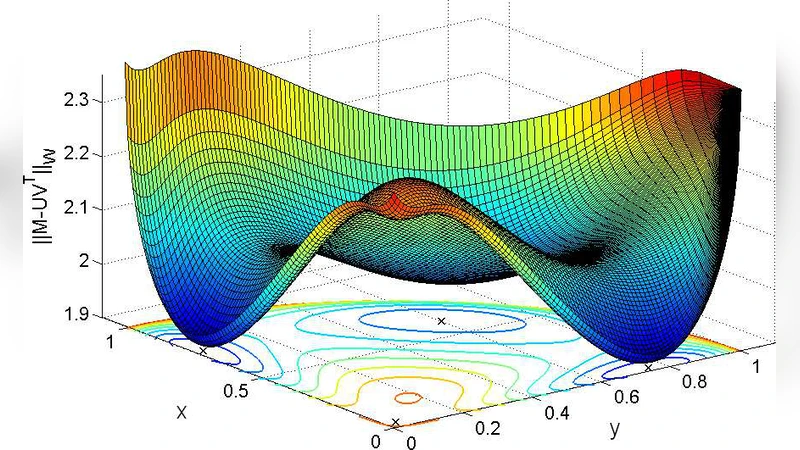

The paper investigates the computational complexity of Weighted Low‑Rank Approximation (WLRA), a fundamental tool for dimensionality reduction when data entries have heterogeneous reliability or are partially missing. While the unweighted low‑rank approximation problem can be solved optimally in polynomial time via singular value decomposition, introducing element‑wise weights makes the problem NP‑hard, even when only a rank‑one approximation is sought. The authors focus on two weight regimes: (i) strictly positive real weights (e.g., all entries lie in a bounded interval such as
