Comparison of different T-norm operators in classification problems

Fuzzy rule based classification systems are one of the most popular fuzzy modeling systems used in pattern classification problems. This paper investigates the effect of applying nine different T-norms in fuzzy rule based classification systems. In the recent researches, fuzzy versions of confidence and support merits from the field of data mining have been widely used for both rules selecting and weighting in the construction of fuzzy rule based classification systems. For calculating these merits the product has been usually used as a T-norm. In this paper different T-norms have been used for calculating the confidence and support measures. Therefore, the calculations in rule selection and rule weighting steps (in the process of constructing the fuzzy rule based classification systems) are modified by employing these T-norms. Consequently, these changes in calculation results in altering the overall accuracy of rule based classification systems. Experimental results obtained on some well-known data sets show that the best performance is produced by employing the Aczel-Alsina operator in terms of the classification accuracy, the second best operator is Dubois-Prade and the third best operator is Dombi. In experiments, we have used 12 data sets with numerical attributes from the University of California, Irvine machine learning repository (UCI).

💡 Research Summary

This paper investigates how the choice of T‑norm operator influences the performance of fuzzy rule‑based classification systems (FRBCS). In a typical FRBCS, fuzzy rules are generated from fuzzified input attributes, then selected and weighted based on two data‑mining inspired merit measures: confidence (how often a rule predicts the correct class) and support (how many instances the rule covers). Traditionally, both measures are computed using the product T‑norm, which simply multiplies the membership values of antecedent conditions. While computationally convenient, the product can excessively suppress small membership values, potentially discarding useful information in noisy or highly uncertain data.

To explore alternatives, the authors replace the product with nine different T‑norms: Aczel‑Alsina, Dubois‑Prade, Dombi, Hamacher, Schweizer‑Sklar, Yager, Łukasiewicz, Nilsson, and the standard product. Each operator defines a distinct way of aggregating fuzzy antecedents, ranging from smooth exponential decay (Aczel‑Alsina) to conservative minimum‑based intersections (Dubois‑Prade) and parameter‑controlled families (Dombi, Schweizer‑Sklar). The study evaluates how these operators affect rule selection, rule weighting, and ultimately classification accuracy.

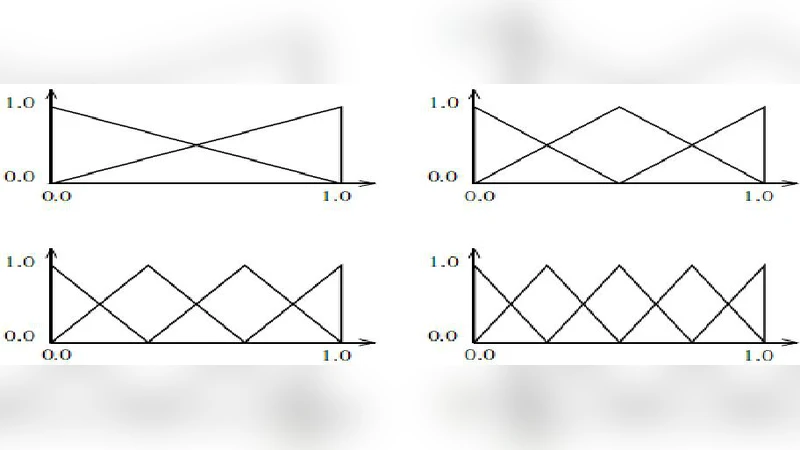

The experimental protocol uses twelve well‑known numeric data sets from the UCI Machine Learning Repository. All attributes are fuzzified with five triangular or Gaussian membership functions, and a full rule base is generated by exhaustive combination of antecedents. For each rule, confidence and support are recomputed using each T‑norm, and rules that exceed predefined thresholds are retained. The retained rules are then weighted according to the same T‑norm‑based merit values, and classification of test instances is performed by a weighted sum of rule activations. Ten‑fold cross‑validation is employed to obtain reliable accuracy estimates.

Results show a clear ranking of T‑norms. The Aczel‑Alsina operator yields the highest average classification accuracy across all data sets. Its exponential formulation preserves low membership values better than the product, allowing the system to exploit subtle fuzzy information. The Dubois‑Prade operator ranks second; its conservative minimum‑maximum interpolation provides robustness in noisy environments. The Dombi operator, with its tunable λ parameter, occupies the third position, demonstrating that parameterized T‑norms can adapt to varying data characteristics. In contrast, the standard product operator performs worse on several data sets, confirming that the naïve multiplication of memberships is not universally optimal. Other operators (Hamacher, Schweizer‑Sklar, Yager, etc.) achieve competitive results on specific data sets but do not surpass the top three in overall performance.

The study highlights several important implications. First, the choice of T‑norm is a critical design decision in FRBCS, directly affecting rule evaluation and system accuracy. Second, no single T‑norm dominates across all problem domains; practitioners should consider dataset properties (noise level, distribution of membership values) and possibly conduct a preliminary T‑norm comparison before finalizing a model. Third, parameterized T‑norms offer an additional degree of freedom; systematic tuning of parameters such as λ (Dombi) or p (Schweizer‑Sklar) could further improve performance, suggesting a fruitful avenue for automated hyper‑parameter optimization.

Limitations of the work include the exclusive use of numeric attributes and a uniform fuzzification scheme, which may not capture the best possible membership functions for each data set. Moreover, the experiments do not explore categorical data or high‑dimensional feature spaces, leaving open questions about generalizability. Future research directions proposed by the authors involve (a) experimenting with diverse membership function families and adaptive fuzzification, (b) integrating automatic T‑norm parameter optimization (e.g., via evolutionary algorithms), and (c) combining FRBCS with deep learning feature extractors to create hybrid models where T‑norm selection could be learned jointly with representation learning.

In conclusion, the paper provides empirical evidence that alternative T‑norms, particularly Aczel‑Alsina, Dubois‑Prade, and Dombi, can substantially improve the classification accuracy of fuzzy rule‑based systems compared to the conventional product T‑norm. This insight encourages researchers and practitioners to revisit the underlying fuzzy aggregation operators when designing FRBCS, opening the door to more robust and accurate fuzzy classifiers.