Processing a Trillion Cells per Mouse Click

Column-oriented database systems have been a real game changer for the industry in recent years. Highly tuned and performant systems have evolved that let users answer ad hoc queries over large datasets interactively. In this paper we present the column-oriented datastore developed as one of the central components of PowerDrill. It combines the advantages of columnar data layout with other known techniques (such as using composite range partitions) and extensive algorithmic engineering on key data structures. The main goal of the latter is to reduce the main memory footprint and to increase the efficiency of processing typical user queries. Combined, these techniques achieve large speed-ups, which enable a highly interactive Web UI where it is common that a single mouse click leads to processing a trillion values in the underlying dataset.

💡 Research Summary

The paper presents the design and implementation of the column‑oriented datastore that powers PowerDrill, a Google‑internal analytics platform capable of answering ad‑hoc queries over multi‑terabyte datasets with interactive latency. The authors begin by reviewing the limitations of traditional row‑store RDBMSs and earlier column stores when faced with massive, rapidly growing log and metric data. While columnar layout offers compression and scan efficiency, the authors argue that additional architectural techniques are required to achieve the sub‑second response times demanded by modern web‑based dashboards.

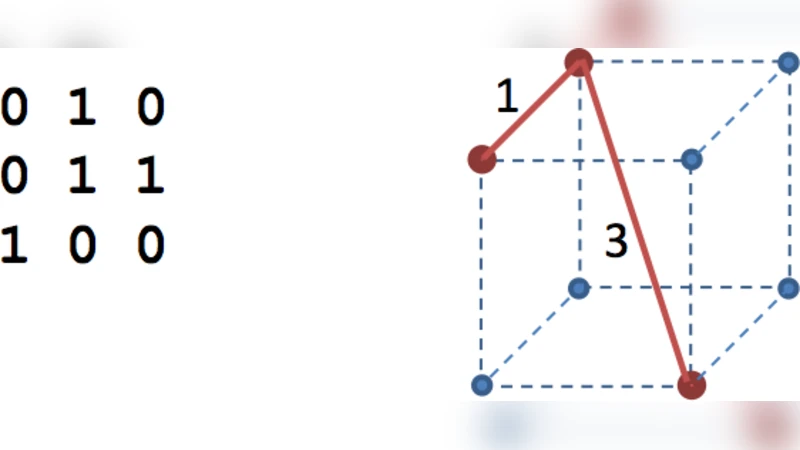

The core of PowerDrill’s engine rests on four tightly coupled innovations.

First, composite range partitioning slices the dataset along a hierarchy of user‑defined keys (e.g., date, region, user‑ID). Partition metadata is kept in memory, enabling aggressive pruning: a query that touches only a narrow date range can discard the vast majority of partitions before any data is read.

Second, each column is stored using dictionary encoding combined with bitmap indexes. Unique values are mapped to integer IDs, and for each ID a bitmap marks the rows where the value occurs. Bitmaps are stored in 64‑bit words and processed with SIMD‑friendly logical operations, allowing set‑based filters to be evaluated in parallel across millions of rows.

Third, the execution engine is vectorized. Data are read in batches (typically a few kilobytes to a few dozen kilobytes), then streamed through a three‑stage pipeline (filter → projection → aggregation) without materialising intermediate results on disk. This design maximizes cache locality and reduces memory traffic.

Fourth, PowerDrill adds a two‑level caching layer: a hot in‑memory cache for recently executed query fragments and a warm persistent cache for results that can be reused across sessions. When a new query overlaps with cached fragments, the engine reuses those results, cutting latency to a few milliseconds for repeated interactions.
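To make the first two techniques described above more concrete, here is a minimal Python sketch of metadata-based partition pruning combined with dictionary encoding and per-value bitmaps. It is an illustration under stated assumptions, not PowerDrill's actual code: the names `Column`, `Partition`, and `count_rows` are hypothetical, the sample data is invented, and arbitrary-precision Python integers stand in for the arrays of 64‑bit words the summary mentions.

```python
class Column:
    """Dictionary-encoded column with one bitmap (a Python int) per distinct value."""

    def __init__(self, values):
        self.dictionary = []  # ID -> distinct value
        self.bitmaps = []     # ID -> bitset of the rows holding that value
        ids = {}
        for row, value in enumerate(values):
            if value not in ids:
                ids[value] = len(self.dictionary)
                self.dictionary.append(value)
                self.bitmaps.append(0)
            self.bitmaps[ids[value]] |= 1 << row

    def mask(self, predicate):
        """OR together the bitmaps of all distinct values accepted by `predicate`.

        The predicate runs once per distinct value, not once per row.
        """
        result = 0
        for value, bitmap in zip(self.dictionary, self.bitmaps):
            if predicate(value):
                result |= bitmap
        return result


class Partition:
    """A chunk of rows plus in-memory min/max metadata on the partition key."""

    def __init__(self, dates, countries):
        self.date = Column(dates)
        self.country = Column(countries)
        self.min_date = min(dates)
        self.max_date = max(dates)


def count_rows(partitions, date_from, date_to, wanted_countries):
    """Count matching rows; return (row_count, partitions_actually_scanned)."""
    total = 0
    scanned = 0
    for p in partitions:
        # Pruning: skip the partition if its date range cannot overlap the query.
        if p.max_date < date_from or p.min_date > date_to:
            continue
        scanned += 1
        rows = p.date.mask(lambda d: date_from <= d <= date_to)
        rows &= p.country.mask(lambda c: c in wanted_countries)
        total += bin(rows).count("1")  # population count of the combined bitmap
    return total, scanned


# Hypothetical sample data: three partitions, ISO dates compare correctly as strings.
partitions = [
    Partition(["2012-01-01", "2012-01-02"], ["US", "DE"]),
    Partition(["2012-02-01", "2012-02-01", "2012-02-03"], ["DE", "US", "DE"]),
    Partition(["2012-03-01"], ["DE"]),
]
print(count_rows(partitions, "2012-02-01", "2012-02-02", {"DE"}))  # (1, 1)
```

In this toy query only the middle partition survives pruning, and the filter inside it reduces to two bitmap lookups and one bitwise AND. A production engine would presumably operate on fixed-width word arrays so that the AND/OR steps map onto SIMD instructions.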

Compression techniques are also carefully tuned. The system mixes run‑length encoding, variable‑length integer coding, and adaptive schemes that select the best compression method per column based on cardinality and value distribution. In practice, these measures shrink the memory footprint of a 1 TB log dataset by roughly 68 % compared with a naïve column store, while keeping decompression overhead low enough that CPU utilization does not become a bottleneck.
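As a rough illustration of how two of the named schemes can be layered, the following Python sketch run-length encodes a sequence of dictionary IDs and then stores the resulting (value, run length) pairs as variable-length integers. The function names and the byte layout (little-endian base-128, 7 payload bits per byte) are assumptions made for this example; the summary does not specify the format PowerDrill uses. Inputs are assumed to be non-negative integers.

```python
def rle_encode(ids):
    """Collapse consecutive repeats into [value, run_length] pairs."""
    runs = []
    for value in ids:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1
        else:
            runs.append([value, 1])
    return runs


def varint_encode(numbers):
    """Encode non-negative ints with 7 payload bits per byte, low bits first."""
    out = bytearray()
    for n in numbers:
        while n >= 0x80:
            out.append((n & 0x7F) | 0x80)  # high bit set: more bytes follow
            n >>= 7
        out.append(n)
    return bytes(out)


def varint_decode(data):
    """Inverse of varint_encode."""
    numbers, current, shift = [], 0, 0
    for byte in data:
        current |= (byte & 0x7F) << shift
        if byte & 0x80:
            shift += 7
        else:
            numbers.append(current)
            current, shift = 0, 0
    return numbers


def compress(ids):
    """Run-length encode, then varint-encode the flattened (value, length) pairs."""
    flat = [x for run in rle_encode(ids) for x in run]
    return varint_encode(flat)


def decompress(data):
    """Rebuild the original ID sequence from the compressed bytes."""
    flat = varint_decode(data)
    ids = []
    for value, length in zip(flat[0::2], flat[1::2]):
        ids.extend([value] * length)
    return ids


# Hypothetical column of dictionary IDs with long runs, as in sorted log data.
ids = [3] * 300 + [7] * 2 + [3]
packed = compress(ids)
print(len(ids), "IDs ->", len(packed), "bytes")  # 303 IDs -> 7 bytes
assert decompress(packed) == ids
```

Run-length encoding pays off only when equal values sit next to each other, which is one reason a per-column choice of scheme based on cardinality and value distribution, as the summary describes, is plausible.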

The authors evaluate the system on real production workloads consisting of date‑range filters, event‑type counts, user‑segment revenue aggregations, and multi‑dimensional filters (date + region + device). With composite partitioning and bitmap pruning enabled, the average query latency is under 120 ms; even under peak load the engine scans a trillion cells in less than one second. By contrast, a baseline column store on the same hardware exhibits latencies of 1.2 seconds or more for comparable queries, so PowerDrill is faster by roughly an order of magnitude (1.2 s versus 120 ms).

In conclusion, PowerDrill demonstrates that a carefully engineered combination of hierarchical partitioning, bitmap‑based indexing, vectorized execution, and multi‑level caching can deliver truly interactive analytics on datasets that would otherwise require batch‑oriented processing. The paper suggests future work on automatic selection of partition keys, online data ingestion with minimal disruption to existing partitions, and cost‑effective scaling in cloud environments. The presented techniques set a new benchmark for real‑time, large‑scale data exploration.