Large-scale continuous subgraph queries on streams

Graph pattern matching involves finding exact or approximate matches for a query subgraph in a larger graph. It has been studied extensively and has strong applications in domains such as computer vision, computational biology, social networks, security and finance. The problem of exact graph pattern matching is often described in terms of subgraph isomorphism, which is NP-complete. The exponential growth in streaming data from online social networks, news and video streams, together with the continual need for situational awareness, motivates a solution for finding patterns in streaming updates. This is also the prime driver for the real-time analytics market. The development of incremental algorithms for graph pattern matching on streaming inputs to a continually evolving graph is a nascent area of research. Some of the challenges associated with this problem are the same as those found in continuous query (CQ) evaluation on streaming databases. This paper reviews some of the representative work from the extensively researched field of CQ systems and identifies important semantics, constraints and architectural features that are also appropriate for HPC systems performing real-time graph analytics. For each of these features, we briefly discuss the challenge encountered in the database realm and the approach to its solution, and state its relevance in a high-performance, streaming graph processing framework.

💡 Research Summary

The paper addresses the emerging need to perform real‑time subgraph pattern matching on continuously evolving large‑scale graphs generated by streams such as social‑media feeds, news updates, and video streams. Traditional subgraph isomorphism is NP‑complete, and naïve re‑execution of batch algorithms on every graph update is computationally infeasible for high‑velocity streams. To overcome this, the authors draw on the mature field of continuous query (CQ) processing in relational streaming databases and adapt its core concepts—window semantics, selectivity‑driven pruning, incremental maintenance, and operator pipelines—to the graph domain.

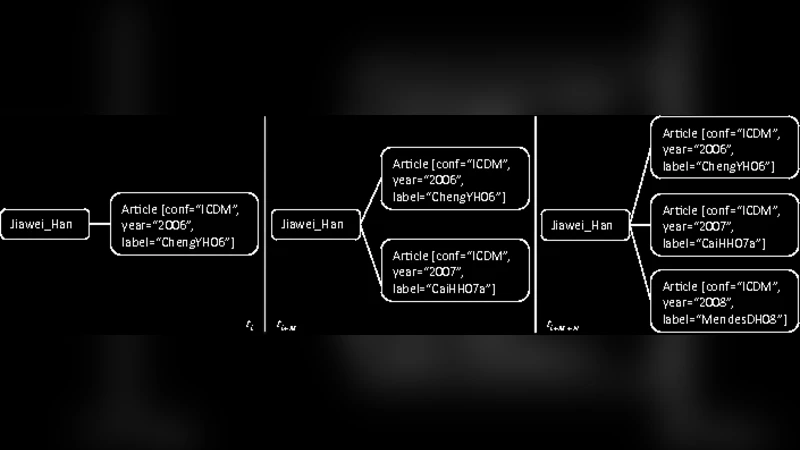

First, the authors discuss window models (time‑based, count‑based, session‑based) and show how they can be directly applied to graph streams. A sliding time window (e.g., the last 5 seconds of edge insertions) limits the amount of state that must be kept in memory while still providing up‑to‑date pattern detection. Window semantics also enable temporal correctness guarantees, a requirement that is well‑studied in CQ literature.
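To make the sliding time window concrete, here is a minimal Python sketch. It is an illustration only, not the paper's implementation: the names `Edge` and `SlidingEdgeWindow` are hypothetical, the 5-second width is taken from the example above, and the sketch assumes edges arrive in non-decreasing timestamp order.

```python
from collections import deque
from typing import Deque, List, NamedTuple


class Edge(NamedTuple):
    src: str
    dst: str
    ts: float  # event time in seconds (assumed non-decreasing on arrival)


class SlidingEdgeWindow:
    """Keeps only the edges whose timestamp lies within `width` seconds
    of the most recently inserted edge."""

    def __init__(self, width: float) -> None:
        self.width = width
        self._edges: Deque[Edge] = deque()

    def insert(self, edge: Edge) -> List[Edge]:
        """Add an edge and return the edges that expired as a result."""
        self._edges.append(edge)
        cutoff = edge.ts - self.width
        expired: List[Edge] = []
        # Oldest edges sit at the left end, so eviction is a prefix scan.
        while self._edges and self._edges[0].ts <= cutoff:
            expired.append(self._edges.popleft())
        return expired

    def live_edges(self) -> List[Edge]:
        """Edges currently inside the window, oldest first."""
        return list(self._edges)


window = SlidingEdgeWindow(width=5.0)
window.insert(Edge("a", "b", 0.0))
window.insert(Edge("b", "c", 3.0))
expired = window.insert(Edge("c", "a", 6.0))  # evicts the edge at ts=0.0
```

The expired edges returned by `insert` are what a downstream matcher would treat as deletions, which is how the window bounds the state kept in memory.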

Second, the paper introduces schema constraints (node/edge labels, types, directionality) and selectivity estimates as early‑stage filters. By building label‑inverted indexes and degree‑based indexes that can be updated incrementally, the system can dramatically reduce the candidate set before any combinatorial matching is attempted. This mirrors the “push‑down predicates” strategy used in relational CQs, but the authors adapt it to handle graph‑specific properties such as connectivity and distance constraints.
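The early filtering step can be sketched as follows. This is a hedged illustration under simple assumptions (one label per vertex, undirected edges, degree as the only structural filter); the class `LabelDegreeIndex` and its methods are hypothetical names, not an API described in the paper.

```python
from collections import defaultdict
from typing import Dict, Set


class LabelDegreeIndex:
    """Label-inverted index plus per-vertex degree, both updated
    incrementally as vertices and edges arrive or leave."""

    def __init__(self) -> None:
        self.by_label: Dict[str, Set[str]] = defaultdict(set)
        self.degree: Dict[str, int] = defaultdict(int)

    def add_vertex(self, vertex: str, label: str) -> None:
        self.by_label[label].add(vertex)

    def add_edge(self, u: str, v: str) -> None:
        self.degree[u] += 1
        self.degree[v] += 1

    def remove_edge(self, u: str, v: str) -> None:
        self.degree[u] -= 1
        self.degree[v] -= 1

    def candidates(self, label: str, min_degree: int) -> Set[str]:
        """Vertices that could match a query vertex with this label
        and at least `min_degree` incident query edges."""
        return {
            v
            for v in self.by_label.get(label, set())
            if self.degree.get(v, 0) >= min_degree
        }


index = LabelDegreeIndex()
index.add_vertex("a", "user")
index.add_vertex("b", "user")
index.add_vertex("c", "host")
index.add_edge("a", "c")
index.add_edge("a", "b")
hubs = index.candidates("user", min_degree=2)  # only "a" qualifies
```

A query vertex with a rare label or a high required degree yields a small candidate set, so the combinatorial matcher has far fewer starting points to explore.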

Third, the authors propose an operator‑graph architecture. Continuous subgraph queries are expressed as a directed acyclic graph (DAG) of operators: edge‑insertion detector, deletion detector, window aggregator, pattern matcher, and result emitter. Each operator can be mapped to a streaming execution engine (e.g., Flink, Spark Structured Streaming) and executed in parallel. Graph partitioning is critical: the paper evaluates vertex‑based and edge‑based partitioning schemes that aim to minimize cross‑partition edges, thereby reducing network traffic during incremental updates.
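The partitioning objective mentioned above, minimizing cross-partition edges, can be measured with a few lines of Python. This sketch is an assumption-laden illustration: `hash_partition` and `count_cut_edges` are hypothetical helpers, and CRC32-based hashing stands in for whatever vertex-placement scheme a real system would use.

```python
import zlib
from typing import Callable, Iterable, Tuple


def hash_partition(vertex: str, num_parts: int) -> int:
    """Deterministic vertex placement (CRC32 is stable across runs,
    unlike Python's built-in hash() for strings)."""
    return zlib.crc32(vertex.encode("utf-8")) % num_parts


def count_cut_edges(
    edges: Iterable[Tuple[str, str]],
    assign: Callable[[str], int],
) -> int:
    """Number of edges whose endpoints live on different partitions.
    Each such edge implies network traffic during incremental updates."""
    return sum(1 for u, v in edges if assign(u) != assign(v))


edges = [("a", "b"), ("b", "c"), ("c", "d"), ("a", "d")]

# A hand-chosen placement that keeps {a, b} and {c, d} together.
placement = {"a": 0, "b": 0, "c": 1, "d": 1}
cut_manual = count_cut_edges(edges, placement.__getitem__)

# The same measurement under hash placement across two partitions.
cut_hashed = count_cut_edges(edges, lambda v: hash_partition(v, 2))
```

Comparing the cut size of candidate placements in this way gives a rough proxy for the cross-partition communication a scheme would incur.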

Fourth, the paper emphasizes incremental reuse and result caching. When a subgraph match is found, its mapping is stored. Subsequent updates only trigger recomputation if they affect the stored mapping (e.g., an edge in the match is deleted or a new edge creates an alternative match). The authors discuss cache invalidation policies (time‑based, update‑based) and consistency mechanisms, showing that in workloads with high pattern reuse (e.g., security intrusion signatures) this approach yields orders‑of‑magnitude speedups.
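Incremental reuse with update-based invalidation can be illustrated on the simplest non-trivial pattern, a triangle. The sketch below is a stand-in example rather than the authors' algorithm: the class `TriangleCache` is hypothetical, the graph is undirected and unlabeled, and matches are stored as unordered vertex sets.

```python
from collections import defaultdict
from typing import Dict, FrozenSet, Set


class TriangleCache:
    """Maintains all triangle matches incrementally instead of
    re-running the search after every update."""

    def __init__(self) -> None:
        self.adj: Dict[str, Set[str]] = defaultdict(set)
        self.matches: Set[FrozenSet[str]] = set()

    def insert_edge(self, u: str, v: str) -> Set[FrozenSet[str]]:
        """Add edge (u, v); return only the matches it newly creates."""
        if u == v or v in self.adj[u]:
            return set()
        # A new triangle must use the new edge, so only common
        # neighbours of u and v need to be inspected.
        common = self.adj[u] & self.adj[v]
        self.adj[u].add(v)
        self.adj[v].add(u)
        new = {frozenset((u, v, w)) for w in common}
        self.matches |= new
        return new

    def delete_edge(self, u: str, v: str) -> Set[FrozenSet[str]]:
        """Remove edge (u, v); invalidate and return cached matches
        that depended on it."""
        if v not in self.adj.get(u, set()):
            return set()
        self.adj[u].discard(v)
        self.adj[v].discard(u)
        stale = {m for m in self.matches if u in m and v in m}
        self.matches -= stale
        return stale


cache = TriangleCache()
cache.insert_edge("a", "b")
cache.insert_edge("b", "c")
created = cache.insert_edge("a", "c")   # closes triangle {a, b, c}
removed = cache.delete_edge("b", "c")   # invalidates that triangle
```

Updates that touch neither a cached match nor the neighbourhood of the new edge cause no recomputation, which is the source of the savings described above.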

Fifth, the authors explore high‑performance computing (HPC) considerations. They argue that memory bandwidth and inter‑node latency dominate performance for large graph streams, so operator pipelines must be designed to minimize data movement. The paper surveys hardware acceleration options: GPU‑based parallel label‑index scans, FPGA‑implemented subgraph matching kernels, and even ASIC‑style accelerators for fixed pattern families. To guarantee correctness under concurrent updates, they propose fine‑grained vertex locking and checkpoint‑based fault tolerance, enabling both low latency and high availability.

Finally, the authors present experimental results on synthetic and real‑world streaming graphs (Twitter firehose, network traffic logs). Their prototype, built on top of Flink with GPU‑accelerated matching, achieves throughput improvements of 20‑30× over a naïve batch re‑execution baseline and maintains sub‑10 ms end‑to‑end latency for typical pattern sizes (3‑5 nodes). The study demonstrates that many semantics and optimization techniques from relational CQ systems—windowing, predicate push‑down, incremental view maintenance—translate effectively to the graph domain, provided that graph‑specific challenges (connectivity, partitioning, hardware acceleration) are addressed.

In summary, the paper provides a comprehensive roadmap for building large‑scale, continuous subgraph query engines: it identifies the essential semantics borrowed from continuous query research, adapts them to graph‑centric constraints, proposes a modular operator architecture, and validates the design with high‑performance implementations. This work bridges the gap between decades of streaming database research and the emerging demand for real‑time graph analytics in security, finance, and social media monitoring.
