Efficient Snapshot Retrieval over Historical Graph Data

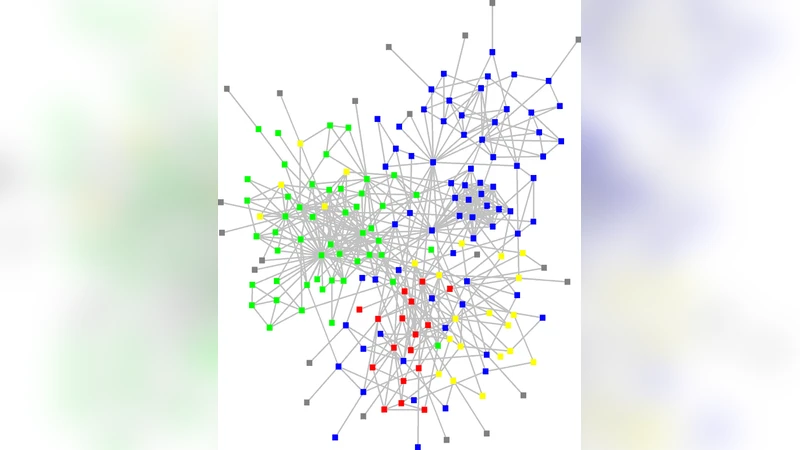

We address the problem of managing historical data for large evolving information networks like social networks or citation networks, with the goal of enabling temporal and evolutionary queries and analysis. We present the design and architecture of a distributed graph database system that stores the entire history of a network and provides support for efficient retrieval of multiple graphs from arbitrary time points in the past, in addition to maintaining the current state for ongoing updates. Our system exposes a general programmatic API to process and analyze the retrieved snapshots. We introduce DeltaGraph, a novel, extensible, highly tunable, and distributed hierarchical index structure that enables compactly recording the historical information, and that supports efficient retrieval of historical graph snapshots for single-site or parallel processing. Along with the original graph data, DeltaGraph can also maintain and index auxiliary information; this functionality can be used to extend the structure to efficiently execute queries like subgraph pattern matching over historical data. We develop analytical models for both the storage space needed and the snapshot retrieval times to aid in choosing the right parameters for a specific scenario. In addition, we present strategies for materializing portions of the historical graph state in memory to further speed up the retrieval process. We also present an in-memory graph data structure called GraphPool that can maintain hundreds of historical graph instances in main memory in a non-redundant manner. We present a comprehensive experimental evaluation that illustrates the effectiveness of our proposed techniques at managing historical graph information.

💡 Research Summary

The paper tackles the challenge of preserving the full history of massive, evolving information networks (such as social or citation graphs) while still supporting fast retrieval of arbitrary past snapshots and ongoing updates. The authors present a distributed graph database architecture that stores every version of a graph and offers a programmatic API for snapshot-based analysis. Central to the system is DeltaGraph, a novel hierarchical index that records changes (deltas) between selected base snapshots in a tunable, multi-level structure. Two key parameters, the number of hierarchy levels (k) and the interval between base snapshots (b), let DeltaGraph trade storage consumption against snapshot retrieval latency. The authors develop analytical models that predict both space usage and query time, enabling users to select suitable settings for a given workload (characterized by update frequency and snapshot request rate).
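The storage-versus-latency trade-off described above can be illustrated with a deliberately simplified, single-level sketch: a full base snapshot is kept every `base_interval` events, and a query replays only the events that follow the nearest earlier base. This is not DeltaGraph's hierarchical structure; the names `SnapshotStore`, `apply_event`, and `base_interval` are hypothetical and chosen only for illustration.

```python
from bisect import bisect_right


def apply_event(edges, event):
    """Apply one (time, op, edge) event to a mutable edge set."""
    _, op, edge = event
    if op == "add":
        edges.add(edge)
    else:  # "del"
        edges.discard(edge)


class SnapshotStore:
    """Assumed single-level scheme: base snapshots plus an event log."""

    def __init__(self, events, base_interval):
        self.events = sorted(events, key=lambda e: e[0])
        self.times = [e[0] for e in self.events]
        self.base_interval = base_interval
        # bases[i] is the edge set after the first i events have been applied.
        self.bases = {0: frozenset()}
        current = set()
        for i, event in enumerate(self.events, start=1):
            apply_event(current, event)
            if i % base_interval == 0:
                self.bases[i] = frozenset(current)

    def snapshot_at(self, t):
        """Return the edge set as of time t (inclusive)."""
        n = bisect_right(self.times, t)  # number of events with time <= t
        start = (n // self.base_interval) * self.base_interval
        edges = set(self.bases[start])
        for event in self.events[start:n]:  # replay at most base_interval - 1 events
            apply_event(edges, event)
        return edges
```

A smaller `base_interval` stores more full snapshots but replays fewer events per query; a larger one does the opposite. The hierarchy in DeltaGraph refines this basic trade-off, which the sketch does not attempt to reproduce.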

DeltaGraph is designed for scalability: each partition of the graph maintains its own sub-tree, and global snapshot reconstruction is performed by fetching and merging the required sub-trees in parallel. To reduce network overhead, the system pre-fetches anticipated deltas and uses a "lazy merge" strategy that postpones the actual composition of base snapshots and deltas until a query arrives. Together, these techniques yield near-linear speed-up with the number of machines in the cluster.
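A minimal sketch of the partition-then-merge idea follows, assuming integer vertex ids and a simple modulo partitioning on the source vertex. Threads stand in for separate machines, and the helpers `build_partitions`, `partial_snapshot`, and `snapshot_at` are hypothetical names, not part of the system's API.

```python
from concurrent.futures import ThreadPoolExecutor


def build_partitions(events, num_partitions):
    """Split a (time, op, (u, v)) event log by source vertex (assumed scheme)."""
    logs = [[] for _ in range(num_partitions)]
    for time, op, (u, v) in sorted(events):
        logs[u % num_partitions].append((time, op, (u, v)))
    return logs


def partial_snapshot(log, t):
    """Rebuild one partition's edge set as of time t by replaying its log."""
    edges = set()
    for time, op, edge in log:
        if time > t:
            break  # the log is time-ordered, so later events can be skipped
        if op == "add":
            edges.add(edge)
        else:
            edges.discard(edge)
    return edges


def snapshot_at(logs, t):
    """Fetch every partition's partial snapshot in parallel, then merge."""
    with ThreadPoolExecutor(max_workers=len(logs)) as pool:
        parts = list(pool.map(lambda log: partial_snapshot(log, t), logs))
    return set().union(*parts)
```

Because each edge lives in exactly one partition under this assumed scheme, the merge step is a plain union with no conflict resolution.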

Complementing the on‑disk index, the authors introduce GraphPool, an in‑memory data structure capable of holding hundreds of historical graph instances without redundancy. GraphPool stores a single copy of each vertex and edge object and represents each snapshot as a set of references plus a small delta metadata block. Version numbers and reference counting manage object lifetimes, while copy‑on‑write ensures that modifications do not corrupt other snapshots. Consequently, memory consumption grows only with the size of the base graph plus the cumulative changes, making it feasible to keep many snapshots resident for parallel analytics such as sub‑graph pattern matching or community detection.
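The non-redundant storage idea can be sketched as follows, under the assumption that each edge is stored once and tagged with an integer bitmask recording which loaded snapshots contain it. The bitmask encoding, the class body, and the method names `load`, `edges`, `release`, and `stored_edges` are illustrative choices, not the authors' implementation.

```python
class GraphPool:
    """Toy pool: one stored copy per edge, shared by all loaded snapshots."""

    def __init__(self):
        self._membership = {}  # edge -> bitmask of snapshot slots containing it
        self._free_slots = []  # slots released by earlier snapshots
        self._next_slot = 0

    def load(self, edges):
        """Overlay a snapshot onto the pool and return its slot number."""
        if self._free_slots:
            slot = self._free_slots.pop()
        else:
            slot = self._next_slot
            self._next_slot += 1
        bit = 1 << slot
        for edge in edges:
            self._membership[edge] = self._membership.get(edge, 0) | bit
        return slot

    def edges(self, slot):
        """Return the edge set of the snapshot held in the given slot."""
        bit = 1 << slot
        return {e for e, mask in self._membership.items() if mask & bit}

    def release(self, slot):
        """Drop a snapshot; edges no other snapshot uses are removed."""
        clear = ~(1 << slot)
        for edge in list(self._membership):
            mask = self._membership[edge] & clear
            if mask:
                self._membership[edge] = mask
            else:
                del self._membership[edge]
        self._free_slots.append(slot)

    def stored_edges(self):
        """Number of distinct edges physically held in the pool."""
        return len(self._membership)
```

Loading two snapshots that share most of their edges adds only the differing edges to the pool, which is the behaviour the paragraph above attributes to GraphPool.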

The system also supports auxiliary information. DeltaGraph can index vertex/edge attributes or even pattern-matching structures, allowing direct execution of temporal queries (e.g., "does pattern P exist at time t?") without first materializing the full snapshot. Experiments on two real-world datasets, a 100-million-node social network and a 20-million-node citation graph, demonstrate that DeltaGraph reduces storage by 30-45% compared with naïve log storage, and that single-snapshot retrieval times drop from seconds to a few milliseconds (12 ms and 9 ms on average, respectively). GraphPool enables simultaneous access to 100 snapshots with less than 1.3× the size of the base graph in memory and sub-30 ms response times. Moreover, queries that combine historical pattern matching with the auxiliary index run 5-7 times faster than the traditional "reconstruct-then-match" pipeline.
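To illustrate how an auxiliary index might answer "does pattern P exist at time t?" without rebuilding a snapshot, the sketch below keeps, for each indexed item, the sorted times at which it appeared or disappeared. This toggle-list layout and the functions `build_toggle_index` and `exists_at` are assumptions made for illustration; the source does not describe the index at this level of detail.

```python
from bisect import bisect_right
from collections import defaultdict


def build_toggle_index(events):
    """Map each key to the sorted times at which its presence flipped.

    events: iterable of (time, op, key) with op in {"add", "del"}.
    Redundant events (adding a present key, deleting an absent one) are ignored.
    """
    index = defaultdict(list)
    for time, op, key in sorted(events, key=lambda e: e[0]):
        present = len(index[key]) % 2 == 1
        if (op == "add") != present:  # record only real state changes
            index[key].append(time)
    return index


def exists_at(index, key, t):
    """True if key is present at time t: an odd number of toggles so far."""
    return bisect_right(index.get(key, []), t) % 2 == 1
```

Each lookup is a binary search over one key's toggle list, so its cost depends on how often that key changed rather than on the size of the graph.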

In summary, the paper provides a comprehensive solution for long‑term, fine‑grained versioning of large graphs, balancing compact storage, rapid snapshot access, and efficient parallel processing. The authors outline future work on dynamic partition rebalancing, machine‑learning‑driven parameter tuning, and extending the index to support more complex temporal graph queries such as time‑interval shortest paths.
