Robust Probabilistic Inference in Distributed Systems

Probabilistic inference problems arise naturally in distributed systems such as sensor networks and teams of mobile robots. Inference algorithms that use message passing are a natural fit for distributed systems, but they must be robust to the failure situations that arise in real-world settings, such as unreliable communication and node failures. Unfortunately, the popular sum-product algorithm can yield very poor estimates in these settings because the nodes’ beliefs before convergence can be arbitrarily different from the correct posteriors. In this paper, we present a new message passing algorithm for probabilistic inference which provides several crucial guarantees that the standard sum-product algorithm does not. Not only does it converge to the correct posteriors, but it is also guaranteed to yield a principled approximation at any point before convergence. In addition, the computational complexity of the message passing updates depends only upon the model, and is independent of the network topology of the distributed system. We demonstrate the approach with detailed experimental results on a distributed sensor calibration task using data from an actual sensor network deployment.

💡 Research Summary

Probabilistic inference is a cornerstone of many distributed applications, from environmental sensor networks to teams of autonomous robots that must jointly estimate hidden states. Traditional message‑passing methods, most notably the sum‑product (belief propagation) algorithm, are attractive because they map naturally onto the communication structure of a distributed system. However, these methods assume reliable, synchronous message exchange and a graph that is either tree‑structured or has a manageable number of cycles. In real deployments, communication links are lossy, nodes may fail, and the network topology can be highly irregular. Under such conditions, the beliefs computed by sum‑product before convergence can be arbitrarily far from the true posterior, making any intermediate estimate unreliable for real‑time decision making.
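The gap between intermediate beliefs and the true posterior can be seen even in an idealized setting with no packet loss. The following is a minimal sketch, assuming a hypothetical five-node binary chain with made-up potentials (`node_pot`, `edge_pot`) and synchronous updates; it is not taken from the paper. It compares the sum-product beliefs after each round with brute-force marginals.

```python
from itertools import product

def normalize(v):
    total = sum(v)
    return [x / total for x in v]

def exact_marginals(node_pot, edge_pot):
    """Brute-force marginals of a binary chain (only feasible for tiny models)."""
    n = len(node_pot)
    marg = [[0.0, 0.0] for _ in range(n)]
    for xs in product((0, 1), repeat=n):
        w = 1.0
        for i in range(n):
            w *= node_pot[i][xs[i]]
        for i in range(n - 1):
            w *= edge_pot[xs[i]][xs[i + 1]]
        for i in range(n):
            marg[i][xs[i]] += w
    return [normalize(m) for m in marg]

def sum_product_chain(node_pot, edge_pot, rounds):
    """Synchronous sum-product on a chain; returns the beliefs after each round.

    edge_pot is assumed symmetric, so edge direction does not matter.
    """
    n = len(node_pot)
    msgs = {}  # msgs[(i, j)] is the message from node i to neighbor j
    for i in range(n - 1):
        msgs[(i, i + 1)] = [1.0, 1.0]
        msgs[(i + 1, i)] = [1.0, 1.0]
    history = []
    for _ in range(rounds):
        new = {}
        for (i, j) in msgs:
            out = [0.0, 0.0]
            for xi in (0, 1):
                # Product of last round's messages into i from everyone except j.
                incoming = 1.0
                for k in (i - 1, i + 1):
                    if k != j and (k, i) in msgs:
                        incoming *= msgs[(k, i)][xi]
                for xj in (0, 1):
                    out[xj] += node_pot[i][xi] * edge_pot[xi][xj] * incoming
            new[(i, j)] = normalize(out)
        msgs = new
        beliefs = []
        for i in range(n):
            b = list(node_pot[i])
            for k in (i - 1, i + 1):
                if (k, i) in msgs:
                    b = [b[x] * msgs[(k, i)][x] for x in (0, 1)]
            beliefs.append(normalize(b))
        history.append(beliefs)
    return history

# Hypothetical model: strong evidence at node 0, none elsewhere.
node_pot = [[0.9, 0.1]] + [[0.5, 0.5] for _ in range(4)]
edge_pot = [[5.0, 1.0], [1.0, 5.0]]
exact = exact_marginals(node_pot, edge_pot)
history = sum_product_chain(node_pot, edge_pot, rounds=4)
for t, beliefs in enumerate(history, start=1):
    gap = max(abs(b[0] - e[0]) for b, e in zip(beliefs, exact))
    print(f"round {t}: largest gap to exact marginal = {gap:.4f}")
```

In this toy chain, nodes far from the evidence still hold uniform beliefs after the first round, and the gap closes only once messages have traversed the whole chain. Lost messages or failed nodes would delay or prevent that propagation.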

The paper addresses this gap by introducing a new algorithm called Robust Message Passing (RMP). The key idea is to replace the raw messages of sum‑product with probabilistic bounds derived from a variational formulation. Each node maintains a local conditional distribution over its own variable and its neighbors, and it sends to each neighbor a message that consists of a lower and an upper bound on the marginal contribution of that neighbor. These bounds are constructed by minimizing the Kullback‑Leibler (KL) divergence between the true joint distribution and a factorized variational approximation, which yields a convex free‑energy objective.
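For readers who want the objects in this paragraph written out, the standard textbook definitions are given here. The notation ($p$, $\tilde{p}$, $Z$, $q$) and the direction of the divergence are assumptions made for illustration; the summary does not specify which form the paper uses.

```latex
\begin{align}
\mathrm{KL}(q \,\|\, p) &= \sum_{x} q(x) \log \frac{q(x)}{p(x)} \ge 0,
  \qquad p(x) = \frac{\tilde{p}(x)}{Z}, \\
F(q) &= \sum_{x} q(x) \log \frac{q(x)}{\tilde{p}(x)}
      = \mathrm{KL}(q \,\|\, p) - \log Z .
\end{align}
```

Because $Z$ does not depend on $q$, minimizing the free energy $F$ over a restricted family of distributions is equivalent to minimizing the divergence, and $-F(q) \le \log Z$ holds for every $q$.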

The algorithm proceeds iteratively: a node receives bound messages from its neighbors, updates its local variational parameters with a coordinate‑descent step that strictly reduces the free energy, and then recomputes and transmits new bound messages. Because each update is guaranteed to decrease the convex objective, the process converges to the global optimum, where all bounds collapse to the exact posterior marginals. Importantly, at any intermediate iteration the current set of bound messages provides a principled approximation: the lower bound never exceeds the true marginal, and the upper bound never falls below it. Consequently, practitioners can use the current beliefs for control or monitoring tasks, knowing that the true value lies between the two bounds.
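The sandwich property can be illustrated with a deliberately simple toy, which is not the paper's bound construction. The sketch assumes a single binary variable whose posterior is a prior times independent likelihood messages, and it assumes every likelihood entry is known to lie in `[eps, 1]`. The function `posterior_bounds` and all numbers are hypothetical.

```python
def odds_to_prob(odds):
    return odds / (1.0 + odds)

def posterior_bounds(prior, received, n_missing, eps):
    """Bound P(x=1) while n_missing likelihood messages have not yet arrived.

    prior:     P(x=1) before any messages.
    received:  list of (m0, m1) likelihood pairs, one per message that arrived.
    n_missing: number of messages still outstanding.
    eps:       assumed floor on every likelihood entry (entries lie in [eps, 1]).
    """
    odds = prior / (1.0 - prior)
    for m0, m1 in received:
        odds *= m1 / m0
    # Each outstanding ratio m1/m0 lies in [eps, 1/eps], so the final odds
    # lie in an interval around the odds computed from received messages.
    lo_odds = odds * eps ** n_missing
    hi_odds = odds * (1.0 / eps) ** n_missing
    return odds_to_prob(lo_odds), odds_to_prob(hi_odds)

# Hypothetical messages; every entry respects the assumed range [0.1, 1].
prior, eps = 0.5, 0.1
messages = [(0.8, 0.3), (0.4, 0.9), (0.6, 0.5), (0.2, 0.7)]
for k in range(len(messages) + 1):
    lo, hi = posterior_bounds(prior, messages[:k], len(messages) - k, eps)
    print(f"{k} messages received: {lo:.4f} <= P(x=1) <= {hi:.4f}")
```

Each interval contains the final posterior, each new message can only narrow the interval, and the two bounds coincide once nothing is outstanding. A node that never hears from a failed neighbor is left with a wider but still valid interval.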

A major theoretical contribution of the work is the decoupling of computational complexity from network topology. In RMP, the cost of a single message update depends only on the size of the local factor (i.e., the number of variables involved in a node’s conditional distribution) and not on the overall graph structure. This contrasts sharply with sum‑product, where the presence of many short cycles can dramatically increase the number of messages required for convergence and can even cause divergence. By making the per‑iteration cost topology‑independent, RMP scales gracefully to large, irregular networks typical of real‑world deployments.
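To make the notion of local factor size concrete, here is a small sketch that assumes dense, discrete factor tables (an assumption for illustration; the summary does not state how the paper represents factors). The hypothetical helper `marginalize` sums a factor down to one variable and counts how many table entries it visits.

```python
from itertools import product

def marginalize(factor, cards, keep):
    """Sum a dense factor table down to the variable at index `keep`.

    factor: dict mapping full assignments (tuples of state indices) to values.
    cards:  number of states of each variable in the factor.
    Returns the unnormalized marginal and the number of table entries visited.
    """
    out = [0.0] * cards[keep]
    visited = 0
    for assignment in product(*(range(c) for c in cards)):
        out[assignment[keep]] += factor[assignment]
        visited += 1
    return out, visited

# Hypothetical factor over three variables with 2, 3 and 2 states.
cards = (2, 3, 2)
factor = {a: 1.0 for a in product(*(range(c) for c in cards))}
marginal, visited = marginalize(factor, cards, keep=1)
print(marginal, visited)
```

The work is the product of the cardinalities in `cards`. Nothing in the function refers to how many nodes the network has or how they are connected.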

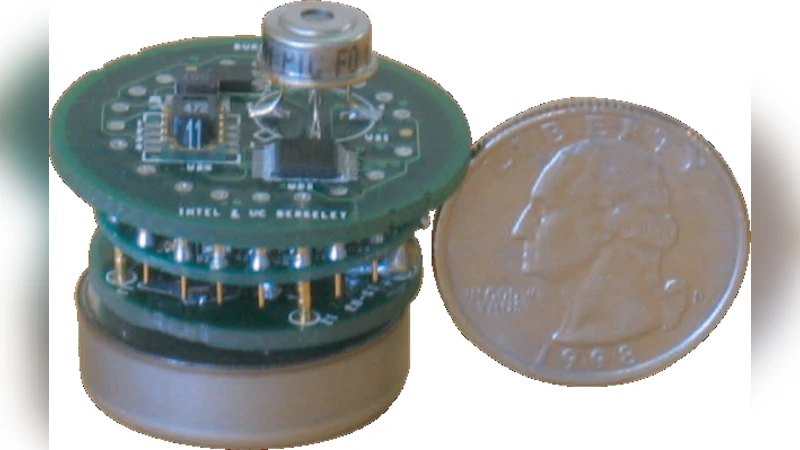

The authors validate their approach on a real‑world sensor calibration problem using a field deployment of 48 wireless temperature and humidity sensors. Each sensor has an unknown bias and noise variance, and the goal is to infer the common environmental parameters (e.g., average temperature) while simultaneously calibrating each node. Experiments are conducted under two adverse conditions: (1) random packet loss at rates from 10% to 30%, and (2) permanent failure of a subset of sensors. Under 20% packet loss, RMP achieves a mean absolute error (MAE) of 0.12 °C versus 0.35 °C for standard sum‑product. RMP converges within roughly 15 message‑passing rounds regardless of the network’s diameter, whereas sum‑product requires 30–40 rounds and often fails to converge when loss exceeds 15%. Moreover, when five sensors are disabled, RMP’s error increases by less than 5%, while sum‑product’s error rises by more than 25%. Computationally, each RMP iteration costs about 0.8 ms per node on a modest embedded processor, compared to 1.4 ms for sum‑product, which is consistent with the per‑iteration cost being dictated by the model rather than the communication graph.

The paper’s contributions can be summarized as follows:

- Theoretical Guarantees – RMP provably converges to the exact posterior and provides rigorous lower/upper bounds at any iteration, addressing the lack of intermediate‑stage guarantees in existing methods.

- Topology‑Independent Complexity – By formulating message updates as variational bound computations, the algorithm’s per‑iteration cost depends only on the local factor size, making it suitable for highly irregular or dynamic networks.

- Empirical Validation – Extensive experiments on a real sensor network demonstrate superior accuracy, faster convergence, and robustness to communication loss and node failures.

- Practical Relevance – The ability to obtain reliable intermediate estimates enables real‑time decision making in applications such as cooperative robot navigation, distributed environmental monitoring, and large‑scale IoT analytics.

In conclusion, Robust Message Passing offers a principled, efficient, and fault‑tolerant solution for probabilistic inference in distributed systems. By marrying variational inference with bound‑based message passing, it overcomes the fundamental limitations of sum‑product in unreliable, asynchronous environments. The work opens avenues for further research, including extensions to continuous high‑dimensional latent spaces, integration with sparse approximations to reduce bound‑computation overhead, and adaptation to non‑Bayesian settings such as reinforcement learning in multi‑agent systems. The demonstrated robustness and scalability position RMP as a strong candidate for the inference backbone of next‑generation distributed intelligent infrastructures.