Memory Efficient De Bruijn Graph Construction

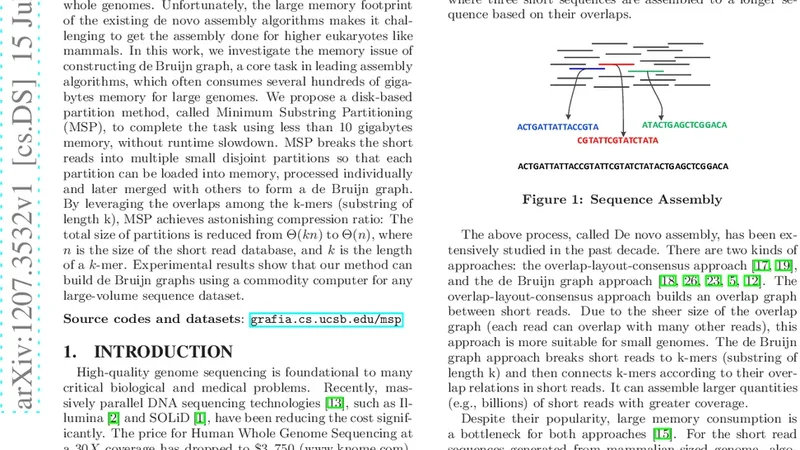

Massively parallel DNA sequencing technologies are revolutionizing genomics research. Billions of short reads generated at low cost can be assembled to reconstruct whole genomes. Unfortunately, the large memory footprint of existing de novo assembly algorithms makes it challenging to complete the assembly for higher eukaryotes such as mammals. In this work, we investigate the memory issue of constructing the de Bruijn graph, a core task in leading assembly algorithms, which often consumes several hundred gigabytes of memory for large genomes. We propose a disk-based partition method, called Minimum Substring Partitioning (MSP), to complete the task using less than 10 gigabytes of memory, without runtime slowdown. MSP breaks the short reads into multiple small disjoint partitions so that each partition can be loaded into memory, processed individually, and later merged with others to form a de Bruijn graph. By leveraging the overlaps among the k-mers (substrings of length k), MSP achieves an astonishing compression ratio: the total size of partitions is reduced from $\Theta(kn)$ to $\Theta(n)$, where $n$ is the size of the short read database and $k$ is the length of a $k$-mer. Experimental results show that our method can build de Bruijn graphs using a commodity computer for any large-volume sequence dataset.

💡 Research Summary

The paper addresses one of the most pressing bottlenecks in modern de novo genome assembly: the memory consumption required to construct a de Bruijn graph from billions of short reads generated by next‑generation sequencing platforms. Traditional assemblers store every k‑mer (a substring of length k) in main memory, typically using hash tables or sorted arrays, and then link overlapping k‑mers to form the graph. This approach leads to a memory footprint that scales as Θ(k n), where n is the total number of nucleotides in the read dataset. For large eukaryotic genomes such as human (≈3 Gbp) the required memory easily exceeds several hundred gigabytes, making assembly on commodity hardware infeasible.

To overcome this limitation, the authors propose Minimum Substring Partitioning (MSP), a disk‑based partitioning scheme that reduces the memory requirement to less than 10 GB while preserving runtime performance. The central idea of MSP is to assign each k‑mer to a “minimum substring” (min‑sub), a short substring of length m (with m < k) that is defined as the lexicographically smallest m‑mer contained within the k‑mer. All k‑mers that share the same min‑sub are placed into the same partition. Because there are at most 4^m distinct min‑subs over the DNA alphabet, far fewer than the 4^k possible k‑mers, the number of partitions stays manageable. And because every k‑mer has exactly one min‑sub, the partitions are disjoint.
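The assignment rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names `min_substring` and `partition_kmers` are hypothetical, partitions are held in a dictionary rather than on disk, and reverse-complement strands are ignored.

```python
from collections import defaultdict


def min_substring(kmer, m):
    """Return the lexicographically smallest m-mer contained in kmer."""
    return min(kmer[i:i + m] for i in range(len(kmer) - m + 1))


def partition_kmers(read, k, m):
    """Group every k-mer of a read by its minimum substring (hypothetical helper)."""
    partitions = defaultdict(list)
    for i in range(len(read) - k + 1):
        kmer = read[i:i + k]
        partitions[min_substring(kmer, m)].append(kmer)
    return dict(partitions)


# Toy read of length 10 with k = 5 and m = 2.
print(partition_kmers("CTGACGTAAT", k=5, m=2))
# {'AC': ['CTGAC', 'TGACG', 'GACGT', 'ACGTA'], 'AA': ['CGTAA', 'GTAAT']}
```

In this toy read, the first four k‑mers all contain "AC" as their smallest 2‑mer and the last two contain "AA", so six k‑mers fall into only two partitions.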

Two key observations enable MSP’s dramatic memory savings. First, within a partition the k‑mers exhibit extensive overlap: consecutive k‑mers from the same read share k − 1 nucleotides and frequently the same min‑sub, so they tend to land in the same partition. By storing only the first full k‑mer and then recording only the one additional base for each subsequent k‑mer, the total size of a partition can be compressed from Θ(k |P|) to Θ(|P|), where |P| is the number of k‑mers in the partition. Second, the total size of all partitions together is Θ(n), i.e., proportional to the size of the original read database, rather than Θ(k n). Consequently, the entire set of partitions can be kept on disk while only a single partition is ever loaded into RAM, keeping peak memory usage bounded by the size of the largest partition (typically well under 10 GB).
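To make the overlap argument concrete, the following sketch merges each run of consecutive k‑mers that share a min‑sub into a single string: a run of j k‑mers then costs k + j − 1 characters instead of j · k. The names `compress_read` and `expand` are hypothetical, and the on-disk encoding used in the paper may differ.

```python
def min_substring(kmer, m):
    """Return the lexicographically smallest m-mer contained in kmer."""
    return min(kmer[i:i + m] for i in range(len(kmer) - m + 1))


def compress_read(read, k, m):
    """Split a read into runs of consecutive k-mers with the same min-sub.

    Each run is returned as (min_sub, merged_string), where merged_string
    is the first k-mer of the run plus one extra base per later k-mer.
    """
    if len(read) < k:
        return []
    runs = []
    start, current = 0, min_substring(read[:k], m)
    for i in range(1, len(read) - k + 1):
        key = min_substring(read[i:i + k], m)
        if key != current:
            # The run covers k-mers start..i-1, i.e. read[start : i - 1 + k].
            runs.append((current, read[start:i + k - 1]))
            start, current = i, key
    runs.append((current, read[start:]))
    return runs


def expand(merged, k):
    """Recover the individual k-mers from one merged string."""
    return [merged[i:i + k] for i in range(len(merged) - k + 1)]


runs = compress_read("CTGACGTAAT", k=5, m=2)
print(runs)  # [('AC', 'CTGACGTA'), ('AA', 'CGTAAT')]
print(sum(len(s) for _, s in runs))               # 14 characters stored
print(sum(len(expand(s, 5)) * 5 for _, s in runs))  # 30 characters if stored one k-mer at a time
```

The gap between the two totals widens as k grows and as runs get longer, which is the intuition behind the Θ(k n) to Θ(n) reduction.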

The construction pipeline proceeds as follows: (1) The input reads are streamed once; for each k‑mer the algorithm computes its min‑sub and appends the k‑mer (in compressed form) to the corresponding partition file on disk. (2) After the streaming pass, each partition file is read into memory one at a time. Inside memory, the k‑mers are sorted, duplicates are removed, and adjacency edges are generated by examining the (k − 1)-length prefixes and suffixes. (3) The partial graphs produced from each partition are written back to disk. (4) Finally, a merge step combines all partial graphs into the complete de Bruijn graph. Because the merge operates on already‑compressed edge lists, it incurs only modest I/O and CPU overhead.
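The four steps can be sketched end to end as follows. This is a toy illustration under several assumptions, not the authors' code: all function names are hypothetical, k‑mers are written uncompressed (one per line), partition files are opened once per k‑mer for simplicity, and the final merge holds all distinct k‑mers in memory, which a real implementation would avoid.

```python
import os
import tempfile
from collections import defaultdict


def min_substring(kmer, m):
    """Return the lexicographically smallest m-mer contained in kmer."""
    return min(kmer[i:i + m] for i in range(len(kmer) - m + 1))


def write_partitions(reads, k, m, out_dir):
    """Step 1: stream the reads once, appending each k-mer to its partition file."""
    paths = {}
    for read in reads:
        for i in range(len(read) - k + 1):
            kmer = read[i:i + k]
            key = min_substring(kmer, m)
            paths[key] = os.path.join(out_dir, key + ".part")
            with open(paths[key], "a") as fh:
                fh.write(kmer + "\n")
    return sorted(paths.values())


def distinct_kmers(path):
    """Steps 2-3: load one partition, sort it, and drop duplicates."""
    with open(path) as fh:
        return sorted({line.strip() for line in fh})


def build_graph(reads, k, m):
    """Step 4: merge the per-partition results and link overlapping k-mers."""
    with tempfile.TemporaryDirectory() as tmp:
        nodes = []
        for path in write_partitions(reads, k, m, tmp):
            # Only one partition's raw k-mers are loaded at a time.
            nodes.extend(distinct_kmers(path))
    # Index k-mers by their (k - 1)-length prefix.
    by_prefix = defaultdict(list)
    for kmer in nodes:
        by_prefix[kmer[:-1]].append(kmer)
    # An edge u -> v exists when the (k - 1)-suffix of u equals the (k - 1)-prefix of v.
    return {u: sorted(by_prefix.get(u[1:], [])) for u in sorted(nodes)}


graph = build_graph(["CTGACGTAAT", "GACGTAA"], k=5, m=2)
for node, successors in graph.items():
    print(node, "->", successors)
```

Because every k‑mer has exactly one min‑sub, no k‑mer appears in two partitions, so deduplicating each partition independently is enough to deduplicate the whole dataset.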

Experimental evaluation covers several large‑scale datasets, including the human genome (≈3 Gbp), mouse, and plant genomes, each comprising billions of reads. The authors report that MSP builds the full de Bruijn graph using 8–10 GB of RAM, a reduction of more than an order of magnitude compared with conventional in‑memory methods. Importantly, the total wall‑clock time is comparable to, and in some cases slightly faster than, the baseline, demonstrating that the additional disk I/O does not translate into a runtime penalty. The authors also explore the effect of varying the min‑sub length m and the number of partitions, showing that these parameters can be tuned to balance memory consumption against processing speed.

Key contributions of the work are: (1) A theoretically grounded partitioning framework that reduces the total size of the partitions from Θ(k n) to Θ(n), so that peak memory is governed by the largest single partition rather than by the whole k‑mer set; (2) A practical compression technique that exploits k‑mer overlap within partitions; (3) A complete implementation that enables de Bruijn graph construction on a commodity computer for large‑volume sequencing datasets. The paper suggests several avenues for future research, such as parallelizing the partition‑merge phase to exploit multi‑core or cluster environments, extending the min‑sub concept to other graph‑based assembly models (e.g., string graphs), and integrating MSP with downstream assembly steps to produce a fully end‑to‑end low‑memory assembler.

In summary, Minimum Substring Partitioning represents a significant advance in the field of genome assembly: it makes de Bruijn graph construction, the most memory‑intensive step, accessible to researchers without high‑performance computing resources while maintaining competitive performance.