Erasure Coding and Congestion Control for Interactive Real-Time Communication

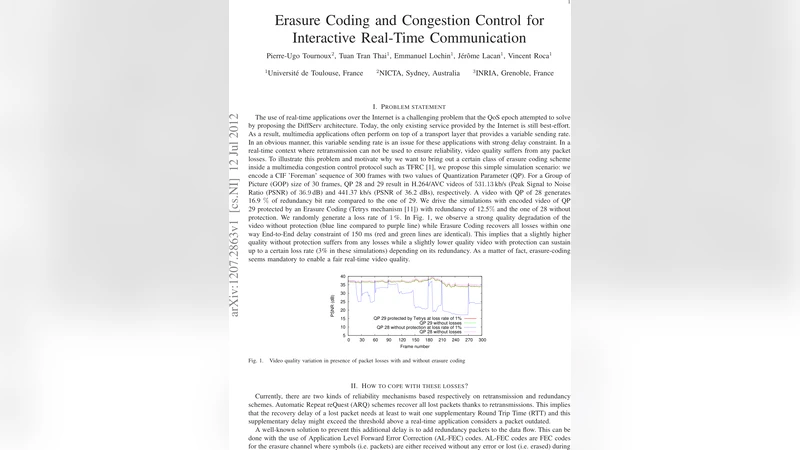

Running real-time applications over the Internet is a challenging problem that the QoS era attempted to solve by proposing the DiffServ architecture. Today, best-effort is still the only service the Internet provides. As a result, multimedia applications often run on top of a transport layer that provides a variable sending rate. This variable sending rate is clearly a problem for applications with strong delay constraints. In a real-time context where retransmission cannot be used to ensure reliability, video quality suffers from any packet loss. In this position paper, we discuss this problem and explain why we want to integrate a certain class of erasure coding schemes into multimedia congestion control protocols such as TFRC.

💡 Research Summary


The paper addresses a fundamental challenge in delivering interactive real‑time multimedia over the current best‑effort Internet. Although the QoS era introduced architectures such as DiffServ to provide differentiated services, today’s public network still offers only best‑effort delivery. Consequently, real‑time applications (e.g., video conferencing, remote control) are forced to run on transport protocols whose sending rate varies with network congestion, most notably TCP‑Friendly Rate Control (TFRC). This variability is problematic for delay‑sensitive traffic: when packets are lost, retransmission is not an option because the added latency would violate strict end‑to‑end delay constraints, so perceived video quality degrades rapidly.

To mitigate this, the authors propose integrating a class of forward error correction (FEC) known as erasure coding directly into the congestion‑control loop. Traditional FEC schemes use a fixed block size and a static redundancy ratio, which is unsuitable for real‑time streams that must simultaneously satisfy fluctuating bandwidth and tight latency budgets. The paper therefore introduces Adaptive‑Rate Based Erasure Coding (ABEC), an erasure‑coding framework that continuously monitors network conditions—packet loss rate, round‑trip time (RTT), and current sending rate—and dynamically adjusts the coding redundancy. When loss spikes, ABEC injects additional repair packets to recover missing data; when the network is stable, it reduces redundancy to keep overhead low.
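
The summary does not give ABEC's actual adaptation rule, so the following is only a minimal sketch of the idea of loss-driven redundancy. It assumes an ideal (MDS) erasure code, for which a block of `k` source packets plus `r` repair packets is recoverable whenever at most `r` packets are lost, and it assumes independent losses at the measured rate `p`. The function names, the 99 % recovery target, and the `max_repair` cap are hypothetical choices made for illustration.

```python
from math import comb


def block_recovery_probability(k: int, r: int, p: float) -> float:
    """Probability that at most r of the k + r packets in a block are lost.

    Assumes independent losses with probability p and an ideal (MDS)
    erasure code, which can rebuild the block from any k received packets.
    """
    n = k + r
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(r + 1))


def repair_packets_needed(k: int, p: float, target: float = 0.99,
                          max_repair: int = 64) -> int:
    """Smallest r (capped at max_repair) whose recovery probability meets target."""
    for r in range(max_repair + 1):
        if block_recovery_probability(k, r, p) >= target:
            return r
    return max_repair  # target not reachable within the cap


if __name__ == "__main__":
    # Redundancy grows as the measured loss rate rises, and drops to zero
    # when no loss is observed.
    for loss_rate in (0.0, 0.01, 0.05):
        print(loss_rate, repair_packets_needed(k=10, p=loss_rate))
```

Under these assumptions the redundancy follows the measured loss rate, which is the behaviour described above. Real Internet losses are often bursty, so an independent-loss model will tend to underestimate the repair packets needed.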

The integration with TFRC is described in detail. TFRC computes a sending rate that is “TCP‑friendly” based on observed loss events, but it reacts to loss by sharply decreasing the rate, which can cause video frames to be dropped. ABEC runs in parallel: upon detecting loss, TFRC lowers the transmission rate while ABEC simultaneously raises its redundancy, allowing the lost frames to be reconstructed from the repair packets. This dual response preserves the TCP‑friendly nature of the flow while preventing the quality drop that would otherwise occur.
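
To make the rate reaction concrete, the sketch below evaluates the TFRC throughput equation from RFC 5348 (Section 3.1), using the simplifications that RFC recommends (`b = 1`, `t_RTO = 4 * RTT`). The `source_rate` helper is not from the paper: it is an assumption that repair packets are sent inside the TFRC rate budget, so that the share left for video data is `k / (k + r)` of the allowed rate.

```python
from math import sqrt


def tfrc_rate(s: float, rtt: float, p: float, b: int = 1) -> float:
    """Allowed sending rate in bytes/second from the RFC 5348 throughput equation.

    s   -- segment size in bytes
    rtt -- round-trip time in seconds
    p   -- loss event rate, 0 < p <= 1
    b   -- packets acknowledged per ACK (RFC 5348 recommends 1)
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("loss event rate p must be in (0, 1]")
    t_rto = 4.0 * rtt  # simplification recommended by RFC 5348
    denominator = (rtt * sqrt(2.0 * b * p / 3.0)
                   + t_rto * (3.0 * sqrt(3.0 * b * p / 8.0)) * p * (1.0 + 32.0 * p**2))
    return s / denominator


def source_rate(total_rate: float, k: int, r: int) -> float:
    """Share of the TFRC budget left for source data (illustrative assumption:
    the r repair packets per k source packets count against the same budget)."""
    return total_rate * k / (k + r)


if __name__ == "__main__":
    for p in (0.01, 0.05):
        x = tfrc_rate(s=1460, rtt=0.1, p=p)
        print(f"p={p}: allowed rate {x:.0f} B/s")
```

If the redundancy does share the TFRC budget, a loss increase squeezes the video rate twice: the allowed rate falls and a larger fraction of it carries repair packets. That trade-off is one reason the interaction between the two mechanisms needs careful tuning.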

Simulation results demonstrate two major benefits. First, the average Peak Signal‑to‑Noise Ratio (PSNR) of the reconstructed video improves by 3–5 dB compared with a TFRC‑only baseline, a gain that is visually noticeable. Second, jitter in end‑to‑end delay is significantly reduced, keeping the end‑to‑end latency within the 150 ms bound required for interactive applications. The average coding overhead remains modest, around 7 % of the total bandwidth, indicating that the approach does not consume excessive network resources.
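
For readers unfamiliar with the metric, PSNR is defined as 10 · log10(MAX² / MSE), where MAX is the largest possible sample value and MSE is the mean squared error between the reference and decoded frames. The sketch below computes it for flat sequences of 8‑bit samples; the function name and the plain-list frame representation are choices made for this illustration, not part of the paper.

```python
from math import inf, log10
from typing import Sequence


def psnr(reference: Sequence[float], decoded: Sequence[float],
         max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized sample sequences."""
    if len(reference) != len(decoded) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(reference, decoded)) / len(reference)
    if mse == 0:
        return inf  # identical signals
    return 10.0 * log10(max_value**2 / mse)


if __name__ == "__main__":
    ref = [0.0, 0.0]
    # Halving the mean squared error raises PSNR by 10*log10(2), about 3 dB.
    print(psnr(ref, [2.0, 0.0]) - psnr(ref, [2.0, 2.0]))
```

Because the scale is logarithmic, a 3 dB gain corresponds to roughly halving the mean squared error.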

The authors also discuss implementation challenges and future research directions. Accurate, low‑latency measurement of network metrics is essential for timely adaptation of the coding rate. Compatibility with a variety of video codecs (H.264, VP9, AV1) must be validated, and the scheme should be extended to multipath environments such as Multipath TCP, where packets may traverse heterogeneous routes with different loss characteristics. Parameter tuning (the size of coding blocks, the aggressiveness of redundancy scaling, and the interaction with TFRC’s loss‑event estimator) requires further study, ideally through experiments on real‑world wide‑area networks rather than solely on simulated topologies.

In conclusion, the paper proposes a novel paradigm that couples erasure coding with congestion control to address the dual problems of packet loss and variable sending rate in real‑time interactive communication. By making redundancy adaptive and tightly coupled to the transport’s rate‑adjustment logic, the solution achieves higher video quality and lower latency jitter while maintaining fairness to competing TCP flows. This work therefore provides a compelling blueprint for next‑generation Internet services that must support high‑quality, low‑latency multimedia without relying on legacy QoS mechanisms.