Conceptual Modelling and The Quality of Ontologies: Endurantism Vs. Perdurantism

Ontologies are key enablers for sharing precise and machine-understandable semantics among different applications and parties. Yet, for ontologies to meet these expectations, their quality must be of a good standard. The quality of an ontology is strongly based on the design method employed. This paper addresses the design problems related to the modelling of ontologies, with specific concentration on the issues related to the quality of the conceptualisations produced. The paper aims to demonstrate the impact of the modelling paradigm adopted on the quality of ontological models and, consequently, the potential impact that such a decision can have in relation to the development of software applications. To this aim, an ontology that is conceptualised based on the Object-Role Modelling (ORM) approach (a representative of endurantism) is re-engineered into one modelled on the basis of the Object Paradigm (OP) (a representative of perdurantism). Next, the two ontologies are analytically compared using the specified criteria. The conducted comparison highlights that using the OP for ontology conceptualisation can provide more expressive, reusable, objective and temporal ontologies than those conceptualised on the basis of the ORM approach.

💡 Research Summary

The paper investigates how the choice of conceptual modelling paradigm influences the quality of ontologies, focusing on two philosophical approaches: endurantism, represented by Object‑Role Modelling (ORM), and perdurantism, represented by the Object Paradigm (OP). Ontologies are essential for sharing precise, machine‑understandable semantics across applications, but their usefulness depends on the rigor of their design. The authors therefore compare the two paradigms on a single domain, a library management system: an ontology conceptualised with ORM is re‑engineered into one based on OP. Both models are evaluated against four quality criteria: expressiveness, reusability, objectivity, and temporality.

The study begins with a literature review that highlights a gap: while many works propose metrics for ontology quality, few examine how the underlying modelling method affects those metrics. The authors define endurantism as a view in which entities are static, possessing a fixed set of attributes independent of time. Changes over time must be captured through auxiliary events or rules. Perdurantism, by contrast, treats entities as four‑dimensional objects that have different states at different times; it introduces explicit “time‑point” and “time‑interval” constructs that allow the entire life‑cycle of an entity to be modelled directly.
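The contrast between the two views can be sketched in a few lines of Python. This is an illustration only, not the notation used by ORM or OP: the class names (`EndurantBook`, `TemporalPart`, `PerdurantBook`), the `status` attribute, and the half-open interval convention are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class EndurantBook:
    """Endurantist view: one record holding only the current attribute values."""
    title: str
    status: str  # overwritten in place; earlier values are lost


@dataclass(frozen=True)
class TemporalPart:
    """One state of an entity, valid over the half-open interval [start, end)."""
    status: str
    start: date
    end: Optional[date] = None  # None means the state is still current

    def holds_at(self, day: date) -> bool:
        return self.start <= day and (self.end is None or day < self.end)


@dataclass
class PerdurantBook:
    """Perdurantist view: the entity is the sequence of its temporal parts."""
    title: str
    parts: list[TemporalPart] = field(default_factory=list)

    def change_status(self, status: str, day: date) -> None:
        # Close the currently open part (if any), then append the new one.
        if self.parts:
            last = self.parts[-1]
            self.parts[-1] = TemporalPart(last.status, last.start, day)
        self.parts.append(TemporalPart(status, day))

    def status_at(self, day: date) -> Optional[str]:
        # Look up the state that held on the given day, if any.
        for part in self.parts:
            if part.holds_at(day):
                return part.status
        return None


book = PerdurantBook("Dune")
book.change_status("available", date(2024, 1, 1))
book.change_status("on_loan", date(2024, 3, 1))
```

With this structure, `book.status_at(date(2024, 2, 1))` can still answer a question about the past, whereas `EndurantBook` would need a separate event log or rule layer to do the same.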

In the case study, the ORM model defines entities such as Book, Member, Loan, and Return, together with static relationships and integrity constraints. The OP model retains the same core entities but adds temporal objects (e.g., Loan‑at‑t, Return‑at‑t) and links them through time‑interval relations. This enables the representation of borrowing periods, overdue status, and historical queries without extra rule layers.
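As a rough sketch of what a temporal loan object might look like, the Python below attaches an explicit interval to each loan and derives overdue status and historical queries from it. The field names (`start`, `due`, `returned`), the sample data, and the rule that a loan is overdue on any day after `due` while still open are assumptions for illustration; the paper's actual OP model is not reproduced here.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Loan:
    """A loan as a temporal object: it exists over an explicit interval."""
    book: str
    member: str
    start: date                      # day the book was borrowed
    due: date                        # last day it can come back without being overdue
    returned: Optional[date] = None  # None while the loan is still open

    def active_on(self, day: date) -> bool:
        """True if the book was out on loan on the given day."""
        return self.start <= day and (self.returned is None or day < self.returned)

    def overdue_on(self, day: date) -> bool:
        """True if the loan was still open on `day` and past its due date."""
        return self.active_on(day) and day > self.due


def loans_on(loans: list[Loan], day: date) -> list[Loan]:
    """Historical query: which loans were active on a given day?"""
    return [loan for loan in loans if loan.active_on(day)]


# Hypothetical sample data.
loans = [
    Loan("Dune", "alice", date(2024, 3, 1), date(2024, 3, 15), date(2024, 3, 20)),
    Loan("Emma", "bob", date(2024, 3, 10), date(2024, 3, 24)),
]
```

Because the interval is part of the loan itself, the overdue check and the historical query are plain functions over the data rather than a separate rule layer.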

The comparative analysis yields the following findings:

- Expressiveness – OP can capture time‑dependent properties (loan duration, overdue fees) within a single ontology, whereas ORM requires separate sub‑models or procedural rules, increasing complexity.
- Reusability – The temporal constructs (time‑point, interval) in OP are generic patterns that can be reused across domains (e.g., healthcare, IoT). ORM’s relationships are domain‑specific, limiting reuse.
- Objectivity – OP forces designers to make temporal assumptions explicit, reducing hidden biases and making validation easier. ORM often leaves such assumptions implicit, raising the risk of unnoticed errors.
- Temporality – OP inherently supports versioning, audit trails, and predictive reasoning because each state is anchored to a time reference. ORM must add a metadata layer to achieve similar functionality, which adds implementation overhead.

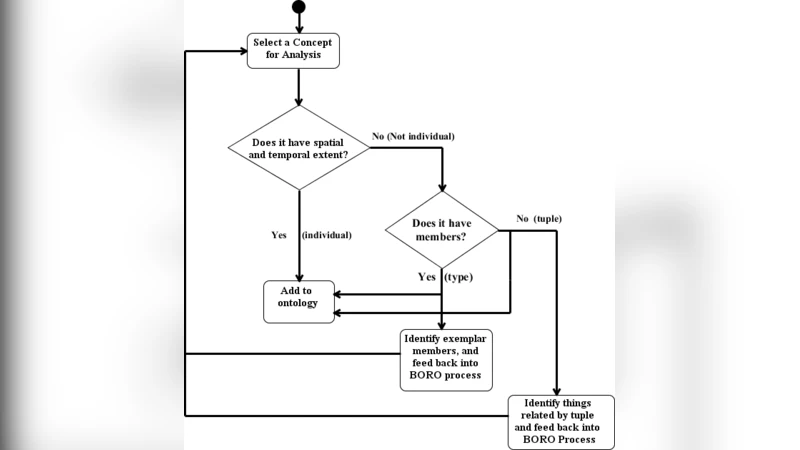

The authors also propose a step‑by‑step re‑engineering procedure for converting an existing ORM‑based ontology into an OP‑based one: (1) identify time‑sensitive concepts, (2) introduce time‑point and interval objects, (3) remodel relationships to reference these temporal objects, (4) rewrite constraints and rules, and (5) validate the new model. Applying this process to the library ontology resulted in higher query accuracy and easier maintenance.
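The five steps can be mimicked on a toy fact base, as in the sketch below. It is a simplified illustration under stated assumptions, not the authors' procedure: the tuple layout of `STATIC_FACTS`, the `OPENERS` mapping from an opening predicate to its closing predicate, and the single constraint checked in `validate` are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Interval:
    """Step 2: an explicit interval object, half-open [start, end)."""
    start: date
    end: Optional[date] = None  # None means the interval is still open


@dataclass(frozen=True)
class TemporalFact:
    """Step 3: a relationship that references an interval object."""
    subject: str
    predicate: str
    obj: str
    interval: Interval


# Hypothetical static facts: (subject, predicate, object, recorded_on).
STATIC_FACTS = [
    ("alice", "borrows", "Dune", date(2024, 3, 1)),
    ("alice", "returns", "Dune", date(2024, 3, 20)),
    ("Dune", "hasAuthor", "Frank Herbert", date(2024, 1, 1)),
]

# Step 1: predicates judged time-sensitive (a manual modelling decision here),
# mapped from the predicate that opens an interval to the one that closes it.
OPENERS = {"borrows": "returns"}


def reengineer(facts):
    """Steps 2-3: pair opening and closing facts into interval-based facts."""
    closing = set(OPENERS.values())
    closers = {(s, p, o): d for s, p, o, d in facts if p in closing}
    temporal = []
    for s, p, o, d in facts:
        if p in OPENERS:
            end = closers.get((s, OPENERS[p], o))
            temporal.append(TemporalFact(s, p, o, Interval(d, end)))
    return temporal


def validate(temporal):
    """Steps 4-5: one rewritten constraint (no interval ends before it starts)."""
    return all(
        f.interval.end is None or f.interval.start <= f.interval.end
        for f in temporal
    )


temporal_facts = reengineer(STATIC_FACTS)
```

The sketch converts only the time-sensitive facts and leaves atemporal ones such as `hasAuthor` aside. It also assumes each member borrows a given book at most once; repeated loans would need a richer pairing rule.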

In discussion, the paper argues that for dynamic environments—such as real‑time sensor networks, evolving business processes, or any domain where historical data matters—perdurantist modelling provides a more robust foundation. The explicit temporal dimension not only improves the four quality criteria but also facilitates automated verification tools, thereby strengthening overall ontology governance.

The conclusion affirms that the OP approach outperforms ORM on expressiveness, reusability, objectivity, and temporality. Consequently, selecting a perdurantist paradigm during the early design phase can lead to ontologies that are more adaptable, maintainable, and aligned with the needs of modern, data‑intensive applications. Future work is suggested to extend the comparative experiments to larger, more diverse domains and to develop automated tooling that assists practitioners in migrating from endurantist to perdurantist models.