On the Number of Experiments Sufficient and in the Worst Case Necessary to Identify All Causal Relations Among N Variables

We show that if any number of variables are allowed to be simultaneously and independently randomized in any one experiment, log2(N) + 1 experiments are sufficient and in the worst case necessary to determine the causal relations among N >= 2 variables when no latent variables, no sample selection bias and no feedback cycles are present. For all K, 0 < K < N/2, we provide an upper bound on the number of experiments required to determine causal structure when each experiment simultaneously randomizes K variables. For large N, these bounds are significantly lower than the N - 1 bound required when each experiment randomizes at most one variable. For kmax < N/2, we show that (N/kmax - 1) + N/(2kmax) log2(kmax) experiments are sufficient and in the worst case necessary. We offer a conjecture as to the minimal number of experiments that are in the worst case sufficient to identify all causal relations among N observed variables that are a subset of the vertices of a DAG.

💡 Research Summary

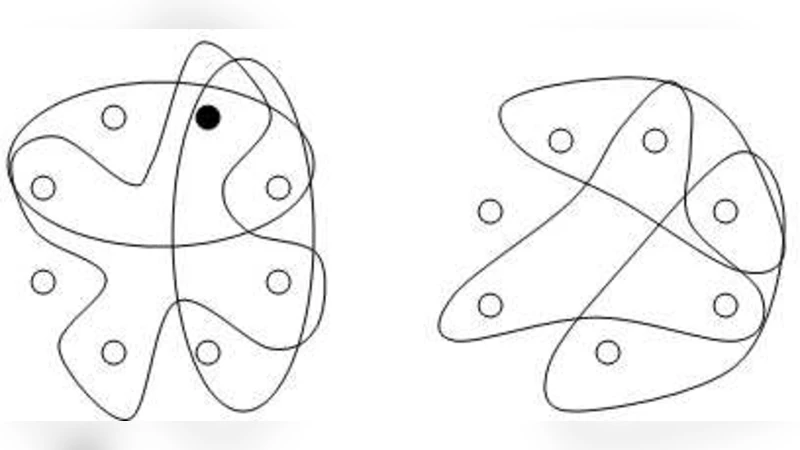

The paper addresses a fundamental question in causal discovery: how many interventional experiments are required, in the worst case, to recover the full causal structure among N observed variables when the underlying model is a directed acyclic graph (DAG) with no latent confounders, no selection bias, and no feedback loops. The authors adopt the standard causal model in which an experiment corresponds to a “do‑operation” that simultaneously randomizes an arbitrary subset of variables, thereby cutting all incoming edges to those variables. Each experiment yields two types of information: (1) a directional test, which determines whether a randomized variable is a cause of a non‑randomized one; and (2) an adjacency test, which determines whether two variables that are both left unrandomized are directly connected. Two variables randomized in the same experiment yield no information about the edge between them, because randomizing both removes that edge whichever way it points.

The main theoretical contribution is a tight bound on the number of experiments needed when any subset of variables may be randomized in a single trial. By recursively partitioning the variable set into halves, the authors show that ⌈log₂ N⌉ + 1 experiments are sufficient. The first ⌈log₂ N⌉ experiments perform a series of “divide‑and‑conquer” interventions that test all cross‑group edges, so that every pair of variables is split between the randomized and non‑randomized groups at least once. The final experiment is a passive observation in which no variable is randomized; it resolves the remaining adjacency ambiguities, since randomizing every variable would cut every edge and reveal nothing. To prove optimality, they construct a worst‑case scenario, a fully connected DAG, in which each experiment can reveal at most the information accounted for by the bound, establishing that ⌈log₂ N⌉ + 1 experiments are also necessary. Thus the bound is both an upper and a lower bound, i.e., it is tight.
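
The halving step can be illustrated with a short sketch. It assumes the variables are labelled 0 to N−1 and that experiment i randomizes every variable whose i‑th binary digit is 1, which is one common way to realize recursive halving and not necessarily the authors' exact construction. The function names `halving_experiments` and `pairs_without_directional_test` are hypothetical. The sketch covers only the first ⌈log₂ N⌉ experiments and checks that every pair of variables is split between the randomized and non‑randomized groups at least once.

```python
from itertools import combinations


def halving_experiments(n):
    """Return ceil(log2(n)) intervention sets over variables 0..n-1.

    Assumption for illustration: experiment i randomizes every variable
    whose i-th binary digit is 1.
    """
    if n < 2:
        raise ValueError("need at least two variables")
    rounds = (n - 1).bit_length()  # equals ceil(log2(n)) for n >= 2
    return [{v for v in range(n) if (v >> i) & 1} for i in range(rounds)]


def pairs_without_directional_test(n, experiments):
    """List pairs that are never split into (randomized, non-randomized)."""
    return [
        (x, y)
        for x, y in combinations(range(n), 2)
        if not any((x in e) != (y in e) for e in experiments)
    ]


experiments = halving_experiments(8)
print(len(experiments))                                # number of halving experiments
print(pairs_without_directional_test(8, experiments))  # pairs left uncovered
```

Two distinct labels differ in at least one binary digit, so the list of uncovered pairs is empty for any N ≥ 2. For N = 8 the sketch uses three intervention sets, compared with the N − 1 = 7 experiments needed when only one variable is randomized at a time.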

The paper then relaxes the assumption of unlimited simultaneous randomization. For a fixed budget K (0 < K < N/2) of variables that can be randomized per experiment, the authors derive an upper bound of

\