Use of Dempster-Shafer Conflict Metric to Detect Interpretation Inconsistency

A model of the world built from sensor data may be incorrect even if the sensors are functioning correctly. Possible causes include the use of inappropriate sensors (e.g. a laser looking through glass walls), accumulated sensor inaccuracies (e.g. localization errors), incorrect a priori models, or an internal representation that does not match the world (e.g. a static occupancy grid used with dynamically moving objects). We are interested in the case where the constructed model of the world is flawed, but there is no access to the ground truth that would allow the system to see the discrepancy, as when a robot enters an unknown environment. This paper considers the problem of determining when something is wrong using only the sensor data used to construct the world model. It proposes 11 interpretation inconsistency indicators based on the Dempster-Shafer conflict metric, Con, and evaluates these indicators according to three criteria: ability to distinguish true inconsistency from sensor noise (classification), estimate the magnitude of discrepancies (estimation), and determine the source(s) (if any) of sensing problems in the environment (isolation). The evaluation is conducted using data from a mobile robot with sonar and laser range sensors navigating indoor environments under controlled conditions. The evaluation shows that the Gambino indicator performed best in terms of estimation (at best 0.77 correlation), isolation, and classification of the sensing situation as degraded (7% false negative rate) or normal (0% false positive rate).

💡 Research Summary

The paper addresses a fundamental problem in autonomous robotics: a robot’s internal representation of the world can become inaccurate even when all its sensors are functioning correctly. Causes include using inappropriate sensors (e.g., a laser that looks through glass walls instead of detecting them), accumulated localization errors, incorrect prior models, or a mismatch between a static occupancy‑grid map and dynamic objects. In many realistic scenarios, such as a robot entering an unknown building, there is no external ground truth against which the robot can verify its map. The authors therefore ask whether the robot can detect that something is wrong using only the sensor data that were used to build the model.

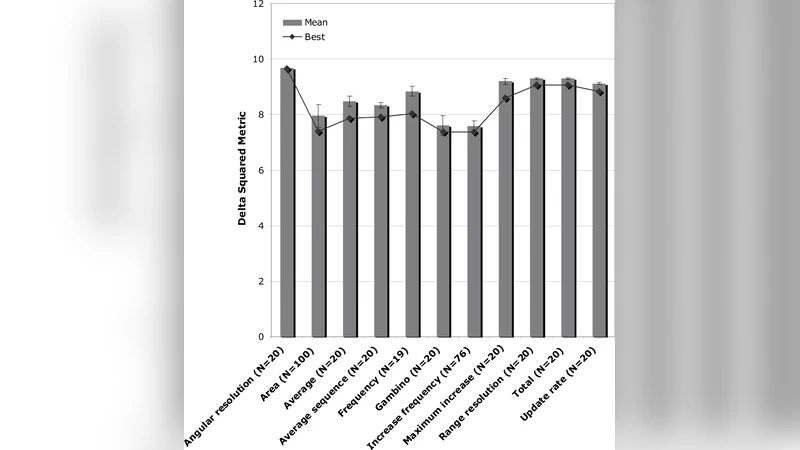

To answer this, they adopt the Dempster‑Shafer theory of evidence, focusing on the conflict metric Con. Given two independent bodies of evidence A and B defined over the same frame of discernment, Con(A,B) = Σ_{X∩Y=∅} m_A(X)·m_B(Y), where m_A and m_B are the basic probability assignments. A high conflict value indicates that the two evidence sets are mutually contradictory, which in the robotics context translates to “the same region of space is being interpreted inconsistently by the sensors.” The authors design eleven interpretation‑inconsistency indicators derived from Con. These indicators differ in how they aggregate conflict (e.g., instantaneous value, temporal average, variance), whether they weight conflict by sensor type, and whether they incorporate the rate of change of uncertainty in the affected cells.
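The sum above can be made concrete for a single grid cell. The following is a minimal sketch, assuming a two-hypothesis frame {occupied, empty} with the full set standing for "unknown"; the mass values and the function names are illustrative and not taken from the paper. Note that the sum itself is often written k, and Shafer's weight of conflict is commonly defined as log(1/(1−k)); this summary does not say which form the paper uses, so the sketch computes both.

```python
import math
from itertools import product

# Assumed frame of discernment for one occupancy-grid cell.
OCC = frozenset({"occupied"})
EMP = frozenset({"empty"})
UNK = OCC | EMP  # the whole frame, i.e. "unknown"

def conflicting_mass(m_a, m_b):
    """Sum m_a(X) * m_b(Y) over all pairs of focal elements with empty intersection."""
    return sum(
        m_a[x] * m_b[y]
        for x, y in product(m_a, m_b)
        if not (x & y)
    )

def weight_of_conflict(m_a, m_b):
    """Shafer-style weight of conflict, log(1 / (1 - k)); infinite for total conflict."""
    k = conflicting_mass(m_a, m_b)
    return math.inf if k >= 1.0 else -math.log(1.0 - k)

# Illustrative (made-up) belief masses from two sensors for the same cell.
sonar = {OCC: 0.6, EMP: 0.1, UNK: 0.3}
laser = {OCC: 0.1, EMP: 0.7, UNK: 0.2}

k = conflicting_mass(sonar, laser)      # 0.6*0.7 + 0.1*0.1
con = weight_of_conflict(sonar, laser)
```

Mass placed on "unknown" never contributes to conflict, because the full frame intersects every hypothesis; only the occupied-versus-empty pairs do.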

A key contribution is the “Gambino” indicator, which flags a cell as inconsistent when (1) the conflict exceeds a pre‑defined threshold and (2) the uncertainty (the belief mass assigned to the “unknown” hypothesis) simultaneously decreases. This combination is intended to capture genuine sensor disagreement rather than mere noise, because a true inconsistency should both raise conflict and reduce ambiguity as the robot gathers more evidence.
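The two-condition rule described in this summary can be sketched as a simple predicate. This is an illustration under stated assumptions, not the authors' implementation: the function name `gambino_flag`, the default threshold of 0.3, and the sample values are all hypothetical.

```python
def gambino_flag(conflict, unknown_before, unknown_after, threshold=0.3):
    """Flag a cell when conflict is above the threshold AND the mass
    on the "unknown" hypothesis decreased in the same update.

    The threshold value is an assumption chosen for this example.
    """
    return conflict > threshold and unknown_after < unknown_before

# Made-up per-cell records: (conflict, unknown mass before, unknown mass after).
cells = {
    (3, 4): (0.43, 0.50, 0.20),  # high conflict, uncertainty dropped
    (3, 5): (0.43, 0.20, 0.20),  # high conflict, uncertainty unchanged
    (3, 6): (0.10, 0.50, 0.20),  # low conflict, uncertainty dropped
}

flagged = [cell for cell, rec in cells.items() if gambino_flag(*rec)]
```

Only the first cell satisfies both conditions, which is the point of combining them: high conflict alone or falling uncertainty alone does not raise a flag.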

The experimental platform is a mobile robot equipped with both sonar and laser range finders. The robot traverses a series of controlled indoor environments (corridors, rooms, corners) that contain features known to challenge each sensor type: glass walls that the laser looks through instead of detecting, specular surfaces that degrade sonar readings, and narrow passages that amplify localization drift. At each pose the robot builds an occupancy‑grid map from the raw measurements, computes the Dempster‑Shafer belief masses for each cell, and then evaluates the eleven indicators.
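Per-cell belief masses in a Dempster‑Shafer occupancy grid are typically maintained with Dempster's rule of combination, which also yields the conflicting mass as a by-product. The sketch below assumes that rule and a {occupied, empty} frame; the summary does not spell out the paper's update procedure, and the readings are made up.

```python
from itertools import product

OCC = frozenset({"occupied"})
EMP = frozenset({"empty"})
UNK = OCC | EMP  # full frame, "unknown"

def combine(m_a, m_b):
    """Dempster's rule: return (normalized combined masses, conflicting mass k)."""
    fused = {}
    k = 0.0
    for (x, mx), (y, my) in product(m_a.items(), m_b.items()):
        inter = x & y
        if inter:
            fused[inter] = fused.get(inter, 0.0) + mx * my
        else:
            k += mx * my  # mass that would land on the empty set
    if k >= 1.0:
        raise ValueError("total conflict: evidence cannot be combined")
    return {s: v / (1.0 - k) for s, v in fused.items()}, k

# A cell starts fully unknown, then receives two illustrative readings.
cell = {UNK: 1.0}
cell, k1 = combine(cell, {OCC: 0.6, UNK: 0.4})  # first reading suggests "occupied"
cell, k2 = combine(cell, {EMP: 0.5, UNK: 0.5})  # second reading suggests "empty"
```

The first update produces no conflict because the cell was fully unknown; the second produces k2 = 0.6 × 0.5, the product of the masses committed to the two contradictory hypotheses.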

The evaluation follows three criteria:

- Classification – the ability to label a sensing situation as “normal” (no significant inconsistency) or “degraded” (significant inconsistency). Performance is measured by false‑positive (normal labeled as degraded) and false‑negative (degraded labeled as normal) rates.

- Estimation – how well the indicator’s output correlates with the actual magnitude of the map error, quantified by the Pearson correlation between the indicator score and a ground‑truth error metric (e.g., cell‑wise difference between the robot’s map and a high‑resolution laser scan).

- Isolation – the capacity to pinpoint the source of the problem, either a specific sensor (sonar vs. laser) or a spatial region within the map.
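The classification and estimation criteria reduce to standard statistics. A minimal sketch of how they could be computed follows; the function names and the sample data are hypothetical and do not reproduce the paper's experiments.

```python
import math

def error_rates(predicted_degraded, truly_degraded):
    """Return (false_positive_rate, false_negative_rate).

    False positive: a normal situation labeled degraded.
    False negative: a degraded situation labeled normal.
    """
    pairs = list(zip(predicted_degraded, truly_degraded))
    fp = sum(1 for p, t in pairs if p and not t)
    fn = sum(1 for p, t in pairs if t and not p)
    normals = sum(1 for _, t in pairs if not t)
    degraded = sum(1 for _, t in pairs if t)
    fpr = fp / normals if normals else 0.0
    fnr = fn / degraded if degraded else 0.0
    return fpr, fnr

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Made-up example: four runs, indicator decision vs. true condition.
fpr, fnr = error_rates([True, False, False, True], [True, True, False, False])
# Made-up indicator scores vs. a made-up map-error measure.
r = pearson([0.1, 0.2, 0.4, 0.8], [0.5, 1.0, 2.0, 4.0])
```

Isolation is not covered here because it depends on how conflict is attributed to sensors and regions, which this summary does not specify.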

Results show that the Gambino indicator outperforms all others. In classification, it yields a 0 % false‑positive rate and a 7 % false‑negative rate, meaning that in these experiments it never raised an alarm when the sensing situation was normal and only rarely missed a genuine degradation. For estimation, the correlation reaches at best 0.77, the highest among the tested indicators, indicating a fairly strong linear relationship between the Gambino score and the actual map error. In isolation, the indicator correctly identifies whether the inconsistency originates from the sonar or the laser and highlights the affected cells, enabling downstream corrective actions such as sensor re‑calibration or selective data rejection.

Other indicators—such as simple average conflict, variance‑based measures, or raw belief‑mass thresholds—exhibit higher false‑positive rates (often above 20 %) or lower correlation values (typically below 0.5), demonstrating that raw conflict magnitude alone is insufficient for robust diagnosis.

The authors discuss several implications. First, conflict‑based reasoning provides a principled, non‑Bayesian way to fuse heterogeneous sensor data while simultaneously exposing contradictions. Second, because the method relies solely on internally generated belief masses, it can be deployed on robots operating in completely unknown or GPS‑denied environments. Third, the approach is computationally lightweight: conflict can be updated incrementally as new measurements arrive, making real‑time implementation feasible.
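The incremental-update claim can be illustrated with a running average that needs constant memory per cell. This is a sketch under assumptions: the class name `RunningConflict` is hypothetical, and a temporal mean is only one of the aggregation styles mentioned in this summary.

```python
class RunningConflict:
    """Constant-memory running mean of the conflict values seen for one cell."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0

    def update(self, conflict):
        """Fold in one new conflict value and return the updated mean."""
        self.count += 1
        self.mean += (conflict - self.mean) / self.count
        return self.mean

# Made-up conflict values arriving one measurement at a time.
tracker = RunningConflict()
for value in [0.0, 0.3, 0.6]:
    tracker.update(value)
```

Each update costs a few arithmetic operations and no stored history, which is what makes a per-cell, per-measurement indicator plausible in real time.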

Limitations are acknowledged. The experiments are confined to static indoor scenes; highly dynamic environments with moving obstacles could produce rapid, transient conflicts that may be misinterpreted as sensor failure. The thresholds for each indicator were tuned empirically for the test suite, and an adaptive mechanism for threshold selection remains an open research direction. Moreover, the occupancy‑grid representation itself imposes discretization errors that could affect conflict calculations.

Future work outlined includes (a) extending the framework to 3‑D voxel maps and to additional sensor modalities (e.g., RGB‑D cameras), (b) integrating online learning to automatically adjust indicator parameters based on long‑term robot experience, and (c) testing the approach in large‑scale outdoor settings where illumination changes, weather, and terrain variability introduce new sources of inconsistency.

In summary, the paper demonstrates that the Dempster‑Shafer conflict metric, when transformed into carefully designed indicators—particularly the Gambino indicator—enables a robot to autonomously detect, quantify, and localize interpretation inconsistencies using only its own sensor data. This capability enhances the reliability of autonomous navigation systems, especially in scenarios where external validation is unavailable, and opens avenues for self‑diagnosing perception pipelines in future robotic platforms.