Modifying Bayesian Networks by Probability Constraints

This paper deals with the following problem: modify a Bayesian network so that it satisfies a given set of probability constraints by changing only its conditional probability tables, while keeping the probability distribution of the resulting network as close as possible to that of the original network. We propose to solve this problem by extending IPFP (iterative proportional fitting procedure) to probability distributions represented by Bayesian networks. The resulting algorithm, E-IPFP, is further developed into D-IPFP, which reduces the computational cost by decomposing a global E-IPFP problem into a set of smaller local E-IPFP problems. Limited analysis is provided, including convergence proofs for the two algorithms. Computer experiments were conducted to validate the algorithms, and the results are consistent with the theoretical analysis.
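The problem can be stated formally as follows. This is a sketch in our own notation, which may differ from the paper's. Let P be the joint distribution of the original network and R_1, …, R_m the constraints, each a target marginal over a subset of variables y_j. Let π_i be the parents of x_i in the unchanged DAG. We then seek the distribution that is closest to P in KL divergence, subject to two requirements: it satisfies every constraint, and it still factorizes over the same DAG.

```latex
Q^{*} = \arg\min_{Q} \; \mathrm{KL}(Q \,\|\, P)
      = \arg\min_{Q} \sum_{x} Q(x) \log \frac{Q(x)}{P(x)}
\quad \text{s.t.} \quad
Q(y_j) = R_j(y_j), \; j = 1, \dots, m,
\qquad
Q(x) = \prod_{i=1}^{n} Q(x_i \mid \pi_i).
```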

💡 Research Summary

The paper addresses the problem of adjusting a Bayesian network (BN) so that it satisfies a given set of probability constraints while altering only its conditional probability tables (CPTs). The goal is to keep the resulting network’s joint distribution as close as possible to the original one, measured by Kullback‑Leibler (KL) divergence. To achieve this, the authors extend the classic Iterative Proportional Fitting Procedure (IPFP), which is traditionally applied to full contingency tables, to the factored representation of BNs.
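For reference, classic IPFP on a full joint table can be sketched as follows. Each step applies the update Q_k(x) = Q_{k-1}(x) · R_j(y_j) / Q_{k-1}(y_j). This multiplies the current joint by the ratio of a target marginal to the current one, which gives the KL-closest distribution satisfying that single constraint. The function name `ipfp` and its `(axes, target)` constraint encoding are our own illustration. The sketch assumes strictly positive tables stored as NumPy arrays.

```python
import numpy as np


def ipfp(p, constraints, max_iter=1000, tol=1e-10):
    """Classic IPFP on a full joint table (illustrative sketch).

    p: strictly positive joint distribution, one array axis per variable.
    constraints: list of (axes, target) pairs; `axes` is a sorted tuple of
        variable axes and `target` the desired marginal over them, in that order.
    """
    q = p.copy()
    for _ in range(max_iter):
        max_change = 0.0
        for axes, target in constraints:
            # Current marginal over the constrained axes (other axes summed out).
            other = tuple(i for i in range(q.ndim) if i not in axes)
            marginal = q.sum(axis=other, keepdims=True)
            # Insert singleton axes so the target broadcasts against the joint.
            shape = [q.shape[i] if i in axes else 1 for i in range(q.ndim)]
            ratio = target.reshape(shape) / marginal
            # Multiplicative update: Q(x) <- Q(x) * R(y) / Q(y).
            q = q * ratio
            max_change = max(max_change, float(np.abs(ratio - 1.0).max()))
        # Stop once a full sweep leaves every marginal (almost) unchanged.
        if max_change < tol:
            break
    return q
```

In a 2×2 example such as `p = [[0.4, 0.1], [0.2, 0.3]]`, every step rescales an entire row or column. The odds ratio q00·q11/(q01·q10) therefore stays at its original value of 6, while the row and column sums move to their targets.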

The first algorithm, called Extended IPFP (E‑IPFP), works directly on the BN’s CPTs. For each constraint, typically a target marginal distribution over a subset of variables, the algorithm rescales the relevant CPT entries proportionally so that the induced marginal matches the target. This rescaling is equivalent to solving a constrained KL‑minimization problem. Among all distributions that satisfy the constraint, the one closest (in the KL sense) to the current distribution is obtained by a simple multiplicative update. The authors prove that each update strictly reduces the global KL divergence. This guarantees convergence to a distribution that satisfies all constraints, provided the constraints are jointly consistent. However, E‑IPFP still implicitly requires manipulation of the full joint distribution, which becomes computationally prohibitive as the number of variables grows.
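The sketch below makes the "implicit full joint" explicit. It runs IPFP steps on a joint table. After each sweep, it projects back onto the network structure by recomputing every CPT Q(x_i | π_i) from the current joint and multiplying the CPTs together. Treating the structure as one extra constraint in the cycle is our assumption about how the alternation can be organized. It is not necessarily the paper's exact procedure. The function names and the dictionary encoding of the DAG (axis → tuple of parent axes) are hypothetical.

```python
import numpy as np


def fit_marginal(q, axes, target):
    """One IPFP step: rescale Q so its marginal over `axes` equals `target`."""
    other = tuple(i for i in range(q.ndim) if i not in axes)
    marginal = q.sum(axis=other, keepdims=True)
    shape = [q.shape[i] if i in axes else 1 for i in range(q.ndim)]
    return q * (target.reshape(shape) / marginal)


def cpt_from_joint(q, child, parents):
    """Q(child | parents), kept broadcastable against the full joint."""
    family = set(parents) | {child}
    other = tuple(i for i in range(q.ndim) if i not in family)
    fam = q.sum(axis=other, keepdims=True)        # Q(child, parents)
    par = fam.sum(axis=child, keepdims=True)      # Q(parents)
    return fam / par


def project_to_dag(q, dag):
    """ASSUMED structural step: rebuild the joint as the product of the CPTs
    read off the current joint, so the result factorizes over the DAG."""
    out = np.ones_like(q)
    for child, parents in dag.items():
        out = out * cpt_from_joint(q, child, parents)
    return out


def e_ipfp(q, dag, constraints, max_iter=1000, tol=1e-10):
    """Cycle through the probability constraints, then the structural step."""
    for _ in range(max_iter):
        prev = q
        for axes, target in constraints:
            q = fit_marginal(q, axes, target)
        q = project_to_dag(q, dag)
        if np.abs(q - prev).max() < tol:
            break
    return q
```

As a usage example, take a binary chain A → B → C encoded as `dag = {0: (), 1: (0,), 2: (1,)}`, with target marginals on B and C. The call `e_ipfp` then returns a joint that meets both targets and still equals the product of its own CPTs. The full-size joint array is exactly the cost that motivates the decomposed variant.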

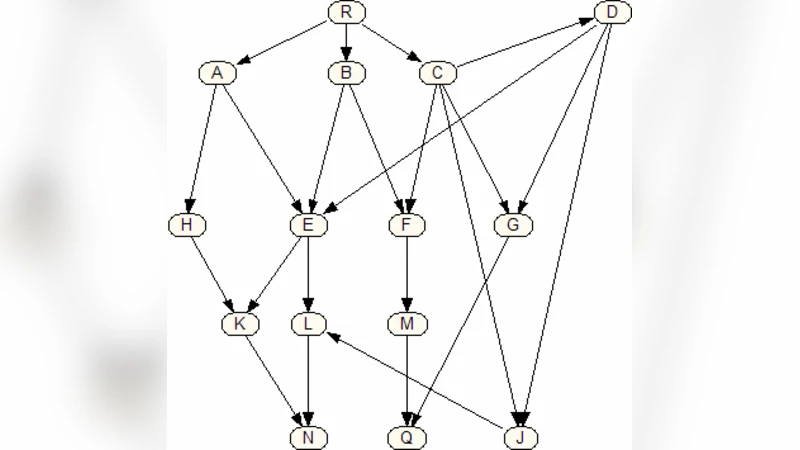

To overcome this limitation, the authors introduce Decomposed IPFP (D‑IPFP). The key insight is that many constraints involve only a small subset of variables together with their parents in the network. D‑IPFP partitions the global problem into a set of local sub‑problems, each defined on a “sub‑network” consisting of a target variable and its parent set. Within each sub‑network, an E‑IPFP step is performed independently, updating only the CPTs that belong to that sub‑network. After a local update, the modified CPTs are merged back into the global BN. D‑IPFP iterates over all sub‑networks in a cyclic fashion, and this ensures that the overall KL divergence never increases. The authors' formal convergence proof rests on the fact that no local update increases this divergence.
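One local sub-problem can be sketched under explicit assumptions. First, the constraint is a marginal R(x_i) on a single variable. Second, the parent configurations are flattened into one axis. Third, the current parent marginal Q(π_i) comes from inference on the network. Changing only x_i's CPT cannot change Q(π_i), so the local step reduces to IPFP on the small family table Q(π_i, x_i). That table has one fixed margin (the parents) and one target margin (the constraint). The helper name `local_fit` is hypothetical, and the sketch illustrates the idea rather than the paper's exact local formulation.

```python
import numpy as np


def local_fit(parent_marginal, cpt, target, max_iter=1000, tol=1e-12):
    """Hypothetical D-IPFP-style local update of a single CPT.

    parent_marginal: Q(pi_i) over flattened parent configurations, shape (k,).
    cpt: current Q(x_i | pi_i), shape (k, m), each row sums to 1.
    target: constraint R(x_i), shape (m,).
    """
    table = parent_marginal[:, None] * cpt  # family table Q(pi_i, x_i)
    for _ in range(max_iter):
        # Fit the constraint on x_i (column margin).
        table = table * (target / table.sum(axis=0))[None, :]
        # Re-impose Q(pi_i), which a change to x_i's CPT alone cannot alter.
        row_ratio = parent_marginal / table.sum(axis=1)
        table = table * row_ratio[:, None]
        if np.abs(row_ratio - 1.0).max() < tol:
            break
    # Divide out the parent marginal to turn the family table back into a CPT.
    return table / parent_marginal[:, None]
```

The work per call is proportional to the family table size k·m rather than to the full joint. Non-descendants of x_i keep their distribution, because only x_i's CPT is replaced.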

Complexity analysis compares the two algorithms. E‑IPFP has a cost proportional to the size of the full joint state space, O(N·|Ω|), where |Ω| is the number of joint configurations. D‑IPFP’s cost scales with the sum of the state spaces of the individual sub‑networks, ∑ᵢ |Ωᵢ|, where Ωᵢ is the state space of the i-th sub‑network; this sum is dramatically smaller for sparse networks. Moreover, the local updates can be parallelized, offering further speed‑ups for large‑scale applications.
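To make the gap concrete, the two sizes can be computed for a hypothetical network. Here |Ωᵢ| is taken to be the size of variable i's family table, meaning the variable plus its parents. The chain of 30 binary variables used below is our own example, not one of the paper's benchmarks.

```python
from math import prod


def joint_size(cards):
    """|Omega|: number of entries in the full joint table."""
    return prod(cards)


def family_sizes_sum(cards, parents):
    """Sum over variables of |Omega_i| = |x_i| * product of parent cardinalities."""
    return sum(cards[i] * prod(cards[p] for p in pa) for i, pa in parents.items())


# Hypothetical chain x0 -> x1 -> ... -> x29 of binary variables.
cards = [2] * 30
parents = {i: ((i - 1,) if i > 0 else ()) for i in range(30)}

print(joint_size(cards))                 # 1073741824 (2**30)
print(family_sizes_sum(cards, parents))  # 118 (2 + 29 * 4)
```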

Experimental validation is performed on several benchmark BNs, including the Alarm, Asia, and a medical diagnosis network. For each network, the authors impose a variety of marginal and conditional constraints (e.g., fixing disease prevalence, enforcing specific test sensitivities). Results demonstrate that D‑IPFP achieves the same level of constraint satisfaction as E‑IPFP (maximum absolute error <10⁻⁶) while reducing runtime by an order of magnitude and cutting memory usage by up to 80 %. The final KL divergence between the original and adjusted networks remains extremely low (average <0.001), confirming that the adjustments preserve the original probabilistic semantics.
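The two quantities reported here can be computed as below for networks small enough that the joint fits in memory. The function names are ours. We assume KL is taken as KL(Q‖P), from the adjusted joint Q to the original P, since that is the direction IPFP minimizes; the text does not state which direction was reported.

```python
import numpy as np


def kl_divergence(q, p):
    """KL(Q || P) in nats, for strictly positive arrays of the same shape."""
    q = np.asarray(q, dtype=float)
    p = np.asarray(p, dtype=float)
    return float(np.sum(q * np.log(q / p)))


def max_constraint_error(q, constraints):
    """Largest absolute gap between a marginal of Q and its target.

    constraints: list of (axes, target) pairs, `axes` a sorted tuple of axes.
    """
    worst = 0.0
    for axes, target in constraints:
        other = tuple(i for i in range(q.ndim) if i not in axes)
        worst = max(worst, float(np.abs(q.sum(axis=other) - target).max()))
    return worst
```

For instance, `kl_divergence([0.5, 0.5], [0.25, 0.75])` equals 0.5·ln(4/3) ≈ 0.1438 nats.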

The contributions of the paper are threefold: (1) a principled extension of IPFP to factored BN representations, providing a systematic way to incorporate external probability constraints without altering the network structure; (2) a decomposition strategy (D‑IPFP) that makes the approach scalable to realistic, high‑dimensional BNs; and (3) rigorous convergence proofs and empirical evidence that the method is both theoretically sound and practically effective.

Potential applications include integrating expert knowledge, regulatory requirements, or policy targets into existing probabilistic models, especially in domains where data are scarce and prior specifications must be tuned manually. Future work suggested by the authors includes handling uncertain or soft constraints, developing online versions of D‑IPFP for streaming data, and exploring hybrid schemes that combine constraint‑based adjustment with parameter learning. Overall, the paper offers a valuable toolkit for practitioners who need to reconcile Bayesian network models with external probabilistic specifications while preserving as much of the original model’s information as possible.