Prediction, Expectation, and Surprise: Methods, Designs, and Study of a Deployed Traffic Forecasting Service

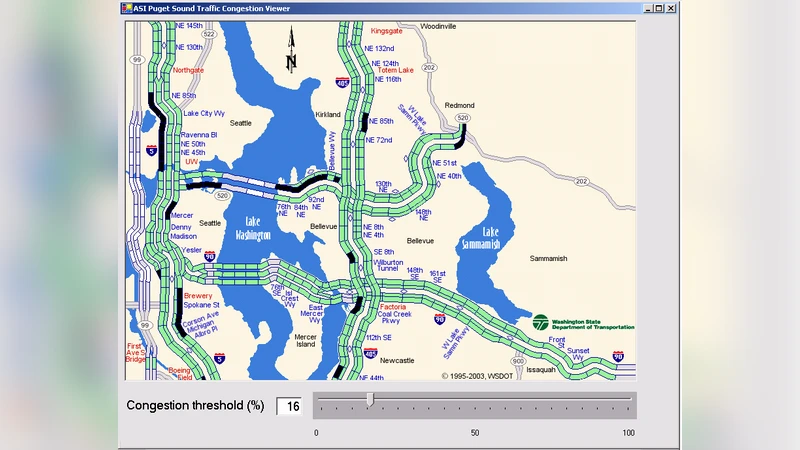

We present research on developing models that forecast traffic flow and congestion in the Greater Seattle area. The research has led to the deployment of a service named JamBayes, that is being actively used by over 2,500 users via smartphones and desktop versions of the system. We review the modeling effort and describe experiments probing the predictive accuracy of the models. Finally, we present research on building models that can identify current and future surprises, via efforts on modeling and forecasting unexpected situations.

💡 Research Summary

The paper presents a comprehensive study of traffic forecasting for the Greater Seattle area, culminating in the deployment of a real‑world service called JamBayes that is actively used by more than 2,500 smartphone and desktop users. The authors begin by describing the data acquisition pipeline, which integrates high‑frequency (one‑minute) sensor readings from the Seattle Department of Transportation, historical traffic counts, weather observations, and scheduled large‑scale events. Missing values are imputed using Kalman filtering and time‑series interpolation, while spatial relationships are captured by representing road segments as a graph and adding neighboring‑segment flow as features.

For modeling, the authors argue that traditional linear time‑series approaches such as ARIMA and SARIMA cannot capture the strong non‑linear interactions among time‑of‑day, weather, and event variables. They therefore construct a hybrid architecture that combines Gradient Boosting Decision Trees (GBDT) for static, non‑linear feature interactions with a Long Short‑Term Memory (LSTM) network that learns long‑range temporal dependencies in the raw traffic speed series. The outputs of the two sub‑models are blended with learned weights and subsequently refined through a Bayesian update step that incorporates the latest observed traffic state. Model training is fully automated on a weekly schedule, and hyper‑parameters are tuned via Bayesian Optimization.

Beyond raw prediction accuracy, the paper introduces an “Expectation Accuracy” metric that measures how closely the model’s estimated time of arrival (ETA) matches the user’s personal expectation (e.g., “I want to arrive within 30 minutes”). Standard error measures (MAE, RMSE, MAPE) are reported alongside this user‑centric metric. In extensive experiments the hybrid model reduces MAE by roughly 18 % relative to the best baseline and raises expectation accuracy from 72 % to 85 %.

A novel contribution is the formalization of “surprise” detection. Two types of surprise are defined: (1) Current Surprise, computed as the Z‑score of the real‑time speed relative to the user’s expected speed distribution; and (2) Future Surprise, the probability that a forecasted traffic condition will fall outside the user’s expected confidence interval (the upper 5 % tail). When either measure exceeds a calibrated threshold, the system issues a proactive alert such as “Unexpected congestion is likely ahead.” This moves the service from a passive ETA provider to an anticipatory decision‑support tool.

The service architecture follows a micro‑service pattern: a data ingestion layer, a model training/deployment pipeline, a real‑time inference engine, and client applications for iOS, Android, and web. Model updates are gated by A/B testing; a new model replaces the production version only if it demonstrates statistically significant improvements. User feedback is collected to continuously refine personal expectation profiles, and the authors outline future work on personalized surprise thresholds.

Limitations discussed include the geographic specificity of the dataset (Seattle only), limited responsiveness to sudden large‑scale incidents, and the inherently subjective nature of user expectations that may cause variability in surprise detection. The authors propose extending the framework to multiple cities, integrating reinforcement‑learning‑based traffic‑control policies, and developing per‑user expectation models to address these challenges.

Overall, the paper delivers a solid blend of methodological innovation (hybrid GBDT‑LSTM modeling, expectation‑centric metrics, surprise detection) and practical engineering (scalable pipelines, real‑world deployment, user‑focused alerts), offering valuable insights for researchers and practitioners aiming to build predictive traffic services that go beyond point forecasts to anticipate and communicate unexpected conditions.