Principles and Overview of Network Steganography

The paper presents basic principles of network steganography, which is a comparatively new research subject in the area of information hiding, followed by a concise overview and classification of network steganographic methods and techniques.

💡 Research Summary

The paper provides a comprehensive introduction to network steganography, a relatively new branch of information hiding that embeds covert data directly into network traffic rather than into static files. It begins by distinguishing steganography from cryptography, emphasizing that while encryption scrambles the content of a message, steganography seeks to conceal the very existence of the message within ordinary communications. Because network traffic is massive, continuous, and often encrypted, embedding hidden data in the transmission process can be highly stealthy.

The authors then map the OSI/TCP‑IP protocol stack onto five layers—physical, data‑link, network, transport, and application—and identify the fields, options, and timing characteristics in each layer that can serve as covert channels. Examples include the IP identification field, TCP sequence and acknowledgment numbers, window size, optional header fields, padding bytes, packet length, and application‑layer constructs such as HTTP custom headers, DNS query types, or SIP parameters.
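As a rough illustration of a header-field channel, the sketch below hides two bytes per packet in the 16-bit IPv4 Identification field. It is a minimal example under stated assumptions, not the paper's method: the function names (`build_ipv4_header`, `embed_in_ip_id`, `extract_from_ip_id`), the documentation addresses, and the TTL/protocol values are all hypothetical, the checksum is left at zero, and nothing is sent on the wire.

```python
import struct
from typing import List


def build_ipv4_header(ident: int, payload_len: int = 0) -> bytes:
    """Pack a minimal 20-byte IPv4 header carrying `ident` in the Identification field.

    Assumption: checksum is left at zero and addresses are documentation
    addresses (192.0.2.0/24); this is for illustration only.
    """
    version_ihl = (4 << 4) | 5          # IPv4, header length of 5 32-bit words
    total_length = 20 + payload_len
    return struct.pack(
        "!BBHHHBBH4s4s",
        version_ihl, 0, total_length,    # version/IHL, TOS, total length
        ident & 0xFFFF, 0,               # Identification, flags/fragment offset
        64, 17, 0,                       # TTL, protocol (UDP), checksum placeholder
        bytes([192, 0, 2, 1]),           # source address
        bytes([192, 0, 2, 2]),           # destination address
    )


def embed_in_ip_id(secret: bytes) -> List[bytes]:
    """Split `secret` into 16-bit chunks and place one chunk in each header."""
    if len(secret) % 2:
        secret += b"\x00"                # pad to a whole number of 16-bit chunks
    return [
        build_ipv4_header(int.from_bytes(secret[i:i + 2], "big"))
        for i in range(0, len(secret), 2)
    ]


def extract_from_ip_id(headers: List[bytes]) -> bytes:
    """Read the Identification field (header bytes 4-5) back out of each header."""
    out = bytearray()
    for header in headers:
        (ident,) = struct.unpack("!H", header[4:6])
        out += ident.to_bytes(2, "big")
    return bytes(out)


headers = embed_in_ip_id(b"hide")
recovered = extract_from_ip_id(headers)
```

Each header carries 16 covert bits, so the capacity of such a channel scales directly with the packet rate of the carrier flow.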

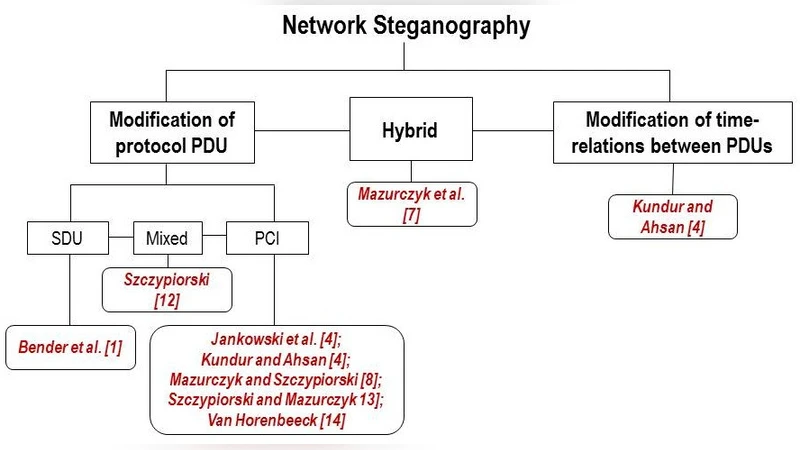

Steganographic techniques are classified along two orthogonal axes: structural modification and temporal modification. Structural modification manipulates packet headers, payload bits, padding, or optional fields without altering the timing of packets. Typical methods are least-significant-bit (LSB) embedding, field reordering, packet-size modulation, and intentional error insertion. Temporal modification, by contrast, encodes information in the timing of packet transmission: delays, inter-packet gaps, packet ordering, retransmission patterns, or traffic bursts. The paper argues that combining both axes yields the highest resistance to detection, because many existing detectors focus on a single dimension.
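The two axes can be contrasted with a small sketch. The structural half overwrites the least significant bit of a numeric header field (here, hypothetical TCP window-size values), while the temporal half maps each bit to a short or long inter-packet gap. The function names, gap durations, and decoding threshold are assumptions chosen for illustration; a real channel would have to cope with network jitter far less predictable than the fixed offsets used here.

```python
from typing import List


def embed_lsb(values: List[int], bits: List[int]) -> List[int]:
    """Structural: overwrite the least significant bit of each value with one covert bit (0 or 1)."""
    return [(v & ~1) | b for v, b in zip(values, bits)]


def extract_lsb(values: List[int]) -> List[int]:
    """Recover the covert bits by reading each value's least significant bit."""
    return [v & 1 for v in values]


def bits_to_gaps(bits: List[int], short: float = 0.02, long: float = 0.06) -> List[float]:
    """Temporal: encode each bit as an inter-packet gap in seconds (0 -> short, 1 -> long)."""
    return [long if b else short for b in bits]


def gaps_to_bits(gaps: List[float], threshold: float = 0.04) -> List[int]:
    """Decode gaps by comparing each one against a threshold between the two nominal gaps."""
    return [1 if g > threshold else 0 for g in gaps]


covert_bits = [0, 1, 1, 0]

# Structural channel: hypothetical window-size values as the carrier field.
window_sizes = [65535, 64240, 29200, 8192]
stego_fields = embed_lsb(window_sizes, covert_bits)

# Temporal channel: the same bits expressed as send delays.
gaps = bits_to_gaps(covert_bits)
```

The structural variant changes each field value by at most one, and the temporal variant leaves packet contents untouched, which is why a detector watching only one dimension sees nothing unusual in the other.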

A taxonomy of existing work is presented, grouping methods into ten categories: (1) header‑field manipulation, (2) packet‑size modulation, (3) padding/option exploitation, (4) flow‑timing alteration, (5) loss/retransmission based schemes, (6) covert channels inside encrypted traffic, (7) multiplexed steganography, (8) protocol‑variant based channels, (9) hybrid cryptographic‑steganographic constructions, and (10) application‑specific protocols. For each category the authors discuss representative papers, implementation complexity, achievable covert bandwidth, stealth (detectability), and compatibility with standard protocol behavior.
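To make the "achievable covert bandwidth" criterion concrete, the following sketch shows one possible form of packet-size modulation (category 2): each packet's payload length encodes a 4-bit symbol as an offset from a base length. The base length of 64 bytes, the 4-bit symbol size, and all function names are assumptions for illustration, not values taken from the paper.

```python
from typing import List


def encode_lengths(data: bytes, base_len: int = 64) -> List[int]:
    """Map each 4-bit nibble of `data` to a payload length of base_len + nibble."""
    lengths = []
    for byte in data:
        lengths.append(base_len + (byte >> 4))     # high nibble first
        lengths.append(base_len + (byte & 0x0F))   # then low nibble
    return lengths


def decode_lengths(lengths: List[int], base_len: int = 64) -> bytes:
    """Recover the bytes by pairing consecutive length offsets back into nibbles."""
    nibbles = [length - base_len for length in lengths]
    return bytes(
        (nibbles[i] << 4) | nibbles[i + 1]
        for i in range(0, len(nibbles) - 1, 2)
    )


def covert_bandwidth_bps(bits_per_packet: int, packets_per_second: float) -> float:
    """Covert bandwidth is simply bits carried per packet times the packet rate."""
    return bits_per_packet * packets_per_second


lengths = encode_lengths(b"\xa5")
decoded = decode_lengths(lengths)
rate = covert_bandwidth_bps(4, 50)
```

Raising the number of bits per packet increases bandwidth but widens the range of packet sizes, which is the bandwidth-versus-stealth trade-off the taxonomy evaluates.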

The discussion highlights two emerging trends. Multiplexed steganography simultaneously employs several covert channels across different layers, making it difficult for a detector that analyzes only one layer to spot anomalies. Covert channels inside encrypted traffic exploit TLS/SSL handshakes, encrypted record lengths, or padding in ciphertexts, thereby bypassing payload‑inspection detectors that rely on clear‑text analysis. Both trends increase the need for multi‑layer, behavior‑based detection frameworks.
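The idea behind multiplexing can be sketched as splitting one covert bit stream across several independent channels, so that each channel carries only a fraction of the message. The round-robin scheme and the function names below are assumptions for illustration; the paper does not prescribe a particular distribution strategy.

```python
from typing import List


def split_round_robin(bits: List[int], n_channels: int) -> List[List[int]]:
    """Deal the bits out to n_channels in turn; channel i gets bits i, i+n, i+2n, ..."""
    return [bits[i::n_channels] for i in range(n_channels)]


def merge_round_robin(shares: List[List[int]]) -> List[int]:
    """Interleave the per-channel shares back into the original bit order."""
    total = sum(len(share) for share in shares)
    n = len(shares)
    return [shares[i % n][i // n] for i in range(total)]


message_bits = [1, 0, 1, 1, 0, 0, 1]

# Three hypothetical carriers, e.g. a header field, packet timing, and record length.
shares = split_round_robin(message_bits, 3)
reassembled = merge_round_robin(shares)
```

An observer monitoring only one of the three carriers sees at most three of the seven bits here, and no single layer reveals the full message.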

Detection techniques are surveyed next. Statistical anomaly detection examines distributions of packet sizes, header bit patterns, or inter-arrival times; however, subtle manipulations often fall within normal variance, so thresholds sensitive enough to catch them also produce high false-positive rates. Machine-learning approaches train classifiers on large traffic corpora to recognize hidden patterns, but the growing prevalence of end-to-end encryption reduces the number of observable features. Protocol-conformance checking validates that fields and options follow the specifications, yet protocol extensions, vendor-specific implementations, and legitimate deviations complicate this method.
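One simple form of statistical anomaly detection compares the distribution of observed inter-arrival times with a baseline from known-clean traffic. The sketch below computes the two-sample Kolmogorov-Smirnov statistic by hand for that purpose. The choice of this statistic, the sample values, the 0.3 threshold, and the function names are all assumptions for illustration; the paper does not name a specific test.

```python
import bisect
from typing import List


def ks_statistic(sample_a: List[float], sample_b: List[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between the two empirical CDFs."""
    a = sorted(sample_a)
    b = sorted(sample_b)
    largest_gap = 0.0
    # The empirical CDFs are step functions, so the maximum gap occurs at a data point.
    for x in a + b:
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        largest_gap = max(largest_gap, abs(cdf_a - cdf_b))
    return largest_gap


def is_suspicious(baseline: List[float], observed: List[float], threshold: float = 0.3) -> bool:
    """Flag a flow whose timing distribution deviates from the baseline by more than `threshold`.

    Assumption: 0.3 is an arbitrary illustrative cutoff, not a calibrated value.
    """
    return ks_statistic(baseline, observed) > threshold


# Hypothetical inter-arrival times in seconds.
baseline_gaps = [0.038, 0.039, 0.040, 0.041, 0.042]
covert_gaps = [0.02, 0.06, 0.02, 0.06]      # bimodal gaps typical of a crude timing channel
benign_gaps = [0.039, 0.040, 0.041, 0.038, 0.042]

covert_score = ks_statistic(baseline_gaps, covert_gaps)
benign_score = ks_statistic(baseline_gaps, benign_gaps)
```

A crude timing channel with widely separated gaps stands out clearly, but a channel that keeps its gaps inside the baseline's natural spread would score much lower, and lowering the threshold to catch it would start flagging ordinary flows as well.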

Finally, the paper outlines open challenges and future research directions. It calls for (1) development of integrated, multi‑layer detection architectures that combine structural and temporal analysis, (2) lightweight real‑time monitoring algorithms suitable for high‑speed networks, (3) standardized benchmarks and datasets for evaluating both steganographic methods and detectors, and (4) policy discussions on the legitimate use and regulation of network steganography. The authors conclude that while network steganography is still in its infancy, its potential to evade traditional security controls makes it a critical area for continued academic and industry attention.