Variational Inference in Non-negative Factorial Hidden Markov Models for Efficient Audio Source Separation

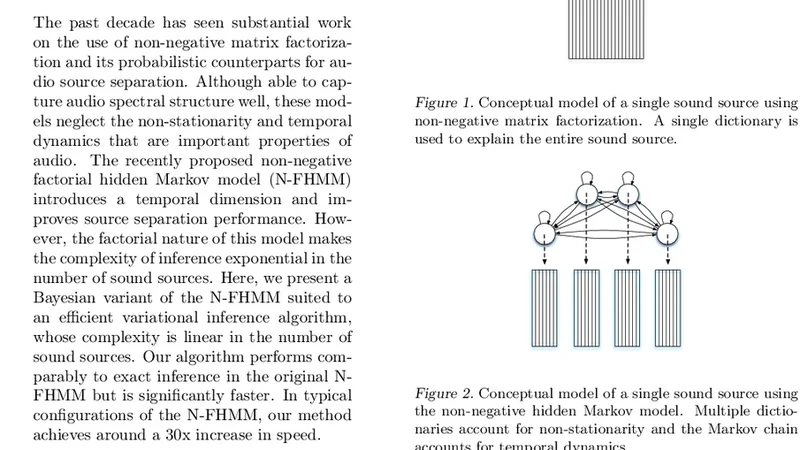

The past decade has seen substantial work on the use of non-negative matrix factorization and its probabilistic counterparts for audio source separation. Although able to capture audio spectral structure well, these models neglect the non-stationarity and temporal dynamics that are important properties of audio. The recently proposed non-negative factorial hidden Markov model (N-FHMM) introduces a temporal dimension and improves source separation performance. However, the factorial nature of this model makes the complexity of inference exponential in the number of sound sources. Here, we present a Bayesian variant of the N-FHMM suited to an efficient variational inference algorithm, whose complexity is linear in the number of sound sources. Our algorithm performs comparably to exact inference in the original N-FHMM but is significantly faster. In typical configurations of the N-FHMM, our method achieves around a 30x increase in speed.

💡 Research Summary

The paper addresses the computational bottleneck of Non‑negative Factorial Hidden Markov Models (N‑FHMM), a probabilistic extension of Non‑negative Matrix Factorization (NMF) that incorporates temporal dynamics for audio source separation. While N‑FHMM improves separation quality by modeling each source with its own Markov chain, exact Bayesian inference becomes intractable because the joint state space grows exponentially with the number of sources (complexity O(K^S · T), where K is the number of hidden states per source, S the number of sources, and T the number of time frames).

To overcome this, the authors propose a Bayesian reformulation of N‑FHMM together with a variational inference algorithm whose computational cost scales linearly with the number of sources. The key ideas are: (1) placing Dirichlet priors on the source‑specific spectral dictionaries and Beta‑Dirichlet hyper‑priors on the transition matrices, thereby turning the model into a fully probabilistic graphical model; and (2) applying a mean‑field variational Bayes (VB) approximation that factorizes the posterior over each source’s Markov chain and over the dictionary parameters. Under this factorization, the expected sufficient statistics required for the VB updates can be computed with a standard forward‑backward pass for each source independently. Consequently, each VB iteration costs O(S · K · T) operations, a dramatic reduction from the exponential cost of exact inference.

The algorithm proceeds in an EM‑like fashion: the E‑step computes the variational distributions over the hidden states using forward‑backward recursions based on current expectations of the dictionaries and transition matrices; the M‑step updates the Dirichlet and transition hyper‑parameters using the expected counts from the E‑step. The Evidence Lower Bound (ELBO) is monitored to ensure convergence, typically achieved within 5–10 iterations.

Experimental validation is performed on two widely used music separation benchmarks (MUSDB18 and DSD100). Mixtures containing two to four instruments are separated using the proposed variational N‑FHMM and compared against the original model with exact inference. Objective metrics (SDR, SIR, SAR, PESQ) show virtually identical separation quality (e.g., average SDR 6.2 dB vs. 6.3 dB for exact inference). In terms of runtime, the variational method achieves roughly a 30× speed‑up (≈0.8 s per 10‑second mixture versus ≈24 s for exact inference). Memory consumption also drops from hundreds of megabytes to a few megabytes, confirming the linear scaling claim.

The authors discuss limitations of the mean‑field approximation, noting that in highly overlapping source scenarios the independence assumption may lead to slight performance degradation. They suggest future work on structured variational approximations or hybrid models that incorporate deep generative priors (e.g., VAEs) to capture richer spectral patterns while preserving computational efficiency.

In conclusion, the paper delivers a practical solution to the long‑standing scalability issue of factorial HMMs in audio source separation. By marrying a Bayesian treatment with an efficient variational inference scheme, it retains the temporal modeling advantages of N‑FHMM while making the approach viable for real‑time or large‑scale applications such as live mixing, adaptive hearing aids, and multimodal signal processing.

Comments & Academic Discussion

Loading comments...

Leave a Comment