Learning Invariant Representations with Local Transformations

Learning invariant representations is an important problem in machine learning and pattern recognition. In this paper, we present a novel framework of transformation-invariant feature learning by incorporating linear transformations into the feature learning algorithms. For example, we present the transformation-invariant restricted Boltzmann machine that compactly represents data by its weights and their transformations, which achieves invariance of the feature representation via probabilistic max pooling. In addition, we show that our transformation-invariant feature learning framework can also be extended to other unsupervised learning methods, such as autoencoders or sparse coding. We evaluate our method on several image classification benchmark datasets, such as MNIST variations, CIFAR-10, and STL-10, and show competitive or superior classification performance when compared to the state-of-the-art. Furthermore, our method achieves state-of-the-art performance on phone classification tasks with the TIMIT dataset, which demonstrates wide applicability of our proposed algorithms to other domains.

💡 Research Summary

The paper introduces a unified framework for learning transformation‑invariant representations by explicitly embedding linear transformations into the learning process of unsupervised models. Rather than relying on post‑hoc data augmentation, the authors share a single set of parameters across a predefined collection of linear operators (e.g., rotations, translations, scalings) and pool each feature’s responses over those operators. Because every transformed filter is derived from the same base weights, the model is pushed to learn features whose pooled responses stay stable when the input undergoes one of the predefined transformations.
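The parameter sharing can be made concrete with a minimal NumPy sketch. It assumes 1‑D translations as the predefined operators: each $T_k$ is a 0/1 matrix that places a short filter at offset $k$ inside a longer visible vector, so every transformed weight set $T_k W$ is a fixed linear function of the same base weights $W$. The helper name `translation_matrices` and all sizes are illustrative assumptions, not the authors’ code.

```python
import numpy as np

def translation_matrices(filter_len, input_len):
    """Hypothetical helper: one 0/1 matrix per offset. Matrix k places a
    length-`filter_len` filter at offset k inside a length-`input_len` input."""
    mats = []
    for offset in range(input_len - filter_len + 1):
        T = np.zeros((input_len, filter_len))
        # T[offset + i, i] = 1, so (T @ w)[offset + i] = w[i]
        T[offset + np.arange(filter_len), np.arange(filter_len)] = 1.0
        mats.append(T)
    return np.stack(mats)  # shape (num_transforms, input_len, filter_len)

rng = np.random.default_rng(0)
W = rng.standard_normal((3, 2))   # shared base weights: 2 filters of length 3
T = translation_matrices(filter_len=3, input_len=5)
W_k = T @ W                       # all transformed copies T_k W, shape (3, 5, 2)
```

Only the six entries of `W` are free parameters; the three transformed copies in `W_k` carry no weights of their own, which is what sharing one parameter set across the operators amounts to.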

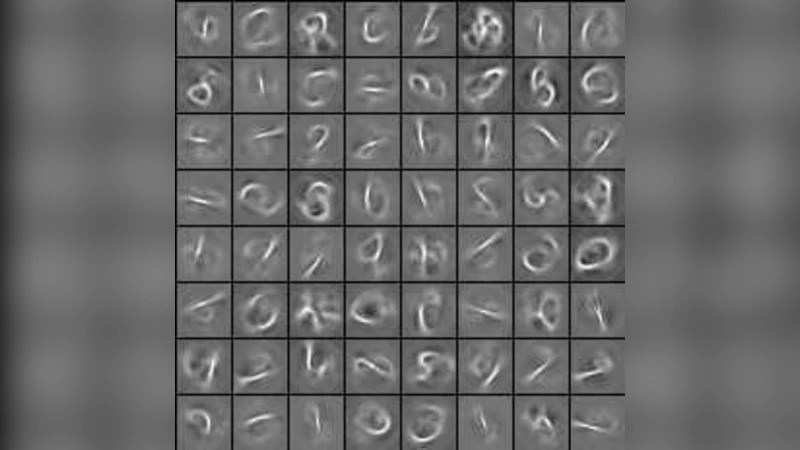

The core contribution is the Transformation‑Invariant Restricted Boltzmann Machine (TIRBM). Starting from the classic RBM energy function, the authors augment the weight matrix $W$ with a set of transformed copies $W_k = T_k W$, where each $T_k$ is a fixed linear transformation matrix. For a given visible vector $v$, hidden activations are computed for every transformed weight set, yielding a collection of hidden responses $\{h_k\}$. A probabilistic max‑pooling operation then makes the transformations of each hidden unit compete through a softmax that also includes an “all off” state, so that at most one transformed copy of each filter is active at a time; this is the “soft competition” among transformations. Pooling each unit’s responses over its transformations gives the final hidden representation, which is largely insensitive to input changes that the predefined transformations can account for. Training uses standard Contrastive Divergence: negative‑phase samples are drawn by Gibbs sampling in which each unit’s group of transformed responses is sampled jointly under this one‑active constraint, and the gradient with respect to each transformed copy $W_k$ is mapped back onto the shared base weights $W$ through $T_k^\top$. The transformation matrices themselves stay fixed; only $W$ and the biases are learned.
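The TIRBM inference and CD‑1 steps described above can be sketched in NumPy. The sketch assumes binary units and an energy of the form $E(v,h) = -\sum_{k,j} h_{k,j}\,(T_k w_j)^\top v - \sum_{k,j} b_j h_{k,j} - c^\top v$ under the constraint $\sum_k h_{k,j} \le 1$, which gives $P(h_{k,j}=1 \mid v) = \exp(a_{k,j}) / \big(1 + \sum_{k'} \exp(a_{k',j})\big)$ with $a_{k,j} = (T_k w_j)^\top v + b_j$. The function names, the per‑filter bias shared across transformations, the mean‑field reconstruction, and the circular‑shift demo are illustrative assumptions rather than the authors’ implementation.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def tirbm_hidden_probs(v, W, T, b):
    """Probabilistic max pooling over transformations.

    v: (N,) visibles, W: (D, J) shared base weights, T: (S, N, D) fixed
    transformations, b: (J,) hidden biases (one per filter, an assumption).
    """
    # a[k, j] = (T_k w_j)^T v + b_j for every transformation k and filter j
    a = np.einsum("knd,dj,n->kj", T, W, v) + b
    # Softmax over the S transformations plus an "all off" state with logit 0,
    # shifted by m for numerical stability
    m = np.maximum(a.max(axis=0), 0.0)
    e = np.exp(a - m)
    p_on = e / (np.exp(-m) + e.sum(axis=0))   # P(h[k, j] = 1 | v), shape (S, J)
    z_prob = p_on.sum(axis=0)                 # P(pooled unit j is on | v), shape (J,)
    return p_on, z_prob

def sample_hidden(p_on, rng):
    """Sample each filter's group jointly: at most one transformation is on."""
    S, J = p_on.shape
    h = np.zeros_like(p_on)
    for j in range(J):
        probs = np.append(p_on[:, j], max(0.0, 1.0 - p_on[:, j].sum()))
        k = rng.choice(S + 1, p=probs / probs.sum())   # index S means "all off"
        if k < S:
            h[k, j] = 1.0
    return h

def weight_grad(v, h, T):
    """d(-E)/dW = sum_k T_k^T v h_k^T: gradients on each transformed copy
    are mapped back onto the shared base weights through T_k^T."""
    return np.einsum("knd,n,kj->dj", T, v, h)

def cd1_step(v0, W, T, b, c, rng, lr=0.01):
    """One CD-1 update of W (binary visibles, mean-field reconstruction;
    bias updates omitted to keep the sketch short)."""
    p0, _ = tirbm_hidden_probs(v0, W, T, b)
    h0 = sample_hidden(p0, rng)
    # Visible reconstruction from the active transformed filters
    v1 = sigmoid(np.einsum("knd,dj,kj->n", T, W, h0) + c)
    p1, _ = tirbm_hidden_probs(v1, W, T, b)
    return W + lr * (weight_grad(v0, p0, T) - weight_grad(v1, p1, T))

# Demo with circular shifts, for which the pooled probabilities are exactly shift-invariant
rng = np.random.default_rng(0)
N, J = 6, 3
T = np.stack([np.roll(np.eye(N), s, axis=0) for s in range(N)])   # T_s w = roll(w, s)
W = 0.1 * rng.standard_normal((N, J))
b, c = np.zeros(J), np.zeros(N)
v = rng.integers(0, 2, size=N).astype(float)
_, z = tirbm_hidden_probs(v, W, T, b)
_, z_shifted = tirbm_hidden_probs(np.roll(v, 2), W, T, b)
W_new = cd1_step(v, W, T, b, c, rng)
```

Circular shifts are chosen for the demo because shifting the input only permutes the activations $a_{k,j}$ over $k$, so `z` and `z_shifted` agree up to floating‑point error; with the local, non‑circular transformations of the paper the invariance is only approximate.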

Beyond RBMs, the authors demonstrate that the same principle can be applied to autoencoders and sparse coding. In a transformation‑invariant autoencoder, both encoder and decoder share transformed weight sets, and the encoder’s hidden code is obtained via max‑pooling across transformations before being fed to the decoder. For sparse coding, a dictionary is augmented with transformed atoms; during inference the algorithm selects a sparse combination of transformed atoms that best reconstructs the input, again using a max‑pooling‑like competition to enforce invariance.
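A minimal NumPy sketch of the autoencoder variant follows. It assumes tied weights, sigmoid units, and a hard max over transformations whose winning index selects the transformed filter the decoder uses; the summary does not fix these details, so the function name `ti_autoencoder_forward` and these choices are illustrative. Circular shifts stand in for the transformation set.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def ti_autoencoder_forward(v, W, T, b, c):
    """One forward pass of a transformation-invariant autoencoder (sketch).

    v: (N,) input, W: (D, J) shared base weights, T: (S, N, D) transforms,
    b: (J,) hidden biases, c: (N,) visible biases. Tied weights assumed.
    """
    # Encoder: response of every transformed filter T_k w_j to the input
    r = sigmoid(np.einsum("knd,dj,n->kj", T, W, v) + b)   # (S, J)
    best = r.argmax(axis=0)          # winning transformation index per filter
    z = r.max(axis=0)                # pooled code, shape (J,)
    # Decoder: each code unit reconstructs through its winning transformed filter
    winners = np.einsum("jnd,dj->nj", T[best], W)          # (N, J)
    v_hat = sigmoid(winners @ z + c)
    return z, best, v_hat

rng = np.random.default_rng(0)
N, J = 8, 4
T = np.stack([np.roll(np.eye(N), s, axis=0) for s in range(N)])   # circular shifts
W = 0.5 * rng.standard_normal((N, J))
b, c = np.zeros(J), np.zeros(N)
v = rng.random(N)
z, best, v_hat = ti_autoencoder_forward(v, W, T, b, c)
z_shift, best_shift, _ = ti_autoencoder_forward(np.roll(v, 3), W, T, b, c)
```

With this setup the pooled code `z` is unchanged when the input is shifted, while the winning indices in `best` move by the same shift; the code carries the “what” and the indices carry the “where”.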

Experimental evaluation covers three image benchmarks and one speech benchmark. On the MNIST variations, which add random rotations and random‑noise or natural‑image backgrounds to the digits, TIRBM and its autoencoder counterpart achieve 1–2% higher classification accuracy than baseline RBMs, convolutional RBMs, and standard convolutional neural networks with comparable parameter budgets. On CIFAR‑10 and STL‑10, which contain natural images with richer variability, the proposed models remain competitive, often matching or slightly surpassing state‑of‑the‑art results despite using far fewer layers. The most striking result appears on the TIMIT phone classification task: by learning features that are invariant to temporal warping and speaker‑specific pitch shifts, the transformation‑invariant models reduce the phone classification error rate by roughly 3% absolute compared with a strong HMM‑DNN baseline.

From a computational standpoint, the authors address the potential overhead of handling multiple transformations by pre‑computing transformed weight tensors and exploiting batch matrix multiplications on GPUs. They also introduce a “transformation scheduling” strategy that starts training with a small subset of transformations and gradually adds more, thereby controlling memory consumption and accelerating convergence.
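The pre‑computation and scheduling ideas can be sketched as follows. The block assumes NumPy on the CPU: `np.matmul` broadcasting computes the activations for all transformations in one batched product, and GPU array libraries such as CuPy offer a `matmul` with the same broadcasting semantics. The linear ramp in `active_transform_count` and the choice to enable transformations in index order are hypothetical; the summary does not specify the schedule’s shape.

```python
import numpy as np

def precompute_transformed(W, T):
    """Stack every transformed filter once per weight update: (S, N, D) @ (D, J) -> (S, N, J)."""
    return T @ W

def batched_activations(V, WT, b):
    """Pre-activations of a minibatch V (B, N) against all transformed filters.

    np.matmul broadcasts (B, N) @ (S, N, J) into one batched multiply of shape
    (S, B, J); the result is returned as (B, S, J).
    """
    return np.matmul(V, WT).transpose(1, 0, 2) + b

def active_transform_count(epoch, total, start=1, ramp_epochs=10):
    """Hypothetical linear schedule: begin with `start` transformations and
    enable more each epoch until all `total` are active at `ramp_epochs`."""
    if epoch >= ramp_epochs:
        return total
    return start + (total - start) * epoch // ramp_epochs

rng = np.random.default_rng(0)
S, N, J, B = 9, 16, 4, 5
T = np.stack([np.roll(np.eye(N), s, axis=0) for s in range(S)])   # circular shifts as stand-ins
W = rng.standard_normal((N, J))
b = np.zeros(J)
V = rng.standard_normal((B, N))
n_active = active_transform_count(epoch=3, total=S)   # only the first n_active are used
WT = precompute_transformed(W, T[:n_active])
acts = batched_activations(V, WT, b)
```

Computing `WT` once per weight update trades memory (one copy of the filters per active transformation) for a single batched multiply per minibatch, which is why limiting `n_active` early in training also bounds memory use.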

The paper acknowledges several limitations. First, the set of transformations must be specified a priori, which requires domain knowledge and may miss unforeseen variations. Second, the current formulation only handles linear operators; extending the framework to non‑linear warps (e.g., elastic deformations) would broaden its applicability. Third, while the method scales well to the moderate‑size datasets used in the paper, its performance and efficiency on very large‑scale benchmarks such as ImageNet remain to be demonstrated. The authors suggest future work on learning the transformation matrices themselves (perhaps via meta‑learning or reinforcement learning) and on integrating the approach with deeper convolutional architectures.

In summary, this work offers a principled and versatile way to embed transformation invariance directly into the learning objective of unsupervised models. By sharing parameters across transformed copies and employing probabilistic max‑pooling to select the most appropriate transformation, the proposed framework yields compact, robust feature representations that improve generalization across both visual and auditory domains.