Directed Time Series Regression for Control

We propose directed time series regression, a new approach to estimating parameters of time-series models for use in certainty equivalent model predictive control. The approach combines merits of least squares regression and empirical optimization. Through a computational study involving a stochastic version of a well-known inverted pendulum balancing problem, we demonstrate that directed time series regression can generate significant improvements in controller performance over either of the aforementioned alternatives.

💡 Research Summary

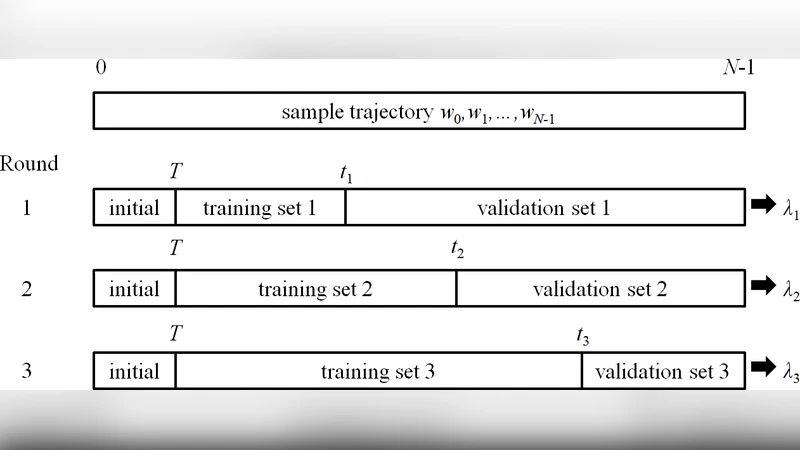

The paper introduces a novel parameter-estimation technique called Directed Time Series Regression (DTR) for use within certainty-equivalent Model Predictive Control (MPC). The predictive model in MPC is traditionally fitted in one of two ways. Least-Squares (LS) regression minimizes prediction error but does not directly address the control objective. Empirical Optimization (EO) directly minimizes simulated control cost but suffers from overfitting and a high computational burden when data are limited. DTR bridges these two extremes. It initializes the model parameters with LS and then iteratively moves them along the negative gradient of the simulated control cost that EO minimizes. The update rule is θ_new = θ_old − α∇J(θ_old), where J(θ) is the simulated MPC cost and α is a step size tuned automatically on a validation set. This scheme preserves the statistical stability of LS while adding the objective-driven guidance of EO. It thereby avoids both the overfitting of pure EO and the suboptimal control performance of pure LS.
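The update rule θ_new = θ_old − α∇J(θ_old), starting from an LS fit, can be illustrated on a toy problem. The following is a minimal sketch under assumptions that do not come from the paper. The plant is a hypothetical scalar system with illustrative constants, and a one-step certainty-equivalent controller stands in for the ten-step MPC. The gradient ∇J is estimated by central finite differences. At each iteration α is chosen from a small candidate set by validation cost, which is only one possible reading of "tuned on a validation set". Names such as `directed_regression` and `simulated_cost` are hypothetical.

```python
import random

# Hypothetical scalar plant (NOT the paper's pendulum): x[t+1] = A*x + B*u + w.
A_TRUE, B_TRUE, NOISE_STD = 1.2, 0.5, 0.1
U_MAX = 1.0  # assumed input saturation |u| <= U_MAX
R = 0.1      # assumed weight on control effort in the stage cost


def controller(theta, x):
    """Certainty-equivalent one-step controller: choose u so that the model's
    predicted next state a*x + b*u is zero, then saturate."""
    a, b = theta
    u = -a * x / b if abs(b) > 1e-6 else 0.0
    return max(-U_MAX, min(U_MAX, u))


def simulated_cost(theta, noises, x0=1.0):
    """J(theta): average stage cost x^2 + R*u^2 when the controller built from
    theta runs on the true plant under a fixed disturbance sequence."""
    x, total = x0, 0.0
    for w in noises:
        u = controller(theta, x)
        total += x * x + R * u * u
        x = A_TRUE * x + B_TRUE * u + w
    return total / len(noises)


def least_squares_fit(xs, us, xs_next):
    """OLS estimate of (a, b) in x_next ~ a*x + b*u via the 2x2 normal equations."""
    sxx = sum(x * x for x in xs)
    sxu = sum(x * u for x, u in zip(xs, us))
    suu = sum(u * u for u in us)
    sxy = sum(x * y for x, y in zip(xs, xs_next))
    suy = sum(u * y for u, y in zip(us, xs_next))
    det = sxx * suu - sxu * sxu
    return ((sxy * suu - suy * sxu) / det, (suy * sxx - sxy * sxu) / det)


def fd_gradient(theta, noises, h=1e-4):
    """Central finite-difference estimate of grad J(theta)."""
    grad = []
    for i in range(len(theta)):
        up, down = list(theta), list(theta)
        up[i] += h
        down[i] -= h
        grad.append((simulated_cost(up, noises) - simulated_cost(down, noises)) / (2 * h))
    return grad


def directed_regression(theta_ls, train_noises, val_noises, steps=20,
                        alphas=(0.0, 0.1, 1.0, 10.0)):
    """Start from the LS fit and apply theta <- theta - alpha * grad J(theta).
    alpha is picked each iteration by validation cost; alpha = 0 is a candidate,
    so the validation cost can never increase."""
    theta = list(theta_ls)
    for _ in range(steps):
        grad = fd_gradient(theta, train_noises)
        candidates = [[t - alpha * g for t, g in zip(theta, grad)] for alpha in alphas]
        theta = min(candidates, key=lambda c: simulated_cost(c, val_noises))
    return theta


rng = random.Random(0)
# Exploratory transitions for the regression step.
xs = [rng.uniform(-1, 1) for _ in range(200)]
us = [rng.uniform(-1, 1) for _ in range(200)]
xs_next = [A_TRUE * x + B_TRUE * u + rng.gauss(0, NOISE_STD) for x, u in zip(xs, us)]
# Separate disturbance sequences for the gradient and for choosing alpha.
train_noises = [rng.gauss(0, NOISE_STD) for _ in range(200)]
val_noises = [rng.gauss(0, NOISE_STD) for _ in range(200)]

theta_ls = least_squares_fit(xs, us, xs_next)
theta_dtr = directed_regression(theta_ls, train_noises, val_noises)
print("LS :", theta_ls, simulated_cost(theta_ls, val_noises))
print("DTR:", theta_dtr, simulated_cost(theta_dtr, val_noises))
```

Because α = 0 is among the candidates, a step is accepted only if it lowers the validation cost. The sketch therefore never does worse than LS on the validation disturbances, although this says nothing about performance on fresh data.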

To evaluate DTR, the authors conduct a computational study on a stochastic inverted‑pendulum balancing task, a classic benchmark in control research. The pendulum dynamics are linearized, subject to Gaussian disturbances, and controlled by an MPC with a ten‑step prediction horizon, input saturation, and state constraints. Four methods are compared: pure LS, pure EO, DTR, and a no‑control baseline. Performance metrics include average control cost, state‑tracking error, control‑input variability, and computational time.
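The core loop of such a study can be sketched as a certainty-equivalent controller designed from a model but run on the true plant. This minimal sketch rests on assumptions that are not taken from the paper. The pendulum is torque-driven rather than cart-mounted and is discretized with an Euler step. The weights Q and R are chosen for illustration, and Gaussian disturbances act only on angular velocity. Input saturation is handled by clipping the unconstrained ten-step receding-horizon input instead of solving a constrained QP, and state constraints are omitted. All function names are hypothetical.

```python
import random


def matmul(X, Y):
    """Product of two matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def transpose(X):
    return [list(row) for row in zip(*X)]


def madd(X, Y, scale=1.0):
    """Elementwise X + scale * Y."""
    return [[x + scale * y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def pendulum_matrices(g_over_l, inv_inertia, dt=0.05):
    """Euler discretization of the linearized upright pendulum
    theta'' = g_over_l * theta + inv_inertia * u, with state [theta, omega]."""
    A = [[1.0, dt], [g_over_l * dt, 1.0]]
    B = [[0.0], [inv_inertia * dt]]
    return A, B


def receding_horizon_gain(A, B, Q, R, horizon=10):
    """Backward Riccati recursion over the prediction horizon of the
    unconstrained finite-horizon problem; returns the first-step gain K (1x2).
    For a time-invariant model this gain is the same at every time step."""
    P, K = Q, None
    for _ in range(horizon):
        BtP = matmul(transpose(B), P)
        S = R + matmul(BtP, B)[0][0]
        K = [[v / S for v in matmul(BtP, A)[0]]]
        AtP = matmul(transpose(A), P)
        P = madd(madd(Q, matmul(AtP, A)), matmul(matmul(AtP, B), K), -1.0)
    return K


def average_cost(model, plant, steps=400, u_max=5.0, noise_std=0.02, seed=0):
    """Certainty-equivalent control: the gain comes from the *model*, but the
    closed loop runs on the *plant* with Gaussian disturbances on omega."""
    Q = [[1.0, 0.0], [0.0, 0.1]]  # diagonal state weight (assumed)
    R = 0.01                      # input weight (assumed)
    K = receding_horizon_gain(*model, Q, R)
    A, B = plant
    rng = random.Random(seed)
    x = [0.1, 0.0]  # assumed initial tilt of 0.1 rad, at rest
    total = 0.0
    for _ in range(steps):
        u = -(K[0][0] * x[0] + K[0][1] * x[1])
        u = max(-u_max, min(u_max, u))  # input saturation by clipping
        total += Q[0][0] * x[0] ** 2 + Q[1][1] * x[1] ** 2 + R * u * u
        x = [A[0][0] * x[0] + A[0][1] * x[1] + B[0][0] * u,
             A[1][0] * x[0] + A[1][1] * x[1] + B[1][0] * u + rng.gauss(0.0, noise_std)]
    return total / steps


plant = pendulum_matrices(g_over_l=9.81, inv_inertia=1.0)
model = pendulum_matrices(g_over_l=9.5, inv_inertia=1.1)  # hypothetical misestimated model
print("cost with exact model:", average_cost(plant, plant))
print("cost with misestimated model:", average_cost(model, plant))
```

The gain is computed from `model` but evaluated on `plant`. As a result, `average_cost(model, plant)` plays the role of a simulated cost J(θ) whose argument θ is the set of model parameters. Such a cost is what EO and DTR would adjust those parameters against.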

Results show that DTR reduces the average control cost by roughly 15% relative to LS and by about 8% relative to EO. State-tracking error improves by more than 20% over LS and modestly over EO. EO overfits severely when the training set is small (≤200 samples), which leads to large cost spikes on test data, whereas DTR remains robust. In terms of runtime, EO requires roughly 2.5 times the computation time of LS, while DTR adds only about 10% overhead relative to LS. This makes DTR suitable for near-real-time applications.

Additional experiments demonstrate that DTR retains a degree of robustness when the assumed model structure is misspecified, because the cost-gradient-driven updates automatically compensate for modeling errors and limit their impact on the control objective. The authors also explore using DTR as a pre-training step for reinforcement-learning policies and observe that learning proceeds about 30% faster.

The paper acknowledges two limitations: the current formulation is restricted to linear time-series models, and the choice of the step size α relies on a simple validation-set heuristic. Future work will extend DTR to nonlinear and deep-learning-based predictors, develop theoretically grounded adaptive learning-rate schedules, and implement lightweight versions suitable for embedded hardware. Overall, DTR offers a compelling blend of statistical efficiency and control-oriented optimality, promising significant performance gains for MPC in stochastic environments.
