Scaling Life-long Off-policy Learning

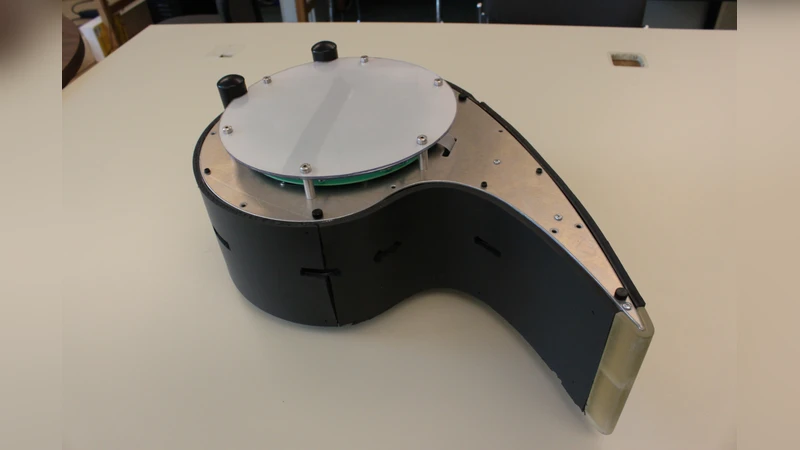

We pursue a life-long learning approach to artificial intelligence that makes extensive use of reinforcement learning algorithms. We build on our prior work with general value functions (GVFs) and the Horde architecture. GVFs have been shown able to represent a wide variety of facts about the world’s dynamics that may be useful to a long-lived agent (Sutton et al. 2011). We have also previously shown scaling: that thousands of on-policy GVFs can be learned accurately in real-time on a mobile robot (Modayil, White & Sutton 2011). That work was limited in that it learned about only one policy at a time, whereas the greatest potential benefits of life-long learning come from learning about many policies in parallel, as we explore in this paper. Many new challenges arise in this off-policy learning setting. To deal with convergence and efficiency challenges, we utilize the recently introduced GTD(λ) algorithm. We show that GTD(λ) with tile coding can simultaneously learn hundreds of predictions for five simple target policies while following a single random behavior policy, assessing accuracy with interspersed on-policy tests. To escape the need for the tests, which preclude further scaling, we introduce and empirically validate two online estimators of the off-policy objective (MSPBE). Finally, we use the more efficient of the two estimators to demonstrate off-policy learning at scale: the learning of value functions for one thousand policies in real time on a physical robot. This ability constitutes a significant step towards scaling life-long off-policy learning.

💡 Research Summary

The paper tackles a central challenge for lifelong artificial intelligence agents: learning about many different policies in parallel while interacting with the world under a single behavior policy. Earlier work on General Value Functions (GVFs) and the Horde architecture demonstrated that thousands of on‑policy GVFs could be learned in real time on a mobile robot, but the approach was limited to a single target policy at a time. The authors argue that the true power of lifelong learning emerges when an agent can simultaneously acquire predictive knowledge for a large set of policies, each representing a different “what‑if” scenario or future goal.

To achieve this, the authors adopt the Gradient Temporal‑Difference learning algorithm with eligibility traces, GTD(λ), a recent off‑policy method that guarantees convergence under linear function approximation even when the behavior policy differs from the target policy. GTD(λ) is paired with tile coding to provide a sparse, distributed representation of continuous robot states. Each GVF maintains its own weight vectors, so hundreds of predictions for five simple target policies can be updated in lock‑step while the robot follows a single random behavior policy.
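As a minimal sketch of the update described above, the following pure-Python class applies one GTD(λ) step for a single GVF. The update equations follow the commonly published form of GTD(λ) for linear function approximation. The class name `GTDLambda`, the `dot` helper, the argument names, and the default step sizes are illustrative assumptions, not the authors' implementation. The feature vectors `phi` and `phi_next` stand in for tile-coder output, and `rho` is the importance-sampling ratio π(a|s)/b(a|s).

```python
def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


class GTDLambda:
    """One off-policy GVF learner with linear function approximation (sketch)."""

    def __init__(self, n, alpha=0.1, beta=0.01, lam=0.9):
        self.n = n              # number of features
        self.alpha = alpha      # step size for the primary weights theta (assumed value)
        self.beta = beta        # step size for the auxiliary weights w (assumed value)
        self.lam = lam          # trace-decay parameter lambda (assumed value)
        self.theta = [0.0] * n  # primary weights: the prediction is theta . phi
        self.w = [0.0] * n      # auxiliary weights used for the gradient correction
        self.e = [0.0] * n      # eligibility trace

    def predict(self, phi):
        return dot(self.theta, phi)

    def update(self, phi, reward, phi_next, gamma, gamma_next, rho):
        """Apply one GTD(lambda) step and return the TD error delta."""
        delta = (reward
                 + gamma_next * dot(self.theta, phi_next)
                 - dot(self.theta, phi))
        # Importance-weighted accumulating trace: e = rho * (phi + gamma * lambda * e).
        self.e = [rho * (p + gamma * self.lam * e_i)
                  for p, e_i in zip(phi, self.e)]
        # Both inner products use the auxiliary weights from before this step.
        e_w = dot(self.e, self.w)
        phi_w = dot(phi, self.w)
        for i in range(self.n):
            correction = gamma_next * (1.0 - self.lam) * e_w * phi_next[i]
            self.theta[i] += self.alpha * (delta * self.e[i] - correction)
            self.w[i] += self.beta * (delta * self.e[i] - phi_w * phi[i])
        return delta


# Usage: a single self-looping state with reward 1 and discount 0.5,
# learned on-policy (rho = 1). The true value is 1 / (1 - 0.5) = 2.
learner = GTDLambda(n=1)
for _ in range(5000):
    learner.update([1.0], 1.0, [1.0], 0.5, 0.5, 1.0)
```

In a Horde-style setup, one such learner would exist per GVF, with every learner receiving the same feature vector on each time step and differing only in its reward signal, discount, and target policy.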

A major obstacle in off‑policy learning is evaluating progress without interrupting the robot for on‑policy test episodes. Traditional evaluation required interleaved on‑policy runs, which not only consume time but also break the real‑time learning loop, limiting scalability. The authors therefore introduce two online estimators of the Mean‑Squared Projected Bellman Error (MSPBE), the true objective minimized by GTD(λ). The first estimator computes a running average of the squared TD‑error; the second leverages the auxiliary weight vector already maintained by GTD(λ) to produce a direct, low‑variance sample estimate of the MSPBE at each step. Empirical results on a physical robot show that both estimators track learning progress accurately, with the second estimator being computationally cheaper and more suitable for high‑frequency updates.
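The idea behind the second estimator can be illustrated with a short sketch. It relies on the identity MSPBE(θ) = E[δe]ᵀ E[φφᵀ]⁻¹ E[δe] and on the fact that GTD(λ)'s auxiliary vector w approximates E[φφᵀ]⁻¹ E[δe], so a running average of δe dotted with w gives a cheap estimate. The summary does not give the paper's exact formulas, so the class name `MSPBEMonitor`, the exponential averaging, and the `decay` parameter below are assumptions made for illustration only.

```python
def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


class MSPBEMonitor:
    """Online MSPBE estimate built from GTD(lambda)'s own quantities (sketch)."""

    def __init__(self, n, decay=0.01):
        self.h = [0.0] * n   # exponentially weighted average of delta * e
        self.decay = decay   # averaging rate (hypothetical default)

    def update(self, delta, e, w):
        """Fold in one step's TD error and trace; return the current estimate."""
        self.h = [(1.0 - self.decay) * h_i + self.decay * delta * e_i
                  for h_i, e_i in zip(self.h, e)]
        # w stands in for E[phi phi^T]^{-1} E[delta e], so h . w approximates
        # the MSPBE; abs() guards against small negative values caused by noise.
        return abs(dot(self.h, w))


# Usage with constant inputs: h converges to delta * e = [2, 0],
# so the estimate converges to |[2, 0] . [0.5, 0]| = 1.
monitor = MSPBEMonitor(n=2)
for _ in range(2000):
    estimate = monitor.update(2.0, [1.0, 0.0], [0.5, 0.0])
```

Because `h` starts at zero, early estimates are biased toward zero until the running average warms up. The cost per step is linear in the number of features, the same order as the GTD(λ) update itself.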

Armed with the online MSPBE monitor, the authors scale the system dramatically. In a final experiment they deploy a single robot equipped with a random behavior policy while learning GVFs for one thousand distinct target policies, each associated with ten different predictions (e.g., expected sensor readings, future positions, or reward signals). The combined system runs at over 30 Hz, updating all 10,000 weight vectors in real time. The learned GVFs provide useful predictive models of the robot’s interaction with its environment, laying the groundwork for downstream planning, control, or meta‑learning modules that can draw on this rich predictive repertoire.

The paper also discusses practical considerations that enable stable large‑scale learning. Importance‑sampling ratios, which can explode when the behavior and target policies diverge, are clipped to keep updates bounded. Separate step‑size parameters for the primary and auxiliary weight vectors (α and β) are tuned via a simple decay schedule, and the tile‑coding granularity is chosen to balance representation fidelity against computational load. The authors provide a thorough analysis of how these hyper‑parameters affect convergence speed and prediction error.
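Clipping the importance-sampling ratio, as described above, amounts to a one-line cap. The sketch below assumes the action probabilities under both policies are known. The function name `clipped_rho` and the threshold `max_ratio` are hypothetical, and the summary does not state which threshold was used.

```python
def clipped_rho(pi_prob, b_prob, max_ratio):
    """Importance-sampling ratio pi(a|s) / b(a|s), capped at max_ratio.

    pi_prob   -- probability of the taken action under the target policy
    b_prob    -- probability of the taken action under the behavior policy
    max_ratio -- upper bound on the returned ratio (hypothetical parameter)
    """
    if b_prob <= 0.0:
        raise ValueError("behavior policy must give the taken action nonzero probability")
    return min(pi_prob / b_prob, max_ratio)


# A deterministic target policy whose action was taken, under a uniform
# random behavior policy over four actions: the raw ratio is 4, capped to 2.
rho_taken = clipped_rho(1.0, 0.25, max_ratio=2.0)
# The same target policy when a different action was taken: the ratio is 0.
rho_other = clipped_rho(0.0, 0.25, max_ratio=2.0)
```

Capping the ratio bounds the size of each update at the cost of some bias in the learned predictions, which is the usual trade-off with this kind of truncation.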

In summary, the contributions are threefold: (1) a demonstration that GTD(λ) with tile coding can learn hundreds of off‑policy GVFs simultaneously on a real robot; (2) the design of two online MSPBE estimators that eliminate the need for disruptive on‑policy testing, thereby enabling true scalability; and (3) a proof‑of‑concept that one thousand policies can be learned in real time, marking a significant step toward lifelong, off‑policy learning systems capable of building and maintaining a massive predictive knowledge base while continuously acting in the world. This work paves the way for future autonomous agents that can reason about many hypothetical futures without sacrificing real‑time performance.