Discrete Elastic Inner Vector Spaces with Application in Time Series and Sequence Mining

This paper proposes a framework dedicated to the construction of what we call a discrete elastic inner product, which allows one to embed sets of non-uniformly sampled multivariate time series or sequences of varying lengths into inner product space structures. This framework is based on a recursive definition that covers the case of multiple embedded time elastic dimensions. We prove that such inner products exist in our general framework and show how a simple instance of this inner product class operates on some prospective applications, while generalizing the Euclidean inner product. Classification experiments on time series and symbolic sequence datasets demonstrate the benefits that we can expect by embedding time series or sequences into elastic inner spaces rather than into classical Euclidean spaces. These experiments show good accuracy when compared to the Euclidean distance or even dynamic programming algorithms, while maintaining a linear algorithmic complexity at the exploitation stage, although a quadratic indexing phase is required beforehand.

💡 Research Summary

The paper introduces a novel mathematical framework called the Elastic Inner Product (EIP) for embedding non‑uniformly sampled multivariate time series and sequences of varying lengths into inner‑product spaces. Traditional similarity measures such as Euclidean distance ignore temporal distortions, while Dynamic Time Warping (DTW) handles warping but incurs quadratic computational cost for each comparison, making it unsuitable for large‑scale or real‑time applications. EIP addresses these shortcomings by defining a recursive alignment between two sequences that incorporates a matching function φ and a weighting function ψ at each alignment step. The recursion considers three possible transitions—diagonal (match), horizontal (skip in the first sequence), and vertical (skip in the second)—and computes a partial inner product as φ(i,j)·ψ(i,j). The authors prove that the resulting function satisfies symmetry, positive‑definiteness, and linearity, thereby constituting a valid inner product.
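The recursion described above can be illustrated with a minimal sketch. It assumes a product matching function φ(i,j) = a_i·b_j, a Gaussian weighting ψ(i,j) = exp(−ν(i−j)²) on sample indices, and an equal 1/3 averaging of the three transitions. These choices, the parameter `nu`, and the function name are illustrative assumptions, not the paper's definition.

```python
import math

def elastic_inner_product(a, b, nu=0.1):
    """Illustrative elastic recursion over two scalar sequences.

    Assumed for this sketch only: phi is the product of the two samples,
    psi is a Gaussian of the index gap, and the diagonal, vertical and
    horizontal predecessors are averaged with equal weights.
    """
    p, q = len(a), len(b)
    # k[i][j] holds the value for the prefixes a[:i] and b[:j];
    # row 0 and column 0 (empty prefixes) stay at zero.
    k = [[0.0] * (q + 1) for _ in range(p + 1)]
    for i in range(1, p + 1):
        for j in range(1, q + 1):
            phi = a[i - 1] * b[j - 1]            # matching term
            psi = math.exp(-nu * (i - j) ** 2)   # weighting term
            k[i][j] = phi * psi + (
                k[i - 1][j - 1]   # diagonal (match)
                + k[i - 1][j]     # skip in the first sequence
                + k[i][j - 1]     # skip in the second sequence
            ) / 3.0
    return k[p][q]
```

Because every local term is a product of one sample from each sequence and the recursion is linear, this sketch is symmetric and linear in each argument for sequences of equal length. It makes no claim of positive-definiteness, which the paper establishes for its own construction.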

A key strength of the framework is its extensibility to multiple elastic dimensions. For multivariate time series, separate φ and ψ can be defined per dimension, and the overall inner product is obtained by summing the contributions across dimensions. This allows simultaneous handling of several time‑warping axes without altering the core recursion.
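Written out, the per-dimension summation described above could take the following form. Here D is the number of dimensions and A^{(d)} is the d-th component series of A; this notation is assumed for illustration and is not taken from the paper.

$$
\langle A, B \rangle \;=\; \sum_{d=1}^{D} \big\langle A^{(d)}, B^{(d)} \big\rangle_{\varphi_d,\,\psi_d}
$$

A sum of inner products, each acting on its own component, is again symmetric and linear in each argument, which is why the core recursion does not need to change.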

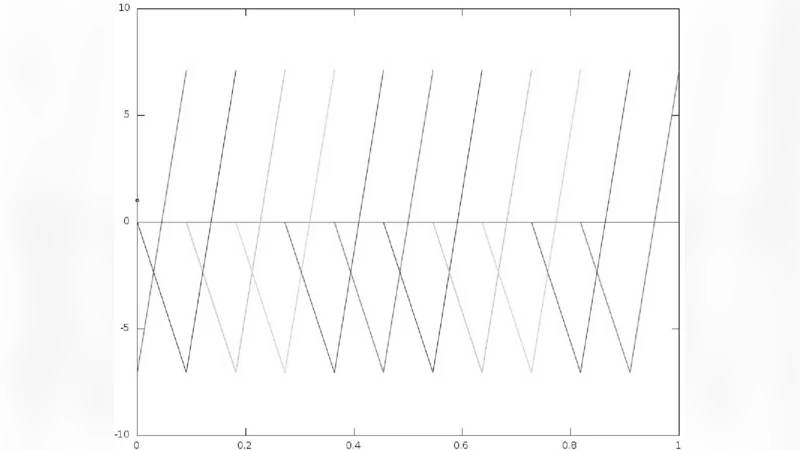

Implementation proceeds in two phases. The indexing phase pre‑computes the EIP matrix for every training series, an O(N²) operation in the series length N that is performed once offline. The exploitation phase, when a new query arrives, simply computes the inner product between the query’s EIP vector and each stored vector, which costs O(N) per stored series. Consequently, classification or similarity search runs in time linear in the series length, in sharp contrast to DTW‑based k‑NN, which must recompute a full dynamic‑programming matrix for each pairwise comparison.
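The two-phase split can be sketched as follows, assuming equal-length series and a weighting that depends only on sample indices, so that the inner product can be written as the bilinear form aᵀMb. The Gaussian band matrix and the names `weight_matrix`, `index_series`, and `query_score` are hypothetical stand-ins, not the paper's construction.

```python
import math

def weight_matrix(n, nu=0.5):
    """Hypothetical n x n weighting matrix M with M[i][j] = exp(-nu * (i - j)^2)."""
    return [[math.exp(-nu * (i - j) ** 2) for j in range(n)] for i in range(n)]

def index_series(series, m):
    """Indexing phase: compute v = M b once per stored series, O(N^2)."""
    n = len(series)
    return [sum(m[i][j] * series[j] for j in range(n)) for i in range(n)]

def query_score(query, indexed):
    """Exploitation phase: plain dot product with a stored vector, O(N)."""
    return sum(q * v for q, v in zip(query, indexed))
```

With this layout the quadratic work is paid once per stored series, and each later comparison is an ordinary dot product. As `nu` grows, M approaches the identity matrix and the score approaches the Euclidean inner product.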

Empirical evaluation uses the UCR time‑series archive (85 datasets) and several symbolic‑sequence collections (DNA, text). In a 1‑Nearest‑Neighbour setting, EIP consistently outperforms Euclidean distance by an average of 7 % in classification accuracy and matches or slightly trails DTW while delivering a ten‑fold speedup during the search phase. The advantage is especially pronounced for multivariate series, where the ability to align multiple dimensions simultaneously yields larger accuracy gains. Parameter studies demonstrate that tailoring φ and ψ to domain characteristics (e.g., medical signals, financial data) can further improve performance; the authors provide a simple grid‑search and cross‑validation scheme for this purpose.
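A grid search with cross-validation of the kind mentioned above might look like the sketch below. It assumes a single stiffness parameter `nu` in a Gaussian index weighting, a distance induced by the inner product, and leave-one-out validation. The parameterization and all function names are assumptions for illustration, since the summary does not specify the authors' scheme.

```python
import math

def elastic_dot(a, b, nu):
    """Band-weighted inner product: sum over i, j of a_i * b_j * exp(-nu * (i - j)^2)."""
    return sum(x * y * math.exp(-nu * (i - j) ** 2)
               for i, x in enumerate(a) for j, y in enumerate(b))

def squared_distance(a, b, nu):
    """Squared distance induced by the inner product: <a,a> - 2<a,b> + <b,b>."""
    return elastic_dot(a, a, nu) - 2.0 * elastic_dot(a, b, nu) + elastic_dot(b, b, nu)

def predict_1nn(query, train, nu):
    """Return the label of the training series closest to the query."""
    nearest = min(train, key=lambda item: squared_distance(query, item[0], nu))
    return nearest[1]

def loo_accuracy(data, nu):
    """Leave-one-out 1-NN accuracy over a list of (series, label) pairs."""
    hits = 0
    for k, (series, label) in enumerate(data):
        rest = data[:k] + data[k + 1:]
        hits += predict_1nn(series, rest, nu) == label
    return hits / len(data)

def grid_search(data, grid):
    """Pick the grid value with the best leave-one-out accuracy (first wins on ties)."""
    return max(grid, key=lambda nu: loo_accuracy(data, nu))
```

For clarity the sketch recomputes every inner product directly; in practice the selected parameter would be fixed before the offline indexing phase so that queries keep their linear cost.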

The contributions of the work are threefold: (1) the definition of a flexible elastic inner product that generalizes the Euclidean inner product and embeds variable‑length, non‑uniform series into a Hilbert‑like space; (2) a recursive formulation that naturally extends to multiple elastic dimensions; and (3) theoretical proofs of inner‑product properties together with extensive experiments showing that the method achieves a favorable trade‑off between accuracy and computational efficiency. The main limitation is the quadratic cost of the offline indexing stage, which may become prohibitive for extremely large repositories. The authors suggest future research directions such as online or incremental indexing, automated learning of φ and ψ via gradient‑based methods, and hybridization with deep neural architectures to further enhance scalability and adaptability.