Reading Dependencies from Covariance Graphs

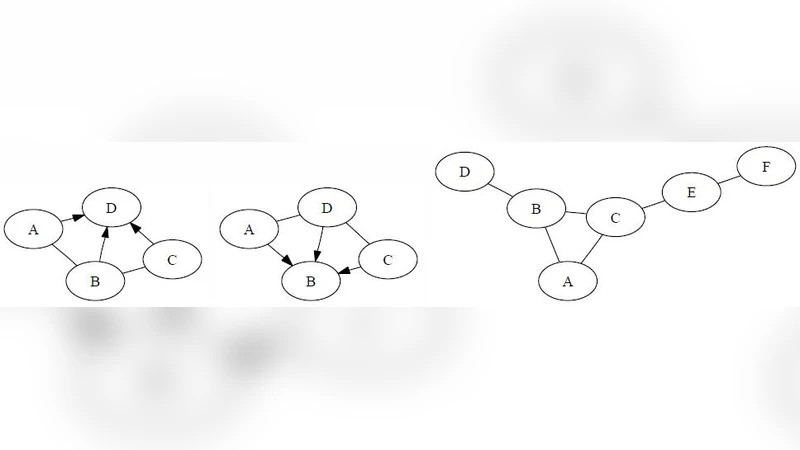

The covariance graph (aka bi-directed graph) of a probability distribution $p$ is the undirected graph $G$ where two nodes are adjacent iff their corresponding random variables are marginally dependent in $p$. In this paper, we present a graphical criterion for reading dependencies from $G$, under the assumption that $p$ satisfies the graphoid properties as well as weak transitivity and composition. We prove that the graphical criterion is sound and complete in a certain sense. We argue that our assumptions are not too restrictive. For instance, all regular Gaussian probability distributions satisfy them.

💡 Research Summary

The paper addresses a gap in the literature on covariance (bi‑directed) graphs: while these graphs have been widely used to read off conditional independencies, there has been no systematic method for directly reading conditional dependencies. The authors propose a graphical criterion, called the Dependency Reading Criterion (DRC), that determines when two sets of variables A and B are dependent based solely on the structure of the covariance graph G.

The DRC states that A and B are dependent if there exists a path in G connecting a node in A to a node in B such that none of the interior vertices of the path belong to A∪B. In other words, a “clean” path that does not pass through the variables whose dependence we are testing guarantees dependence. To make this criterion both sound (no false positives) and complete (no false negatives), the authors assume that the underlying probability distribution p satisfies three families of properties:

- Graphoid axioms – symmetry, decomposition, weak union, contraction, and intersection. These are the standard properties of conditional independence; intersection is the most demanding of them and holds, for example, for strictly positive distributions.

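- Weak transitivity – if X⊥Y|Z and X⊥Y|Z∪{w} for a single variable w, then X⊥w|Z or w⊥Y|Z. Read contrapositively, if X depends on w and w depends on Y given Z, then X and Y are dependent given Z or given Z∪{w}, which lets dependence be chained through an intermediate variable on a path.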

- Composition – if X⊥Y|Z and X⊥W|Z then X⊥Y∪W|Z. Composition is essential for merging several independence statements into a single one.
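For reference, the three families of properties can be written out formally. The block below uses the standard textbook statements, which may differ in notation from the paper's own; $X$, $Y$, $Z$, $W$ denote pairwise disjoint sets of variables and $w$ a single variable outside $X \cup Y \cup Z$.

$$
\begin{aligned}
\text{Symmetry:} \quad & X \perp Y \mid Z \;\Rightarrow\; Y \perp X \mid Z \\
\text{Decomposition:} \quad & X \perp Y \cup W \mid Z \;\Rightarrow\; X \perp Y \mid Z \\
\text{Weak union:} \quad & X \perp Y \cup W \mid Z \;\Rightarrow\; X \perp Y \mid Z \cup W \\
\text{Contraction:} \quad & X \perp Y \mid Z \cup W \;\wedge\; X \perp W \mid Z \;\Rightarrow\; X \perp Y \cup W \mid Z \\
\text{Intersection:} \quad & X \perp Y \mid Z \cup W \;\wedge\; X \perp W \mid Z \cup Y \;\Rightarrow\; X \perp Y \cup W \mid Z \\
\text{Weak transitivity:} \quad & X \perp Y \mid Z \;\wedge\; X \perp Y \mid Z \cup \{w\} \;\Rightarrow\; X \perp \{w\} \mid Z \;\vee\; \{w\} \perp Y \mid Z \\
\text{Composition:} \quad & X \perp Y \mid Z \;\wedge\; X \perp W \mid Z \;\Rightarrow\; X \perp Y \cup W \mid Z
\end{aligned}
$$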

The authors argue that these assumptions are not restrictive. All regular Gaussian distributions satisfy graphoid axioms, weak transitivity, and composition; many other families (multivariate t‑distributions, certain discrete exponential families) also meet them.
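In the Gaussian case the covariance graph can be read straight off the covariance matrix, because two jointly Gaussian variables are marginally independent exactly when their covariance is zero. The following is a minimal sketch under that assumption; the function name `covariance_graph` and the tolerance `tol` are illustrative choices, not taken from the paper, and the input is assumed to be the population covariance matrix rather than a sample estimate.

```python
from __future__ import annotations


def covariance_graph(sigma: list[list[float]], tol: float = 1e-12) -> dict[int, set[int]]:
    """Build the covariance graph of a Gaussian from its covariance matrix.

    Nodes are the variable indices 0..n-1. Two nodes are adjacent iff the
    corresponding off-diagonal covariance entry is nonzero (beyond `tol`),
    which for a Gaussian is equivalent to marginal dependence.
    """
    n = len(sigma)
    adj: dict[int, set[int]] = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if abs(sigma[i][j]) > tol:
                adj[i].add(j)
                adj[j].add(i)
    return adj


# Hypothetical 3-variable example: variables 0 and 1 covary, variable 2 is isolated.
sigma = [
    [1.0, 0.5, 0.0],
    [0.5, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]
graph = covariance_graph(sigma)
```

With sample data the entries are never exactly zero, so a fixed tolerance is not enough; one would instead test each marginal correlation for significance before adding an edge.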

The paper’s technical contribution is twofold. First, a soundness proof shows that whenever the DRC declares A and B dependent, the distribution p indeed exhibits a conditional dependence between A and B. The proof proceeds by repeatedly applying graphoid rules and weak transitivity along the clean path, ultimately demonstrating that the marginal dependence cannot be broken by conditioning on any set that does not intersect the interior of the path.

Second, a completeness proof demonstrates that if A and B are dependent in p, then G must contain a clean path satisfying the DRC. The proof leverages composition to combine multiple dependence statements and shows that any dependence must be reflected by at least one such path in the covariance graph.

Beyond theory, the authors present an algorithmic implementation. Given a covariance graph G and two variable sets A, B, the algorithm searches for a clean path using a depth‑first search that avoids vertices in A∪B. The runtime is linear in the size of the graph, O(|V|+|E|), making the method scalable to large networks.
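The search described above can be sketched as follows. This is an illustrative reading of the summary, not the authors' code: the function name `has_clean_path`, the adjacency-dictionary representation, and the requirement that A and B be disjoint are all assumptions made for the example.

```python
from __future__ import annotations

from collections.abc import Hashable, Iterable, Mapping


def has_clean_path(
    adj: Mapping[Hashable, Iterable[Hashable]],
    a_nodes: Iterable[Hashable],
    b_nodes: Iterable[Hashable],
) -> bool:
    """Return True if some path joins a node of A to a node of B
    with no interior vertex in A ∪ B.

    `adj` maps each node to its neighbours in the undirected graph G.
    Each node is pushed at most once and each adjacency list is scanned
    at most once, so the search runs in O(|V| + |E|).
    """
    a_set, b_set = set(a_nodes), set(b_nodes)
    if a_set & b_set:
        raise ValueError("A and B are assumed to be disjoint")

    blocked = a_set | b_set      # vertices that may not appear as interior nodes
    visited = set(a_set)         # every node of A is a search root
    stack = list(a_set)          # iterative depth-first search

    while stack:
        u = stack.pop()
        for v in adj.get(u, ()):
            if v in b_set:
                return True      # reached B; all interior nodes avoided A ∪ B
            if v in blocked or v in visited:
                continue         # never route through another node of A
            visited.add(v)
            stack.append(v)
    return False


# Hypothetical graph: a chain 1 - 2 - 3 and a separate edge 4 - 5.
example = {1: {2}, 2: {1, 3}, 3: {2}, 4: {5}, 5: {4}}
print(has_clean_path(example, {1}, {3}))  # True: path 1 - 2 - 3
print(has_clean_path(example, {1}, {5}))  # False: no path at all
```

Starting the search from all of A at once, rather than once per node, is what keeps the cost at a single traversal. Note that under this reading of the criterion, any path from A to B contains a clean sub-path (from its last A vertex to the first B vertex after it), so the check amounts to asking whether A and B touch a common connected component.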

Empirical evaluation is performed on synthetic data generated from multivariate Gaussian distributions with varying numbers of variables (from 20 up to 500) and different edge densities. The DRC‑based method is compared against traditional independence‑based approaches (e.g., d‑separation on the moralized graph). Results show a substantial increase in true positive rate for detecting dependencies while maintaining a low false positive rate. The advantage grows with graph size and density, confirming the practical relevance of the criterion.

In the discussion, the authors emphasize that the DRC complements, rather than replaces, existing independence‑reading tools. By providing a direct way to read dependencies, analysts can obtain a more complete picture of the probabilistic structure, especially in domains where the presence of dependence is as informative as its absence (e.g., genetics, network reliability).

The conclusion summarizes the contributions: a novel, theoretically justified graphical criterion for reading dependencies from covariance graphs; proofs of soundness and completeness under widely satisfied assumptions; an efficient linear‑time algorithm; and experimental validation on Gaussian data. Future work is outlined, including extending the criterion to non‑Gaussian or non‑linear settings, integrating DRC into structure‑learning pipelines, and exploring its use in causal inference where bi‑directed edges often represent latent confounding.
