Modeling Latent Variable Uncertainty for Loss-based Learning

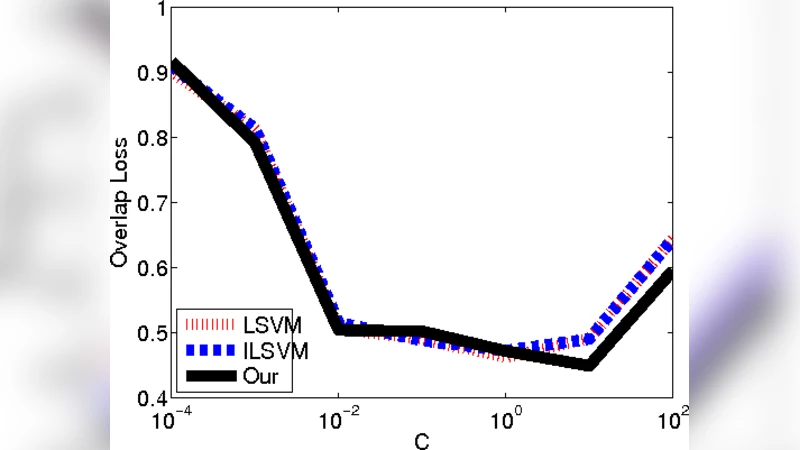

We consider the problem of parameter estimation using weakly supervised datasets, where a training sample consists of the input and a partially specified annotation, which we refer to as the output. The missing information in the annotation is modeled using latent variables. Previous methods overburden a single distribution with two separate tasks: (i) modeling the uncertainty in the latent variables during training; and (ii) making accurate predictions for the output and the latent variables during testing. We propose a novel framework that separates the demands of the two tasks using two distributions: (i) a conditional distribution to model the uncertainty of the latent variables for a given input-output pair; and (ii) a delta distribution to predict the output and the latent variables for a given input. During learning, we encourage agreement between the two distributions by minimizing a loss-based dissimilarity coefficient. Our approach generalizes latent SVM in two important ways: (i) it models the uncertainty over latent variables instead of relying on a pointwise estimate; and (ii) it allows the use of loss functions that depend on latent variables, which greatly increases its applicability. We demonstrate the efficacy of our approach on two challenging problems—object detection and action detection—using publicly available datasets.

💡 Research Summary

The paper addresses the challenge of learning from weakly supervised data, where each training example consists of an input x and a partially specified annotation y, leaving some information hidden in latent variables z. Traditional approaches such as latent SVM treat the latent variable as a single point estimate, forcing the same distribution to both model uncertainty during training and make deterministic predictions at test time. This dual use creates two major drawbacks: (i) the training process ignores the inherent uncertainty of z, and (ii) loss functions that depend on z cannot be directly optimized because the point estimate provides no information about the distribution of possible latent configurations.

To overcome these limitations, the authors propose a bifurcated probabilistic framework that separates the two tasks into distinct distributions:

- Conditional distribution P(z | x, y) – a probabilistic model that captures the uncertainty over latent variables given the observed input‑output pair. This distribution is used only during training to represent all plausible latent configurations consistent with the weak annotation.

- Delta distribution δ(ẑ, ŷ | x) – a deterministic (delta) model that, given an input, predicts a concrete output ŷ and a concrete latent assignment ẑ. This distribution is employed at test time for fast inference.

The learning objective is to force agreement between these two distributions by minimizing a loss‑based dissimilarity coefficient. The coefficient combines (a) a statistical divergence (e.g., Jensen‑Shannon) that measures how far the two distributions are from each other, and (b) a task‑specific loss Δ(y, ŷ, z, ẑ) that can explicitly depend on both the observed output and the latent variables. By minimizing this joint objective, the model learns a conditional distribution that faithfully reflects uncertainty while simultaneously shaping the deterministic predictor to produce outputs that are consistent with that uncertainty.
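The summary does not spell out the formula for the coefficient. As a minimal sketch, assume a diversity-style form for a discrete latent space: the expected loss between a sample from the conditional distribution and the point prediction, minus a weighted self-diversity of the conditional distribution (a delta distribution has zero self-diversity, so it contributes no such term). The function names and the weight `beta` are hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, Hashable

Loss = Callable[[Hashable, Hashable], float]


def expected_loss_to_point(p_z: Dict[Hashable, float], pred: Hashable, loss: Loss) -> float:
    """Expected loss between z ~ p_z and a single point prediction."""
    return sum(p * loss(z, pred) for z, p in p_z.items())


def self_diversity(p_z: Dict[Hashable, float], loss: Loss) -> float:
    """Expected loss between two independent samples drawn from p_z."""
    return sum(
        p1 * p2 * loss(z1, z2)
        for z1, p1 in p_z.items()
        for z2, p2 in p_z.items()
    )


def dissimilarity(p_z: Dict[Hashable, float], pred: Hashable, loss: Loss, beta: float = 0.5) -> float:
    """Assumed diversity-style dissimilarity between p_z and a delta at pred.

    beta is an illustrative weight, not a value taken from the paper.
    """
    return expected_loss_to_point(p_z, pred, loss) - beta * self_diversity(p_z, loss)


def zero_one(a: Hashable, b: Hashable) -> float:
    """0/1 loss: 0 when the two values match, 1 otherwise."""
    return 0.0 if a == b else 1.0


# Two equally likely latent values, with the predictor committing to the first.
example_value = dissimilarity({0: 0.5, 1: 0.5}, pred=0, loss=zero_one)
```

In this toy case the cross term is 0.5 and the self-diversity is 0.5, so the value is 0.25 with `beta = 0.5`. A distribution concentrated on the predicted value drives both terms to zero.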

Optimization proceeds in an EM‑like alternating fashion. In the “E‑step” the current deterministic predictor is held fixed while the conditional distribution P(z | x, y) is updated, typically via variational inference or sampling, to better approximate the posterior over z. In the “M‑step” the updated conditional distribution is used to compute the expected loss, and the parameters of the deterministic predictor are updated to minimize this expected loss. This alternating scheme iterates until convergence, yielding a predictor that benefits from a richer representation of latent uncertainty.
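The alternating scheme can be illustrated on a toy problem with a single training example and a small discrete latent space. The sketch below is an assumption-laden stand-in, not the paper's algorithm: it minimizes a made-up objective (expected loss plus a temperature-weighted KL term to a fixed prior over annotation-compatible latent values), chosen because both steps then have closed forms and each step cannot increase the objective. All names (`e_step`, `m_step`, `prior`, `temperature`) are hypothetical.

```python
import math


def e_step(prior, pred, loss, temperature=1.0):
    """Update q(z) with the prediction fixed: q(z) ∝ prior(z) * exp(-loss / T)."""
    weights = {z: p * math.exp(-loss(z, pred) / temperature) for z, p in prior.items()}
    total = sum(weights.values())
    return {z: w / total for z, w in weights.items()}


def m_step(q, candidates, loss):
    """Update the point prediction with q fixed: minimize the expected loss."""
    return min(candidates, key=lambda c: sum(p * loss(z, c) for z, p in q.items()))


def objective(q, prior, pred, loss, temperature=1.0):
    """Assumed toy objective: expected loss + T * KL(q || prior)."""
    expected = sum(p * loss(z, pred) for z, p in q.items())
    kl = sum(p * math.log(p / prior[z]) for z, p in q.items() if p > 0)
    return expected + temperature * kl


def alternate(prior, candidates, loss, init_pred, max_iters=50):
    """Alternate the two steps until the prediction stops changing."""
    pred, q, history = init_pred, dict(prior), []
    for _ in range(max_iters):
        q = e_step(prior, pred, loss)
        new_pred = m_step(q, candidates, loss)
        history.append(objective(q, prior, new_pred, loss))
        if new_pred == pred:
            break
        pred = new_pred
    return pred, q, history


def abs_loss(a, b):
    """Absolute difference between two 1-D latent positions."""
    return float(abs(a - b))


prior = {0: 0.1, 1: 0.2, 2: 0.4, 3: 0.2, 4: 0.1}
pred, q, history = alternate(prior, candidates=list(prior), loss=abs_loss, init_pred=0)
```

Like any alternating scheme, the result depends on the starting point: different values of `init_pred` can settle on different predictions, which is why initialization matters in practice.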

A key contribution of the work is that the loss function can now depend on latent variables. For example, in object detection the loss may be the Intersection‑over‑Union (IoU) between a predicted bounding box and the ground‑truth box, which directly involves the latent box coordinates. In action detection, the loss may be the temporal overlap between predicted and true action intervals, again a function of latent start/end times. Because the conditional distribution provides a full posterior over these latent quantities, the expected IoU or temporal overlap can be computed exactly (or approximated) and minimized, something that is impossible with a point estimate.
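To make the expected-IoU idea concrete, the following sketch computes the expected loss 1 − IoU of one predicted box under a discrete distribution over candidate ground-truth boxes. The box format `(x1, y1, x2, y2)` and the helper names are assumptions made for illustration; the summary does not specify how boxes or their distribution are represented.

```python
def box_iou(a, b):
    """Intersection over union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def expected_iou_loss(box_probs, predicted):
    """Expected (1 - IoU) of `predicted` under a list of (box, probability) pairs."""
    return sum(p * (1.0 - box_iou(box, predicted)) for box, p in box_probs)


# Two equally likely latent boxes; the prediction matches the first exactly.
box_probs = [((0.0, 0.0, 2.0, 2.0), 0.5), ((1.0, 0.0, 3.0, 2.0), 0.5)]
loss_value = expected_iou_loss(box_probs, (0.0, 0.0, 2.0, 2.0))
```

Here the second box overlaps the prediction with IoU 1/3, so the expected loss is 0.5 · 0 + 0.5 · 2/3 = 1/3. A point estimate that kept only the first box would report a loss of zero and hide that residual uncertainty.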

The authors evaluate the approach on two challenging computer‑vision tasks:

- Object detection – Using publicly available datasets such as PASCAL VOC and MS‑COCO, they train with only image‑level tags (no bounding boxes). Their method outperforms standard latent SVM, multiple‑instance learning CNNs, and recent weak‑supervision detectors by 3–5 percentage points in mean Average Precision (mAP). The improvement is especially pronounced for small or heavily overlapping objects, where modeling uncertainty over box locations is crucial.

- Action detection – On video benchmarks like UCF‑101 and THUMOS14, they provide only frame‑level labels and aim to predict precise temporal segments. By incorporating a loss that directly measures temporal IoU, the proposed model achieves higher mAP and recall than baselines that ignore latent temporal uncertainty. The conditional distribution captures the variability of possible start/end times, leading to more robust segment predictions.
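For the temporal case, a loss based on temporal IoU can be used to choose a segment under uncertainty. The sketch below picks, from a set of candidate segments, the one with the lowest expected 1 − tIoU under a discrete distribution over plausible `(start, end)` intervals. The segment representation and the function names are hypothetical; the summary does not describe the authors' inference procedure at this level of detail.

```python
def temporal_iou(a, b):
    """Temporal IoU of two intervals given as (start, end)."""
    a_start, a_end = a
    b_start, b_end = b
    inter = max(0.0, min(a_end, b_end) - max(a_start, b_start))
    union = (a_end - a_start) + (b_end - b_start) - inter
    return inter / union if union > 0 else 0.0


def best_segment(segment_probs, candidates):
    """Candidate with the lowest expected (1 - tIoU) under (segment, probability) pairs."""
    def expected_loss(candidate):
        return sum(p * (1.0 - temporal_iou(seg, candidate)) for seg, p in segment_probs)
    return min(candidates, key=expected_loss)


# Three plausible intervals for the action, with the middle one most likely.
segment_probs = [((0.0, 4.0), 0.2), ((1.0, 5.0), 0.5), ((2.0, 6.0), 0.3)]
candidates = [seg for seg, _ in segment_probs]
chosen = best_segment(segment_probs, candidates)
```

With these numbers the middle interval `(1.0, 5.0)` is selected, since it overlaps well with both of its neighbours and therefore has the lowest expected loss.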

The experimental results demonstrate that separating uncertainty modeling from deterministic prediction yields both theoretical and practical benefits. The framework generalizes latent SVM in two important ways: (i) it replaces the point estimate of z with a full distribution, and (ii) it allows arbitrary loss functions that involve latent variables, greatly expanding the range of applications.

The paper also discusses limitations. Estimating the conditional distribution P(z | x, y) can be computationally intensive, especially for high‑dimensional latent spaces. The current formulation assumes the loss is differentiable or can be approximated smoothly; non‑smooth or combinatorial losses would require further methodological extensions. Moreover, while the paper focuses on low‑dimensional latent variables (e.g., bounding box coordinates or temporal boundaries), extending the approach to structured latent spaces such as graphs or trees remains an open research direction.

In conclusion, the authors present a novel, principled framework for loss‑based learning with latent variable uncertainty. By introducing two complementary distributions and a loss‑driven agreement objective, they achieve state‑of‑the‑art performance on weakly supervised object and action detection tasks. Future work may explore richer latent structures, integration with unsupervised pre‑training, and real‑time implementations, potentially broadening the impact of this approach across many domains where full supervision is costly or unavailable.
