On multi-view feature learning

Sparse coding is a common approach to learning local features for object recognition. Recently, there has been an increasing interest in learning features from spatio-temporal, binocular, or other multi-observation data, where the goal is to encode t…

Authors: Roland Memisevic (University of Frankfurt)

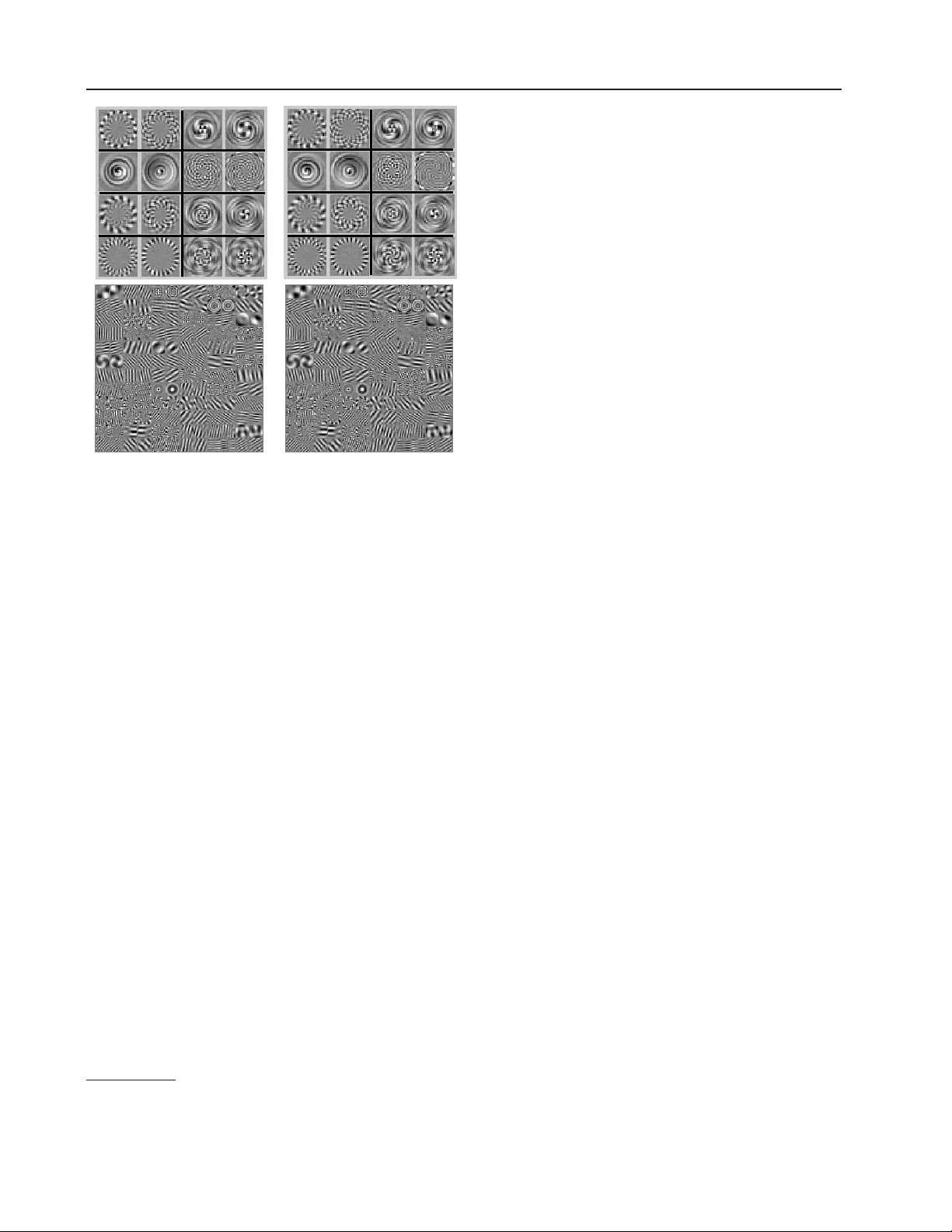

Roland Memisevic (ro@cs.uni-frankfurt.de)
University of Frankfurt, Robert-Mayer-Str. 10, 60325 Frankfurt, Germany

Abstract

Sparse coding is a common approach to learning local features for object recognition. Recently, there has been an increasing interest in learning features from spatio-temporal, binocular, or other multi-observation data, where the goal is to encode the relationship between images rather than the content of a single image. We provide an analysis of multi-view feature learning, which shows that hidden variables encode transformations by detecting rotation angles in the eigenspaces shared among multiple image warps. Our analysis helps explain recent experimental results showing that transformation-specific features emerge when training complex cell models on videos. Our analysis also shows that transformation-invariant features can emerge as a by-product of learning representations of transformations.

1. Introduction

Feature learning (also known as dictionary learning, or sparse coding) has gained considerable attention in computer vision in recent years, because it can yield image representations that are useful for recognition. However, although recognition is important in a variety of tasks, a lot of problems in vision involve the encoding of the relationship between observations, not single observations. Examples include tracking, multi-view geometry, action understanding or dealing with invariances.

A variety of multi-view feature learning models have recently been suggested as a way to learn features that encode relations between images. The basic idea behind these models is that hidden variables sum over products of filter responses applied to two observations $x$ and $y$ and thereby correlate the responses.
Appearing in Proceedings of the 29th International Conference on Machine Learning, Edinburgh, Scotland, UK, 2012. Copyright 2012 by the author(s)/owner(s).

Adapting the filters based on synthetic transformations on images was shown to yield transformation-specific features like phase-shifted Fourier components when training on shifted image pairs, or "circular" Fourier components when training on rotated image pairs (Memisevic & Hinton, 2010). Task-specific filter-pairs emerge when training on natural transformations, like facial expression changes (Susskind et al., 2011) or natural video (Taylor et al., 2010), and they were shown to yield state-of-the-art recognition performance in these domains. Multi-view feature learning models are also closely related to energy models of complex cells (Adelson & Bergen, 1985), which, in turn, have been successfully applied to video understanding, too (Le et al., 2011). They have also been used to learn within-image correlations by letting input and output images be the same (Ranzato & Hinton, 2010; Bergstra et al., 2010).

Common to all these methods is that they deploy products of filter responses to learn relations. In this paper, we analyze the role of these multiplicative interactions in learning relations. We also show that the hidden variables in a multi-view feature learning model represent transformations by detecting rotation angles in eigenspaces that are shared among the transformations. We focus on image transformations here, but our analysis is not restricted to images.
Our analysis has a variety of practical applications, which we investigate in detail experimentally: (1) We can train complex cell and energy models using conditional sparse coding models and vice versa, (2) It is possible to extend multi-view feature learning to model sequences of three or more images instead of just two, (3) It is mandatory that hidden variables pool over multiple subspaces to work properly, (4) Invariant features can be learned by separating pooling within subspaces from pooling across subspaces.

Our analysis is related to previous investigations of energy models and of complex cells (for example, Fleet et al., 1996; Qian, 1994), and it extends this line of work to more general transformations than local translation.

2. Background on multi-view sparse coding

Feature learning [1] amounts to encoding an image patch $x$ using a vector of latent variables $z = \sigma(W^T x)$, where each column of $W$ can be viewed as a linear feature ("filter") that corresponds to one hidden variable $z_k$ [2], and where $\sigma$ is a non-linearity, such as the sigmoid $\sigma(a) = (1 + \exp(-a))^{-1}$. To adapt the parameters, $W$, based on a set of example patches $\{x^\alpha\}$ one can use a variety of methods, including maximizing the average sparsity of $z$, minimizing a form of reconstruction error, maximizing the likelihood of the observations via Gibbs sampling, and others (see, for example, Hyvärinen et al., 2009, and references therein).

To obtain hidden variables $z$ that encode the relationship between two images, $x$ and $y$, one needs to represent correlation patterns between two images instead.
This is commonly achieved by computing the sum over products of filter responses:

$$ z = W^T \left( U^T x * V^T y \right) \qquad (1) $$

where "$*$" is element-wise multiplication, and the columns of $U$ and $V$ contain image filters that are learned along with $W$ from data (Memisevic & Hinton, 2010). Again, one may apply an element-wise non-linearity to $z$. The hidden units are "multi-view" variables that encode transformations, not the content of single images, and they are commonly referred to as "mapping units".

Training the model parameters, $(U, V, W)$, can be achieved by minimizing the conditional reconstruction error of $y$ keeping $x$ fixed or vice versa (Memisevic, 2011), or by conditional variants of maximum likelihood (Ranzato & Hinton, 2010; Memisevic & Hinton, 2010). Training the model on transforming random-dot patterns yields transformation-specific features, such as phase-shifted Fourier features in the case of translation and circular harmonics in the case of rotation (Memisevic & Hinton, 2010; Memisevic, 2011). Eq. 1 can also be derived by factorizing the parameter tensor of a conditional sparse coding model (Memisevic & Hinton, 2010). An illustration of the model is shown in Figure 1 (a).

[1] We use the terms "feature learning", "dictionary learning" and "sparse coding" synonymously in this paper. Each term tends to come with a slightly different meaning in the literature, but for the purpose of this work the differences are negligible.

[2] In practice, it is common to add constant bias terms to the linear mapping. In the following, we shall refrain from doing so to avoid cluttering up the derivations. We shall instead think of data and hidden variables as being in "homogeneous notation" with an extra, constant 1-dimension.

2.1. Energy models

Multi-view feature learning is closely related to energy models and to models of complex cells (Adelson & Bergen, 1985; Fleet et al., 1996; Kohonen; Hyvärinen & Hoyer, 2000). The activity of a hidden unit in an energy model is typically defined as the sum over squared filter responses, which may be written

$$ z = W^T \left( B^T x * B^T x \right) \qquad (2) $$

where $B$ contains image filters in its columns. $W$ is usually constrained such that each hidden variable, $z_k$, computes the sum over only a subset of all products. This way, hidden variables can be thought of as encoding the norm of a projection of $x$ onto a subspace [3]. Energy models are also referred to as "subspace" or "square-pooling" models.

For our analysis, it is important to note that, when we apply an energy model to the concatenation of two images, $x$ and $y$, we obtain a response that is closely related to the response of a multi-view sparse coding model (cf. Eq. 1): Let $b_f$ denote a single column of matrix $B$. Furthermore, let $u_f$ denote the part of the filter $b_f$ that gets applied to image $x$, and let $v_f$ denote the part that gets applied to image $y$, so that $b_f^T [x; y] = u_f^T x + v_f^T y$. Hidden unit activities, $z_k$, then take the form

$$ z_k = \sum_f W_{fk} \left( u_f^T x + v_f^T y \right)^2 = 2 \sum_f W_{fk} \left( u_f^T x \right) \left( v_f^T y \right) + \sum_f W_{fk} \left( u_f^T x \right)^2 + \sum_f W_{fk} \left( v_f^T y \right)^2 \qquad (3) $$

Thus, up to the quadratic terms in Eq. 3, hidden unit activities are the same as in a multi-view feature learning model (Eq. 1). As we shall discuss in Section 3.5, the quadratic terms do not significantly change the behavior of the hidden units as compared to multi-view sparse coding models. An illustration of the energy model is shown in Figure 1 (b).

3. Eigenspace analysis

We now show that hidden variables turn into subspace rotation detectors when the models are trained on transformed image pairs. To simplify the analysis, we shall restrict our attention to transformations, $L$, that are orthogonal, that is, $L^T L = L L^T = I$, where $I$ is the identity matrix. In other words, $L^{-1} = L^T$.
Linear transformations in "pixel-space" are also known as warps. Note that practically all relevant spatial transformations, like translation, rotation or local shifts, can be expressed approximately as an orthogonal warp, because orthogonal transformations subsume, in particular, all permutations ("shuffling pixels").

[3] It is also common to apply non-linearities, such as a square-root, to the activity $z_k$.

Figure 1. (a) Modeling an image pair using a gated sparse coding model. (b) Modeling an image pair using an energy model applied to the concatenation of the images. (c) Projections $p_x$ and $p_y$ of two images onto the complex plane that is spanned by two eigenfeatures. (d) Absorbing eigenvalues into input-features amounts to performing a projection and a rotation for image $x$. Hidden units can detect if this brings the projections into alignment (see text for details).

An important fact about orthogonal matrices is that the eigen-decomposition $L = U D U^T$ is complex, where eigenvalues (diagonal of $D$) have absolute value 1 (Horn & Johnson, 1990). Multiplying by a complex number with absolute value 1 amounts to performing a rotation in the complex plane, as illustrated in Figure 1 (c) and (d). Each eigenspace associated with $L$ is also referred to as an invariant subspace of $L$ (as application of $L$ will keep eigenvectors within the subspace).

Applying an orthogonal warp is thus equivalent to (i) projecting the image onto filter pairs (the real and imaginary parts of each eigenvector), (ii) performing a rotation within each invariant subspace, and (iii) projecting back into the image-space. In other words, we can decompose an orthogonal transformation into a set of independent, 2-dimensional rotations.
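The decomposition into independent rotations can be checked numerically. The following is a minimal NumPy sketch, not taken from the paper: it assumes a cyclic one-pixel shift of a 1-D signal as the orthogonal warp, and all variable names are illustrative. It verifies that the eigenvalues lie on the unit circle and that warping only rotates the projection onto each eigenvector without changing its length.

```python
import numpy as np

n = 8
# Assumed example warp: cyclic shift by one pixel. It is a permutation
# matrix and therefore orthogonal.
L = np.roll(np.eye(n), 1, axis=0)

is_orthogonal = np.allclose(L.T @ L, np.eye(n))

# Complex eigen-decomposition of the warp.
eigvals, eigvecs = np.linalg.eig(L)
moduli = np.abs(eigvals)  # expected to be 1 for an orthogonal matrix

rng = np.random.default_rng(0)
x = rng.standard_normal(n)
y = L @ x

# Projections of the image before and after warping onto each eigenvector.
p_x = eigvecs.conj().T @ x
p_y = eigvecs.conj().T @ y

# Warping multiplies each projection by a unit-modulus eigenvalue,
# i.e. it rotates the projection in the complex plane.
norms_preserved = np.allclose(np.abs(p_x), np.abs(p_y))
phase_matches = np.allclose(p_y, eigvals * p_x)
```

Any other permutation or rotation matrix could be substituted for `L`; the cyclic shift is used here only because it is easy to construct.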
The most well-known examples are translations: A 1D-translation matrix contains ones along one of its secondary diagonals, and it is zero elsewhere [4]. The eigenvectors of this matrix are Fourier-components (Gray, 2005), and the rotation in each invariant subspace amounts to a phase-shift of the corresponding Fourier-feature. This leaves the norm of the projections onto the Fourier-components (the power spectrum of the signal) constant, which is a well known property of translations.

[4] To be exactly orthogonal it has to contain an additional one in another place, so that it performs a rotation with wrap-around.

It is interesting to note that the imaginary and real parts of the eigenvectors of a translation matrix correspond to sine and cosine features, respectively, reflecting the fact that Fourier components naturally come in pairs. These are commonly referred to as quadrature pairs in the literature. The same is true of Gabor features, which represent local translations (Qian, 1994; Fleet et al., 1996). However, the property that eigenvectors come in pairs is not specific to translations. It is shared by all transformations that can be represented by an orthogonal matrix. Bethge et al. (2007) use the term generalized quadrature pair to refer to the eigen-features of these transformations.

3.1. Commuting warps share eigenspaces

A central observation to our analysis is that eigenspaces can be shared among transformations. When eigenspaces are shared, then the only way in which two transformations differ is in the angles of rotation within the eigenspaces. In this case, we can represent multiple transformations with a single set of features, as we shall show.

An example of a shared eigenspace is the Fourier-basis, which is shared among translations (Gray, 2005).
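As a small illustration of the Fourier example, the sketch below (an assumption-laden toy, not the paper's code) builds two cyclic shifts of different size, checks that they commute, and checks that one and the same Fourier vector is an eigenvector of both, with different rotation angles. The helper name `cyclic_shift_matrix` and the chosen sizes are hypothetical.

```python
import numpy as np

def cyclic_shift_matrix(n, shift):
    """Matrix A with (A x)[i] = x[(i - shift) mod n]."""
    return np.roll(np.eye(n), shift, axis=0)

n = 8
A = cyclic_shift_matrix(n, 1)
B = cyclic_shift_matrix(n, 3)

# Translations with wrap-around commute.
commute = np.allclose(A @ B, B @ A)

# A single Fourier component with frequency k.
k = 2
v = np.exp(2j * np.pi * k * np.arange(n) / n)

# Eigenvalue of each shift for this component (v[0] == 1).
lam_A = (A @ v)[0] / v[0]
lam_B = (B @ v)[0] / v[0]
shared = np.allclose(A @ v, lam_A * v) and np.allclose(B @ v, lam_B * v)

# Same eigenvector, different angles of rotation within the subspace.
angle_A = np.angle(lam_A)
angle_B = np.angle(lam_B)
```

The two shifts are told apart only by `angle_A` and `angle_B`, which is the sense in which a single set of features can represent several transformations.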
Less obvious examples are Gabor features, which may be thought of as the eigenbases of local translations, or features that represent spatial rotations. Formally, a set of matrices share eigenvectors if they commute (Horn & Johnson, 1990). This can be seen by considering any two matrices $A$ and $B$ with $AB = BA$ and with $\lambda, v$ an eigenvalue/eigenvector pair of $B$ with multiplicity one. It holds that $BAv = ABv = \lambda Av$. Therefore, $Av$ is also an eigenvector of $B$ with the same eigenvalue. Since $\lambda$ has multiplicity one, $Av$ must be a scalar multiple of $v$, which makes $v$ an eigenvector of $A$ as well.

3.2. Extracting transformations

Consider the following task: Given two images $x$ and $y$, determine the transformation $L$ that relates them, assuming that $L$ belongs to a given class of transformations.

The importance of commuting transformations for our analysis is that, since they share an eigenbasis, any two transformations differ only in the angles of rotation in the joint eigenspaces. As a result, one may extract the transformation from the given image pair $(x, y)$ simply by recovering the angles of rotation between the projections of $x$ and $y$ onto the eigenspaces. To this end, consider the real and imaginary parts $v_R$ and $v_I$ of some eigen-feature $v = v_R + i v_I$, where $i = \sqrt{-1}$. The real and imaginary coordinates of the projection $p^v_x$ of $x$ onto the invariant subspace associated with $v$ are given by $v_R^T x$ and $v_I^T x$, respectively. For the projection $p^v_y$ of the output image onto the invariant subspace, they are $v_R^T y$ and $v_I^T y$.

Let $\phi_x$ and $\phi_y$ denote the angles of the projections of $x$ and $y$ with the real axis in the complex plane. If we normalize the projections to have unit norm, then the cosine of the angle between the projections, $\phi_y - \phi_x$, may be written

$$ \cos(\phi_y - \phi_x) = \cos\phi_y \cos\phi_x + \sin\phi_y \sin\phi_x $$

by a trigonometric identity. This is equivalent to computing the inner product between two normalized projections (cf. Figure 1 (c) and (d)).
In other words, to estimate the (cosine of the) angle of rotation between the projections of $x$ and $y$, we need to sum over the product of two filter responses.

3.3. The subspace aperture problem

Note, however, that normalizing each projection to 1 amounts to dividing by the sum of squared filter responses, an operation that is highly unstable if a projection is close to zero. This will be the case whenever one of the images is almost orthogonal to the invariant subspace. This, in turn, means that the rotation angle cannot be recovered from the given image, because the image is too close to the axis of rotation. One may view this as a subspace-generalization of the well-known aperture problem beyond translation, to the set of orthogonal transformations. Normalization would ignore this problem and provide the illusion of a recovered angle even when the aperture problem makes the detection of the transformation component impossible. In the next section we discuss how one may overcome this problem by rephrasing the problem as a detection task.

3.4. Mapping units as rotation detectors

For each eigenvector, $v$, and rotation angle, $\theta$, define the complex output image filter

$$ v^\theta = \exp(i\theta)\, v $$

which represents a projection and simultaneous rotation by $\theta$. This allows us to define a subspace rotation-detector with preferred angle $\theta$ as follows:

$$ r_\theta = \left( v_R^T y \right) \left( (v^\theta_R)^T x \right) + \left( v_I^T y \right) \left( (v^\theta_I)^T x \right) \qquad (4) $$

where subscripts $R$ and $I$ denote the real and imaginary part of the filters like before. If projections are normalized to length 1, we have

$$ r_\theta = \cos\phi_y \cos(\phi_x + \theta) + \sin\phi_y \sin(\phi_x + \theta) = \cos(\phi_y - \phi_x - \theta), \qquad (5) $$

which is maximal whenever $\phi_y - \phi_x = \theta$, thus when the observed angle of rotation, $\phi_y - \phi_x$, is equal to the preferred angle of rotation, $\theta$. However, like before, normalizing projections is not a good idea because of the subspace aperture problem.
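Eqs. 4 and 5 can be illustrated with a short NumPy sketch. It is a toy under stated assumptions rather than the paper's implementation: the warp is a cyclic shift of a 1-D signal, the eigenfeature is an analytic Fourier component rather than a learned filter, and `rotation_detector` is a hypothetical helper name.

```python
import numpy as np

def rotation_detector(x, y, v, theta):
    """Eq. 4: response of a subspace rotation detector with preferred angle theta."""
    v_theta = np.exp(1j * theta) * v
    return (v.real @ y) * (v_theta.real @ x) + (v.imag @ y) * (v_theta.imag @ x)

rng = np.random.default_rng(0)
n, k, shift = 16, 3, 2
omega = 2 * np.pi * k / n
v = np.exp(1j * omega * np.arange(n))  # Fourier eigenfeature of cyclic shifts

x = rng.standard_normal(n)
y = np.roll(x, shift)                  # y[i] = x[i - shift]

# For this warp the projection of y is rotated by omega * shift
# relative to the projection of x.
true_angle = omega * shift

# Scan preferred angles on a one-degree grid.
thetas = np.linspace(0.0, 2 * np.pi, 360, endpoint=False)
responses = np.array([rotation_detector(x, y, v, t) for t in thetas])
best_theta = thetas[np.argmax(responses)]

# With projections normalized to unit length, Eq. 5 is recovered.
p_x, p_y = v @ x, v @ y
normalized = responses / (np.abs(p_x) * np.abs(p_y))
matches_eq5 = np.allclose(normalized, np.cos(true_angle - thetas))
```

The unnormalized `responses` are scaled by the lengths of the two projections, so a detector whose subspace is poorly represented in the image pair responds weakly at every angle. That is the behavior the aperture discussion above relies on.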
We now show that mapping units are well-suited to detecting subspace rotations, if a number of conditions are met.

If features and data are contrast normalized, then the projections will depend only on how well the image pair represents a given subspace rotation. The value $r_\theta$, in turn, will depend (a) on the transformation (via the subspace angle) and (b) on the content of the images (via the angle between each image and the invariant subspace). Thus, the output of the detector factors in both the presence of a transformation and our ability to discern it.

The fact that $r_\theta$ depends on image content makes it a suboptimal representation of the transformation. However, note that $r_\theta$ is a "conservative" detector that takes on a large value only if an input image pair $(x, y)$ complies with its transformation. We can therefore define a content-independent representation by pooling over multiple detectors $r_\theta$ that represent the same transformation but respond to different images.

Therefore, by stacking eigenfeatures $v$ and $v^\theta$ in matrices $U$ and $V$, respectively, we may define the representation $t$ of a transformation, given two images $x$ and $y$, as

$$ t = W^T P \left( U^T x * V^T y \right) \qquad (6) $$

where $P$ is a band-diagonal within-subspace pooling matrix, and $W$ is an appropriate across-subspace pooling matrix that supports content-independence.

Furthermore, the following conditions need to be met: (1) Images $x$ and $y$ are contrast-normalized, (2) For each row $u_f$ of $U$ there exists $\theta$ such that the corresponding row $v_f$ of $V$ can be written $v_f = \exp(i\theta) u_f$. In other words, filter pairs are related through rotations only.

Eq. 6 takes the same form as inference in a multi-view feature learning model (cf. Eq. 1), if we absorb the within-subspace pooling matrix $P$ into $W$.
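To make Eq. 6 concrete, here is a hand-constructed NumPy sketch for cyclic shifts of a 1-D signal. Nothing in it is learned, and several choices are assumptions made for illustration: the filters are analytic Fourier pairs, the rotated filters $\exp(i\theta) v$ are applied to $x$ as in Eq. 4, $P$ is written as a pair-summing matrix of shape (F/2, F) instead of the band-diagonal form, and there is one mapping unit per candidate shift.

```python
import numpy as np

n = 16
freqs = np.arange(1, n // 2)   # frequencies with two-dimensional subspaces
shifts = np.arange(n)          # one mapping unit per candidate shift

U_cols, V_cols = [], []
for s in shifts:
    for k in freqs:
        omega = 2 * np.pi * k / n
        v = np.exp(1j * omega * np.arange(n)) / np.sqrt(n)
        v_theta = np.exp(1j * omega * s) * v       # preferred angle omega * s
        U_cols += [v_theta.real, v_theta.imag]     # filters applied to x
        V_cols += [v.real, v.imag]                 # filters applied to y
U = np.stack(U_cols, axis=1)   # shape (n, F)
V = np.stack(V_cols, axis=1)   # shape (n, F)

F = U.shape[1]
# Within-subspace pooling: add the two products of each filter pair.
P = np.kron(np.eye(F // 2), np.ones((1, 2)))                  # (F/2, F)
# Across-subspace pooling: add all detectors tuned to the same shift.
W = np.kron(np.eye(len(shifts)), np.ones((len(freqs), 1)))    # (F/2, S)

def mapping_units(x, y):
    """Eq. 6: t = W^T P (U^T x * V^T y)."""
    return W.T @ (P @ ((U.T @ x) * (V.T @ y)))

rng = np.random.default_rng(1)
true_shift = 5
x = rng.standard_normal(n)
t = mapping_units(x, np.roll(x, true_shift))
detected_shift = int(np.argmax(t))

# A different image undergoing the same shift activates the same unit.
x2 = rng.standard_normal(n)
detected_shift_2 = int(np.argmax(mapping_units(x2, np.roll(x2, true_shift))))
```

Pooling over all frequencies is what makes the most active unit depend on the shift rather than on which frequencies happen to be present in a particular image.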
Learning amounts to identifying both the subspaces and the pooling matrix, so training a multi-view feature learning model can be thought of as performing multiple simultaneous diagonalizations of a set of transformations. When a dataset contains more than one transformation class, learning involves partitioning the set of orthogonal warps into commutative subsets and simultaneously diagonalizing each subset. Note that, in practice, complex filters can be represented by learning two-dimensional subspaces in the form of filter pairs. It is uncommon, albeit possible, to learn actually complex-valued features in practice.

It is interesting to note that condition (2) above implies that filters are normalized to have the same lengths. Imposing a norm constraint has been a common approach to stabilizing learning (Ranzato & Hinton, 2010; Memisevic, 2011; Susskind et al., 2011), but it has not been clear why imposing norm constraints helps. Pooling over multiple subspaces may, in addition to providing content-independent representations, also help deal with edge effects and noise, as well as with the fact that learned transformations may not be exactly orthogonal. In practice, it is also common to apply a sigmoid non-linearity after computing mapping unit activities, so that the output of a hidden variable can be interpreted as a probability.

Note that diagonalizing a single transformation, $L$, would amount to performing a kind of canonical correlations analysis (CCA), so learning a multi-view feature learning model may be thought of as performing multiple canonical correlation analyses with tied features. Similarly, modeling within-image structure by setting $x = y$ (Ranzato & Hinton, 2010) would amount to learning a PCA mixture with tied weights.
In the same way that neural networks can be used to implement CCA and PCA up to a linear transformation, the result of training a multi-view feature learning model is a simultaneous diagonalization only up to a linear transformation.

3.5. Relation to energy models

By concatenating images $x$ and $y$, as well as filters $v$ and $v^\theta$, we may approximate the subspace rotation detector (Eq. 4) with the response of an energy detector:

$$ r_\theta = \left( (v_R^T y) + ((v^\theta_R)^T x) \right)^2 + \left( (v_I^T y) + ((v^\theta_I)^T x) \right)^2 = 2 \left[ (v_R^T y)((v^\theta_R)^T x) + (v_I^T y)((v^\theta_I)^T x) \right] + (v_R^T y)^2 + ((v^\theta_R)^T x)^2 + (v_I^T y)^2 + ((v^\theta_I)^T x)^2 \qquad (7) $$

Eq. 7 is equivalent to Eq. 4 up to the four quadratic terms. The four quadratic terms are equal to the sum of the squared norms of the projections of $x$ and $y$ onto the invariant subspace. Thus, like the norm of the projections, they contribute information about the discernibility of transformations. This makes the energy response depend more on the alignment of the images with its subspace. However, like for the inner product detector (Eq. 4), the peak response is attained when both images reside within the detector's subspace and when their projections are rotated by the detector's preferred angle $\theta$.

By pooling over multiple rotation detectors, $r_\theta$, we obtain the equivalent of an energy response (Eq. 3). This shows that energy models applied to the concatenation of two images are well-suited to modeling transformations, too. It is interesting to note that both multi-view sparse coding models (Taylor et al., 2010) and energy models (Le et al., 2011) were recently shown to yield highly competitive performance in action recognition tasks, which require the encoding of motion in videos.

4. Experiments

4.1. Learning quadrature pairs

Figure 2 shows random subsets of input/output filter pairs learned from rotations of random dot images (top plot), and from a mixed dataset, consisting of random rotations and random translations (bottom plot). We separate the two layers of pooling, $W$ and $P$, and we constrain $P$ to be band-diagonal with entries $P_{i,i} = P_{i,i+1} = 1$ and 0 elsewhere. Thus, filters need to come in pairs, which we expect to be approximately in quadrature (each pair spans the subspace associated with a rotation detector $r_\theta$). Figure 2 shows that this is indeed the case after training. Here, we use a modification of a higher-order autoencoder (Memisevic, 2011) for training, but we expect similar results to hold for other multi-view models [5]. We discuss an application of separating pooling in detail in Section 5.

Figure 2. Learned quadrature filters. Top: Filters learned from rotated images. Bottom: Filters learned from images showing both rotations and translations.

Note that for the mixed dataset, both the set of rotations and the set of translations are sets of commuting warps (up to edge effects), but rotations do not commute with translations and vice versa. The figure shows that the model has learned to separate out the two types of transformation by devoting a subset of filters to encoding rotations and another subset to modeling translations.

[5] Code and datasets are available at http://www.cs.toronto.edu/~rfm/code/multiview/index.html

4.2. Learning "eigenmovies"

Both energy models and cross-correlation models can be applied to more than two images: Eq. 4 may be modified to contain all cross-terms, or all the ones that are deemed relevant (for example, adjacent frames, which would amount to a "Markov"-type gating model of a video). Alternatively, for the energy mechanism, we can compute the square of the concatenation of more than two images in place of Eq. 7, in which case we obtain the detector response

$$ r = \left( \sum_s (v^s_R)^T x_s \right)^2 + \left( \sum_s (v^s_I)^T x_s \right)^2 = \Omega + \sum_{s \neq t} \left( (v^s_R)^T x_s \right) \left( (v^t_R)^T x_t \right) + \sum_{s \neq t} \left( (v^s_I)^T x_s \right) \left( (v^t_I)^T x_t \right) \qquad (8) $$

where $\Omega$ contains the quadratic terms of the energy model. In analogy to Section 3.4, for the detector to function properly, features will need to satisfy $v^s_R = \exp(i\theta_s) v_R$ and $v^s_I = \exp(i\theta_s) v_I$ for appropriate filters $v_R$ and $v_I$.

We verify that training on videos leads to filters which approximately satisfy this condition as follows: We use a gated autoencoder where we set $U = V$, and we set $x = y$ to the concatenation of the 10 frames. In contrast to Section 4.1, we use a single (full) pooling matrix $W$. Figure 3 shows subsets of learned filters after training the model on shifted random dots (top) and natural movies cropped from the van Hateren database (van Hateren & Ruderman, 1998) (center). The learned filter-sequences represent repeated phase-shifts as expected. Thus, they form the "eigenmovies" of each respective transformation class.

Eq. 8 implies that learning videos requires consistency between the filters across time. Each factor, corresponding to a sequence of $T$ filters, can model only the repeated application of the same transformation. An inhomogeneous sequence that involves multiple different types of transformation can only be modeled by devoting separate filter sets to homogeneous subsequences. We verify that this is what happens during training, by using 10-frame videos showing random dots that first rotate at a constant speed for 5 frames, then translate at a constant speed for the remaining 5 frames. Orientation, speed and direction vary across movies. We trained a gated autoencoder like in the previous experiment.
The bottom plot in Figure 3 shows that the model learns to decompose the movies into (a) Fourier filters which are quiet in the first half of a movie and (b) rotation features which are quiet in the second half of the movie.

Figure 3. Subsets of filters showing six frames (frames 1, 2, 4, 6, 8 and 10) from the "eigenmovies" of synthetic movies showing translating random-dots (top); natural movies, cropped from the van Hateren broadcast TV database (middle); synthetic movies showing random-dots which rotate for 5 frames then translate for 5 frames (bottom).

5. Learning invariances by learning transformations

Our analysis suggests that detector responses, $r_\theta$, will not be affected by transformations they are tuned to, as these will only cause a rotation within the detector's subspace, leaving the norm of the projections unchanged. Any other transformation (like showing a completely different image pair), however, may change the representation: Projections may get larger or smaller as the transformation changes the degree of alignment of the images with the invariant subspaces.

This suggests that we can learn features that are invariant with respect to one type of transformation and at the same time selective with respect to any other type of transformation as follows: we separate the two pooling-levels into a band-matrix $P$ and a full matrix $W$ (cf. Section 4.1). After training the model on a transformation class, the first-level pooling activities $P^T \left( U^T x * V^T y \right)$ computed from a test image pair $(x, y)$ will constitute a transformation invariant code for this pair. Alternatively, we can use $P^T \left( U^T x * V^T x \right)$ if the test data does not come in the form of pairs but consists only of single images. In this case we obtain a representation of the null-transformation, but it will still be invariant.
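The invariance of the single-image code can be seen in a small NumPy toy. It is a sketch under assumptions, not the trained model from the experiments: the filters are analytic cosine/sine quadrature pairs for cyclic shifts of a 1-D signal with $U = V$, and the within-subspace pooling is written directly as a sum over each pair. The name `subspace_features` is hypothetical.

```python
import numpy as np

n = 16
freqs = np.arange(1, n // 2)
positions = np.arange(n)

# Quadrature pairs: real and imaginary parts of the Fourier eigenfeatures.
cos_filters = np.cos(2 * np.pi * np.outer(freqs, positions) / n)  # (K, n)
sin_filters = np.sin(2 * np.pi * np.outer(freqs, positions) / n)  # (K, n)

def subspace_features(x):
    """First-level pooling for a single image, with U = V:
    sum of the squared responses of each quadrature pair."""
    return (cos_filters @ x) ** 2 + (sin_filters @ x) ** 2

rng = np.random.default_rng(2)
x = rng.standard_normal(n)
other = rng.standard_normal(n)

feats = subspace_features(x)
feats_shifted = subspace_features(np.roll(x, 3))  # same content, translated
feats_other = subspace_features(other)            # different content

invariant_to_shift = np.allclose(feats, feats_shifted)
selective_to_content = not np.allclose(feats, feats_other)
```

The features are unchanged by the shift because it only rotates the projection inside each subspace, while a different image changes the projection lengths and therefore the code.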
We tested this approach on the "rotated MNIST"-dataset from Larochelle et al. (2007), which consists of 72000 MNIST digit images of size 28 × 28 pixels that are rotated by arbitrary random angles (−180 to 180 degrees; 12000 train-, 60000 test-cases, classes range from 0 to 9). Since the number of training cases is fairly large, most exemplars are represented at most angles, so even linear classifiers perform well (Larochelle et al., 2007). However, when reducing the number of training cases, the number of potential matches for any test case dramatically reduces, so classification error rates become much worse when using raw images or standard features.

We used a gated auto-encoder with 2000 factors and 200 mapping units, which we trained on image pairs showing rotating random-dots. Figure 4 shows the error rates when using subspace features (responses of the first layer pooling units) with subspace dimension 2. We used the features in a logistic regression classifier vs. k-nearest neighbors on the original images (we also tried logistic regression on raw images and logistic regression as well as nearest neighbors on 200-dimensional PCA-features, but the performance is worse in all these cases). The learned features are similar to the features shown in Figure 2, so they are not tuned to digits, and they are not trained discriminatively. They nevertheless consistently perform about as well as, or better than, nearest neighbor. Also, even at half the original dataset size, the subspace features still attain about the same classification performance as raw images on the whole training set. All parameters were set using a fixed hold-out set of 2000 images.

The experiment shows that the rotation detectors, $r_\theta$, are affected sufficiently by the aperture problem, such that they are selective to image content while being invariant to rotation.
This shows that we can "harness the aperture problem" to learn invariant features.

Figure 4. Classification error rate as a function of training set size on the "rotated MNIST" dataset, using raw images vs. subspace features trained on rotations.

6. Conclusions

We analyzed multi-view sparse coding models in terms of the joint eigenspaces of a set of transformations. Our analysis helps understand why Fourier features and circular Fourier features emerge when training transformation models on shifts and rotations, and why square-pooling models work well in action and motion recognition tasks. Our analysis furthermore shows how the aperture problem implies that we can learn invariant features as a by-product of learning about transformations.

The fact that squaring nonlinearities and multiplicative interactions can support the learning of relations suggests that these may help increase the role of statistical learning in vision in general. By learning about relations we may extend the applicability of sparse coding models beyond recognizing objects in static, single images, towards tasks that involve the fusion of multiple views, including inference about geometry.

Acknowledgments

This work was supported by the German Federal Ministry of Education and Research (BMBF) in the project 01GQ0841 (BFNT Frankfurt).

References

Adelson, E.H. and Bergen, J.R. Spatiotemporal energy models for the perception of motion. J. Opt. Soc. Am. A, 2(2):284–299, 1985.

Bergstra, James, Bengio, Yoshua, and Louradour, Jérôme. Suitability of V1 energy models for object classification. Neural Computation, pp. 1–17, 2010.

Bethge, M., Gerwinn, S., and Macke, J.H. Unsupervised learning of a steerable basis for invariant image representations. In Human Vision and Electronic Imaging XII. SPIE, February 2007.

Fleet, D., Wagner, H., and Heeger, D. Neural encoding of binocular disparity: Energy models, position shifts and phase shifts. Vision Research, 36(12):1839–1857, 1996.

Gray, Robert M. Toeplitz and circulant matrices: a review. Commun. Inf. Theory, 2:155–239, August 2005.

Horn, Roger A. and Johnson, Charles R. Matrix Analysis. Cambridge University Press, 1990.

Hyvärinen, Aapo and Hoyer, Patrik. Emergence of phase- and shift-invariant features by decomposition of natural images into independent feature subspaces. Neural Computation, 12:1705–1720, July 2000.

Hyvärinen, Aapo, Hurri, J., and Hoyer, Patrik O. Natural Image Statistics: A Probabilistic Approach to Early Computational Vision. Springer Verlag, 2009.

Kohonen, Teuvo. The Adaptive-Subspace SOM (ASSOM) and its use for the implementation of invariant feature detection. In Proc. ICANN'95, Int. Conf. on Artificial Neural Networks.

Larochelle, Hugo, Erhan, Dumitru, Courville, Aaron, Bergstra, James, and Bengio, Yoshua. An empirical evaluation of deep architectures on problems with many factors of variation. In Proceedings of the 24th International Conference on Machine Learning, 2007.

Le, Q.V., Zou, W.Y., Yeung, S.Y., and Ng, A.Y. Learning hierarchical spatio-temporal features for action recognition with independent subspace analysis. In Proc. CVPR, 2011. IEEE, 2011.

Memisevic, Roland. Gradient-based learning of higher-order image features. In Proceedings of the International Conference on Computer Vision, 2011.

Memisevic, Roland and Hinton, Geoffrey E. Learning to represent spatial transformations with factored higher-order Boltzmann machines. Neural Computation, 22(6):1473–92, 2010.

Qian, Ning. Computing stereo disparity and motion with known binocular cell properties. Neural Computation, 6:390–404, May 1994.

Ranzato, Marc'Aurelio and Hinton, Geoffrey E. Modeling Pixel Means and Covariances Using Factorized Third-Order Boltzmann Machines. In Computer Vision and Pattern Recognition, 2010.

Susskind, J., Memisevic, R., Hinton, G., and Pollefeys, M. Modeling the joint density of two images under a variety of transformations. In Proceedings of IEEE Conference on Computer Vision and Pattern Recognition, 2011.

Taylor, Graham W., Fergus, Rob, LeCun, Yann, and Bregler, Christoph. Convolutional learning of spatio-temporal features. In Proc. European Conference on Computer Vision, 2010.

van Hateren, L. and Ruderman, J. Independent component analysis of natural image sequences yields spatio-temporal filters similar to simple cells in primary visual cortex. Proc. Biological Sciences, 265(1412):2315–2320, 1998.
