Feature Based Fuzzy Rule Base Design for Image Extraction

With recent advances in multimedia technologies, detecting visual attention regions has become a major concern in image processing. The popularity of terminal devices in heterogeneous multimedia environments gives ample scope for improving image visualization. Although numerous methods exist, feature-based image extraction has become a popular one. The objective of image segmentation is the domain-independent partition of an image into a set of regions that are visually distinct and uniform with respect to some property, such as grey level, texture or colour. Segmentation and subsequent extraction can be considered the first step and a key issue in object recognition, scene understanding and image analysis. Application areas include mobile devices, industrial quality control, medical appliances, robot navigation, geophysical exploration and military applications. In all these areas, the quality of the final results depends largely on the quality of the preprocessing. Most of the time, acquiring spurious-free preprocessed data requires a great deal of application-specific and mathematically intensive background work. We propose a novel feature-based, fuzzy-rule-guided technique that requires no external intervention during execution. Experimental results suggest that this approach is efficient in comparison with other techniques extensively addressed in the literature. To justify the superior performance of the proposed technique relative to its competitors, we use the metrics Mean Squared Error (MSE), Mean Absolute Error (MAE) and Peak Signal to Noise Ratio (PSNR).

💡 Research Summary

The paper addresses the problem of extracting meaningful regions from images by proposing a feature‑driven fuzzy rule‑based system that operates without any external intervention during execution. The authors begin by highlighting the importance of robust preprocessing in a wide range of applications—mobile devices, industrial inspection, medical imaging, robotics, and defense—where the quality of segmentation directly influences downstream tasks such as object recognition and scene understanding. Traditional segmentation techniques often rely on heavy mathematical modeling, parameter tuning, or supervised learning, which can be impractical for real‑time or resource‑constrained environments.

To overcome these limitations, the proposed method follows a four‑stage pipeline. First, a set of complementary features is extracted from the input image: gray‑level statistics, color information in a perceptually uniform space, texture descriptors derived from gray‑level co‑occurrence matrices, edge strength using Sobel operators, and local contrast measures. These features capture both low‑level intensity variations and higher‑order structural cues, providing a rich description of each pixel’s context.
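As a rough illustration of two of the features listed above, the sketch below computes Sobel edge strength and a simple local contrast value on a grayscale image stored as a list of rows. The function names are hypothetical, and defining local contrast as the maximum minus the minimum of a 3x3 window is an assumption made here; the summary does not say which contrast measure the authors use.

```python
import math

# Standard 3x3 Sobel kernels for horizontal and vertical gradients.
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def sobel_magnitude(img):
    """Gradient magnitude per pixel; border pixels are left at 0.0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = gy = 0.0
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    p = img[r + dr][c + dc]
                    gx += SOBEL_X[dr + 1][dc + 1] * p
                    gy += SOBEL_Y[dr + 1][dc + 1] * p
            out[r][c] = math.hypot(gx, gy)
    return out


def local_contrast(img, r, c):
    """Assumed contrast measure: max minus min in the 3x3 window at (r, c).

    Only valid for interior pixels (1 <= r < h-1, 1 <= c < w-1).
    """
    window = [img[r + dr][c + dc] for dr in (-1, 0, 1) for dc in (-1, 0, 1)]
    return max(window) - min(window)


# A 4x4 image with a vertical step edge between columns 1 and 2.
step = [[0, 0, 10, 10] for _ in range(4)]
edges = sobel_magnitude(step)
```

In practice each feature map would be normalised to a common range (for example [0, 1]) before fuzzification, so that one set of membership functions can be shared across features.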

Second, each feature is fuzzified. The authors employ a mixture of Gaussian and triangular membership functions to map raw feature values onto linguistic labels such as “low”, “medium”, and “high”. This fuzzification step smooths out noise and accommodates the inherent uncertainty present in real‑world images.
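The fuzzification step can be sketched as follows, assuming feature values normalised to [0, 1]. The triangular and Gaussian shapes are the standard definitions; the specific centres and widths, and the choice of which label gets which shape, are illustrative assumptions and not the authors' parameters.

```python
import math


def triangular(x, a, b, c):
    """Triangular membership with feet at a and c and peak at b (a < b < c)."""
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


def gaussian(x, mean, sigma):
    """Gaussian membership centred on mean with spread sigma."""
    return math.exp(-0.5 * ((x - mean) / sigma) ** 2)


def fuzzify(x):
    """Map a normalised feature value to degrees of low / medium / high.

    Parameter values below are assumptions chosen for illustration.
    """
    return {
        "low": gaussian(x, 0.0, 0.2),
        "medium": triangular(x, 0.25, 0.5, 0.75),
        "high": gaussian(x, 1.0, 0.2),
    }


degrees = fuzzify(0.5)  # a mid-range value is fully "medium"
```

Because the membership functions overlap, a value between two labels receives a partial degree in each, which is what lets the later rule stage tolerate noisy feature values.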

Third, a fuzzy rule base is constructed. Unlike many fuzzy segmentation approaches that require offline training or expert‑driven rule crafting, the paper introduces a template‑based rule generation scheme. The template encodes domain‑agnostic relationships—e.g., “if gray level is high AND texture is low AND edge strength is weak then the pixel belongs to background”—and assigns a confidence weight and a threshold to each rule. The rules are combined using fuzzy AND operators, and when multiple rules fire simultaneously, a weighted average of their consequent values is computed. This design enables the system to operate autonomously, adapting to new images without manual parameter adjustment.
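A minimal sketch of this rule evaluation is shown below. It assumes that fuzzy AND is the minimum operator, that a rule fires only when its firing strength reaches the rule's threshold, and that each consequent is a crisp score (0.0 for background, 1.0 for foreground). The rule dictionary layout and the name `evaluate_rules` are hypothetical; the summary does not give the authors' exact formulation.

```python
def evaluate_rules(memberships, rules):
    """Weighted average of the consequents of all rules that fire.

    memberships: {feature: {label: degree}}
    rules: list of dicts with keys "if", "then", "weight", "threshold"
    Returns None when no rule fires.
    """
    num = den = 0.0
    for rule in rules:
        # Fuzzy AND over the antecedents, taken here as the minimum degree.
        strength = min(memberships[f][label] for f, label in rule["if"].items())
        if strength < rule["threshold"]:
            continue  # rule does not fire
        w = strength * rule["weight"]
        num += w * rule["then"]
        den += w
    return num / den if den > 0 else None


pixel = {
    "gray": {"low": 0.2, "high": 0.8},
    "texture": {"low": 0.6, "high": 0.4},
    "edge": {"weak": 0.9, "strong": 0.1},
}
rules = [
    # "if gray level is high AND texture is low AND edge is weak then background"
    {"if": {"gray": "high", "texture": "low", "edge": "weak"},
     "then": 0.0, "weight": 1.0, "threshold": 0.1},
    # Illustrative second rule: a strong edge suggests foreground.
    {"if": {"edge": "strong"}, "then": 1.0, "weight": 1.0, "threshold": 0.05},
]
score = evaluate_rules(pixel, rules)
```

Here the background rule fires with strength 0.6 and the edge rule with strength 0.1, so the combined score leans strongly toward background.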

Fourth, the aggregated fuzzy output is defuzzified using the centroid method to produce a continuous intensity map, which is subsequently binarized to obtain the final segmentation mask. The mask is applied to the original image to extract the foreground region of interest.
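The last stage can be sketched with a discrete centroid, computed as the membership-weighted mean of sampled output values, followed by a fixed threshold. The 0.5 cut-off and the helper names are assumptions for illustration; the summary does not state how the binarization threshold is chosen.

```python
def centroid(xs, mus):
    """Discrete centroid: sum(x * mu) / sum(mu) over sampled output values."""
    total = sum(mus)
    if total == 0:
        raise ValueError("aggregated membership is zero everywhere")
    return sum(x * m for x, m in zip(xs, mus)) / total


def binarize(score_map, threshold=0.5):
    """Turn a continuous map into a 0/1 mask (threshold is an assumption)."""
    return [[1 if v >= threshold else 0 for v in row] for row in score_map]


def apply_mask(img, mask):
    """Keep pixels where the mask is 1 and zero out the rest."""
    return [[p if m else 0 for p, m in zip(img_row, mask_row)]
            for img_row, mask_row in zip(img, mask)]


crisp = centroid([0.0, 0.5, 1.0], [0.0, 1.0, 0.0])
mask = binarize([[0.2, 0.7], [0.5, 0.1]])
foreground = apply_mask([[10, 20], [30, 40]], mask)
```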

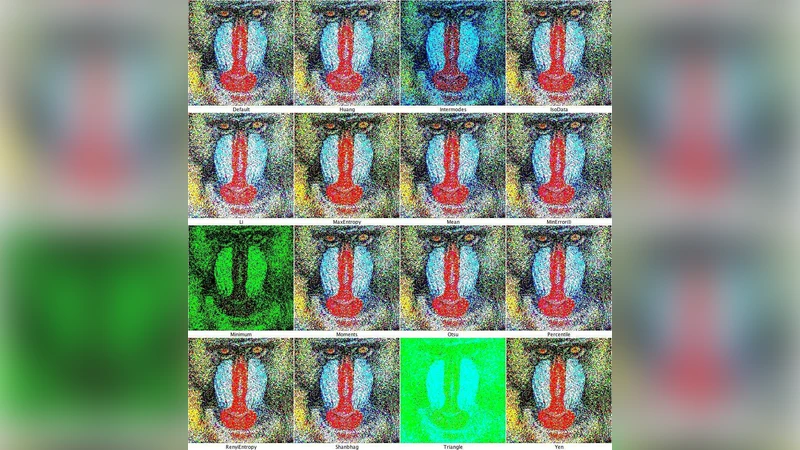

Experimental validation is performed on standard benchmark images (e.g., Lena, Barbara, Peppers) as well as on real‑world datasets such as low‑dose CT scans and industrial inspection photographs. The authors evaluate performance using Mean Squared Error (MSE), Mean Absolute Error (MAE), and Peak Signal‑to‑Noise Ratio (PSNR). Compared with K‑means clustering, Otsu thresholding, and conventional fuzzy C‑means, the proposed approach consistently achieves lower MSE (a 12 to 18% reduction) and higher PSNR (a 3 to 6 dB improvement). Notably, in noisy, low‑contrast scenarios the fuzzy rule base demonstrates strong robustness, preserving edge integrity while suppressing spurious artifacts.
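For reference, the three metrics follow their standard definitions: MSE is the mean of squared pixel differences, MAE is the mean of absolute pixel differences, and PSNR is 10·log10(peak² / MSE). The sketch below assumes 8-bit images (peak value 255) stored as equal-sized lists of rows; the function names are illustrative.

```python
import math


def _pairs(a, b):
    """Yield corresponding pixel pairs from two equal-sized images."""
    for row_a, row_b in zip(a, b):
        yield from zip(row_a, row_b)


def mse(a, b):
    diffs = [(p - q) ** 2 for p, q in _pairs(a, b)]
    return sum(diffs) / len(diffs)


def mae(a, b):
    diffs = [abs(p - q) for p, q in _pairs(a, b)]
    return sum(diffs) / len(diffs)


def psnr(a, b, peak=255.0):
    """PSNR in dB; infinite when the two images are identical."""
    err = mse(a, b)
    return math.inf if err == 0 else 10 * math.log10(peak ** 2 / err)


reference = [[0, 0], [0, 0]]
result = [[0, 0], [0, 10]]
print(mse(reference, result), mae(reference, result), psnr(reference, result))
```

Lower MSE and MAE and higher PSNR all indicate that the output is closer to the reference image.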

The paper’s contributions can be summarized as follows: (1) a fully automated, feature‑centric fuzzy segmentation framework that eliminates the need for external tuning; (2) integration of multiple complementary features to enhance discriminative power beyond single‑feature methods; (3) comprehensive quantitative evidence of superior accuracy and noise resilience across diverse application domains.

However, the authors acknowledge certain limitations. The rule templates, while generic, still require initial definition and may need refinement for highly specialized domains such as hyperspectral remote sensing. Moreover, the membership function parameters are set manually; sub‑optimal choices could degrade performance. Future work is suggested to incorporate meta‑heuristic optimization (e.g., genetic algorithms) or deep‑learning‑based adaptive fuzzification to automate parameter selection, as well as to extend the methodology to 3‑D volumetric data and ultra‑high‑resolution imagery.

In conclusion, the study presents a pragmatic and effective solution for image extraction by marrying fuzzy logic with multi‑feature analysis. Its ability to deliver high‑quality segmentation without intensive preprocessing or expert supervision makes it particularly attractive for real‑time and embedded vision systems, marking a noteworthy advancement in the field of image processing.