Knowledge Combination in Graphical Multiagent Models

A graphical multiagent model (GMM) represents a joint distribution over the behavior of a set of agents. One source of knowledge about agents’ behavior may come from game-theoretic analysis, as captured by several graphical game representations developed in recent years. GMMs generalize this approach to express arbitrary distributions, based on game descriptions or other sources of knowledge bearing on beliefs about agent behavior. To illustrate the flexibility of GMMs, we exhibit game-derived models that allow probabilistic deviation from equilibrium, as well as models based on heuristic action choice. We investigate three different methods of integrating these models into a single model representing the combined knowledge sources. To evaluate the predictive performance of the combined model, we treat as actual outcome the behavior produced by a reinforcement learning process. We find that combining the two knowledge sources, using any of the methods, provides better predictions than either source alone. Among the combination methods, mixing data outperforms the opinion pool and direct update methods investigated in this empirical trial.

💡 Research Summary

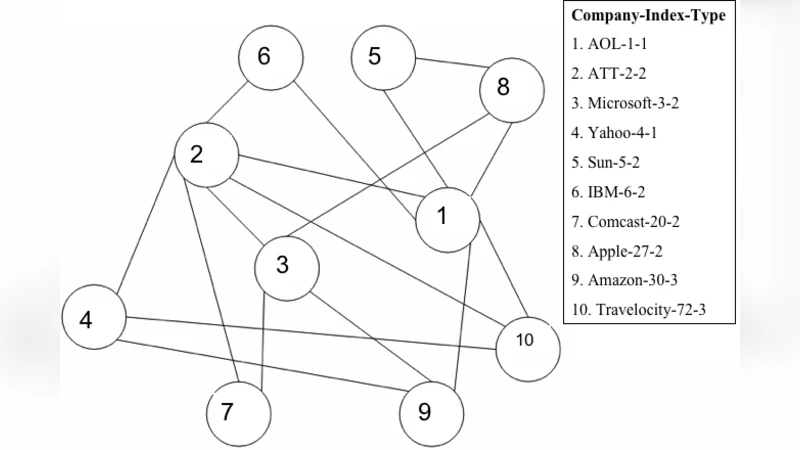

The paper introduces a unified framework for combining heterogeneous sources of knowledge about multi‑agent behavior using Graphical Multiagent Models (GMMs). A GMM represents a joint probability distribution over agents’ actions in a graphical structure, extending traditional graphical game representations that usually assume agents play exact equilibrium strategies. The authors first construct two distinct families of GMMs. The “game‑derived” models start from a formal game description (payoff matrices and interaction graph) but relax the strict equilibrium assumption by allowing each agent’s action to deviate probabilistically from its equilibrium strategy. This is achieved through a deviation parameter that controls the spread of a probability distribution around the equilibrium action. The second family, “heuristic” models, encodes simple decision rules such as reward‑proportional or experience‑based action selection, capturing behavior that may arise from bounded rationality or domain‑specific heuristics.
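The two model families can be sketched for a single agent's choice given its neighbors' actions. This is a minimal sketch, not the authors' construction. The summary says only that a deviation parameter controls the spread around equilibrium. Here we assume a logit-over-regret form, P(a) ∝ exp(−λ·regret(a)), where the regret of an action is the payoff lost relative to the agent's best response. For the heuristic family we assume reward-proportional choice. The names `regret`, `game_derived_dist`, `heuristic_dist`, `coordination`, and the parameter `lam` are all hypothetical. A full GMM would combine such local factors over every agent's neighborhood and normalize the product; the sketch shows only one agent's conditional.

```python
import math
from typing import Callable, Dict, Sequence, Tuple

# Hypothetical local payoff: u_i(own action, tuple of neighbors' actions).
Payoff = Callable[[int, Tuple[int, ...]], float]


def regret(payoff: Payoff, action: int, neighbors: Tuple[int, ...],
           actions: Sequence[int]) -> float:
    """Payoff the agent gives up by playing `action` instead of a best response."""
    best = max(payoff(b, neighbors) for b in actions)
    return best - payoff(action, neighbors)


def game_derived_dist(payoff: Payoff, neighbors: Tuple[int, ...],
                      actions: Sequence[int], lam: float) -> Dict[int, float]:
    """Assumed logit-over-regret form: P(a) proportional to exp(-lam * regret(a)).

    lam = 0 gives uniform play; a large lam concentrates mass on best responses.
    """
    weights = [math.exp(-lam * regret(payoff, a, neighbors, actions)) for a in actions]
    z = sum(weights)
    return {a: w / z for a, w in zip(actions, weights)}


def heuristic_dist(payoff: Payoff, neighbors: Tuple[int, ...],
                   actions: Sequence[int]) -> Dict[int, float]:
    """Assumed reward-proportional choice; negative payoffs are clipped to zero."""
    rewards = [max(payoff(a, neighbors), 0.0) for a in actions]
    z = sum(rewards)
    if z == 0.0:
        # No action earns a positive reward: fall back to uniform play.
        return {a: 1.0 / len(actions) for a in actions}
    return {a: r / z for a, r in zip(actions, rewards)}


def coordination(action: int, neighbors: Tuple[int, ...]) -> float:
    """Toy coordination payoff (hypothetical): one point per neighbor that matches."""
    return float(sum(1 for n in neighbors if n == action))
```

Consider an agent whose neighbors play `(1, 1, 0)`. With `lam=2.0`, `game_derived_dist(coordination, (1, 1, 0), [0, 1], 2.0)` assigns action 1 a probability of 1/(1 + e⁻²) ≈ 0.881. `heuristic_dist` assigns the same action 2/3. The deviation parameter therefore controls how sharply the game-derived model commits to the best response, while the heuristic model stays more diffuse.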

To integrate these models, the authors explore three combination mechanisms:

1) Opinion Pool treats each model as an independent belief and forms a weighted average of their probability tables. The weights reflect prior confidence in each knowledge source.
2) Direct Update selects one model as a base and updates its parameters by treating samples generated by the other model as additional training data, effectively performing a Bayesian posterior update.
3) Mixing Data merges the sample sets produced by both models into a single dataset and re‑learns a GMM with the same graphical structure from the combined data. It is the simplest of the three, and it exploits the complementary information without imposing strong independence assumptions.
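The three mechanisms can be contrasted on a tiny joint-action table. This is a minimal sketch under stated assumptions, not the paper's procedures:

- The opinion pool is taken to be a *linear* pool, w·p + (1 − w)·q.
- Direct update is modeled as a Dirichlet-multinomial update. The base model's probabilities become pseudo-counts scaled by a hypothetical `prior_strength`, and samples from the other model add observed counts.
- Mixing data re-estimates relative frequencies from the pooled samples. It uses a full joint table rather than re-learning a factored GMM.

All function names are hypothetical.

```python
import random
from collections import Counter
from typing import Dict, Hashable, List, Sequence

Dist = Dict[Hashable, float]  # joint action -> probability


def opinion_pool(p: Dist, q: Dist, w: float) -> Dist:
    """Linear opinion pool: w * p + (1 - w) * q over the union of outcomes."""
    support = set(p) | set(q)
    return {x: w * p.get(x, 0.0) + (1 - w) * q.get(x, 0.0) for x in support}


def direct_update(base: Dist, samples: Sequence[Hashable],
                  prior_strength: float) -> Dist:
    """Posterior mean of a Dirichlet whose pseudo-counts are prior_strength * base."""
    if prior_strength <= 0:
        raise ValueError("prior_strength must be positive")
    counts = Counter(samples)
    support = set(base) | set(counts)
    total = prior_strength + len(samples)
    return {x: (prior_strength * base.get(x, 0.0) + counts[x]) / total for x in support}


def mixing_data(samples_a: Sequence[Hashable], samples_b: Sequence[Hashable]) -> Dist:
    """Pool both sample sets and re-estimate each outcome by relative frequency."""
    counts = Counter(list(samples_a) + list(samples_b))
    n = sum(counts.values())
    return {x: c / n for x, c in counts.items()}


def sample(dist: Dist, n: int, rng: random.Random) -> List[Hashable]:
    """Draw n joint actions from a model, e.g. to feed direct_update or mixing_data."""
    outcomes = list(dist)
    return rng.choices(outcomes, weights=[dist[x] for x in outcomes], k=n)
```

In this sketch, the relative influence of each source is an explicit weight `w` in the opinion pool and an explicit `prior_strength` in direct update. In mixing data, it is set implicitly by how many samples each model contributes.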

The empirical evaluation uses a reinforcement‑learning (RL) process that repeatedly plays a graphical game. The resulting trajectory gradually converges toward a Nash equilibrium but still exhibits stochastic deviations, and it is taken as the ground‑truth behavior. For each combination method, the authors compute two scores: the log‑likelihood of the RL data under the resulting GMM, and the Kullback‑Leibler (KL) divergence between the empirical distribution of the RL data and the model. All three combined models outperform the single‑source baselines, confirming that integrating game‑theoretic and heuristic knowledge predicts the observed behavior better than either source alone. Among the methods, mixing data achieves the highest log‑likelihood and the lowest KL divergence. This suggests that the simple data‑fusion approach captures both the rational structure of the game and the variability introduced by learning dynamics more effectively than the opinion‑pool or direct‑update schemes.
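Both evaluation scores can be computed from a model table and a list of observed joint actions. In this sketch, the KL direction is assumed to be KL(empirical ‖ model), since the summary does not fix it. The small probability `floor` used to avoid log(0) is likewise an assumption, and all names are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, Hashable, Sequence

Dist = Dict[Hashable, float]


def empirical(data: Sequence[Hashable]) -> Dist:
    """Relative frequencies of the observed (e.g. RL-generated) joint actions."""
    counts = Counter(data)
    n = len(data)
    return {x: c / n for x, c in counts.items()}


def log_likelihood(model: Dist, data: Sequence[Hashable], floor: float = 1e-12) -> float:
    """Sum of log model probabilities; `floor` keeps unseen outcomes from giving log(0)."""
    return sum(math.log(max(model.get(x, 0.0), floor)) for x in data)


def kl_divergence(p: Dist, q: Dist, floor: float = 1e-12) -> float:
    """KL(p || q) = sum_x p(x) * log(p(x) / q(x)), summed over the support of p."""
    return sum(px * math.log(px / max(q.get(x, 0.0), floor))
               for x, px in p.items() if px > 0)


def entropy(p: Dist) -> float:
    """Shannon entropy in nats."""
    return -sum(px * math.log(px) for px in p.values() if px > 0)
```

When the model assigns positive probability to every observed outcome, the two scores are linked by the identity (1/n)·log L = −H(p̂) − KL(p̂ ‖ q). On a fixed dataset, a higher log-likelihood therefore always coincides with a lower KL(empirical ‖ model), which is consistent with mixing data winning on both measures.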

The study contributes (i) a formal definition of GMMs that can encode arbitrary probabilistic beliefs about multi‑agent actions, (ii) concrete constructions for game‑derived and heuristic‑derived GMMs, (iii) three principled combination strategies, and (iv) an empirical demonstration that even a naïve data‑mixing approach can substantially improve predictive performance. The authors suggest future work on scaling to larger, possibly dynamic interaction graphs, handling adversarial or malicious behavior, and developing online updating mechanisms that can incorporate new observations in real time. Overall, the paper positions GMMs as a flexible, extensible tool for synthesizing diverse knowledge sources in multi‑agent systems, with practical implications for prediction, planning, and control in complex interactive environments.