DEUS Full Observable ΛCDM Universe Simulation: the numerical challenge

We have performed the first-ever numerical N-body simulation of the full observable universe (DEUS “Dark Energy Universe Simulation” FUR “Full Universe Run”). The simulation evolved 550 billion particles on an Adaptive Mesh Refinement grid with more than two trillion computing points over the entire evolutionary history of the universe and across six orders of magnitude in length scale, from the size of the Milky Way to that of the whole observable universe. To date, this is the largest and most advanced cosmological simulation ever run. It provides unique information on the formation and evolution of the largest structures in the universe and exceptional support for future observational programs dedicated to mapping the distribution of matter and galaxies in the universe. The simulation ran on 4752 (of 5040) thin nodes of the BULL supercomputer CURIE, using more than 300 TB of memory for 10 million hours of computing time. About 50 PBytes of data were generated throughout the run. Using an advanced and innovative reduction workflow, the amount of useful stored data was reduced to 500 TBytes.

💡 Research Summary

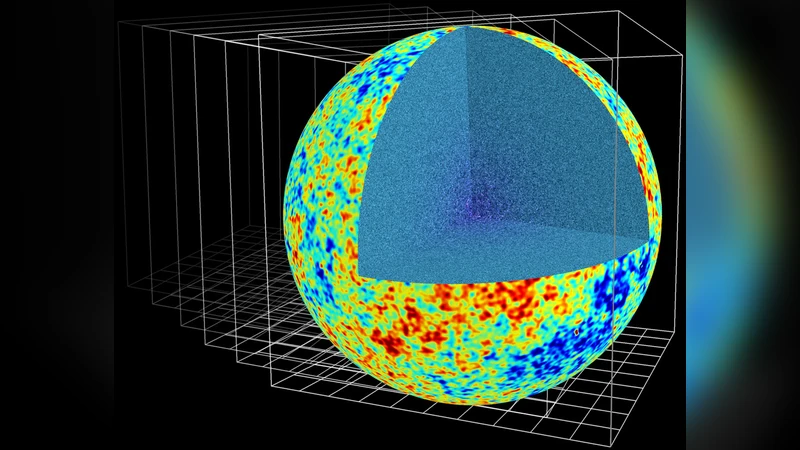

The paper presents the DEUS (Dark Energy Universe Simulation) Full Universe Run (FUR), the first N‑body simulation to model the entire observable universe within a ΛCDM framework. The authors evolved 550 billion particles on an Adaptive Mesh Refinement (AMR) grid with more than two trillion computing points, covering six orders of magnitude in spatial scale, from the size of a Milky Way‑type galaxy (tens of kiloparsecs) up to the full horizon of the observable cosmos (several gigaparsecs). The AMR approach dynamically refines high‑density regions, allowing the simulation to resolve non‑linear gravitational collapse and the emergence of large‑scale structures while still covering the full cosmological volume.
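The idea of refining only where matter is dense can be illustrated with a toy example. The sketch below is not the DEUS code: it is a one-dimensional illustration that assumes a simple particle-count criterion (split a cell when it holds more than `max_particles` particles), and the function names, threshold, and depth limit are all hypothetical choices made for the example.

```python
import random


def refine(particles, lo, hi, max_particles=8, level=0, max_level=6):
    """Return the leaf cells (lo, hi, level, count) of a toy 1D adaptive mesh.

    A cell is split in two whenever it holds more than `max_particles`
    particles, until `max_level` is reached (both values are illustrative).
    """
    inside = [x for x in particles if lo <= x < hi]
    if len(inside) <= max_particles or level == max_level:
        return [(lo, hi, level, len(inside))]
    mid = 0.5 * (lo + hi)
    return (refine(inside, lo, mid, max_particles, level + 1, max_level)
            + refine(inside, mid, hi, max_particles, level + 1, max_level))


def demo(seed=0):
    """Build a uniform background plus one dense clump and refine it."""
    rng = random.Random(seed)
    particles = [rng.random() for _ in range(64)]  # smooth background
    # Dense clump near x = 0.3, clamped so every particle stays in [0, 1).
    particles += [min(max(rng.gauss(0.3, 0.005), 0.0), 0.999999)
                  for _ in range(256)]
    return particles, refine(particles, 0.0, 1.0)


if __name__ == "__main__":
    _, leaves = demo()
    for lo, hi, level, count in leaves:
        print(f"[{lo:.4f}, {hi:.4f})  level={level}  particles={count}")
```

Running the demo shows small, deeply refined cells around the clump and coarse cells elsewhere, which is the behaviour that lets an adaptive grid spend its computing points where structure is forming.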

Computationally, the run was executed on the CURIE supercomputer at the French national computing center. Out of 5 040 thin nodes, 4 752 were allocated, roughly 94 % of the machine’s thin‑node partition. The job used more than 300 TB of memory and approximately 10 million hours of computing time, making it one of the most demanding cosmological calculations to date. Over the course of the run, which followed the entire evolutionary history of the universe, the simulation generated about 50 PB of raw data. To overcome storage constraints, the team designed a real‑time reduction workflow that extracts scientifically relevant fields (density, potential, particle subsamples, statistical summaries) and compresses them, reducing the final archive to roughly 500 TB (about 1 % of the original volume) without sacrificing the information needed for downstream analyses.
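The ratios quoted above follow directly from the headline figures. The short check below reproduces them; it assumes decimal (SI) prefixes for TB and PB, treats "more than 300 TB" as a lower bound, and the derived per‑node and per‑particle averages are illustrative back‑of‑the‑envelope values rather than numbers reported by the authors.

```python
# Headline figures quoted in the text; decimal (SI) prefixes are an assumption.
NODES_USED = 4752
NODES_TOTAL = 5040
RAW_BYTES = 50e15       # about 50 PB generated during the run
STORED_BYTES = 500e12   # about 500 TB kept after reduction
MEMORY_BYTES = 300e12   # "more than 300 TB", so a lower bound
N_PARTICLES = 550e9     # 550 billion particles


def resource_summary():
    """Derive simple ratios from the quoted figures."""
    return {
        "node_fraction": NODES_USED / NODES_TOTAL,
        "reduction_factor": RAW_BYTES / STORED_BYTES,
        "stored_fraction": STORED_BYTES / RAW_BYTES,
        # Averages only: memory is not necessarily spread evenly.
        "memory_per_node_gb": MEMORY_BYTES / NODES_USED / 1e9,
        "memory_per_particle_bytes": MEMORY_BYTES / N_PARTICLES,
    }


if __name__ == "__main__":
    for name, value in resource_summary().items():
        print(f"{name}: {value:.4g}")
```

The node fraction comes out at about 0.943 and the reduction factor at 100, consistent with the "roughly 94 %" and "about 1 %" figures in the text.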

The scientific output provides a high‑resolution, statistically robust picture of structure formation under the standard ΛCDM model. Power spectra, bias parameters, two‑point correlation functions, and higher‑order statistics derived from the simulation match current observational constraints and extend them to larger scales and earlier epochs. The data reveal the formation histories of superclusters, cosmic walls, and the filamentary cosmic web, offering a benchmark for interpreting upcoming surveys such as Euclid, the Vera C. Rubin Observatory’s LSST, and the Square Kilometre Array (SKA). Moreover, the simulation enables precise testing of dark energy and dark matter parameter spaces by comparing predicted large‑scale clustering with future measurements.

On the technical side, the authors discuss challenges related to massive parallelism, memory distribution, I/O bandwidth, and fault tolerance. They introduced novel data structures and communication protocols to synchronize particle data with the hierarchical AMR grid efficiently. The real‑time data reduction pipeline, built on a combination of in‑situ analysis and lossless compression, dramatically cuts I/O overhead and storage costs, setting a new standard for exascale scientific workflows.

In summary, DEUS‑FUR represents a watershed achievement that bridges theoretical cosmology and observational astronomy. By simulating the full observable universe down to scales comparable to the size of a Milky Way‑type galaxy, it provides an essential reference model for interpreting next‑generation cosmological surveys and pushes the limits of high‑performance computing in astrophysics.