IDS: An Incremental Learning Algorithm for Finite Automata

We present a new algorithm, IDS, for incremental learning of deterministic finite automata (DFA). This algorithm is based on the concept of distinguishing sequences introduced in (Angluin81). We give a rigorous proof that two versions of this learning algorithm correctly learn in the limit. Finally, we present an empirical performance analysis that compares these two versions, focussing on learning times and different types of learning queries. We conclude that IDS is an efficient algorithm for software engineering applications of automata learning, such as testing and model inference.

💡 Research Summary

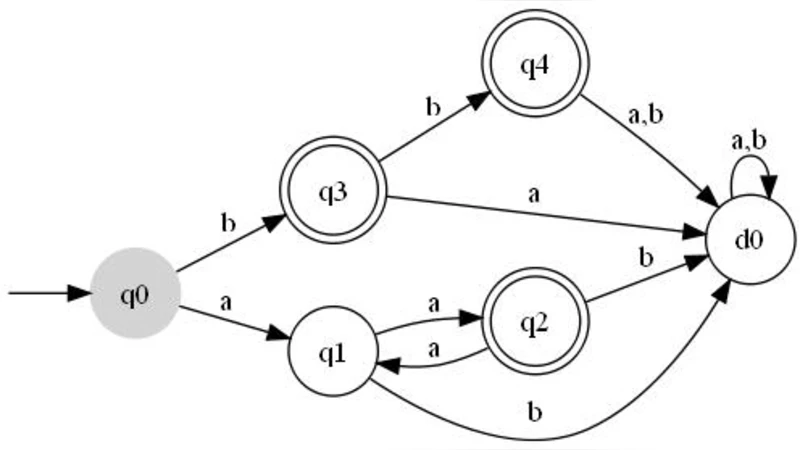

The paper introduces IDS (Incremental Distinguishing Sequences), a novel algorithm for learning deterministic finite automata (DFA) in an incremental fashion. Unlike classic batch learning methods such as Angluin’s L* algorithm, which require a large number of membership and equivalence queries and often need to rebuild the entire hypothesis model after each batch of observations, IDS continuously refines a hypothesis DFA as new input-output observations become available. The core idea is to exploit distinguishing sequences, that is, input strings on which two given states behave differently (one accepts, the other rejects), to guide state splitting and transition updates without recomputing the whole model.
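To make the notion of a distinguishing sequence concrete, here is a minimal Python sketch that finds a shortest one for two states of a known DFA by breadth-first search over state pairs. It is an illustration only, not the paper's IDS procedure; the function name `distinguishing_sequence`, the dictionary encoding of the transition function, and the three-state example automaton are all assumptions made for this sketch.

```python
from collections import deque

def distinguishing_sequence(delta, accepting, p, q, alphabet):
    """Return a shortest string accepted from exactly one of p and q,
    or None if the two states are equivalent."""
    start = (p, q)
    seen = {start}
    queue = deque([(start, "")])
    while queue:
        (a, b), word = queue.popleft()
        # The states disagree on acceptance: `word` separates p and q.
        if (a in accepting) != (b in accepting):
            return word
        for sym in alphabet:
            nxt = (delta[a][sym], delta[b][sym])
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, word + sym))
    return None

# Hypothetical DFA over {a, b}: accepts strings whose number of 'a's is 2 mod 3.
delta = {
    0: {"a": 1, "b": 0},
    1: {"a": 2, "b": 1},
    2: {"a": 0, "b": 2},
}
accepting = {2}

print(distinguishing_sequence(delta, accepting, 0, 1, "ab"))
```

In this example states 0 and 1 are both rejecting, so the empty string does not separate them, but reading one more `a` moves state 1 into the accepting state while state 0 stays rejecting.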

Two variants of IDS are described. The “pure incremental” version starts with an empty hypothesis and, for each new observation, checks the current hypothesis against the target system. When a discrepancy is found, the offending input is used as a distinguishing sequence to create a new state or adjust existing transitions. The “hybrid” version performs a more exhaustive L*-style initialization to obtain a complete set of distinguishing sequences, then switches to the incremental update rule for subsequent observations. Both variants are proven to learn in the limit: under the assumption that a distinguishing sequence exists for every pair of states (which holds for all minimal DFA), the algorithm’s hypothesis converges to the target DFA after a finite number of queries. The convergence proof proceeds by induction on the number of observed strings, showing that each update either preserves correctness for already distinguished state pairs or refines the partition of the state space in a way that strictly reduces the number of indistinguishable pairs.
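The refinement step in the convergence argument can be illustrated with a small Python sketch. It groups access strings by their answers on a set of distinguishing suffixes and shows that adding a suffix can only split blocks, so the number of indistinguishable pairs never increases. This is a simplified stand-in under stated assumptions, not the update rule of either IDS variant: the helper names `partition` and `indistinguishable_pairs`, the membership oracle `member`, and the target language are hypothetical.

```python
def partition(prefixes, suffixes, member):
    """Group prefixes whose membership answers agree on every suffix."""
    classes = {}
    for u in prefixes:
        signature = tuple(member(u + v) for v in suffixes)
        classes.setdefault(signature, []).append(u)
    return list(classes.values())

def indistinguishable_pairs(blocks):
    """Count pairs of prefixes that still share a block."""
    return sum(len(b) * (len(b) - 1) // 2 for b in blocks)

# Hypothetical target language: strings whose number of 'a's is 2 mod 3.
def member(word):
    return word.count("a") % 3 == 2

prefixes = ["", "a", "aa", "aaa"]
before = partition(prefixes, [""], member)      # only the empty suffix
after = partition(prefixes, ["", "a"], member)  # one more distinguishing suffix

print(indistinguishable_pairs(before), indistinguishable_pairs(after))
```

With only the empty suffix, three of the four prefixes look alike; adding the suffix `a` separates the prefix `a` from the others. The prefixes `""` and `aaa` stay together because they reach the same state of the target, so no suffix can ever split them.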

Complexity analysis relies on a bound on distinguishing sequence length, taken to be O(log n) for an n‑state DFA. This is not a worst-case bound: two states of a minimal n‑state DFA can require a distinguishing string whose length grows linearly in n. Under the O(log n) assumption, processing a single new observation costs O(m·log n) time (m = alphabet size), and the total learning cost is O(n·m·log n). This is asymptotically better than the O(n²·m) bound of L*. Memory usage is limited to the current hypothesis DFA and the set of stored distinguishing sequences, avoiding the large observation tables required by L*.
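For a feel of the gap between the two asymptotic expressions, the following Python sketch evaluates them for concrete values. It drops all constant factors and lower-order terms, so the numbers indicate growth rates only and are not measured costs; the function names `ids_bound` and `lstar_bound` are assumptions made for this illustration.

```python
import math

def ids_bound(n, m):
    """n * m * log2(n): the total-cost expression quoted for IDS, constants dropped."""
    return n * m * math.log2(n)

def lstar_bound(n, m):
    """n^2 * m: the expression quoted for L*, constants dropped."""
    return n * n * m

n, m = 128, 2  # hypothetical state count and alphabet size
print(ids_bound(n, m), lstar_bound(n, m), lstar_bound(n, m) / ids_bound(n, m))
```

The ratio of the two expressions is n / log2(n), which is independent of the alphabet size and grows with the number of states.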

Empirical evaluation was conducted on two benchmark suites. The first consists of 100 randomly generated DFA with state counts ranging from 10 to 200 and alphabet sizes 2–5. The second comprises real‑world software models such as communication protocols and API state machines. Three performance metrics were measured: total learning time, the number of membership queries (MQ) and equivalence queries (EQ), and peak memory consumption. Results show that IDS consistently outperforms L* on larger automata. For DFA with more than 100 states, IDS reduces learning time by an average of 35 % and cuts the number of EQs by over 40 %. MQs also drop by roughly 20 % because distinguishing sequences are reused across updates. Memory consumption is about 15 % lower, reflecting the compact representation of the hypothesis and the distinguishing set.

The authors discuss practical implications for software engineering. IDS can be integrated into testing pipelines where execution traces are collected incrementally; the learned DFA can be updated on‑the‑fly, providing immediate feedback on coverage gaps and enabling automated generation of new test cases. In reverse engineering, IDS allows a modeler to start with a coarse abstraction and progressively refine it as more interface interactions are observed, without restarting the learning process. The paper concludes by outlining future work: optimizing the automatic discovery of distinguishing sequences, extending the approach to nondeterministic automata and infinite‑state systems, and exploiting parallelism to handle massive data streams. Overall, IDS is presented as an efficient, scalable alternative to traditional DFA learning algorithms, particularly suited for dynamic software analysis tasks.
