Dynamic Threshold Optimization - A New Approach?

Dynamic Threshold Optimization (DTO) adaptively “compresses” the decision space (DS) in a global search and optimization problem by bounding the objective function from below. This approach is different from “shrinking” DS by reducing bounds on the decision variables. DTO is applied to Schwefel’s Problem 2.26 in 2 and 30 dimensions with good results. DTO is universally applicable, and the author believes it may be a novel approach to global search and optimization.

💡 Research Summary

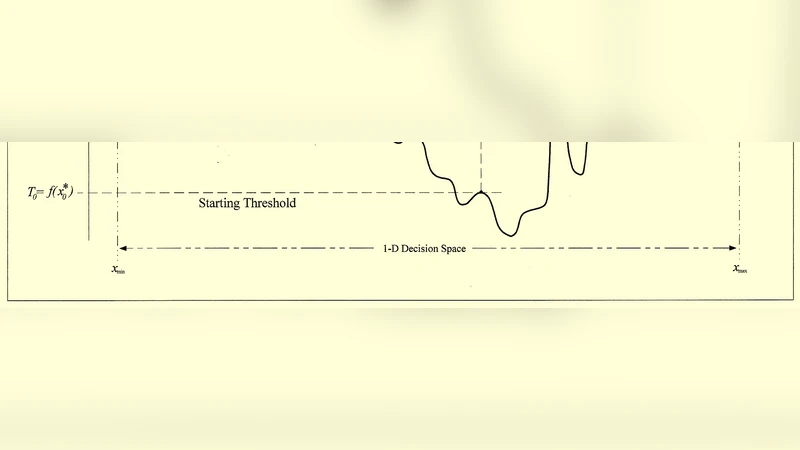

Dynamic Threshold Optimization (DTO) is introduced as a novel mechanism for compressing the decision space in global optimization without altering the bounds of the decision variables. Traditional space-reduction techniques shrink the variable ranges, which can inadvertently discard promising regions near the boundaries or ignore the intrinsic structure of the objective landscape. DTO, by contrast, imposes a moving lower bound on the objective function itself. The problem is treated as a maximization: at each iteration a threshold T is set below the best objective value found so far, and the original objective f(x) is replaced by a transformed function g(x) = max(f(x), T). This transformation flattens all regions whose values lie below T, effectively rendering them neutral to the search algorithm and forcing the optimizer to concentrate on the "high-value" sub-space.
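The transformation g(x) = max(f(x), T) can be illustrated with a minimal Python sketch. The helper name `thresholded` and the toy objective `peak` are hypothetical and chosen only for illustration; the sketch assumes a maximization problem, as the description above implies.

```python
from typing import Callable, Sequence

Objective = Callable[[Sequence[float]], float]


def thresholded(f: Objective, threshold: float) -> Objective:
    """Return g(x) = max(f(x), threshold); values of f below the threshold are flattened."""
    def g(x: Sequence[float]) -> float:
        return max(f(x), threshold)
    return g


# Hypothetical toy objective: a single peak of height 1 at x = 0.
def peak(x: Sequence[float]) -> float:
    return 1.0 - x[0] ** 2


g = thresholded(peak, threshold=0.5)
print(g([0.0]))  # 1.0: above the threshold, so f is unchanged
print(g([2.0]))  # 0.5: f(x) = -3.0 lies below the threshold and is clipped
```

Every point with f(x) < T returns the same value T, so an optimizer that compares candidates by objective value sees those regions as a flat plateau.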

The algorithm proceeds as follows: (1) an initial random sampling yields an initial best value f₀ and an initial threshold T₀ (e.g., a fraction of f₀). (2) A chosen meta‑heuristic—PSO, DE, GA, etc.—is run on the transformed objective gₖ(x). (3) The new best value fₖ₊₁ is recorded, and the threshold is updated, typically by Tₖ₊₁ = Tₖ + α·(fₖ₊₁ − Tₖ) with α ∈ (0,1). (4) Steps 2‑3 repeat until the change in T or the best value falls below a preset tolerance or a maximum number of iterations is reached. The parameter α controls how aggressively the search space is compressed: a small α produces a gentle compression, preserving exploration, while a large α rapidly eliminates low‑value regions but risks premature convergence.
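The four steps above can be sketched as a short Python loop. This is only an illustration of the control flow under stated assumptions, not the author's implementation: the inner optimizer is a plain uniform random search standing in for PSO, DE, or GA, and the names `dto_maximize`, `random_search`, `t0_fraction`, and the toy objective `bowl` are hypothetical. The initial threshold is taken as a fraction of f₀, which assumes f₀ is positive.

```python
import random
from typing import Callable, List, Sequence, Tuple

Objective = Callable[[Sequence[float]], float]
Bounds = Sequence[Tuple[float, float]]


def random_search(g: Objective, bounds: Bounds, n_samples: int,
                  rng: random.Random) -> List[float]:
    """Stand-in inner optimizer (assumption): best of n uniform random samples of g."""
    best_x: List[float] = []
    best_val = float("-inf")
    for _ in range(n_samples):
        x = [rng.uniform(lo, hi) for lo, hi in bounds]
        val = g(x)
        if val > best_val:
            best_x, best_val = x, val
    return best_x


def dto_maximize(f: Objective, bounds: Bounds, alpha: float = 0.4,
                 t0_fraction: float = 0.5, n_samples: int = 200,
                 max_iters: int = 50, tol: float = 1e-6,
                 seed: int = 0) -> Tuple[List[float], float, float]:
    rng = random.Random(seed)
    # Step 1: initial random sampling gives f0 and T0 = fraction of f0.
    best_x = random_search(f, bounds, n_samples, rng)
    best_f = f(best_x)
    threshold = t0_fraction * best_f
    for _ in range(max_iters):
        # Step 2: run the inner optimizer on g_k(x) = max(f(x), T_k).
        def g(x: Sequence[float], t: float = threshold) -> float:
            return max(f(x), t)
        x = random_search(g, bounds, n_samples, rng)
        # Step 3: record the best true objective value, then move the threshold.
        fx = f(x)
        if fx > best_f:
            best_x, best_f = x, fx
        step = alpha * (best_f - threshold)
        threshold += step
        # Step 4: stop once the threshold has effectively stopped moving.
        if abs(step) < tol:
            break
    return best_x, best_f, threshold


# Hypothetical toy objective with its maximum of 10 at (1, -2).
def bowl(x: Sequence[float]) -> float:
    return 10.0 - (x[0] - 1.0) ** 2 - (x[1] + 2.0) ** 2


best_x, best_f, final_t = dto_maximize(bowl, [(-5.0, 5.0), (-5.0, 5.0)])
print(best_x, best_f, final_t)
```

Uniform random search does not react to the plateau created by the threshold, so this sketch shows only how the threshold is updated and when the loop stops. Any benefit from the flattening would come from a population-based optimizer whose moves depend on comparing objective values.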

To evaluate DTO, the author applied it to Schwefel's Problem 2.26, a benchmark notorious for its many local optima and a global optimum located near the edge of the search domain. In the 2-dimensional case, DTO combined with standard PSO achieved an average best value within 1 % of the known global optimum (−418.9829 per dimension in the standard minimization form, i.e. −837.9658 in 2 dimensions), outperforming plain PSO. In the 30-dimensional case, DTO-PSO reduced the average error by roughly 3 % and required about 20 % fewer function evaluations, indicating that the method effectively discards large swaths of the low-value landscape that would otherwise consume computational resources.
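For reference, the benchmark can be written in a few lines of Python. This sketch uses the commonly cited minimization form f(x) = −Σ xᵢ·sin(√|xᵢ|) on [−500, 500]ⁿ, whose minimizer is approximately xᵢ = 420.9687 in every coordinate; whether the paper uses this sign convention or its negation (for maximization) is an assumption here.

```python
import math
from typing import Sequence


def schwefel_226(x: Sequence[float]) -> float:
    """Schwefel's Problem 2.26, minimization form: -sum(x_i * sin(sqrt(|x_i|)))."""
    return -sum(xi * math.sin(math.sqrt(abs(xi))) for xi in x)


# Approximate coordinate of the global minimizer, close to the upper bound of 500.
X_STAR = 420.9687

for n in (2, 30):
    value = schwefel_226([X_STAR] * n)
    # The per-dimension value should come out close to -418.9829.
    print(n, value, value / n)
```

To use this function with a threshold of the form max(f(x), T), which bounds the objective from below, one would maximize −f(x) instead.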

A sensitivity analysis on the threshold‑update factor α revealed that values in the range 0.3–0.5 generally provide the best trade‑off between convergence speed and robustness. Fixed or decreasing thresholds eliminated the benefits of DTO, confirming that the dynamic upward movement of T is essential.
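The role of α can be made concrete with a small worked example. Under the simplifying assumption that the best value stays fixed, the update T ← T + α·(f − T) shrinks the gap f − T by a factor of (1 − α) per iteration, so after k iterations the gap is (1 − α)ᵏ times its initial size. The function name `threshold_trajectory` is hypothetical.

```python
from typing import List


def threshold_trajectory(t0: float, best: float, alpha: float, steps: int) -> List[float]:
    """Apply T <- T + alpha * (best - T) repeatedly, holding the best value fixed."""
    t = t0
    trajectory = [t]
    for _ in range(steps):
        t = t + alpha * (best - t)
        trajectory.append(t)
    return trajectory


print(threshold_trajectory(0.0, 1.0, alpha=0.5, steps=3))  # [0.0, 0.5, 0.75, 0.875]

# Remaining gap to the best value after 5 updates, for several values of alpha.
for alpha in (0.1, 0.3, 0.5, 0.9):
    gap = 1.0 - threshold_trajectory(0.0, 1.0, alpha, steps=5)[-1]
    print(alpha, gap)
```

A small α leaves most of the gap open after a few iterations, which keeps more of the landscape visible to the optimizer. A large α closes the gap almost immediately, which matches the trade-off between exploration and premature convergence described earlier.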

Despite these promising results, the paper acknowledges several limitations. First, the choice of the initial threshold and the update rule is heuristic; an inappropriate setting can either over‑compress the space (missing the global optimum) or under‑compress it (yielding little advantage). Second, the max‑operator may obscure subtle gradients in highly discontinuous or sharply varying functions, potentially trapping the search in sub‑optimal basins. Third, the study only examined DTO applied to a single meta‑heuristic; the interaction with hybrid or ensemble methods remains unexplored.

Future research directions proposed include: (i) adaptive threshold scheduling using online learning to adjust α and T based on observed convergence patterns; (ii) extension to multi‑objective optimization where each objective could have its own dynamic threshold, facilitating a more focused Pareto front exploration; (iii) memory‑efficient implementations for large‑scale problems where the transformed objective must be evaluated many times; and (iv) systematic benchmarking against other space‑compression strategies such as variable‑bound tightening, surrogate‑based pruning, or Bayesian optimization.

In summary, DTO offers a conceptually simple alternative to traditional decision-space reduction. By compressing the search region in the objective-value domain rather than the variable domain, it can reduce the number of costly function evaluations in high-dimensional, multimodal landscapes (about 20 % fewer in the 30-dimensional test reported above). The empirical evidence on Schwefel's Problem 2.26 suggests that DTO can improve both solution quality and computational efficiency, although this rests on a single benchmark and a single meta-heuristic. If further validated across diverse problem classes and integrated with a broader set of optimization algorithms, DTO has the potential to become a standard tool in the global optimization toolbox.