Current Web Application Development and Measurement Practices for Small Software Firms

This paper discusses issues in current development and measurement practices identified in a pilot study of small Jordanian software firms. The study investigated whether developers follow development and measurement best practices in web application development. The analysis was conducted in two stages: first, the development and measurement practices were grouped using variable clustering (hierarchical clustering); second, the degree of acceptance of each practice was determined using mean intervals. The findings of this survey will be used to build a new methodology for developing web applications in small software firms.

💡 Research Summary

The paper reports on an exploratory study that examined the current development and measurement practices employed by small software firms in Jordan when building web applications. The authors set out to determine whether these firms adhere to recognized best‑practice guidelines and to quantify the degree of acceptance for each practice. Data were collected through a structured questionnaire administered to 120 developers across roughly 30 companies, covering 45 distinct practices ranging from traditional requirements management and version control to more modern techniques such as continuous integration, automated performance testing, and user‑experience evaluation.

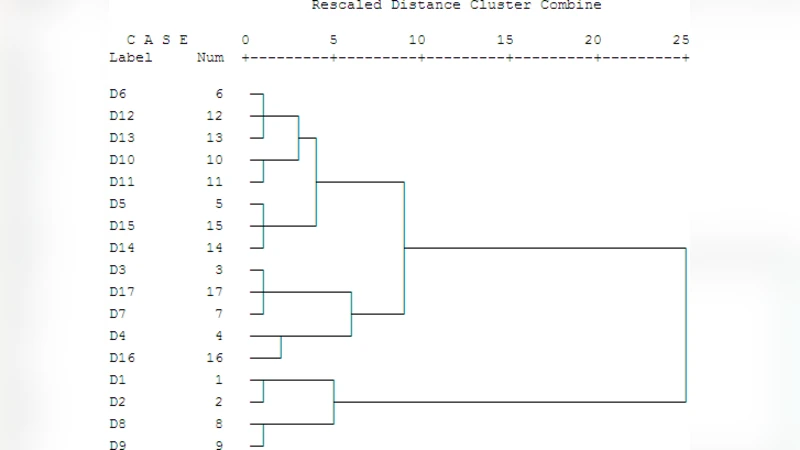

The analysis proceeded in two distinct phases. First, the authors applied variable clustering to the questionnaire responses, grouping highly correlated items into coherent clusters. Using principal‑component‑based clustering (implemented in SPSS/R), they identified five major clusters: (1) Requirements and Design Management, (2) Code Quality Management, (3) Testing and Verification, (4) Deployment/Operations Automation, and (5) User‑Centric Evaluation. Hierarchical clustering (Ward’s method with Euclidean distance) was then used to visualize the relationships among these clusters and to confirm their stability.
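The grouping idea in this first phase can be illustrated with a small sketch. It is not the authors' SPSS/R procedure: it swaps in plain average-linkage agglomerative clustering over the distance 1 - |r| between questionnaire items, instead of principal-component-based clustering or Ward's method. The item names and response values are invented for illustration.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length response lists.

    Assumes neither list is constant (a constant item has zero variance).
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def cluster_items(items, n_clusters):
    """Group questionnaire items whose responses are strongly correlated.

    items: dict mapping item name -> list of Likert responses,
           one response per respondent, same respondent order for every item.
    Returns a list of clusters, each a sorted list of item names.
    """
    names = list(items)
    # Distance between two items: 1 - |r|, so strongly correlated items are close.
    dist = {
        (a, b): 1 - abs(pearson(items[a], items[b]))
        for a in names for b in names if a != b
    }
    clusters = [[name] for name in names]

    def linkage(c1, c2):
        # Average linkage: mean pairwise distance between the two clusters.
        return sum(dist[(a, b)] for a in c1 for b in c2) / (len(c1) * len(c2))

    while len(clusters) > n_clusters:
        pairs = [(i, j) for i in range(len(clusters))
                 for j in range(i + 1, len(clusters))]
        i, j = min(pairs, key=lambda p: linkage(clusters[p[0]], clusters[p[1]]))
        clusters[i] = clusters[i] + clusters[j]  # merge the closest pair
        del clusters[j]
    return [sorted(c) for c in clusters]


# Hypothetical responses from six respondents (not data from the study).
responses = {
    "requirements_review": [5, 4, 4, 3, 5, 2],
    "design_docs": [5, 4, 3, 3, 5, 2],
    "ci_pipeline": [1, 2, 3, 1, 2, 4],
    "automated_perf_tests": [1, 2, 2, 1, 2, 5],
}
groups = cluster_items(responses, n_clusters=2)
print(groups)
```

With these invented responses, the two requirements and design items end up in one group and the two automation items in the other. A real analysis would use a statistics package and inspect the dendrogram before fixing the number of clusters.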

In the second phase, the mean score for each practice within a cluster was calculated and compared against a predefined acceptance interval: 1‑2 (not accepted), 2‑3 (low acceptance), 3‑4 (moderate acceptance), and 4‑5 (high acceptance). The results showed that the first three clusters—especially requirements management, version control, and basic unit testing—received moderate to high acceptance (average scores around 3.5‑3.8). In contrast, the clusters related to automation and user‑experience received low acceptance (average scores near 2.3‑2.5). Practices such as continuous integration, automated performance measurement, and UX testing were rarely employed, indicating a gap between contemporary web‑development trends and the realities of small‑firm operations.
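The mean-interval step can be sketched in a few lines. The summary does not say which band a boundary score such as 3.0 belongs to, so the sketch assumes half-open bands (a mean of exactly 3.0 counts as moderate acceptance). The function name and the sample responses are hypothetical.

```python
from statistics import mean

# Acceptance bands from the text. Half-open intervals [low, high) are an
# assumption; the source lists 1-2, 2-3, 3-4, 4-5 without resolving boundaries.
BANDS = [
    (1.0, 2.0, "not accepted"),
    (2.0, 3.0, "low acceptance"),
    (3.0, 4.0, "moderate acceptance"),
    (4.0, 5.0, "high acceptance"),
]


def acceptance_level(scores):
    """Return (mean score, acceptance label) for one practice's 1..5 responses."""
    m = mean(scores)
    if not 1.0 <= m <= 5.0:
        raise ValueError("scores must lie on the 1..5 scale")
    for low, high, label in BANDS:
        if low <= m < high:
            return m, label
    return m, BANDS[-1][2]  # only reached when the mean is exactly 5.0


# Invented responses for two practices (not data from the study).
print(acceptance_level([4, 4, 3, 3]))  # mean 3.5
print(acceptance_level([2, 3, 2, 2]))  # mean 2.25
```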

The authors investigated underlying causes for this disparity. Interviews and supplemental questionnaire items revealed four primary constraints: (1) limited skilled personnel and the perceived cost of acquiring new tools, (2) small project sizes that diminish the perceived need for formal processes, (3) a cultural emphasis on rapid delivery over systematic quality assurance, and (4) insufficient training opportunities. These factors collectively explain why many modern measurement practices remain underutilized.

Based on the findings, the paper proposes a lightweight, incremental methodology tailored to small firms. The suggested approach emphasizes (a) standardizing core requirements and version‑control practices, (b) introducing a minimal continuous‑integration pipeline (e.g., simple script‑based builds), (c) gradually expanding automated testing, and (d) incorporating lightweight user‑feedback loops (such as short usability surveys). The authors also outline a pilot‑implementation plan, including key performance indicators—defect density, deployment frequency, and user satisfaction—to monitor the impact of the new methodology.
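The three indicators named above could be tracked with very little tooling. The sketch below is one possible reading, not the authors' definitions: it assumes defect density is measured per thousand lines of code, deployment frequency as deployments per week over a reporting window, and user satisfaction as the mean of survey ratings. All function names and figures are hypothetical.

```python
from datetime import date
from statistics import mean


def defect_density(defect_count, lines_of_code):
    """Defects per thousand lines of code (KLOC); an assumed definition."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defect_count / (lines_of_code / 1000)


def deployment_frequency(deploy_dates, start, end):
    """Average deployments per week within the inclusive window [start, end]."""
    days = (end - start).days + 1
    if days <= 0:
        raise ValueError("end must not be before start")
    in_window = [d for d in deploy_dates if start <= d <= end]
    return len(in_window) / (days / 7)


def user_satisfaction(ratings):
    """Mean rating from a short usability survey (for example, a 1..5 scale)."""
    return mean(ratings)


# Invented figures for a four-week pilot (not data from the study).
deploys = [date(2024, 1, 3), date(2024, 1, 10), date(2024, 1, 17), date(2024, 1, 24)]
print(defect_density(12, 8000))
print(deployment_frequency(deploys, date(2024, 1, 1), date(2024, 1, 28)))
print(user_satisfaction([4, 5, 3, 4]))
```

Recording these values before and after adopting the methodology would give a small firm a simple baseline for comparison.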

In conclusion, the study provides the first systematic, quantitative snapshot of web‑application development and measurement practices in Jordanian small software firms. It highlights both the adherence to traditional engineering practices and the neglect of newer, automation‑centric techniques. While the research is limited by its regional focus and reliance on self‑reported data, it offers a solid empirical foundation for designing context‑appropriate development frameworks and for guiding future training initiatives. The authors recommend expanding the sample to other regions, conducting longitudinal field studies, and evaluating the proposed methodology in real‑world projects to strengthen external validity and to refine the suggested best‑practice set.
