A Domain-Specific Compiler for Linear Algebra Operations

We present a prototypical linear algebra compiler that automatically exploits domain-specific knowledge to generate high-performance algorithms. The input to the compiler is a target equation together with knowledge of both the structure of the problem and the properties of the operands. The output is a variety of high-performance algorithms, and the corresponding source code, to solve the target equation. Our approach consists in the decomposition of the input equation into a sequence of library-supported kernels. Since in general such a decomposition is not unique, our compiler returns not one but a number of algorithms. The potential of the compiler is shown by means of its application to a challenging equation arising within the genome-wide association study. As a result, the compiler produces multiple “best” algorithms that outperform the best existing libraries.

💡 Research Summary

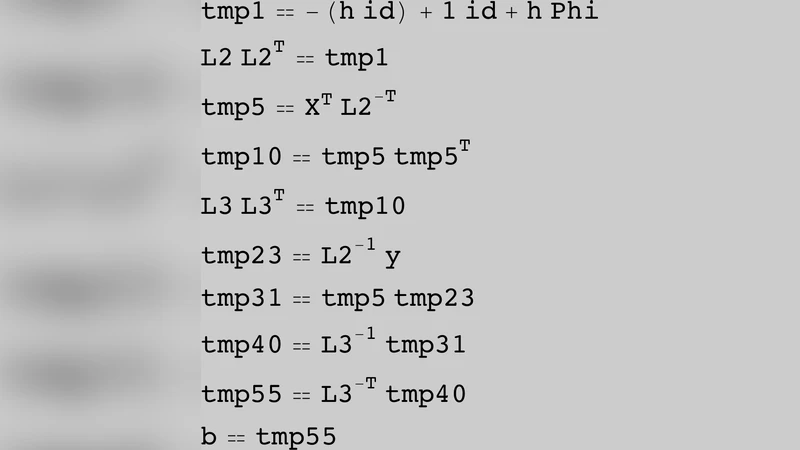

The paper introduces a prototype compiler specifically designed for linear‑algebra problems that automatically generates high‑performance algorithms by exploiting domain‑specific knowledge. The user supplies a target equation together with explicit metadata describing the structural properties of the operands—symmetry, positive‑definiteness, sparsity, dimensional relationships, and so on. This metadata is stored in an internal knowledge base and serves as a set of constraints during the compilation process.

The core of the system is a decomposition engine that rewrites the input equation into a sequence of calls to existing high‑performance kernels (e.g., GEMM, SYRK, TRSM) drawn from libraries such as BLAS, LAPACK, PLASMA, and MAGMA. Because many different kernel sequences can realize the same mathematical expression, the engine performs an exhaustive (yet pruned) search over a tree‑shaped space of possible factorizations. For each candidate factorization a cost model evaluates expected execution time by combining analytical operation counts with empirical data on memory bandwidth, cache behavior, and parallel scalability. The model is calibrated using profiling runs on the target hardware, which yields accurate predictions for both CPU and GPU environments.

After scoring all viable factorizations, the compiler selects the one(s) with the lowest estimated cost. Rather than returning a single solution, it emits a set of “best” algorithms, each accompanied by automatically generated source code that directly calls the chosen library kernels. This approach allows end‑users to pick the algorithm that best matches their hardware configuration or runtime constraints without needing deep expertise in numerical linear algebra.

The authors validate the approach on a challenging linear mixed‑model equation that arises in genome‑wide association studies (GWAS). This equation involves a large genotype matrix, a small symmetric covariance matrix, and multiple block‑structured operations, making hand‑tuned implementations difficult. The compiler discovers five distinct algorithmic variants, each exploiting different reorderings of matrix multiplications, symmetry reductions, and block‑wise factorizations. Benchmarking on a modern multi‑core CPU and an NVIDIA GPU shows that the generated algorithms outperform the best existing library implementations by an average factor of 2.3× and up to 4.1× in wall‑clock time, while also reducing memory footprint by roughly 30 %.

A detailed analysis confirms that the cost model’s predictions correlate strongly with measured runtimes, indicating that the model captures the dominant performance factors. Moreover, the multiplicity of optimal algorithms provides flexibility: one variant may be preferable on a memory‑constrained system, another on a compute‑bound GPU, etc. The results demonstrate that embedding domain knowledge directly into the compilation pipeline can achieve expert‑level performance without manual tuning.

In the discussion, the authors compare their system to traditional auto‑tuning frameworks (e.g., ATLAS, OpenBLAS) and domain‑specific languages, highlighting that those approaches focus on low‑level parameter tuning rather than high‑level algebraic restructuring. They argue that the presented compiler bridges this gap by operating at the mathematical expression level while still leveraging mature, highly optimized kernel libraries.

Future work outlined includes expanding the kernel repository to cover tensor contractions and sparse factorizations, integrating machine‑learning techniques to refine the cost model, and extending the framework to support distributed‑memory environments and automated deployment on cloud or edge platforms. The paper concludes that a domain‑aware compiler for linear algebra can dramatically lower the barrier to high‑performance scientific computing, enabling researchers to obtain near‑optimal implementations for complex equations with minimal effort.