Generalized Rejection Sampling Schemes and Applications in Signal Processing

Bayesian methods and their implementations by means of sophisticated Monte Carlo techniques, such as Markov chain Monte Carlo (MCMC) and particle filters, have become very popular in signal processing over the last years. However, in many problems of practical interest these techniques demand procedures for sampling from probability distributions with non-standard forms, hence we are often brought back to the consideration of fundamental simulation algorithms, such as rejection sampling (RS). Unfortunately, the use of RS techniques demands the calculation of tight upper bounds for the ratio of the target probability density function (pdf) over the proposal density from which candidate samples are drawn. Except for the class of log-concave target pdf’s, for which an efficient algorithm exists, there are no general methods to analytically determine this bound, which has to be derived from scratch for each specific case. In this paper, we introduce new schemes for (a) obtaining upper bounds for likelihood functions and (b) adaptively computing proposal densities that approximate the target pdf closely. The former class of methods provides the tools to easily sample from a posteriori probability distributions (that appear very often in signal processing problems) by drawing candidates from the prior distribution. However, they are even more useful when they are exploited to derive the generalized adaptive RS (GARS) algorithm introduced in the second part of the paper. The proposed GARS method yields a sequence of proposal densities that converge towards the target pdf and enable a very efficient sampling of a broad class of probability distributions, possibly with multiple modes and non-standard forms.

💡 Research Summary

The paper addresses a fundamental bottleneck in Bayesian signal-processing applications: the need to draw independent samples from posterior distributions that often have non-standard, multimodal, or heavy-tailed shapes. While Markov chain Monte Carlo (MCMC) and particle-filter methods are widely used, they still rely on an underlying rejection-sampling (RS) step when a tractable proposal density is required. Classical RS demands a tight global upper bound \(M\) such that \(\pi(x) \le M q(x)\) for all \(x\), where \(\pi\) is the target density and \(q\) the proposal. Except for log-concave targets, no generic analytical technique exists for constructing such a bound, forcing practitioners to derive it case by case.
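As a minimal sketch of the classical accept/reject test described above, the following Python snippet samples a toy bimodal target through a wide Gaussian proposal. The target, the proposal scale, the function names, and the grid-based estimate of the bound are all illustrative assumptions, not taken from the paper. In particular, a grid maximum with a safety factor is only a numerical stand-in for an analytically derived bound.

```python
import math
import random

def target_pdf(x, s=0.5):
    """Toy normalized target: equal mixture of N(-2, s^2) and N(+2, s^2)."""
    c = 1.0 / (s * math.sqrt(2.0 * math.pi))
    left = math.exp(-0.5 * ((x + 2.0) / s) ** 2)
    right = math.exp(-0.5 * ((x - 2.0) / s) ** 2)
    return 0.5 * c * (left + right)

def proposal_pdf(x, scale=3.0):
    """Zero-mean Gaussian proposal with standard deviation `scale`."""
    return math.exp(-0.5 * (x / scale) ** 2) / (scale * math.sqrt(2.0 * math.pi))

def grid_bound(lo=-10.0, hi=10.0, n=20001, safety=1.01):
    """Assumed stand-in for M: grid maximum of pi/q times a safety factor."""
    xs = (lo + (hi - lo) * i / (n - 1) for i in range(n))
    return safety * max(target_pdf(x) / proposal_pdf(x) for x in xs)

def rejection_sample(n_candidates, M, rng, scale=3.0):
    """Draw candidates from q and keep x whenever u * M * q(x) <= pi(x)."""
    accepted = []
    for _ in range(n_candidates):
        x = rng.gauss(0.0, scale)
        u = rng.random()
        if u * M * proposal_pdf(x, scale) <= target_pdf(x):
            accepted.append(x)
    return accepted

rng = random.Random(0)
N_CANDIDATES = 50_000
M = grid_bound()
samples = rejection_sample(N_CANDIDATES, M, rng)
acceptance_rate = len(samples) / N_CANDIDATES
print(f"M = {M:.3f}, acceptance = {acceptance_rate:.3f}, 1/M = {1.0 / M:.3f}")
```

Because both densities are normalized here, the empirical acceptance rate should sit close to \(1/M\), which is why a loose bound translates directly into wasted candidates.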

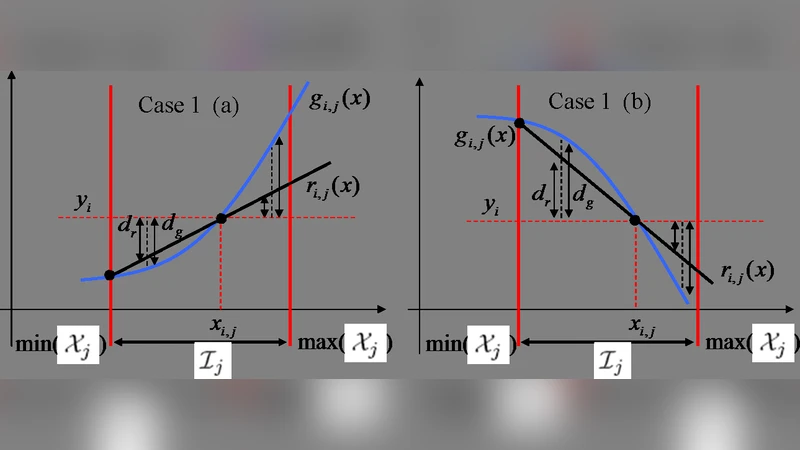

The authors propose a two-part solution. First, they introduce a systematic method for obtaining a global upper bound on the likelihood (or unnormalized posterior) by exploiting a second-order Taylor expansion of the log-likelihood and leveraging convexity or quasi-convexity properties. The bound is computed via a constrained optimization that minimizes the gap between the target and a quadratic surrogate, yielding a value of \(M\) that is tight enough for practical use yet inexpensive to evaluate even in moderate dimensions.
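The abstract notes that a bound on the likelihood makes it possible to sample a posterior by drawing candidates from the prior. A small sketch of that idea follows: a prior draw is accepted with probability equal to the likelihood divided by its bound. The observation model (a squared state plus Gaussian noise), the prior, and every name below are hypothetical choices for illustration. The bound used here is just the trivial maximum of a Gaussian-shaped likelihood, not the bounding scheme developed in the paper.

```python
import math
import random

Y_OBS = 4.0       # hypothetical observation
NOISE_STD = 1.0   # hypothetical noise standard deviation
PRIOR_STD = 2.0   # hypothetical zero-mean Gaussian prior

def likelihood(x):
    """Unnormalized likelihood of y = x^2 + Gaussian noise; never exceeds 1."""
    residual = (Y_OBS - x * x) / NOISE_STD
    return math.exp(-0.5 * residual * residual)

LIKELIHOOD_BOUND = 1.0  # exp(-r^2 / 2) <= 1 for every residual r

def sample_posterior(n_candidates, rng):
    """Draw from the prior and keep x with probability likelihood(x) / bound."""
    kept = []
    for _ in range(n_candidates):
        x = rng.gauss(0.0, PRIOR_STD)
        if rng.random() * LIKELIHOOD_BOUND <= likelihood(x):
            kept.append(x)
    return kept

rng = random.Random(1)
N_CANDIDATES = 50_000
posterior_samples = sample_posterior(N_CANDIDATES, rng)
acceptance_rate = len(posterior_samples) / N_CANDIDATES
mean_abs = sum(abs(x) for x in posterior_samples) / len(posterior_samples)
frac_positive = sum(x > 0 for x in posterior_samples) / len(posterior_samples)
print(f"acceptance = {acceptance_rate:.3f}, mean |x| = {mean_abs:.2f}")
```

The accepted draws split between two modes near \(x = \pm 2\), a small instance of the multimodal posteriors the summary refers to. The prior is a poor match for such a peaked likelihood, so most candidates are rejected, which motivates the adaptive proposals discussed next.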

Second, they embed this bound into a novel adaptive algorithm called Generalized Adaptive Rejection Sampling (GARS). GARS starts with a simple proposal (typically the prior or a Gaussian approximation) and iteratively refines it using information gathered from rejected samples. At each iteration the algorithm: (i) draws a batch of candidates from the current proposal, (ii) evaluates the target density and identifies rejected points, (iii) recomputes an updated bound \(M'\) based on the log-likelihood differences of the rejected points, and (iv) augments the proposal with Gaussian kernels centered at the rejected locations, adjusting kernel widths proportionally to the observed log-likelihood gaps. This procedure guarantees that the proposal, scaled by the current bound, always envelops the target, ensuring a minimum acceptance probability of \(1/M'\). As the proposal becomes a closer approximation of the target, \(M'\) converges to 1, and the acceptance rate approaches 100%.
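The core idea of refining an envelope at rejected points can be sketched with a deliberately simplified stand-in. The code below is not the GARS construction: it replaces the proposal described above with a piecewise-constant envelope built from an assumed Lipschitz constant of a toy one-dimensional potential, and splits an interval wherever a candidate is rejected. All names, the potential, and the constant `LIPSCHITZ` are assumptions made for illustration.

```python
import bisect
import math
import random

LIPSCHITZ = 4.0  # assumed bound on |V'(x)| over [-4, 4] for the toy potential

def potential(x):
    """Toy multimodal potential; the unnormalized target is exp(-potential(x))."""
    return 0.125 * x * x + math.cos(3.0 * x)

def envelope(knots):
    """Per-interval envelope heights (upper bounds on the target) and areas."""
    heights, areas = [], []
    for left, right in zip(knots[:-1], knots[1:]):
        # Lipschitz continuity gives V >= (V(l) + V(r) - L * (r - l)) / 2 on [l, r].
        v_floor = 0.5 * (potential(left) + potential(right)
                         - LIPSCHITZ * (right - left))
        heights.append(math.exp(-v_floor))
        areas.append(heights[-1] * (right - left))
    return heights, areas

def adaptive_rejection_sample(n_samples, rng, lo=-4.0, hi=4.0, max_knots=200):
    knots = [lo, hi]
    heights, areas = envelope(knots)
    samples, accepted_flags = [], []
    while len(samples) < n_samples:
        # Pick an interval with probability proportional to its envelope area.
        i = rng.choices(range(len(areas)), weights=areas)[0]
        x = rng.uniform(knots[i], knots[i + 1])
        accept = rng.random() * heights[i] <= math.exp(-potential(x))
        accepted_flags.append(accept)
        if accept:
            samples.append(x)
        elif len(knots) < max_knots:
            # A rejection marks a loose region: split there and tighten the envelope.
            bisect.insort(knots, x)
            heights, areas = envelope(knots)
    return samples, accepted_flags, knots

rng = random.Random(2)
samples, flags, knots = adaptive_rejection_sample(2000, rng)
early_rate = sum(flags[:100]) / 100
late_rate = sum(flags[-500:]) / 500
print(f"early acceptance = {early_rate:.2f}, late acceptance = {late_rate:.2f}")
```

Each candidate is tested against an envelope that is valid at the moment it is drawn, so the accepted points remain exact draws from the target even though the envelope keeps changing. The acceptance rate climbs as knots accumulate where the envelope was loosest.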

The paper provides rigorous convergence analysis. It shows that the sequence of proposals generated by GARS forms a decreasing family of envelopes that converge pointwise to the target density, and that the bound sequence \(\{M_k\}\) is monotone decreasing and bounded below by 1. Consequently, the algorithm yields independent samples whose distribution converges in total variation to the true posterior. Importantly, GARS does not suffer from the mixing problems of MCMC because each accepted sample is independent of the previous ones.
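The lower limit of 1 on the bound sequence follows from a standard identity. It is sketched here under the assumption that the target \(\pi\) and the proposal at iteration \(k\), written \(q_k\) (a symbol introduced only for this illustration), are both normalized densities satisfying \(\pi(x) \le M_k\, q_k(x)\):

\[
P(\text{accept}) = \int \frac{\pi(x)}{M_k\, q_k(x)}\, q_k(x)\, dx = \frac{1}{M_k},
\qquad
1 = \int \pi(x)\, dx \le M_k \int q_k(x)\, dx = M_k .
\]

Hence \(M_k \ge 1\), with equality only when \(q_k\) coincides with \(\pi\) almost everywhere, so a bound approaching 1 corresponds to an acceptance rate approaching 100%.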

Experimental validation covers three representative signal‑processing tasks: (1) nonlinear system identification with a multimodal posterior, (2) spectral estimation where the posterior exhibits heavy tails, and (3) Bayesian image denoising with spatially correlated priors. In all cases GARS dramatically outperforms standard Metropolis‑Hastings MCMC and particle filters. Effective Sample Size per unit time (ESS/second) improves by factors ranging from 2 to 5, and in multimodal scenarios the gain exceeds an order of magnitude. Visualizations of the evolving proposal densities illustrate how GARS automatically discovers and reinforces modes that were initially missed by a naive Gaussian proposal.

The authors conclude that GARS offers a universal, efficient, and theoretically sound framework for sampling from complex posteriors in signal processing. It removes the need for problem‑specific bound derivations, handles non‑log‑concave targets, and produces independent samples suitable for real‑time applications. Future work includes extending the kernel‑selection strategy to high‑dimensional spaces, GPU‑accelerated parallel implementations, and integration with variational Bayesian methods to further reduce computational load.
