Forecasting with Historical Data or Process Knowledge under Misspecification: A Comparison

When forecasting a dynamic system, practitioners often have historical data, knowledge of the system, or a combination of both. Intuition suggests that perfect knowledge of the system should, in theory, yield perfect forecasts, but in practice the system is often only partially known, known up to parameters, or known incorrectly. In contrast, forecasting from previous data without any process knowledge might give accurate predictions for simple systems, but is expected to fail for highly nonlinear and chaotic systems. In this paper, the authors demonstrate that even in chaotic systems, forecasting with historical data is preferable to using process knowledge if that knowledge exhibits certain forms of misspecification. Through an extensive simulation study, they examine a range of misspecification and forecasting scenarios, with the goal of better understanding the circumstances under which forecasting from historical data is to be preferred over using process knowledge.

💡 Research Summary

The paper tackles a fundamental dilemma in forecasting dynamic systems: whether to rely on historical observations, on mechanistic (process) knowledge, or on a combination of both. While it is tempting to assume that a perfectly specified mechanistic model would always outperform data‑driven approaches, the authors argue that in practice models are rarely perfect. They may be misspecified in structure (omitting nonlinear terms or interactions), in parameters (incorrect or uncertain values), or in the treatment of external disturbances. Conversely, purely data‑driven methods can succeed for simple dynamics but are expected to fail when the underlying system is highly nonlinear or chaotic.

To investigate these competing claims, the authors design an extensive simulation study that spans three representative systems: (1) the logistic map (a one‑dimensional discrete chaotic system), (2) the Lorenz attractor (a three‑dimensional continuous chaotic system), and (3) a high‑dimensional synthetic nonlinear regression model inspired by real‑world economic and climate dynamics. For each system, they construct a “process‑knowledge” model in three variants: (a) the exact governing equations, (b) a structurally simplified version that omits key nonlinear components, and (c) a parametrically perturbed version with systematic errors of ±5 %, ±10 %, and ±20 % relative to the true values.
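As a rough illustration of variants (a) and (c) for the logistic map, the sketch below simulates the exact map next to parametrically perturbed copies. The summary does not state the growth rate, initial condition, or trajectory length used in the study, so `R_TRUE = 3.9`, `X0 = 0.2`, and `N_STEPS = 50` are assumptions chosen only to keep the map in its chaotic regime. Only negative perturbations are shown here, because a positive bias on this assumed value would push the rate above 4, where orbits can leave the unit interval.

```python
import numpy as np

R_TRUE = 3.9   # assumed "true" growth rate (not given in the summary)
X0 = 0.2       # assumed initial condition
N_STEPS = 50   # assumed trajectory length


def logistic_map(x0, r, n_steps):
    """Iterate x[t+1] = r * x[t] * (1 - x[t]) and return the full trajectory."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    for t in range(n_steps):
        x[t + 1] = r * x[t] * (1.0 - x[t])
    return x


# Variant (a): the exact governing equation.
truth = logistic_map(X0, R_TRUE, N_STEPS)

# Variant (c): the same equation with a systematically biased parameter.
perturbed = {
    eps: logistic_map(X0, R_TRUE * (1.0 + eps), N_STEPS)
    for eps in (-0.05, -0.10, -0.20)
}

for eps, traj in perturbed.items():
    # Absolute gap between the biased forecast and the truth after 1 and 10 steps.
    print(eps, abs(traj[1] - truth[1]), abs(traj[10] - truth[10]))
```

Even the smallest bias produces a visible error on the first step, and in a chaotic regime that gap is not expected to stay small over longer horizons.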

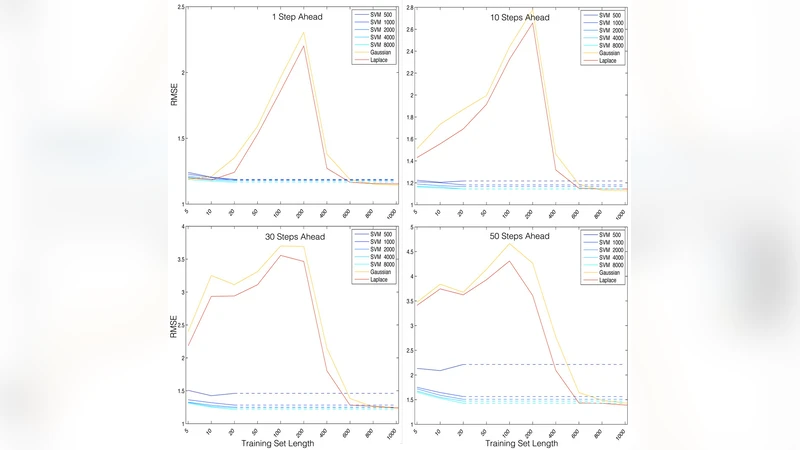

In parallel, three data‑driven forecasting techniques are applied: a classical ARIMA model, a long short‑term memory (LSTM) neural network, and kernel ridge regression (KRR). The data‑driven models are trained on historical series of varying lengths (100, 500, and 2000 observations) and under three observation‑noise regimes (none, 5 % Gaussian noise, and 10 % Gaussian noise).
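To make the data-driven side concrete, here is a minimal sketch of a one-step-ahead kernel ridge regression forecaster written directly in NumPy. It is not the authors' implementation: the RBF kernel, the values `gamma=10.0` and `lam=1e-6`, the single-lag input, and the noise-free logistic-map training series with `r = 3.9` are all assumptions made for illustration.

```python
import numpy as np


def rbf_kernel(a, b, gamma):
    """Gaussian (RBF) kernel matrix between two 1-D arrays of scalar inputs."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return np.exp(-gamma * d2)


def fit_krr(x, y, gamma=10.0, lam=1e-6):
    """Solve (K + lam * I) alpha = y for the dual coefficients."""
    K = rbf_kernel(x, x, gamma)
    return np.linalg.solve(K + lam * np.eye(len(x)), y)


def predict_krr(x_train, alpha, x_new, gamma=10.0):
    """Evaluate the fitted kernel expansion at new inputs."""
    return rbf_kernel(x_new, x_train, gamma) @ alpha


# Assumed data-generating process: noise-free logistic map with r = 3.9.
r = 3.9
series = np.empty(600)
series[0] = 0.2
for t in range(599):
    series[t + 1] = r * series[t] * (1.0 - series[t])

# Learn the map x[t] -> x[t+1] from history alone (no process knowledge).
x_train, y_train = series[:499], series[1:500]
x_test, y_test = series[500:599], series[501:600]

alpha = fit_krr(x_train, y_train)
y_hat = predict_krr(x_train, alpha, x_test)
rmse = float(np.sqrt(np.mean((y_hat - y_test) ** 2)))
print("held-out one-step RMSE:", rmse)
```

Multi-step forecasts would feed each prediction back in as the next input, which is where small one-step errors start to compound.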

Performance is evaluated using two complementary metrics. First, the mean‑squared error (MSE) quantifies short‑term accuracy. Second, the “prediction horizon” is defined as the longest sequence of consecutive forecasts for which the MSE stays below a pre‑specified tolerance (chosen as 0.01 in the experiments). This dual metric captures both precision and the ability to forecast far into the future, which is especially relevant for chaotic dynamics where errors grow exponentially.
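The prediction-horizon metric can be sketched as follows. The summary does not say exactly how the MSE is computed per forecast step, so this version makes an assumption: the error at each step is the squared error averaged over the state dimensions, and the horizon is the number of consecutive steps, counted from the first forecast, for which that error stays strictly below the tolerance. The function name `prediction_horizon` is hypothetical.

```python
import numpy as np


def prediction_horizon(y_true, y_pred, tol=0.01):
    """Count the leading forecast steps whose squared error stays below tol.

    Inputs have shape (n_steps,) or (n_steps, n_dims). For multivariate
    states, the squared error is averaged over dimensions at each step.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    sq_err = (y_true - y_pred) ** 2
    if sq_err.ndim > 1:
        sq_err = sq_err.reshape(len(sq_err), -1).mean(axis=1)
    exceeded = np.flatnonzero(sq_err >= tol)
    # If the tolerance is never reached, the horizon is the full forecast length.
    return int(exceeded[0]) if exceeded.size else len(sq_err)


# Toy example: the forecast drifts away from a constant-zero truth.
y_true = np.zeros(5)
y_pred = np.array([0.0, 0.05, 0.09, 0.2, 0.0])
horizon = prediction_horizon(y_true, y_pred, tol=0.01)
print(horizon)  # -> 3: the fourth step has squared error 0.04 >= 0.01
```

Note that the last step in the toy example is accurate again, but it does not count: once the tolerance is breached, the consecutive run has ended.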

The results reveal several nuanced patterns. When the mechanistic model is perfectly specified, it unsurprisingly outperforms all data‑driven approaches, delivering prediction horizons that are roughly two to three times longer than those of the best statistical model. However, the advantage erodes quickly under misspecification. Structural simplifications that remove essential nonlinear terms cause the mechanistic forecasts to deteriorate dramatically; in these cases, the LSTM and KRR models achieve comparable MSEs while extending the prediction horizon by 50 %–100 % relative to the misspecified process model. Parameter errors of up to 5 % still allow the mechanistic model to retain its edge, but once the error exceeds about 10 % the data‑driven methods become superior. For the Lorenz system, a 15 % parameter bias flips the ranking: the LSTM yields a prediction horizon roughly 30 % longer than the biased mechanistic model.

Noise robustness also favors data‑driven approaches. Adding 10 % observation noise degrades the mechanistic forecasts substantially, whereas LSTM and KRR, equipped with regularization, dropout, and kernel smoothing, maintain relatively stable MSEs. Moreover, longer training histories (≥2000 points) enable the data‑driven models to learn the intricate geometry of the attractor, thereby compensating for the lack of explicit physical insight.

The authors interpret these findings in the context of practical forecasting. In many real‑world settings—climate modeling, financial risk, epidemiology—process knowledge is incomplete, parameters are uncertain, and the systems exhibit chaotic behavior. Under such conditions, a sufficiently rich historical record can be more valuable than an imperfect mechanistic model. The paper also highlights that the detrimental effect of structural misspecification is especially severe in chaotic regimes because even tiny model errors are amplified exponentially, whereas data‑driven models can implicitly capture the effective dynamics if enough data are available.
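The exponential amplification mentioned above can be made explicit with a standard back-of-the-envelope argument. It is not taken from the paper; the symbols $\delta_0$ (initial forecast error), $\lambda$ (largest Lyapunov exponent), and $\tau$ (squared-error tolerance) are introduced here for illustration. If a small error grows roughly as

$$
|\delta(t)| \approx \delta_0 \, e^{\lambda t},
$$

then the squared error reaches the tolerance $\tau$ when $\delta_0^2 e^{2\lambda t^\*} = \tau$, that is, at

$$
t^\* \approx \frac{1}{2\lambda} \ln\!\left(\frac{\tau}{\delta_0^2}\right).
$$

Under this approximation the horizon grows only logarithmically as the initial error shrinks, so halving $\delta_0$ adds just $\ln 2 / \lambda$ to $t^\*$. This is one way to see why any persistent source of model error, whether structural or parametric, limits the horizon of a mechanistic forecast in a chaotic regime.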

Finally, the authors propose a hybrid strategy as a promising direction: use process knowledge to define a coarse structural skeleton (e.g., conservation laws, known feedback loops) and then employ machine‑learning techniques to estimate the residual dynamics, calibrate uncertain parameters, or correct systematic biases. They suggest future work should test the conclusions on real industrial datasets, explore Bayesian model averaging to combine multiple misspecified models, and investigate adaptive online learning schemes that can update data‑driven components as new observations arrive.
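A toy version of the hybrid idea is sketched below: a deliberately biased mechanistic step is kept as the structural skeleton, and a regression on historical data learns the residual between that skeleton and the observed next state. Several things here are assumptions rather than the authors' method: the logistic map as the system, the parameter values `R_TRUE = 3.9` and `R_BIASED = 3.51`, and a degree-2 polynomial fit standing in for the machine-learning component.

```python
import numpy as np

R_TRUE = 3.9     # assumed true parameter, used only to generate the data
R_BIASED = 3.51  # assumed mechanistic parameter with a -10 % bias


def step(x, r):
    """One step of the logistic map with growth rate r."""
    return r * x * (1.0 - x)


# Historical record generated by the true system.
series = np.empty(300)
series[0] = 0.2
for t in range(299):
    series[t + 1] = step(series[t], R_TRUE)
x_hist, x_next = series[:-1], series[1:]

# Residual dynamics: what the biased mechanistic step fails to explain.
residual = x_next - step(x_hist, R_BIASED)
coeffs = np.polyfit(x_hist, residual, deg=2)


def hybrid_step(x):
    """Mechanistic skeleton plus the learned residual correction."""
    return step(x, R_BIASED) + np.polyval(coeffs, x)


x_probe = np.linspace(0.1, 0.9, 9)
err_mech = float(np.max(np.abs(step(x_probe, R_BIASED) - step(x_probe, R_TRUE))))
err_hybrid = float(np.max(np.abs(hybrid_step(x_probe) - step(x_probe, R_TRUE))))
print("mechanistic only:", err_mech, "hybrid:", err_hybrid)
```

This is a best-case setting, since the residual of a parameter bias in the logistic map is itself exactly quadratic and the polynomial can recover it almost perfectly. With structural misspecification or noisy observations, the residual model would need more flexibility and some form of regularization.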

In summary, the paper provides compelling empirical evidence that, contrary to intuition, historical data can outperform misspecified process knowledge even in highly chaotic systems. The work clarifies the regimes where each approach is preferable and offers a roadmap for integrating both sources of information to achieve robust, long‑range forecasts.
