Uncertainties and Ambiguities in Percentiles and how to Avoid Them

The recently proposed fractional scoring scheme is used to attribute publications to percentile rank classes. It is shown that, with this scheme, uncertainties and ambiguities in the evaluation of percentile ranks do not occur. Using fractional scoring, the total score of all papers exactly reproduces the theoretical value.
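The abstract's central claim is that fractional scoring makes the class totals add up exactly to the theoretical value. A minimal Python sketch can illustrate this. It rests on assumptions that the text does not spell out. The percentile axis runs from 0 (most cited) to 1 (least cited). Each paper occupies an interval of width 1/N on that axis, and tied papers pool their intervals and share them equally. Each paper's weight of 1 is then split across classes in proportion to how much of its interval falls in each class. The function `fractional_scores` and its arguments are hypothetical names chosen for this illustration, not the author's code. Exact `Fraction` arithmetic is used so that the totals can be compared without rounding error.

```python
from fractions import Fraction


def fractional_scores(citations, boundaries):
    """Split each paper's weight of 1 across percentile rank classes.

    Hypothetical sketch, not the paper's code. Assumptions:
    - the percentile axis runs from 0 (most cited) to 1 (least cited);
    - `boundaries` lists the interior class limits as Fractions, e.g. [Fraction(1, 10)];
    - the paper at sorted position i occupies the interval [i/N, (i + 1)/N];
    - tied papers pool their intervals and share the result equally.
    Returns one row per paper (in input order) with its score in each class.
    """
    n = len(citations)
    edges = [Fraction(0), *boundaries, Fraction(1)]
    n_classes = len(edges) - 1
    # Indices of the papers, most cited first (sorted() is stable for ties).
    order = sorted(range(n), key=lambda k: -citations[k])
    alloc = [[Fraction(0)] * n_classes for _ in range(n)]
    i = 0
    while i < n:
        # Collect the block of tied papers starting at sorted position i.
        j = i
        while j < n and citations[order[j]] == citations[order[i]]:
            j += 1
        lo, hi = Fraction(i, n), Fraction(j, n)
        group = order[i:j]
        for c in range(n_classes):
            # Length of the pooled interval that falls inside class c.
            overlap = max(Fraction(0), min(hi, edges[c + 1]) - max(lo, edges[c]))
            # An interval of width 1/N is worth one paper; share it among the tied papers.
            share = overlap * n / len(group)
            for k in group:
                alloc[k][c] = share
        i = j
    return alloc
```

For the 15 citation counts 50, 40, 40, 30, 20, 20, 10, 10, 5, 5, 3, 2, 1, 0, 0 with a single class boundary at 1/10, the most cited paper lands wholly in the top class, and each of the two tied papers with 40 citations contributes 1/4 to it. The top-class total is therefore 3/2, which is exactly 10% of 15 papers, and every paper's row still sums to 1.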

💡 Research Summary

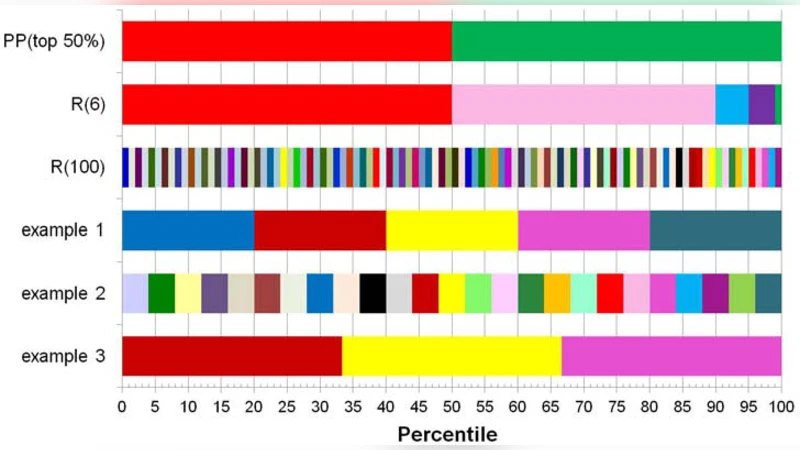

The paper addresses a long‑standing problem in bibliometric and other performance‑evaluation contexts: the ambiguity that arises when assigning individual items (e.g., publications) to percentile‑rank classes. Traditional percentile‑ranking schemes treat each item as belonging entirely to a single class. This binary approach creates two kinds of uncertainty. First, when several items share the same raw score (ties), the decision to place all of them in the higher class, the lower class, or to split them arbitrarily introduces subjectivity. Second, items whose calculated percentile falls exactly on a class boundary (for example, the 90th percentile) are forced into one class or the other, again leading to inconsistent results. The problem is amplified in small datasets or in fields where citation distributions are highly skewed, because a single item can shift the composition of a class by a noticeable amount.
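A small sketch of the conventional binary scheme reproduces the tie problem described above. In this scheme, every paper is counted wholly in or wholly out of the top class. The helper `top_class_count` and its two tie rules are hypothetical. The "optimistic" rule gives tied papers the best rank in their tie group, and the "pessimistic" rule gives them the worst. The class-limit comparison uses integers to avoid floating-point surprises.

```python
def top_class_count(citations, top_percent, ties="optimistic"):
    """Count papers assigned wholly to the top `top_percent` % class.

    Hypothetical helper illustrating the conventional binary scheme:
    - "optimistic": a tied paper gets the best rank in its tie group;
    - "pessimistic": a tied paper gets the worst rank in its tie group.
    """
    if ties not in ("optimistic", "pessimistic"):
        raise ValueError("ties must be 'optimistic' or 'pessimistic'")
    n = len(citations)
    count = 0
    for c in citations:
        if ties == "optimistic":
            rank = 1 + sum(1 for x in citations if x > c)
        else:
            rank = sum(1 for x in citations if x >= c)
        # rank / n <= top_percent / 100, compared with integers to stay exact.
        if rank * 100 <= top_percent * n:
            count += 1
    return count


if __name__ == "__main__":
    cites = [50, 40, 40, 30, 25, 20, 18, 15, 12, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1, 0]
    # 10% of 20 papers is exactly 2, yet the binary scheme gives 3 or 1.
    print(top_class_count(cites, 10, "optimistic"))   # 3
    print(top_class_count(cites, 10, "pessimistic"))  # 1
```

Neither rule yields the theoretical count of 2, because the two tied papers straddle the class boundary and the binary scheme cannot split them.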

To eliminate these sources of error, the authors propose a “fractional scoring” (also called “fractional allocation”) method. The method proceeds as follows. For a set of N items, the items are first ordered by their raw metric (e.g., citation count). The rank r of an item (with r = 1 for the most cited item) is then used to compute a continuous percentile position p = (r − 0.5)/N, so that small values of p correspond to highly cited items. This definition places each item at the centre of its rank interval [(r − 1)/N, r/N], avoiding the half‑open interval problem of the conventional definition p = r/N. The percentile axis …
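The percentile position p = (r − 0.5)/N defined above can be computed with the following sketch. It adds one assumption: the text does not say how tied items enter the formula, so here they receive the mean of the ranks they jointly occupy (the usual "average rank" convention). The function name `percentile_positions` is hypothetical.

```python
def percentile_positions(citations):
    """Return p = (r - 0.5) / N for each item, in input order.

    r = 1 for the most cited item. Tied items receive the mean of the
    ranks they jointly occupy (an assumption of this sketch).
    """
    n = len(citations)
    # Indices of the items, most cited first.
    order = sorted(range(n), key=lambda k: -citations[k])
    ranks = [0.0] * n
    i = 0
    while i < n:
        # Find the block of tied items starting at sorted position i.
        j = i
        while j < n and citations[order[j]] == citations[order[i]]:
            j += 1
        # Sorted positions i..j-1 correspond to ranks i+1..j; average them.
        mean_rank = (i + 1 + j) / 2
        for k in order[i:j]:
            ranks[k] = mean_rank
        i = j
    # Centre each item in its rank interval [(r - 1)/N, r/N].
    return [(r - 0.5) / n for r in ranks]
```

For citation counts 10, 30, 20, 0, the sketch returns 0.625, 0.125, 0.375, 0.875. The conventional p = r/N would instead give 0.75, 0.25, 0.5, 1.0, which places the least cited item at the very end of the axis. With centred positions, the mean of p over all items is exactly 0.5, because averaging tied ranks leaves the sum of ranks unchanged.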
