Intelligent learning environments within blended learning for ensuring effective C programming course

This paper describes a blended learning implementation and experience supported by intelligent learning environments included in a learning management system (LMS) called @KU-UZEM. The blended learning model is realized as a combination of face-to-face education and e-learning. The intelligent learning environments consist of two applications, CTutor and ITest. In addition to standard e-learning tools, students can use CTutor to solve C programming exercises. CTutor is a problem-solving environment that not only diagnoses students’ knowledge level but also gives feedback and tips to help them understand the course subject, overcome their misconceptions, and reinforce learned concepts. ITest provides an assessment environment in which students can take quizzes prepared according to their learning levels. The realized model was used for two terms in the “C Programming” course given at Afyon Kocatepe University. A survey was conducted at the end of the course to find out to what extent the students accepted the blended learning model supported by @KU-UZEM and to discover students’ attitudes towards intelligent learning environments. Additionally, an experiment was performed during the first term of the course with an experimental group, who took an active part in the realized model, and a control group, who received only face-to-face education. According to the results, students were satisfied with the intelligent learning environments and the realized learning model. Furthermore, the use of intelligent learning environments improved the students’ knowledge of C programming.

💡 Research Summary

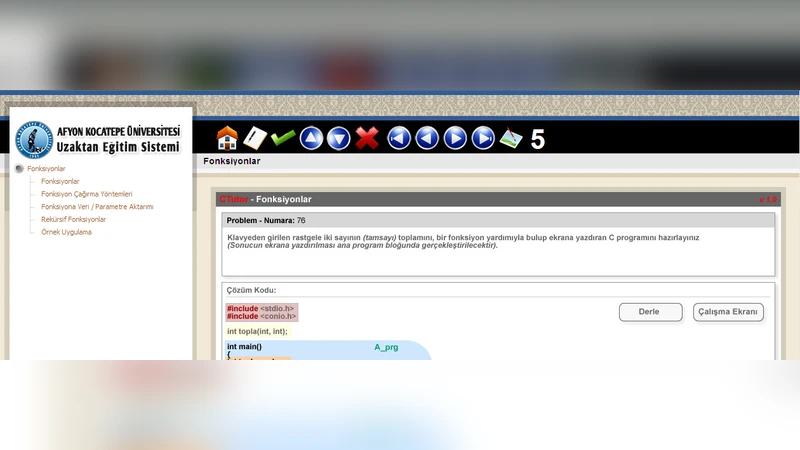

The paper reports on the design, implementation, and evaluation of a blended‑learning model for an undergraduate C programming course at Afyon Kocatepe University, enriched with two intelligent learning applications—CTutor and ITest—integrated into the university’s learning management system, @KU‑UZEM. The blended model combines traditional face‑to‑face lectures with online activities. CTutor serves as a problem‑solving environment: students submit C code, the system automatically parses the program, detects syntactic, semantic, and memory‑related errors, and then delivers tiered hints, conceptual explanations, and corrective feedback. By logging error patterns, CTutor builds a lightweight knowledge‑level model for each learner and dynamically adjusts the difficulty of subsequent exercises, thereby providing an adaptive learning experience. ITest complements CTutor by generating quizzes that are personalized to each learner’s current proficiency, as inferred from CTutor’s diagnostics and from a learner profile stored in the LMS. Quizzes include multiple‑choice, short‑answer, and coding‑task items; they are auto‑graded and accompanied by detailed solution explanations, enabling immediate self‑assessment.
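The adaptive loop described above (log error patterns, estimate a knowledge level, adjust exercise difficulty) can be illustrated with a minimal sketch. This is not CTutor's actual implementation, which the summary does not detail: the class name `LearnerModel`, the three difficulty tiers, and the numeric thresholds are all assumptions chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field

# Error kinds named in the summary; the identifiers themselves are hypothetical.
ERROR_KINDS = ("syntactic", "semantic", "memory")


@dataclass
class LearnerModel:
    """Illustrative per-learner record; not CTutor's real data structure."""
    attempts: int = 0
    errors: Counter = field(default_factory=Counter)

    def log_submission(self, detected_errors):
        """Record one submission together with the error kinds found in it."""
        for kind in detected_errors:
            if kind not in ERROR_KINDS:
                raise ValueError(f"unknown error kind: {kind}")
        self.attempts += 1
        self.errors.update(detected_errors)

    def error_rate(self):
        """Average number of logged errors per submission (0.0 with no attempts)."""
        if self.attempts == 0:
            return 0.0
        return sum(self.errors.values()) / self.attempts

    def next_difficulty(self):
        """Map the error rate to a difficulty tier (thresholds are assumed)."""
        if self.attempts == 0:
            return "medium"  # no evidence yet, so start in the middle
        rate = self.error_rate()
        if rate < 0.5:
            return "hard"
        if rate < 1.5:
            return "medium"
        return "easy"


model = LearnerModel()
model.log_submission(["syntactic", "memory"])  # two errors in one submission
print(model.next_difficulty())  # error rate 2.0 -> "easy" under these thresholds
```

A real tutoring system would likely weight error kinds differently and decay old evidence over time; the flat per-submission average here only shows the general shape of the idea.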

The study spanned two academic terms (Fall 2023 and Spring 2024) and involved more than 120 students per term. Participants were randomly assigned to an experimental group (full use of the blended model, CTutor, and ITest) or a control group (traditional lecture‑only instruction). Both groups took identical pre‑ and post‑tests comprising objective and subjective items to measure knowledge gain. At the end of each term, a Likert‑scale survey captured students’ attitudes toward the LMS, the intelligent tools, perceived usefulness of feedback, and overall satisfaction.

Quantitative results show that the experimental group achieved a statistically significant improvement over the control group, with an average post‑test increase of 12.4 points on a 100‑point scale (p < 0.01). Moreover, a positive correlation was observed between the frequency of CTutor usage and reductions in error‑correction time, as well as higher code correctness rates. Survey data reveal high acceptance: 85 % of students rated CTutor’s instant feedback as “very helpful,” and 78 % considered ITest’s personalized quizzes to be a strong motivator. Usability scores for the LMS exceeded 4 out of 5, indicating smooth integration.
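For readers unfamiliar with the two kinds of statistics reported above, the sketch below shows how a between-group comparison and a usage correlation could be computed. The summary does not state which tests the authors used, so Welch's t statistic and Pearson's r are assumptions, and every number in the sample lists is invented purely for illustration; none of it is data from the study.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic for two independent samples with unequal variances."""
    standard_error = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / standard_error


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    covariance = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    spread_x = math.sqrt(sum((xi - mx) ** 2 for xi in x))
    spread_y = math.sqrt(sum((yi - my) ** 2 for yi in y))
    return covariance / (spread_x * spread_y)


# Invented score gains (post-test minus pre-test); NOT the study's data.
experimental_gain = [18, 22, 15, 25, 20, 19]
control_gain = [8, 10, 6, 12, 9, 7]

# Invented tool-usage counts and code-correctness rates; NOT the study's data.
usage_sessions = [2, 5, 8, 11, 14]
correctness_rate = [0.55, 0.60, 0.72, 0.78, 0.90]

print("mean gain difference:", mean(experimental_gain) - mean(control_gain))
print("Welch t statistic:", welch_t(experimental_gain, control_gain))
print("Pearson r:", pearson_r(usage_sessions, correctness_rate))
```

Turning the t statistic into a p-value requires the t distribution (for example, from a statistics library), which is omitted here to keep the sketch dependency-free.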

The authors discuss these findings as evidence of a synergistic effect: face‑to‑face instruction delivers foundational concepts, while the intelligent online components provide immediate, individualized scaffolding that reinforces learning and corrects misconceptions in real time. Limitations include the relatively short study horizon (two terms), potential confounding due to heterogeneous prior programming experience, and the rule‑based nature of CTutor’s feedback, which may not adequately support complex algorithmic design tasks.

Future work is proposed in three directions. First, incorporating machine‑learning models for error prediction and feedback generation could enhance the granularity and adaptability of the tutoring system. Second, extending the architecture to support additional programming languages (Python, Java) and other STEM courses would test the generalizability of the approach. Third, longitudinal studies tracking retention, transfer to industry coding tasks, and development of metacognitive skills would provide deeper insight into the lasting impact of intelligent blended learning environments.

In conclusion, the research demonstrates that embedding intelligent learning environments within a blended‑learning framework can substantially improve both learning outcomes and student satisfaction in a C programming course. The results suggest that such a model is a viable blueprint for other disciplines seeking to combine the strengths of traditional instruction with adaptive, data‑driven online support.