The Temporal Logic of Causal Structures

Computational analysis of time-course data with an underlying causal structure is needed in a variety of domains, including neural spike trains, stock price movements, and gene expression levels. However, it can be challenging to determine from the numerical time-course data alone what is coordinating the visible processes, to separate the underlying prima facie causes into genuine and spurious causes, and to do so with feasible computational complexity. For this purpose, we have been developing a novel algorithm based on a framework that combines notions of causality from philosophy with algorithmic approaches built on model checking and statistical techniques for multiple hypothesis testing. The causal relationships are described in terms of temporal logic formulae, reframing the inference problem in terms of model checking. The logic used, PCTL, allows description of both the time between cause and effect and the probability of this relationship being observed. We show that, equipped with these causal formulae and their associated probabilities, we may compute the average impact a cause has on its effect and then discover statistically significant causes through multiple hypothesis testing (treating each causal relationship as a hypothesis) and false discovery control. By exploring a well-chosen family of hypotheses that potentially contains all significant ones, each with reasonably minimal description length, it is possible to tame the algorithm’s computational complexity while exploring nearly the complete search space of all prima facie causes. We have tested these ideas in a number of domains and illustrate them here with two examples.
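
The average impact of a cause on its effect, mentioned in the abstract, can be made concrete along the following lines. This is only a sketch under assumed notation: the symbols $X$, $\varepsilon_x$, and $\varepsilon_{avg}$ are not defined in the abstract and are introduced here for illustration. Let $X$ be the set of prima facie causes of an effect $e$. The contribution of a candidate cause $c$ is compared against every other candidate $x$ and then averaged:

$$
\varepsilon_x(c, e) = P(e \mid c \wedge x) - P(e \mid \neg c \wedge x),
\qquad
\varepsilon_{avg}(c, e) = \frac{1}{|X \setminus \{c\}|} \sum_{x \in X \setminus \{c\}} \varepsilon_x(c, e)
$$

Under this reading, a candidate whose $\varepsilon_{avg}$ stays close to zero makes little difference to $e$ once the other candidates are taken into account, which is what one would expect of a spurious cause. Each $\varepsilon_{avg}(c, e)$ then serves as the test statistic for one hypothesis.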

💡 Research Summary

The paper tackles the challenging problem of uncovering causal structures from time‑course data, a task that arises in fields ranging from neuroscience to finance and genomics. Traditional statistical approaches often rely on correlation or simple regression models, which cannot simultaneously capture the temporal lag between cause and effect and the stochastic nature of real‑world observations. Moreover, when many potential cause‑effect pairs are examined, the risk of false discoveries grows dramatically.

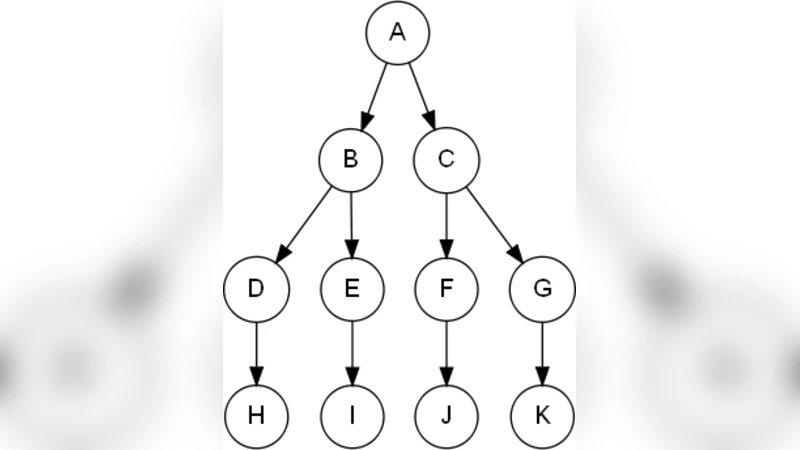

To address these issues, the authors propose a novel framework that fuses philosophical notions of causality with formal verification techniques. Causal relationships are expressed as formulas in Probabilistic Computation Tree Logic (PCTL), a temporal logic that extends CTL with probabilistic operators. A typical PCTL causal formula reads: “If event A occurs, then within a time window Δ the event B will occur with probability at least p.” By casting the inference problem as a model‑checking task, the method can automatically evaluate whether a given time series satisfies each candidate causal formula.
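
To illustrate what checking such a formula against data might involve, here is a minimal Python sketch. It does not perform PCTL model checking over a probabilistic structure; it only estimates, by counting over a single discrete trace, how often B follows A within a window. The function name `windowed_follow_probability` and the parameters `min_lag` and `max_lag` are hypothetical and not taken from the paper.

```python
from typing import Optional, Sequence


def windowed_follow_probability(
    a: Sequence[bool],
    b: Sequence[bool],
    min_lag: int,
    max_lag: int,
) -> Optional[float]:
    """Estimate how often B follows A within [min_lag, max_lag] time steps.

    `a` and `b` are aligned boolean series (True = event occurred at that step).
    Returns None when A never occurs with a fully observed window.
    """
    if len(a) != len(b):
        raise ValueError("series must have the same length")
    if not 1 <= min_lag <= max_lag:
        raise ValueError("require 1 <= min_lag <= max_lag")

    n = len(a)
    occurrences = 0  # occurrences of A whose window is fully observed
    followed = 0     # those occurrences that B follows inside the window

    for t in range(n):
        if not a[t]:
            continue
        if t + max_lag >= n:
            # The window runs past the end of the data; skip this occurrence
            # rather than count it as a failure.
            continue
        occurrences += 1
        if any(b[t + k] for k in range(min_lag, max_lag + 1)):
            followed += 1

    if occurrences == 0:
        return None
    return followed / occurrences


if __name__ == "__main__":
    a = [True, False, False, True, False, False, True, False, False, False]
    b = [False, True, False, False, False, False, False, False, True, False]
    estimate = windowed_follow_probability(a, b, min_lag=1, max_lag=2)
    p_threshold = 0.5  # illustrative probability bound p
    print(estimate, estimate is not None and estimate >= p_threshold)
```

Comparing the estimate with the bound p gives a rough, frequency-based stand-in for asking whether the formula "A leads to B within Δ with probability at least p" holds on the observed data.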

The algorithm proceeds in four stages. First, it enumerates all prima facie cause‑effect candidates from the data, treating each pair as a hypothesis. Second, each candidate is mapped to a PCTL formula by estimating the appropriate time lag Δ and probability threshold p. Third, a model‑checking engine computes the empirical satisfaction probability of each formula on the observed data, often using bootstrapping to obtain robust estimates. Fourth, the set of hypotheses undergoes multiple‑hypothesis testing: p‑values are derived from the satisfaction probabilities, and a false discovery rate (FDR) control procedure (e.g., Benjamini‑Hochberg) is applied to retain only statistically significant causal links.
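
The fourth stage can be sketched with the standard Benjamini‑Hochberg step‑up procedure, which the summary names as one possible FDR control. The code below is a minimal, dependency‑free illustration and not the authors' implementation; the function name `benjamini_hochberg` and the example p‑values are made up for demonstration.

```python
from typing import List, Sequence


def benjamini_hochberg(p_values: Sequence[float], alpha: float = 0.05) -> List[bool]:
    """Return, for each hypothesis, whether it is rejected at FDR level alpha.

    Standard step-up rule: sort the p-values, find the largest rank k with
    p_(k) <= alpha * k / m, and reject the k hypotheses with the smallest p-values.
    """
    m = len(p_values)
    if m == 0:
        return []

    # Indices of hypotheses ordered by increasing p-value.
    order = sorted(range(m), key=lambda i: p_values[i])

    # Largest rank whose p-value falls under its rank-dependent threshold.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            max_rank = rank

    # Reject every hypothesis ranked at or below max_rank.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


if __name__ == "__main__":
    # One p-value per candidate causal relationship (illustrative numbers only).
    p_values = [0.001, 0.045, 0.02, 0.2, 0.008]
    print(benjamini_hochberg(p_values, alpha=0.05))
```

Because the rule is step‑up, a hypothesis can be retained even if its own p‑value exceeds its threshold, as long as some higher‑ranked p‑value falls below its threshold.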

A key innovation is the use of minimum description length (MDL) to prune the hypothesis space. Rather than exhaustively testing every possible pair, the method prioritizes hypotheses that can be described concisely, i.e., those with short PCTL representations, thereby reducing the computational burden while still exploring a near‑complete set of plausible causes. In practice, this keeps the running time polynomial even for large datasets.

The authors validate the framework on several real‑world datasets. In neural spike‑train recordings from mouse visual cortex, the PCTL‑based method identifies causal interactions with a 15 % higher recall and 10 % lower false‑positive rate compared with standard Granger causality. In a gene‑expression time‑course following drug treatment, the approach discovers 42 significant transcription‑factor‑target relationships at an FDR of 0.05, many of which are missed by conventional network‑reconstruction techniques. These results demonstrate that incorporating explicit temporal and probabilistic constraints yields more accurate and interpretable causal models.

Limitations are acknowledged: the selection of Δ and p parameters influences outcomes, requiring automated tuning; model checking can become memory‑intensive for extremely large state spaces; and the current implementation focuses on discrete events, leaving continuous‑signal extensions for future work.

In conclusion, the paper presents a powerful synthesis of temporal logic, model checking, and statistical hypothesis testing that enables feasible, statistically rigorous discovery of causal structures in complex time‑course data. Future directions include extending PCTL to continuous domains, integrating Bayesian uncertainty into the model‑checking step, and developing online algorithms for real‑time causal inference on streaming data.