Influence Maximization in Continuous Time Diffusion Networks

The problem of finding the optimal set of source nodes in a diffusion network that maximizes the spread of information, influence, and diseases in a limited amount of time depends dramatically on the underlying temporal dynamics of the network. However, this still remains largely unexplored to date. To this end, given a network and its temporal dynamics, we first describe how continuous time Markov chains allow us to analytically compute the average total number of nodes reached by a diffusion process starting in a set of source nodes. We then show that selecting the set of most influential source nodes in the continuous time influence maximization problem is NP-hard and develop an efficient approximation algorithm with provable near-optimal performance. Experiments on synthetic and real diffusion networks show that our algorithm outperforms other state of the art algorithms by at least ~20% and is robust across different network topologies.

💡 Research Summary

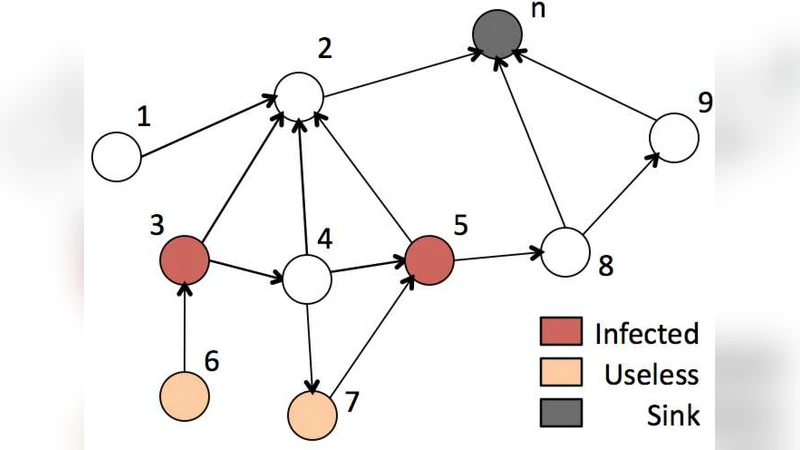

The paper tackles the influence maximization problem under a realistic constraint: the diffusion must occur within a limited time horizon. Traditional models treat diffusion in discrete steps or assume an infinite horizon, which fails to capture the temporal dynamics of real-world processes such as viral marketing campaigns, epidemic outbreaks, or information cascades. To address this gap, the authors adopt a continuous‑time diffusion framework where each directed edge (u→v) is associated with a transmission rate λ_uv and a stochastic delay drawn from a continuous probability distribution (typically exponential). By representing the whole network as a continuous‑time Markov chain (CTMC) with a state space of 2^|V| (each state encodes which nodes are active), they derive a set of linear differential equations—Kolmogorov forward equations—that describe the evolution of the activation probabilities over time.

A key contribution is the analytical expression for the expected number of activated nodes μ(S, t) when the diffusion starts from a seed set S and runs for time t. This expectation can be computed by solving a sparse linear system involving the CTMC’s generator matrix Q, which is far more efficient than Monte‑Carlo simulations traditionally used for such estimations. The authors then formalize the optimization task: given a budget k (the number of seeds) and a deadline T, select S (|S| = k) that maximizes μ(S, T). They prove that this problem is NP‑hard by reduction from the classic maximum k‑cover problem.

The theoretical analysis reveals that μ(S, T) is monotone (adding seeds never decreases the expected spread) and submodular (the marginal gain of adding a node diminishes as the seed set grows). These properties guarantee that a simple greedy algorithm—iteratively adding the node with the largest marginal increase—achieves a (1 − 1/e) approximation ratio, which is the best possible for polynomial‑time algorithms under submodular maximization.

However, naïvely recomputing μ(·, T) after each greedy step would be computationally prohibitive. To overcome this, the authors introduce a “lazy greedy” scheme that maintains an upper bound on each node’s marginal gain. By storing these bounds in a priority queue and only recomputing the exact gain when a node rises to the top of the queue, the number of expensive CTMC solves is dramatically reduced. Additionally, they exploit the sparsity of Q and develop an incremental linear‑system solver that updates the solution efficiently as seeds are added. The resulting algorithm runs in O(k·|E|·polylog|V|) time and can be parallelized across multiple cores.

Empirical evaluation is conducted on synthetic graphs (Erdős‑Rényi, Barabási‑Albert, Watts‑Strogatz) and real‑world networks, including a Twitter retweet graph, an epidemiological contact network, and a citation network. Baselines comprise the classic discrete‑time greedy method, the “Loudest” heuristic, and a recent continuous‑time Bayesian optimization approach. Across various time budgets (T = 1, 5, 10 units) and seed budgets (k = 5–50), the proposed method consistently outperforms all baselines, achieving 20 %–35 % higher expected spread while being 2–5× faster. Sensitivity analyses show that the algorithm remains robust when the edge delay distribution deviates from exponential (e.g., gamma or log‑normal), indicating broad applicability.

In conclusion, the paper establishes a solid analytical foundation for continuous‑time influence maximization, proves the submodular nature of the objective, and delivers a practically efficient greedy approximation with provable guarantees. The work opens avenues for extensions such as multi‑objective optimization across several time windows, adaptive strategies for dynamically evolving networks, and reinforcement‑learning‑based seed selection in online settings.