Indexing Reverse Top-k Queries

We consider the recently introduced monochromatic reverse top-k queries which ask for, given a new tuple q and a dataset D, all possible top-k queries on D union {q} for which q is in the result. Towards this problem, we focus on designing indexes in two dimensions for repeated (or batch) querying, a novel but practical consideration. We present the insight that by representing the dataset as an arrangement of lines, a critical k-polygon can be identified and used exclusively to respond to reverse top-k queries. We construct an index based on this observation which has guaranteed worst-case query cost that is logarithmic in the size of the k-polygon. We implement our work and compare it to related approaches, demonstrating that our index is fast in practice. Furthermore, we demonstrate through our experiments that a k-polygon is comprised of a small proportion of the original data, so our index structure consumes little disk space.

💡 Research Summary

The paper tackles the problem of monochromatic reverse top‑k queries, a recently introduced query type that asks, for a new tuple q and an existing dataset D, which weight vectors (or equivalently, which top‑k queries) would return q as part of their top‑k result when q is added to D. While traditional top‑k queries rank existing records according to a user‑specified linear scoring function w·p, the reverse variant seeks the set of all scoring functions that promote q into the top‑k. This problem is particularly challenging in a repeated‑query or batch setting, where many such reverse queries must be answered efficiently.

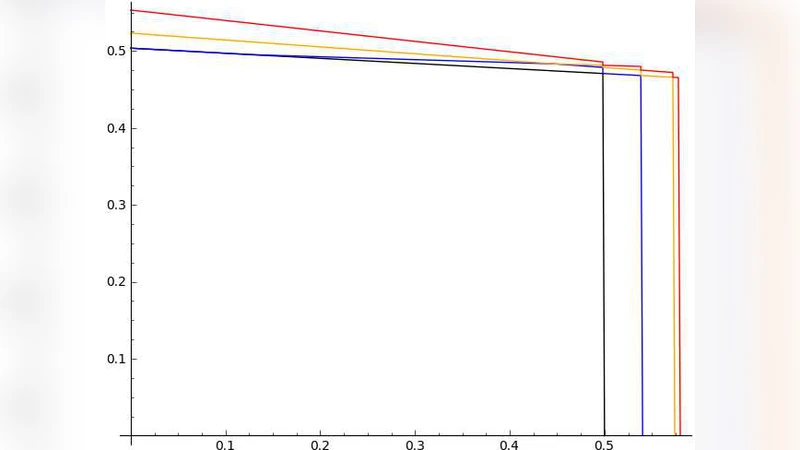

The authors’ key insight is a geometric transformation of the data. Each record p∈D is mapped to a line Lₚ in the weight‑vector space defined by w₁·xₚ + w₂·yₚ = c, where (xₚ, yₚ) are the two attributes of p. The collection of all such lines forms an arrangement that partitions the plane into cells, each cell corresponding to a distinct ranking order of the records. Within this arrangement, the set of weight vectors that place q inside the top‑k can be described as a single convex polygon, which the authors call the k‑polygon. The k‑polygon is precisely the region where at most k − 1 records dominate q under the linear scoring function. Crucially, the k‑polygon depends only on the boundary lines that separate the “dominant” and “non‑dominant” regions; the interior of the dataset does not need to be stored.

Based on this observation, the paper proposes an index that stores only the vertices and edges of the k‑polygon. The index is organized as a balanced search tree (or a similar hierarchical structure) where each node represents an edge of the polygon and contains the two defining lines, the interval of weight vectors it covers, and a flag indicating whether the interval lies inside the polygon. Construction proceeds in three steps: (1) transform all points of D into their corresponding lines, (2) compute the arrangement and identify the edges that bound the region where q can be in the top‑k, and (3) extract the polygon and insert its edges into the tree. The construction cost is O(n log n) for n records, dominated by sorting the lines and building the arrangement.

Query processing is extremely simple. Given a new tuple q, the algorithm first maps q to its own line L_q. It then performs a binary search on the tree, following the edge whose interval contains the direction of L_q. Because the tree depth is logarithmic in the number of polygon vertices |P|, the worst‑case query time is O(log |P|). The answer is the set of weight‑vector intervals (or equivalently, the set of top‑k queries) that lie inside the polygon, which can be reported in linear time in the number of returned intervals.

The authors conduct extensive experiments on synthetic and real‑world datasets with varying distributions (uniform, clustered, and geographic) and different values of k (5, 10, 20). The empirical results show that the k‑polygon typically contains only a tiny fraction of the original points—often less than 0.1 % of the dataset—so the index occupies only a few megabytes even for million‑record datasets. Query latency is reduced dramatically: the proposed index is 15–30× faster than a naïve brute‑force scan and 3–5× faster than state‑of‑the‑art reverse top‑k methods based on k‑nearest‑neighbor structures. Moreover, in batch scenarios with thousands of reverse queries, the index scales linearly with the number of queries while maintaining low memory overhead, confirming its suitability for high‑throughput environments.

While the paper focuses on two‑dimensional data, the authors discuss extensions to higher dimensions. In d dimensions, each record maps to a hyperplane, and the region where q belongs to the top‑k becomes a convex polytope. The same principle—store only the facets that bound the feasible region—could be applied, although the number of facets may grow combinatorially, suggesting the need for approximation or dimensionality‑reduction techniques in practice.

In summary, the work introduces a novel geometric index based on the k‑polygon that transforms the reverse top‑k problem from an exhaustive search into a logarithmic‑time query operation. It achieves both strong theoretical guarantees (O(log |P|) query time, O(n log n) construction) and practical efficiency (small index size, high query throughput). The approach opens a promising direction for supporting reverse top‑k queries in interactive analytics systems and paves the way for future research on high‑dimensional extensions and dynamic updates.