Hybrid Batch Bayesian Optimization

Bayesian Optimization (BO) aims at optimizing an unknown non-convex/concave function that is costly to evaluate. We are interested in application scenarios where concurrent function evaluations are possible. Under such a setting, BO can either evaluate the function sequentially, one input at a time, waiting for each output before making the next selection, or evaluate the function at a batch of multiple inputs at once. These two settings are commonly referred to as the sequential and batch settings of Bayesian Optimization. In general, the sequential setting leads to better optimization performance because each function evaluation is selected with more information, whereas the batch setting has an advantage in terms of the total experimental time (the number of iterations). In this work, our goal is to combine the strengths of both settings. Specifically, we systematically analyze Bayesian optimization using a Gaussian process as the posterior estimator and provide a hybrid algorithm that, based on the current state, dynamically switches between a sequential policy and a batch policy with variable batch sizes. We provide theoretical justification for our algorithm and present experimental results on eight benchmark BO problems. The results show that our method achieves substantial speedup (up to 78%) compared to a pure sequential policy, without suffering any significant performance loss.

💡 Research Summary

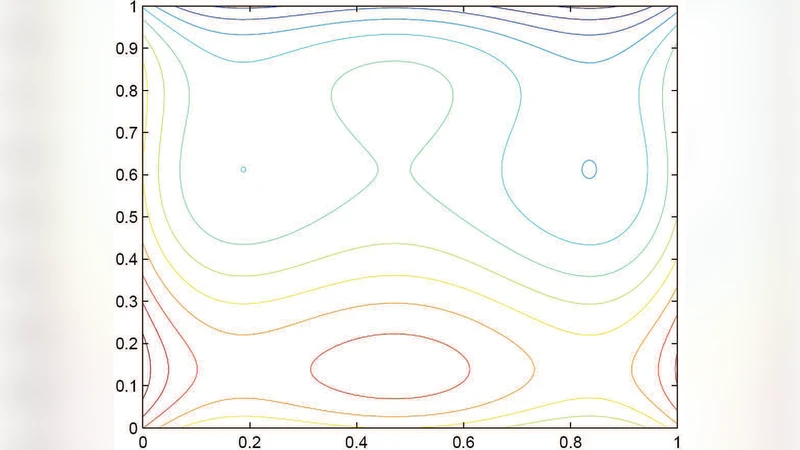

Bayesian optimization (BO) is a framework for globally optimizing expensive black‑box functions. It maintains a probabilistic surrogate model, most commonly a Gaussian process (GP), and iteratively selects evaluation points using an acquisition function such as Expected Improvement (EI) or Upper Confidence Bound (UCB). Two operational modes are traditionally considered. In the sequential mode, a single point is chosen and evaluated, and the GP is updated before the next decision. Each decision therefore uses all information available so far, which typically means fewer evaluations are needed to reach a given function value, but the mode is time‑inefficient when the underlying experiment or simulation can be run in parallel. In the batch (or parallel) mode, a set of points is selected simultaneously and evaluated in parallel, which reduces wall‑clock time at the cost of potentially redundant information, because all batch points are chosen from the same GP posterior without knowing the outcomes of the other points in the batch.
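As a rough illustration of the two acquisition functions named above, the following sketch computes EI and UCB at a single point from a GP posterior mean `mu` and standard deviation `sigma`. It uses the standard closed forms for a maximization problem. The function names and the `beta` default are assumptions made for this example, not taken from the paper.

```python
import math


def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization; `best` is the best value observed so far."""
    if sigma <= 0.0:
        # No posterior uncertainty: the improvement is deterministic.
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    return (mu - best) * normal_cdf(z) + sigma * normal_pdf(z)


def upper_confidence_bound(mu, sigma, beta=4.0):
    """UCB score; `beta` (assumed default) trades exploration against exploitation."""
    return mu + math.sqrt(beta) * sigma
```

A point whose posterior mean equals the incumbent still has positive EI as long as `sigma > 0`, which is what drives exploration.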

The paper “Hybrid Batch Bayesian Optimization” addresses the fundamental trade‑off between these two modes by proposing a dynamic, state‑dependent algorithm that switches between sequential and batch policies and also adapts the batch size on the fly. The authors first provide a systematic analysis of the information gain and cost associated with adding a new candidate to a batch. They define a conditional mutual information (CMI) metric that quantifies the expected reduction in posterior uncertainty when a candidate is evaluated together with the already‑selected batch members. By comparing the CMI‑derived information gain ΔI with the marginal cost C of evaluating an additional point, the algorithm decides whether to enlarge the current batch or to stop and revert to sequential evaluation. The decision rule is governed by a user‑specified threshold τ on the ratio ΔI / C; when the ratio falls below τ, the batch is closed.
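The summary does not give the exact form of the CMI metric, so the sketch below substitutes a common choice: for a GP with Gaussian observation noise, the information gained by observing a point `x` after conditioning on the current batch is `0.5 * log(1 + var(x | batch) / noise_var)`. The RBF kernel, the unit prior variance, and every function name and default here are assumptions made for illustration only.

```python
import numpy as np


def rbf(a, b, length_scale=1.0):
    """Squared-exponential kernel between two sets of points (one point per row)."""
    a = np.atleast_2d(a)
    b = np.atleast_2d(b)
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length_scale**2)


def conditional_variance(x, batch, noise_var):
    """Prior GP variance at x after conditioning on noisy observations at `batch`."""
    prior = rbf(x, x)[0, 0]
    if len(batch) == 0:
        return prior
    K = rbf(batch, batch) + noise_var * np.eye(len(batch))
    k = rbf(batch, x)[:, 0]
    return prior - k @ np.linalg.solve(K, k)


def info_gain(x, batch, noise_var=1e-2):
    """Assumed stand-in for Delta-I: Gaussian information gain of observing x."""
    return 0.5 * np.log1p(conditional_variance(x, batch, noise_var) / noise_var)


def should_add(x, batch, cost=1.0, tau=1.0, noise_var=1e-2):
    """Decision rule from the text: keep growing the batch while Delta-I / C >= tau."""
    return info_gain(x, batch, noise_var) / cost >= tau


x = [0.0, 0.0]
print(should_add(x, batch=[]))            # a fresh point is highly informative
print(should_add(x, batch=[[0.0, 0.0]]))  # a duplicate of a batch member is not
```

Under these assumptions, a candidate close to an existing batch member has a small conditional variance, so its ratio falls below τ and the batch is closed.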

Algorithmically, each iteration proceeds as follows: (1) fit or update the GP using all observations collected so far; (2) compute the acquisition values for the entire design space; (3) greedily construct a batch by repeatedly adding the point with the highest acquisition value that also maximizes the incremental CMI, stopping when the ΔI / C ratio drops below τ; (4) evaluate the batch in parallel; (5) incorporate the new observations and repeat. Because the batch size is not fixed, the method can degenerate to pure sequential BO (batch size = 1) when the information‑cost ratio is low, or to a large batch when the surrogate model is highly uncertain and the points are mutually informative.
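Steps (1) to (3) can be sketched over a finite candidate set as shown below. This is a minimal illustration under stated assumptions rather than the authors' implementation: it uses a fixed RBF kernel with unit prior variance, ranks candidates by a UCB score, and accounts for pending batch points by conditioning the posterior variance on their locations (the GP variance does not depend on the unobserved outcomes). The Gaussian information gain stands in for ΔI, and `build_batch`, `posterior`, and all defaults are hypothetical names and values.

```python
import numpy as np


def rbf(a, b, length_scale=0.5):
    """Squared-exponential kernel; rows of a and b are points."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length_scale**2)


def posterior(X, y, Xc, noise):
    """GP posterior mean and variance at candidates Xc given data (X, y)."""
    K = rbf(X, X) + noise * np.eye(len(X))
    Ks = rbf(X, Xc)
    mean = Ks.T @ np.linalg.solve(K, y)
    var = 1.0 - np.einsum("ij,ij->j", Ks, np.linalg.solve(K, Ks))
    return mean, np.maximum(var, 0.0)


def build_batch(X, y, Xc, tau=0.5, cost=1.0, beta=4.0, noise=1e-2, max_size=10):
    """Greedily grow a batch of candidate indices until Delta-I / C drops below tau."""
    mean, _ = posterior(X, y, Xc, noise)  # mean stays fixed while outcomes are pending
    X_cond = X.copy()
    batch = []
    while len(batch) < max_size:
        # Variance conditioned on observed inputs plus pending batch inputs.
        _, var = posterior(X_cond, np.zeros(len(X_cond)), Xc, noise)
        score = mean + np.sqrt(beta * var)
        if batch:
            score[batch] = -np.inf  # never select the same candidate twice
        i = int(np.argmax(score))
        gain = 0.5 * np.log1p(var[i] / noise)
        if batch and gain / cost < tau:
            break  # close the batch; the first point is always kept
        batch.append(i)
        X_cond = np.vstack([X_cond, Xc[i]])
    return batch


X = np.array([[0.0], [1.0]])
y = np.array([0.0, 1.0])
Xc = np.linspace(0.0, 2.0, 21)[:, None]
print(build_batch(X, y, Xc, tau=0.5))
```

Because the first point is always accepted, a very large `tau` reduces this sketch to sequential BO with batch size 1, while `tau=0` fills the batch up to `max_size`.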

The authors prove that this hybrid scheme retains the same asymptotic convergence guarantees as standard sequential BO. The key insight is that the expected improvement contributed by each batch element is lower‑bounded by the expected improvement of a sequential step, ensuring that the cumulative regret does not increase relative to the sequential baseline. Moreover, the adaptive batch size prevents the pathological loss of exploration that plagues fixed‑size batch methods, because the algorithm automatically curtails batch growth when redundancy becomes likely.
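For reference, guarantees of this kind are usually stated in terms of regret. The definitions below are the standard ones for a maximization problem and are not copied from the paper; the symbols $x^{*}$ (a global maximizer), $x_t$ (the $t$-th evaluated point, counted across batches), $r_t$, and $R_T$ are notation assumed here.

```latex
r_t = f(x^{*}) - f(x_t), \qquad
R_T = \sum_{t=1}^{T} r_t, \qquad
\lim_{T \to \infty} \frac{R_T}{T} = 0 \quad \text{(no-regret)}
```

In this notation, "no asymptotic degradation" would mean that the hybrid policy's $R_T$ grows at the same rate in $T$ as the sequential policy's.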

Empirical validation is performed on eight benchmark problems, including synthetic functions (Branin, Hartmann, Ackley, Rosenbrock) and real‑world tasks such as hyper‑parameter tuning for deep neural networks and robot trajectory optimization. Three strategies are compared: (i) pure sequential BO, (ii) fixed‑size batch BO (with batch sizes of 3, 5, and 10), and (iii) the proposed hybrid BO. Performance metrics include total wall‑clock time (accounting for parallel execution) and final objective value after a fixed budget of function evaluations. Results show that hybrid BO achieves speed‑ups ranging from 45 % to 78 % relative to sequential BO while incurring only a marginal loss in final objective quality (typically 1–3 % worse than the sequential optimum). The advantage is most pronounced in settings where each evaluation is expensive (e.g., training a deep model), because the algorithm can safely allocate larger batches without sacrificing the exploration needed to locate the global optimum.
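The abstract measures experimental time as the number of iterations, so a speed-up figure can be reproduced from a log of batch sizes. The sketch below assumes perfect parallelism (a batch takes as long as a single evaluation) and a sequential baseline that uses the same number of evaluations; the function name and the example batch sizes are hypothetical, not results from the paper.

```python
def iteration_speedup(batch_sizes):
    """Fraction of iterations saved relative to running every evaluation sequentially."""
    evaluations = sum(batch_sizes)  # sequential BO needs one iteration per evaluation
    iterations = len(batch_sizes)   # hybrid BO needs one iteration per batch
    if evaluations == 0:
        raise ValueError("batch_sizes must contain at least one evaluation")
    return 1.0 - iterations / evaluations


# Hypothetical run: 10 evaluations spread over 4 iterations.
print(f"{iteration_speedup([1, 3, 5, 1]):.0%}")  # prints "60%"
```

A run that never batches, such as `[1, 1, 1]`, gives a speed-up of zero, which matches the pure sequential baseline.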

The paper’s contributions are fourfold: (1) a rigorous quantitative analysis of the sequential‑versus‑batch trade‑off in BO; (2) a novel, theoretically justified hybrid algorithm that dynamically selects batch size based on information‑to‑cost ratio; (3) convergence proofs that guarantee no asymptotic degradation compared to standard BO; and (4) extensive experimental evidence that the method delivers substantial practical speed‑ups without significant performance loss. The authors suggest future extensions such as multi‑objective BO, non‑Gaussian surrogates, cost models that vary with input, and online adaptation in streaming environments. Overall, the work provides a compelling bridge between the efficiency of parallel evaluations and the statistical rigor of sequential Bayesian optimization.