Sequential design of computer experiments for the estimation of a probability of failure

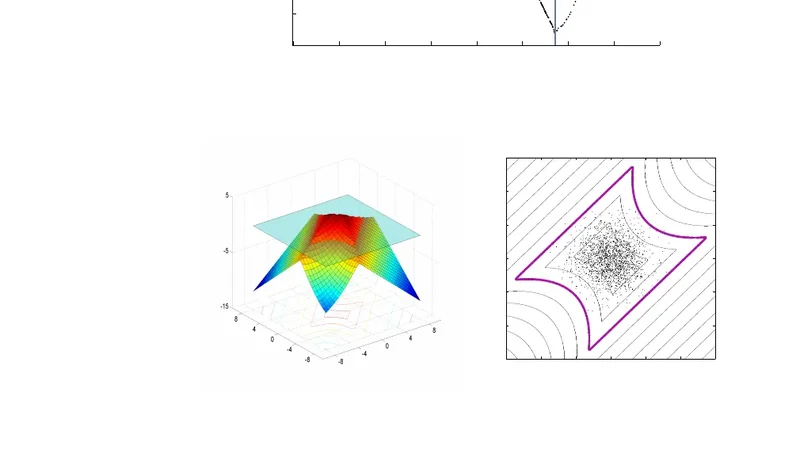

This paper deals with the problem of estimating the volume of the excursion set of a function $f:\mathbb{R}^d \to \mathbb{R}$ above a given threshold, under a probability measure on $\mathbb{R}^d$ that is assumed to be known. In the industrial world, this corresponds to the problem of estimating a probability of failure of a system. When only an expensive-to-simulate model of the system is available, the budget for simulations is usually severely limited and therefore classical Monte Carlo methods ought to be avoided. One of the main contributions of this article is to derive SUR (stepwise uncertainty reduction) strategies from a Bayesian-theoretic formulation of the problem of estimating a probability of failure. These sequential strategies use a Gaussian process model of $f$ and aim at performing evaluations of $f$ as efficiently as possible to infer the value of the probability of failure. We compare these strategies to other strategies also based on a Gaussian process model for estimating a probability of failure.

💡 Research Summary

The paper addresses the challenging problem of estimating the probability that a costly-to-evaluate computer model exceeds a prescribed failure threshold. Formally, given a deterministic function $f:\mathbb{R}^d\rightarrow\mathbb{R}$, a known input density $p(x)$, and a threshold $u$, the quantity of interest is the failure probability $\alpha=\int_{\{x:f(x)>u\}}p(x)\,dx$. Direct Monte Carlo estimation would require a large number of model evaluations, which is infeasible when each evaluation is expensive.
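To see why crude Monte Carlo is impractical here, recall that the estimator $\hat\alpha_N = N^{-1}\sum_{j=1}^N \mathbf{1}\{f(X_j)>u\}$ with $X_j \sim p$ has variance $\alpha(1-\alpha)/N$, so its relative standard error is $\sqrt{(1-\alpha)/(\alpha N)}$. The Python sketch below is illustrative only: the toy function `f(x) = x[0] + x[1]`, the standard normal input density, the threshold `u = 4.0`, and the helper names are hypothetical choices, not taken from the paper. In this toy case $f(X)\sim\mathcal{N}(0,2)$, so $\alpha = 1-\Phi(4/\sqrt{2})\approx 2.3\times10^{-3}$ is known exactly and serves as a reference.

```python
import math
import random
from statistics import NormalDist


def f(x):
    # Hypothetical toy "simulator"; in practice each call would be expensive.
    return x[0] + x[1]


def mc_failure_probability(f, u, n, d=2, seed=0):
    """Crude Monte Carlo estimate of alpha = P(f(X) > u) with X ~ N(0, I_d)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        x = [rng.gauss(0.0, 1.0) for _ in range(d)]
        if f(x) > u:
            hits += 1
    return hits / n


def samples_for_relative_error(alpha, rel_err):
    # The relative standard error is sqrt((1 - alpha) / (alpha * n)); solve for n.
    return math.ceil((1.0 - alpha) / (alpha * rel_err ** 2))


u = 4.0
# X1 + X2 ~ N(0, 2), so the exact failure probability is available for comparison.
alpha_true = 1.0 - NormalDist().cdf(u / math.sqrt(2.0))
print(f"true alpha ~ {alpha_true:.2e}")
print("evaluations for 10% relative error:", samples_for_relative_error(alpha_true, 0.10))
print("MC estimate with 1e5 evaluations:", mc_failure_probability(f, u, 100_000))
```

With $\alpha \approx 2.3\times10^{-3}$, a 10% relative error already requires more than $4\times10^4$ evaluations of $f$, which is out of reach when each run of the simulator is expensive.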

To overcome this limitation, the authors adopt a Bayesian framework. They place a Gaussian process (GP) prior on $f$, characterized by a mean function $m(\cdot)$ and a covariance kernel $k(\cdot,\cdot)$. After $n$ observations $D_n=\{(x_i,y_i)\}_{i=1}^n$ with $y_i=f(x_i)$, the GP posterior provides a predictive mean $m_n(x)$, a predictive variance $s_n^2(x)$, and a joint Gaussian distribution over any finite set of points. Under this posterior the failure probability $\alpha$ becomes a random variable whose expectation reduces to an integral with a closed-form integrand, $\mathbb{E}_n[\alpha]=\int \Phi\big((m_n(x)-u)/s_n(x)\big)\,p(x)\,dx$, where $\Phi$ denotes the standard normal cumulative distribution function.
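The posterior expectation of the failure probability follows from exchanging expectation and integral: writing $\xi$ for the GP, $\xi(x)\sim\mathcal{N}(m_n(x), s_n^2(x))$ under the posterior, so $P_n(\xi(x)>u)=\Phi\big((m_n(x)-u)/s_n(x)\big)$ and $\mathbb{E}_n[\alpha]=\int \Phi\big((m_n(x)-u)/s_n(x)\big)\,p(x)\,dx$. The remaining integral over the input space only needs the cheap GP predictor, not $f$. The pure-Python sketch below assumes a zero prior mean, a squared-exponential kernel with fixed, hypothetical hyperparameters (`sigma2=1.0`, `length=0.5`), noise-free observations, a one-dimensional standard normal input density, and a hypothetical toy function `f`; hyperparameter estimation and the paper's sequential sampling criteria are not shown.

```python
import math
import random
from statistics import NormalDist


def sq_exp_kernel(a, b, sigma2=1.0, length=0.5):
    # Hypothetical squared-exponential covariance with fixed hyperparameters.
    return sigma2 * math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(A):
    """Return lower-triangular L with A = L L^T (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def solve_lower(L, b):
    # Forward substitution: solve L z = b.
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z


def solve_upper_t(L, z):
    # Back substitution: solve L^T w = z.
    n = len(z)
    w = [0.0] * n
    for i in reversed(range(n)):
        w[i] = (z[i] - sum(L[k][i] * w[k] for k in range(i + 1, n))) / L[i][i]
    return w


class GPPosterior:
    """Zero-mean GP conditioned on noise-free observations (simple kriging)."""

    def __init__(self, xs, ys, jitter=1e-8):
        self.xs = list(xs)
        K = [[sq_exp_kernel(a, b) + (jitter if i == j else 0.0)
              for j, b in enumerate(self.xs)] for i, a in enumerate(self.xs)]
        self.L = cholesky(K)
        # weights = K^{-1} y, reused for every posterior mean evaluation.
        self.weights = solve_upper_t(self.L, solve_lower(self.L, list(ys)))

    def predict(self, x):
        """Return the posterior mean m_n(x) and variance s_n^2(x)."""
        k = [sq_exp_kernel(x, xi) for xi in self.xs]
        mean = sum(ki * wi for ki, wi in zip(k, self.weights))
        v = solve_lower(self.L, k)  # v = L^{-1} k, so k^T K^{-1} k = |v|^2
        var = max(sq_exp_kernel(x, x) - sum(vi * vi for vi in v), 0.0)
        return mean, var


def expected_failure_probability(gp, u, n_mc=20_000, seed=1):
    """Monte Carlo approximation of E_n[alpha] over inputs x ~ N(0, 1)."""
    rng = random.Random(seed)
    cdf = NormalDist().cdf
    total = 0.0
    for _ in range(n_mc):
        x = rng.gauss(0.0, 1.0)
        m, s2 = gp.predict(x)
        s = math.sqrt(s2)
        # Posterior probability that f(x) > u (degenerate case when s_n(x) = 0).
        total += cdf((m - u) / s) if s > 1e-12 else float(m > u)
    return total / n_mc


def f(x):
    # Hypothetical toy simulator; each call stands for an expensive run.
    return x + 0.5 * math.sin(3.0 * x)


xs = [-2.0, -1.0, 0.0, 1.0, 1.5, 2.0, 2.5]
gp = GPPosterior(xs, [f(x) for x in xs])
print(expected_failure_probability(gp, u=2.0))
```

The hand-written Cholesky factorization only keeps the sketch dependency-free; a numerical library would normally handle the linear algebra, and the kernel hyperparameters would be estimated from the data rather than fixed.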
