Automatic Sampling of Geographic objects

Today, one’s disposes of large datasets composed of thousands of geographic objects. However, for many processes, which require the appraisal of an expert or much computational time, only a small part of these objects can be taken into account. In this context, robust sampling methods become necessary. In this paper, we propose a sampling method based on clustering techniques. Our method consists in dividing the objects in clusters, then in selecting in each cluster, the most representative objects. A case-study in the context of a process dedicated to knowledge revision for geographic data generalisation is presented. This case-study shows that our method allows to select relevant samples of objects.

💡 Research Summary

The paper addresses the growing challenge of handling massive geographic object datasets, which often contain thousands to tens of thousands of individual features. In many GIS workflows—such as knowledge revision for map generalisation, quality assessment, or rule validation—only a small subset of objects can be examined by experts or processed with computationally intensive algorithms. Traditional random or stratified sampling techniques, however, fail to capture the complex spatial and attribute heterogeneity inherent in geographic data. To overcome this limitation, the authors propose an automatic sampling framework built on clustering techniques.

The methodology proceeds in two main stages. First, each geographic object is transformed into a multidimensional feature vector that encodes spatial coordinates, geometric descriptors (area, perimeter, shape indices), and thematic attributes (land‑use class, elevation, etc.). After normalisation, the authors evaluate several clustering algorithms—k‑means, hierarchical agglomerative clustering, and density‑based DBSCAN—on a representative benchmark dataset. They ultimately adopt DBSCAN because it automatically discovers clusters of arbitrary shape and can handle noise points, which are common in GIS data. The algorithm’s parameters (ε and MinPts) are tuned empirically, and a post‑processing step merges overly fragmented clusters to achieve a manageable number of groups.

In the second stage, a representative object is selected from each cluster. The paper discusses three possible criteria: (1) the object closest to the cluster centroid, (2) the object that minimises the average intra‑cluster distance, and (3) the object that best reflects intra‑cluster variability (e.g., highest standard deviation on a key attribute). The choice of criterion can be adapted to the specific goals of the downstream task.

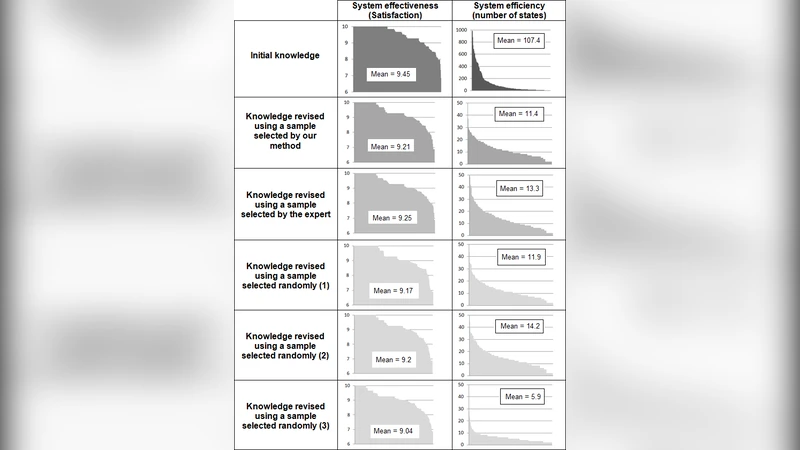

The authors validate the approach through a case study focused on knowledge revision for geographic data generalisation. Generalisation rules are typically crafted by domain experts and must be tested on real objects to ensure they preserve essential cartographic properties while reducing detail. Applying the full dataset would be prohibitively time‑consuming. Using the proposed clustering‑based sampler, the authors extract roughly 10 % of the original objects—one representative per cluster. They then run the generalisation rules on both the full set and the sampled set. Two performance metrics are reported: (a) error rate, measured as the proportion of objects whose generalised geometry deviates beyond a predefined tolerance, and (b) expert review time. The sampled set yields an error rate within 2 % of the full‑set baseline, indicating that the sample faithfully mirrors the overall data distribution. More strikingly, expert review time drops by over 80 %, demonstrating a substantial efficiency gain.

The discussion highlights several important observations. Parameter selection for DBSCAN strongly influences cluster granularity; overly small ε values produce many tiny clusters, inflating the sample size, while too large values merge dissimilar objects, risking loss of representativeness. The choice of representative criterion also affects sample diversity: centroid‑based selection favours geometric centrality, whereas variability‑based selection captures extreme cases that may be critical for rule testing. The authors argue that the framework is readily extensible to other GIS applications, such as hazard zone identification, land‑use change detection, or sensor network placement, where balanced yet reduced datasets are valuable.

Limitations are acknowledged. The current implementation assumes a static dataset; handling streaming or incrementally updated geographic objects would require dynamic clustering or online learning techniques. Moreover, the method relies on manually tuned clustering parameters; future work could integrate automated hyper‑parameter optimisation or meta‑learning to adapt to diverse data domains. The authors also propose exploring multi‑scale clustering to capture hierarchical spatial structures and coupling the sampler with machine‑learning models that predict the most informative objects for a given task.

In conclusion, the paper presents a robust, clustering‑driven automatic sampling method that significantly reduces the workload for expert‑intensive GIS processes while preserving the statistical and spatial characteristics of the original dataset. Empirical results from a real‑world generalisation knowledge‑revision scenario confirm the method’s practicality, and the authors outline a clear roadmap for extending the approach to dynamic, multi‑scale, and learning‑augmented contexts.