Energy Efficient Geographical Load Balancing via Dynamic Deferral of Workload

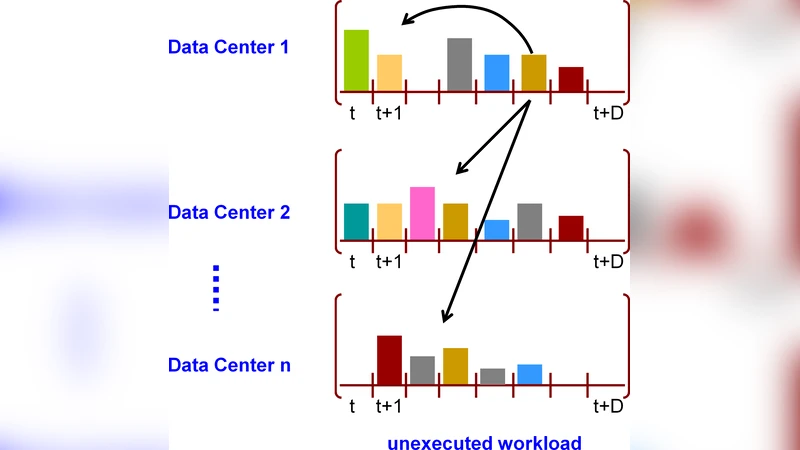

With the increasing popularity of cloud computing and mobile computing, individuals, enterprises, and research centers have started outsourcing their IT and computational needs to on-demand cloud services. Recently, geographical load balancing techniques have been suggested for data centers hosting cloud computation in order to reduce energy cost by exploiting electricity price differences across regions. However, these algorithms do not distinguish among the diverse responsiveness requirements of different workloads. In this paper, we use the flexibility from the Service Level Agreements (SLAs) to differentiate among workloads under bounded latency requirements and propose a novel approach to cost savings for geographical load balancing. We investigate how much workload should be executed in each data center and how much should be delayed and migrated to other data centers to save energy while meeting deadlines. We present an offline formulation of the geographical load balancing problem with dynamic deferral and give online algorithms that determine the assignment of workload to the data centers and the migration of workload between data centers in order to adapt to dynamic electricity price changes. We compare our algorithms with the greedy approach and show that significant cost savings can be achieved by migration of workload and dynamic deferral with future electricity price prediction. We validate our algorithms on MapReduce traces and show that geographic load balancing with dynamic deferral can provide 20-30% cost savings.

💡 Research Summary

The paper addresses the problem of reducing energy costs for geographically distributed cloud data centers by exploiting both spatial electricity price differences and the flexibility granted by Service Level Agreements (SLAs) in the form of bounded latency requirements. Traditional geographical load‑balancing (GLB) approaches focus solely on moving workloads to locations with cheaper electricity, ignoring the diverse responsiveness needs of different applications. This work introduces a novel framework that distinguishes between latency‑sensitive tasks that must be processed immediately and latency‑tolerant tasks that can be deferred within a deadline specified by the SLA.

The authors first formulate an offline optimization problem as a linear program. The decision variables are the amount of work assigned to each data center at each time slot and the amount of work deferred to future slots. The objective is to minimize the total electricity cost summed over all data centers and time periods, subject to constraints that (i) all incoming work must be completed by its deadline, (ii) each data center’s processing capacity is respected, and (iii) deferred work can be carried forward but cannot exceed its maximum allowed latency. When future electricity prices are known, this LP yields a globally optimal schedule that simultaneously decides where to execute work and how much to postpone.
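One way to write down a linear program of this shape is sketched here. The notation is an assumption made for illustration and is not taken from the paper: $x_{i,t}$ is the work executed at data center $i \in I$ in slot $t$, $d_t$ is the backlog of deferred work at the end of slot $t$, $\lambda_t$ is the work arriving in slot $t$, $p_{i,t}$ is the electricity cost per unit of work, $C_i$ is the capacity of data center $i$, and $\Delta$ is a single deadline (in slots) shared by all deferrable work. Migration cost is left out for brevity.

```latex
\begin{aligned}
\min_{x,\,d}\quad & \sum_{t=1}^{T}\sum_{i \in I} p_{i,t}\, x_{i,t} \\
\text{s.t.}\quad
& d_t = d_{t-1} + \lambda_t - \sum_{i \in I} x_{i,t}, \quad d_0 = 0,
  && t = 1,\dots,T \\
& 0 \le d_t \le \sum_{\tau=\max(1,\,t-\Delta+1)}^{t} \lambda_\tau,
  && t = 1,\dots,T \\
& 0 \le x_{i,t} \le C_i,
  && i \in I,\ t = 1,\dots,T \\
& d_T = 0
\end{aligned}
```

The upper bound on $d_t$ encodes the deadline: if work is served in arrival order, the backlog at the end of slot $t$ may contain only work that arrived during the last $\Delta$ slots. With $\Delta = 0$ the sum is empty, so $d_t = 0$ and all work runs in the slot in which it arrives.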

Because future prices are not known in practice, the paper proposes two online algorithms. The first, a greedy baseline, assigns incoming work in each slot to the currently cheapest data center, only deferring work when the deadline is imminent. The second, a predictive algorithm, incorporates short‑term electricity price forecasts (generated by ARIMA, LSTM, or similar models) to anticipate lower‑price periods. It dynamically decides whether to keep latency‑tolerant work in a local queue, defer it for a few minutes, or migrate it to a cheaper data center before the deadline. The algorithm also includes a “worst‑case guarantee” that forces any work approaching its deadline to be processed locally, thereby preventing SLA violations. Both algorithms run in O(|I|) time per slot, where |I| is the number of data centers, making them suitable for real‑time deployment.
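The two online policies can be illustrated with a minimal Python sketch. It is not the paper's algorithm: the function names, the `forecast` callback, and the decision rule (defer a batch while any forecast slot before its deadline is cheaper than the current cheapest price) are assumptions chosen for illustration, and data center capacity and migration cost are ignored.

```python
from collections import deque


def cheapest(prices_t):
    """Return (index, price) of the cheapest data center in one slot; O(|I|)."""
    i = min(range(len(prices_t)), key=prices_t.__getitem__)
    return i, prices_t[i]


def run_online(prices, arrivals, delta, forecast=None):
    """Simulate deferral with one shared deadline of `delta` slots.

    prices[t][i]   -- price per unit of work at data center i in slot t
    arrivals[t]    -- units of deferrable work arriving in slot t
    forecast(t, k) -- hypothetical callback: predicted cheapest price in
                      slot t + k; if None, nothing is deferred (greedy)
    Returns (total_cost, schedule) with schedule[t] = (dc_index, units_run).
    """
    horizon = len(prices)
    queue = deque()  # (deadline_slot, units), kept in arrival order
    total = 0.0
    schedule = []
    for t in range(horizon):
        if arrivals[t] > 0:
            # Deadlines are clipped to the horizon so all work finishes.
            queue.append((min(t + delta, horizon - 1), arrivals[t]))
        i, p_now = cheapest(prices[t])
        run = 0.0
        kept = deque()
        for deadline, units in queue:
            slack = deadline - t
            # Defer only if some forecast slot before the deadline is cheaper.
            # slack == 0 forces execution now, which guards the deadline.
            cheaper_later = forecast is not None and any(
                forecast(t, k) < p_now for k in range(1, slack + 1)
            )
            if cheaper_later:
                kept.append((deadline, units))
            else:
                run += units
        queue = kept
        total += run * p_now
        schedule.append((i, run))
    return total, schedule
```

Calling `run_online(prices, arrivals, delta)` without a forecast gives the greedy behaviour; passing `forecast=lambda t, k: min(prices[t + k])` emulates a perfect forecast, which is useful as an upper bound on what prediction can buy in this toy model.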

Theoretical analysis shows that the predictive algorithm’s competitive ratio degrades gracefully with the forecasting error ε: the achieved cost is at most (1 + O(ε)) times the offline optimum. Moreover, the potential cost savings grow with the allowed deferral window Δ; larger Δ values enable more aggressive shifting of work to low‑price intervals.

Experimental evaluation uses real electricity price traces from multiple U.S. regions (e.g., ERCOT, CAISO) and publicly available MapReduce workload traces. A 24-hour simulation with 1-minute granularity is performed, and price forecasts are generated using an LSTM trained on the previous day's data. Results demonstrate that the predictive algorithm reduces total electricity expenditure by 20–30% compared with the greedy baseline, with the highest savings observed when price volatility is large and the deferral window is at least 10 minutes. SLA compliance remains high in all scenarios, with 99.9% of jobs meeting their deadlines. Sensitivity analysis reveals that when the forecast mean absolute error stays below 5%, the algorithm achieves near-optimal savings; performance degrades as the error exceeds 15% but still outperforms the greedy approach. Network transfer costs, modeled at $0.01 per GB, contribute less than 2% of total cost, indicating that migration overhead is modest relative to electricity savings.
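The two headline metrics in this evaluation can be computed as in the short sketch below. It assumes that the reported forecast error is a mean absolute percentage error and that savings are measured relative to the greedy baseline's cost; both helper names are hypothetical and neither definition is confirmed by the summary.

```python
def mape(actual, predicted):
    """Mean absolute percentage error of a price forecast, in percent.

    Assumes no actual price is zero; real markets can show zero or
    negative prices, which would need separate handling.
    """
    if not actual or len(actual) != len(predicted):
        raise ValueError("series must be non-empty and of equal length")
    errors = (abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return 100.0 * sum(errors) / len(actual)


def savings_pct(baseline_cost, new_cost):
    """Cost reduction relative to a baseline, in percent."""
    return 100.0 * (baseline_cost - new_cost) / baseline_cost
```

For example, a schedule costing 75 against a baseline of 100 corresponds to `savings_pct(100, 75)`, a 25% saving, which falls inside the 20–30% range reported here.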

The paper’s contributions are threefold: (1) a formal SLA‑aware model that integrates latency bounds into GLB, (2) an offline optimal formulation and two practical online algorithms that exploit price predictions and dynamic deferral, and (3) a thorough empirical validation showing substantial cost reductions on realistic workloads. Limitations include reliance on accurate short‑term price forecasts and a simplified treatment of network latency and bandwidth constraints during migration. Future work is suggested to incorporate multi‑resource constraints (CPU, memory, storage), more detailed WAN models, and robust prediction techniques to further enhance applicability in production cloud environments.